This week you can listen to the talks by Jonathan Hurst (Professor of Robotics at Oregon State University, and Chief Technology Officer at Agility Robotics) and Andrea Thomaz (Associate Professor of Robotics at the University of Texas at Austin, and CEO of Diligent Robotics), part of this series that brings you the plenary and keynote talks from IEEE/RSJ IROS 2020 (International Conference on Intelligent Robots and Systems). Jonathan’s talk is on the topic of humanoids, while Andrea’s is about human-robot interaction.


Prof. Jonathan Hurst – Design Contact Dynamics in Advance

Bio: Jonathan W. Hurst is Chief Technology Officer and co-founder of Agility Robotics, and Professor and co-founder of the Oregon State University Robotics Institute. He holds a B.S. in mechanical engineering and an M.S. and Ph.D. in robotics, all from Carnegie Mellon University. His university research focuses on understanding the fundamental science and engineering best practices for robotic legged locomotion and physical interaction. Agility Robotics is bringing this new robotic mobility to market, solving problems for customers, working towards a day when robots can go where people go, generate greater productivity across the economy, and improve quality of life for all.


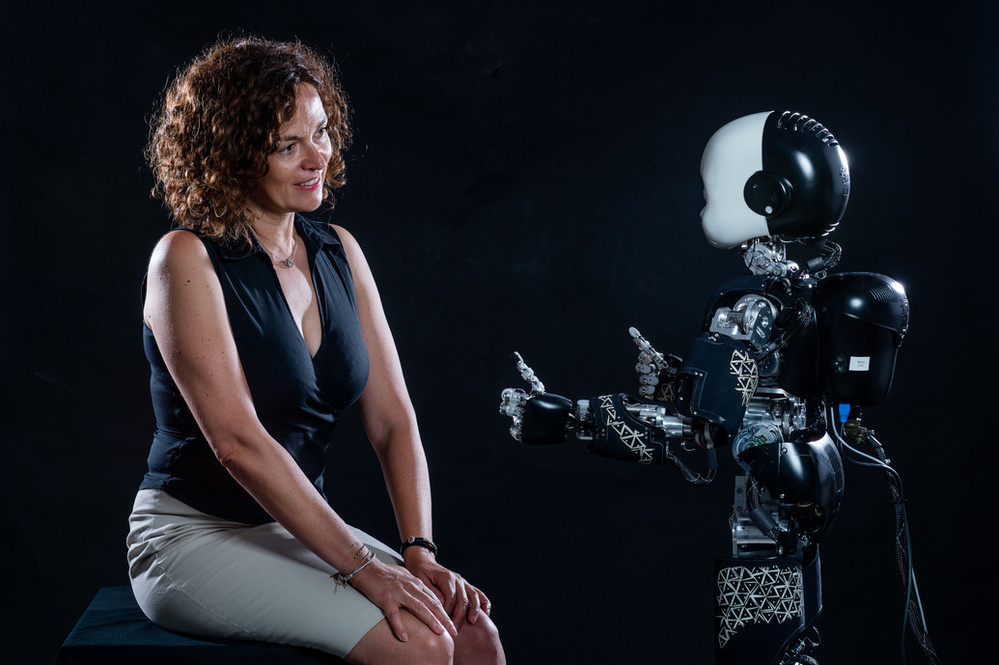

Prof. Andrea Thomaz – Human + Robot Teams: From Theory to Practice

Bio: Andrea Thomaz is the CEO and Co-Founder of Diligent Robotics and a renowned social robotics expert. Her accolades include being recognized by the National Academy of Sciences as a Kavli Fellow, the US President’s Council of Advisors on Science and Tech, MIT Technology Review on its Next Generation of 35 Innovators Under 35 list, Popular Science on its Brilliant 10 list, TEDx as a featured keynote speaker on social robotics, and Texas Monthly on its Most Powerful Texans of 2018 list. Andrea’s robots have been featured in the New York Times and on the covers of MIT Technology Review and Popular Science. Her passion for social robotics began during her work at the MIT Media Lab, where she focused on using AI to develop machines that address everyday human needs. Andrea co-founded Diligent Robotics to pursue her vision of creating socially intelligent robot assistants that collaborate with humans by doing their chores so humans can have more time for the work they care most about. She earned her Ph.D. from MIT and her B.S. in Electrical and Computer Engineering from UT Austin, and was a Robotics Professor at UT Austin and Georgia Tech (where she directed the Socially Intelligent Machines Lab). Andrea is published in the areas of Artificial Intelligence, Robotics, and Human-Robot Interaction. Her research aims to computationally model mechanisms of human social learning in order to build social robots and other machines that are intuitive for everyday people to teach. Andrea received an Office of Naval Research Young Investigator Award in 2008 and an NSF CAREER award in 2010. In addition, Diligent Robotics’ robot Moxi has been featured on NBC Nightly News and, most recently, in the National Geographic article “The robot revolution has arrived”.