Titan Medical, Medtronic agree to cooperate on surgical robotics development

Developing systems for robot-assisted surgery is difficult: developers must meet stringent clinical requirements, obtain regulatory approvals, and keep costs under control. Today, Titan Medical Inc. announced an agreement with Medtronic PLC to advance the design and development of surgical robots. The onetime rivals also signed a licensing agreement regarding some of Titan’s intellectual property.

Under the agreement, both companies can develop robot-assisted surgical systems in their respective businesses, while Titan will receive a series of payments that could total $31 million in return for Medtronic’s license to the technologies. The payments will arrive as milestones are completed and verified.

Milestones include fundraising

A steering committee including representatives from Toronto-based Titan Medical and Dublin, Ireland-based Medtronic will oversee work toward achievement of the milestones. One of them is for Titan to raise an additional $18 million in capital within four months of the development start date, which is expected to occur this month.

Titan has also received from Medtronic a senior secured loan of $1.5 million, which will be increased by an amount equal to certain legal expenses related to transactions and intellectual property. The loan carries an interest rate of 8% per annum and is repayable on June 4, 2023, or upon the earlier completion of the last milestone.

Until the loan is repaid, Medtronic may have one non-voting observer on Titan’s board of directors. Charles Federico, who has served as Titan’s chairman since May 2019, and John Schellhorn, who has served as a director since June 2017, have decided to retire from the board. While a search for additional independent directors is conducted, the board will consist of three members: David McNally, Titan’s president and CEO; John Barker, an independent director; and Stephen Randall, Titan’s chief financial officer.

Medtronic pays $10M for Titan Medical surgical robot licenses

Under the terms of the separate agreement, Medtronic has licensed certain robot-assisted surgical technologies from Titan for an upfront payment of $10 million. Titan said it retains the rights to continue to develop and commercialize those technologies for its own business.

“These agreements with Medtronic will allow Titan to continue to develop its single-port robotic surgical technologies while sharing our expertise and technologies with Medtronic,” stated David McNally, president and CEO of Titan Medical. “We are very excited about the opportunity to continue Titan’s pioneering work to bring new single-port surgical options to the market.”

These agreements are between Medtronic and Titan Medical. Titan Medical is not affiliated with Titan Spine, which Medtronic acquired in 2019. The agreements are another step in Medtronic’s effort to break into the robot-assisted surgery space, which remains dominated by Intuitive Surgical and its da Vinci SP.

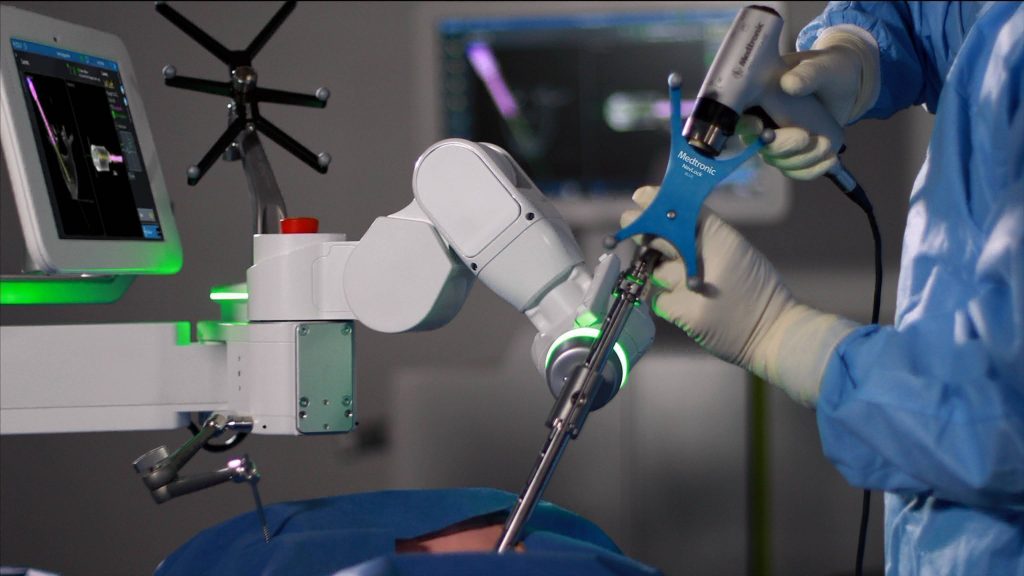

The Mazor X Stealth robot-assisted spinal surgical system. Source: Medtronic

Medtronic completed a $1.7 billion purchase of Mazor Robotics in December 2018. A month later, the company launched its Mazor X Stealth robotic-assisted spinal surgical platform in the U.S. In September 2019, Medtronic unveiled its new Hugo system that is set to rival the da Vinci SP.

Editor’s note: For more about this and other medical device deals, visit our sibling site, MassDevice.

The post Titan Medical, Medtronic agree to cooperate on surgical robotics development appeared first on The Robot Report.