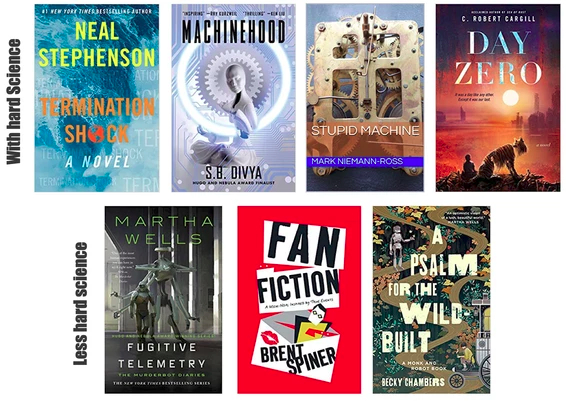

Robot science fiction books of 2021

2021 produced four new scifi books with good hard science underpinning their description of robots and three where there was less science but lots of interesting ideas about robots. Not only are these books enjoyable on their own, but fiction can also serve as a teachable moment in robotics and STEM and inspire a robot-obsessed teen to read more and improve their reading comprehension.

- Termination Shock

- Machinehood

- Stupid Machine

- Day Zero

- Fugitive Telemetry

- Fan Fiction

- A Psalm for the Wild-Built

Let’s start with the scifi book I most frequently recommended to friends in 2021: Termination Shock by Neal Stephenson. It is not a robot book per se, but robots and automation are realistically interspersed throughout it, and the book is one of Stephenson’s best, pulling together LOTS of technology, subplots, and themes, similar to what he did in Diamond Age. One of the technology threads is how drones are ubiquitous throughout the book, with small drones being used singly or in swarms for surveillance and social media and bigger drones used for delivery, human transport, and, well, mayhem. Nominally the book is about climate change and how a group of individuals led by a rich Texan plan to cut through the COP26 meetings blather and get on with geoengineering the environment. Except money and geoengineering are the easy part… It’s a dramedy of a book and manages to never lecture or push political agendas; instead, it is hard science wrapped with memorable characters, a compelling plot, and a sense of humor, with an “oh my!” twist at the end.

The book is a great way to think about how drones are becoming subtly integrated as tools into military, security, and journalism work, and about how, at the end of the day, despite the huge investment in anti-drone technology, a shotgun with bird shot may be our best defense against small drones. Check out the RTSF topics page for more links to the science.

Unlike Termination Shock, robots and AI *are* the subject of S.B. Divya’s Machinehood. It’s a thorny book with a piercingly sharp commentary on the gig economy, climate change, automation, and ethics. A rogue neo-Buddhist decides that intelligent machines deserve legal rights and protections, similar to animals, which she calls the Machinehood Manifesto. Then she leads a terrorist cell to force governments to incorporate machinehood protections into their legal framework. A SpecialOps operator is tasked to take her down, which she does with the help of her sister-in-law. Lots of action, lots of ideas, lots of realism and full of thought-provoking jabs. The book echoes real-world arguments since the 1980s about treating robots as animals from a legal perspective.

It is a wonderful, very useful introduction to issues in robot ethics and autonomy and the very real concept of treating robots like animals- which is covered in the non-fiction book The New Breed by Kate Darling. You can read more about that in my recent Science Robotics article.

Stupid Machine by Mark Niemann-Ross is a comedy with an interesting and timely plot about autonomous cars, cyber hackers, and social justice warriors in Portland. It would be tempting to hack an autonomous car to drive an annoying semi-full time, I-protest-everything activist off a bridge, wouldn’t it? Plus there is a nice Ubik-like smart house subplot. Not as well-written, plotted, and memorable as Termination Shock and Machinehood, but a quick, easy read.

The idea that autonomous cars will be both ubiquitous and hackable makes it a nice teachable moment about cybersecurity. A recent scifi book that explains more about the workings of autonomous driving is David Walton’s Three Laws Lethal. You can read my Science Robotics article on autonomous cars in scifi here.

Like Machinehood, Day Zero by C. Robert Cargill is set in a near future. It’s the prequel to Sea of Rust, one of my all-time favorite robot scifi books. Sea of Rust is an evocative story about what happens after the robot revolution and how the robots themselves descend into a kind of Mad Max hell. Day Zero isn’t as striking as Sea of Rust, but it is very enjoyable as a prequel. If you loved Spielberg’s AI: Artificial Intelligence, then you’ll doubly love Day Zero because it is told from the POV of Pounce, the Teddy-like robot, who protects his boy Ezra during the robot revolution. You don’t have to read Sea of Rust first, since Day Zero is a standalone, but I recommend you do. I hope there are more books in the Sea of Rust series!

In terms of robotics, Day Zero is a good introduction to nursebots, healthcare robots, and domestic assistance robots. You can learn more about the science of nannybots at the Science Robotics article and about domestic robotics, sometimes called domotics, at Science Robotics here.

Of course, there was a lot of other robot science fiction in 2021, just with less science. Here are three books to consider that have some robots in them, along with some real-world science.

The Murderbot Diaries by Martha Wells is one of my top “you’ve got to read!” recommendations. The latest addition, Fugitive Telemetry, continues the delightful Bildungsroman of the galaxy’s snarkiest robot. ROFL as always, it maintains the usual clever plotting and action that make the Murderbot Diaries a favorite of both the Hugo and Nebula awards. And Murderbot, like the humans in Termination Shock, has a swarm of drones and knows how to use them. Oh, SecUnit, I love you!

And I love the series as a way of illustrating real-world problems in software engineering and cybersecurity for robotics. Check out the discussions of software engineering in the first book and the Internet of Things in the fourth.

It’s hard not to like Lieutenant Commander Data on Star Trek and Picard, right? Well, it’s hard not to like Brent Spiner, the nice Jewish boy from Houston who grew up to write a funny, self-mocking semi-fictional autobiography as well as star in a hit TV series. He calls his book, Fan Fiction, a “mem-noir” where an actor, conveniently named Brent Spiner, on the third season of a modest hit conveniently called “ST:TNG” is being stalked by someone purporting to be Lal, Data’s short-lived robot daughter. The hapless actor finds it is as if he is living in a Raymond Chandler novel. Brent reflects on his life and how he got to this point in his career as he tries to go about shooting episodes, going to parties at the Roddenberrys, signing autographs at cons, and hanging out with Patrick Stewart, LeVar Burton, and Jonathan Frakes. Yet Spiner comes across as a regular guy, grounded and grateful- and amused- at “making it” in Hollywood. It makes me long for a follow-up: what was life like for that actor after the years of grueling 16-hour days on set and the increasing fame?

OK, there is not much there in terms of teachable moments about robots, but it is still fun.

A Psalm for the Wild-Built by Becky Chambers, the reigning comfort lit scifi writer (and that’s a good thing!), is a sentimental solarpunk book. The book doesn’t have a lot of action but could be perfect for middle schoolers (though some f-bombs are dropped) or a read-aloud to younger children. Or something just to enjoy instead of listening to Lake Wobegon tales or re-reading Cadfael books. The premise is that in a future world, robots spontaneously gained sentience and then left human-occupied territories to explore being a robot. Now they are back, self-actualized, and ready to explore humanity by asking “what do humans need?”

Doesn’t sound like there’s much about real robots, does it? And yet, it has one of the most cogent explanations of agency, of what makes something more than a machine, which is a fundamental concept in artificial intelligence and in how autonomy is different than automation.

Hopefully this list of books gives you something to read and, more importantly, something to think about in 2022!

How robots learn to hike

The legged robot ANYmal on the rocky path to the summit of Mount Etzel, which stands 1,098 metres above sea level. (Photo: Takahiro Miki)

By Christoph Elhardt

Steep sections on slippery ground, high steps, scree and forest trails full of roots: the path up the 1,098-metre-high Mount Etzel at the southern end of Lake Zurich is peppered with numerous obstacles. But ANYmal, the quadrupedal robot from the Robotic Systems Lab at ETH Zurich, overcomes the 120 vertical metres effortlessly in a 31-minute hike. That’s 4 minutes faster than the estimated duration for human hikers – and with no falls or missteps.

This is made possible by a new control technology, which researchers at ETH Zurich led by robotics professor Marco Hutter recently presented in the journal Science Robotics. “The robot has learned to combine visual perception of its environment with proprioception – its sense of touch – based on direct leg contact. This allows it to tackle rough terrain faster, more efficiently and, above all, more robustly,” Hutter says. In the future, ANYmal can be used anywhere that is too dangerous for humans or too impassable for other robots.

Video: Nicole Davidson / ETH Zurich

Perceiving the environment accurately

To navigate difficult terrain, humans and animals quite automatically combine the visual perception of their environment with the proprioception of their legs and hands. This allows them to easily handle slippery or soft ground and move around with confidence, even when visibility is low. Until now, legged robots have been able to do this only to a limited extent.

“The reason is that the information about the immediate environment recorded by laser sensors and cameras is often incomplete and ambiguous,” explains Takahiro Miki, a doctoral student in Hutter’s group and lead author of the study. For example, tall grass, shallow puddles or snow appear as insurmountable obstacles or are partially invisible, even though the robot could actually traverse them. In addition, the robot’s view can be obscured in the field by difficult lighting conditions, dust or fog.

“That’s why robots like ANYmal have to be able to decide for themselves when to trust the visual perception of their environment and move forward briskly, and when it is better to proceed cautiously and with small steps,” Miki says. “And that’s the big challenge.”

A virtual training camp

Thanks to a new controller based on a neural network, the legged robot ANYmal, which was developed by ETH Zurich researchers and commercialized by the ETH spin-off ANYbotics, is now able to combine external and proprioceptive perception for the first time. Before the robot could put its capabilities to the test in the real world, the scientists exposed the system to numerous obstacles and sources of error in a virtual training camp. This let the network learn the ideal way for the robot to overcome obstacles, as well as when it can rely on environmental data – and when it would do better to ignore that data.

“With this training, the robot is able to master the most difficult natural terrain without having seen it before,” says ETH Zurich Professor Hutter. This works even if the sensor data on the immediate environment is ambiguous or vague. ANYmal then plays it safe and relies on its proprioception. According to Hutter, this allows the robot to combine the best of both worlds: the speed and efficiency of external sensing and the safety of proprioceptive sensing.
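The idea of trusting external sensing when it is reliable and falling back on proprioception when it is not can be illustrated with a toy gating rule. This is only a sketch: the ETH controller is a learned neural network, and the function name `fuse_height`, the `extero_confidence` score, and the linear blend below are assumptions made for illustration, not the published method.

```python
def fuse_height(extero_height, proprio_height, extero_confidence):
    """Blend two terrain-height estimates (in metres) for one foothold.

    extero_confidence is a hypothetical score in [0, 1]: 1.0 means the
    camera/laser map is fully trusted, 0.0 means it is ignored in favour
    of what the legs feel.
    """
    # Clamp so that out-of-range confidences cannot extrapolate the blend.
    w = min(1.0, max(0.0, extero_confidence))
    return w * extero_height + (1.0 - w) * proprio_height


# Tall grass: the map reports a 0.30 m obstacle, but leg contact
# suggests the ground is actually flat (0.0 m).
trusted = fuse_height(0.30, 0.0, extero_confidence=1.0)
doubtful = fuse_height(0.30, 0.0, extero_confidence=0.0)
print(trusted, doubtful)
```

In the real system the equivalent of this weighting is learned in simulation rather than hand-set, which is what lets the robot decide for itself when the environmental data deserves to be ignored.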

Use under extreme conditions

Whether after an earthquake, after a nuclear disaster, or during a forest fire, robots like ANYmal can be used primarily wherever it is too dangerous for humans and where other robots cannot cope with the difficult terrain.

In September of last year, ANYmal was able to demonstrate just how well the new control technology works at the DARPA Subterranean Challenge, the world’s best-known robotics competition. The ETH Zurich robot automatically and quickly overcame numerous obstacles and difficult terrain while autonomously exploring an underground system of narrow tunnels, caves, and urban infrastructure. This was a major part of why the ETH Zurich researchers, as part of the CERBERUS team, took first place with a prize of 2 million dollars.

How robots and bubbles could soon help clean up underwater litter

Free-ranging Risso’s dolphin (Grampus griseus) swimming with plastic litter © Massimiliano Rosso for Maelstrom H2020 project

By Sandrine Ceurstemont

If you happened to be around the coast of Dubrovnik, Croatia in September 2021, you might have spotted two robots scouring the seafloor for debris. The robots were embarking on their inaugural mission and being tested in a real-world environment for the first time, to gauge their ability to perform certain tasks such as recognising garbage and manoeuvring underwater. ‘We think that our project is the first one that will collect underwater litter in an automatic way with robots,’ said Dr Bart De Schutter, a professor at Delft University of Technology in the Netherlands and coordinator of the SeaClear project.

The robots are an example of the innovations being developed to clean up underwater litter. Oceans are thought to contain between 22 and 66 million tonnes of waste, about 94% of which is located on the seafloor. The type of waste can differ from area to area: fishing equipment discarded by fishermen, such as nets, is prevalent in some coastal areas, while plastic and glass bottles are mostly found in others. ‘We also sometimes see construction material (in the water) like blocks of concrete or tyres and car batteries,’ said Dr De Schutter.

When litter enters oceans and seas it can be carried by currents to different parts of the world and even pollute remote areas. Marine animals can be affected if they swallow garbage or are trapped in it while human health is also at risk if tiny pieces end up in our food. ‘It’s a very serious problem that we need to tackle,’ said Dr Fantina Madricardo, a researcher at the Institute of Marine Sciences – National Research Council (ISMAR-CNR) in Venice, Italy and coordinator of the Maelstrom project.

Human divers are currently deployed to pick up waste in some marine areas but it’s not an ideal solution. Experienced divers are needed, which can be hard to find, while the amount of time they can spend underwater is limited by their air supply. Some areas may also be unsafe for humans, due to contamination for example. ‘These are aspects that the automated system we are developing can overcome,’ said Dr De Schutter. ‘(It) will be much more efficient, cost effective and safer than the current solution which is based on human divers.’

SeaClear’s ROV TORTUGA is known as ‘the cleaner’ robot. It collects the litter from the seafloor. © SeaClear, 2021

A team of litter-seeking robots

Dr De Schutter and his team are building a prototype of their system for the SeaClear project, which is made up of four different robots that will work collaboratively. A robotic vessel, which remains on the water’s surface, will act as a hub by providing electrical power to the other robots and will contain a computer that is the main brain of the system. The three other robots – two that operate underwater and an aerial drone – will be tethered to the vessel.

One underwater robot will be responsible for finding litter by venturing close to the sea floor to take close-up scans using cameras and sonar. The drone will also help search for garbage when the water is clear by flying over an area of interest, while in murky areas it will look out for obstacles to avoid such as ships. The system will be able to distinguish between litter and other items on the seafloor, such as animals and seaweed, by using artificial intelligence. An algorithm will be trained with several images of various items it might encounter, from plastic bottles to fish, so that it learns to tell them apart and identify trash.

Litter collection will be taken care of by the second underwater robot, which will pick up items mapped out by its companions. Equipped with a gripper and a suction device, it will collect pieces of waste and deposit them into a tethered basket placed on the seafloor that will later be brought to the surface. ‘We did some initial tests near Dubrovnik where one plastic bottle was deposited on purpose and we collected it with a gripper robot,’ said Dr De Schutter. ‘We will have more experiments where we will try to recognise more pieces of trash in more difficult circumstances and then collect them with the robot.’

Impact on underwater clean-up

Dr De Schutter and his colleagues think that their system will eventually be able to detect up to 90% of litter on the seafloor and collect about 80% of what it identifies. This is in line with some of the objectives of the EU Mission Restore Our Oceans and Waters by 2030, which is aiming to eliminate pollution and restore marine ecosystems by reducing litter at sea.
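Taken together, the two targets imply an upper bound on how much of the total seafloor litter would actually be removed. The short calculation below is a sketch under the assumption that the collection rate applies only to litter that has been detected and that the two rates simply multiply; the function name is hypothetical and the combined figure is not one quoted by the project.

```python
def overall_collection_rate(detection_rate, collection_rate):
    """Fraction of all seafloor litter that ends up collected.

    Assumes only detected litter can be collected and that the two
    rates are independent (an illustrative assumption).
    """
    return detection_rate * collection_rate


rate = overall_collection_rate(0.90, 0.80)
print(f"{rate:.0%}")  # prints 72%
```

In other words, detecting up to 90% and collecting about 80% of what is identified would remove at most roughly 72% of the litter in a surveyed area.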

When the project is over at the end of 2023, the team expects to sell about ten of their automated systems in the next five to seven years. They think it will be of interest to local governments in coastal regions, especially in touristic areas, while companies may also be interested in buying the system and providing a clean-up service or renting out the robots. ‘These are the two main directions that we are looking at,’ said Dr De Schutter.

Homing in on litter hotspots

Another team is also developing a robotic system to tackle garbage on the seafloor as part of the Maelstrom project. However, their first step is to identify hotspots underwater where litter accumulates so that they will know where it should be deployed. Different factors such as water currents, the speed at which a particular discarded item sinks, and underwater features such as canyons all affect where litter will pool. ‘We are developing a mathematical model that can predict where the litter will end up,’ said Dr Madricardo.
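To see why sinking speed and currents matter, consider a deliberately simplified drift estimate. This is a sketch only, assuming a constant current and a constant sinking speed; the function name and the example values are hypothetical, and the Maelstrom model itself (which also accounts for features such as canyons) is not described in enough detail here to reproduce.

```python
def horizontal_drift(depth_m, sink_speed_m_s, current_speed_m_s):
    """Distance (m) an item is carried sideways while sinking to the seafloor.

    Assumes a constant current and a constant sinking speed, which real
    waters do not provide; this is an illustration, not the project model.
    """
    if sink_speed_m_s <= 0:
        raise ValueError("sink speed must be positive")
    time_to_bottom_s = depth_m / sink_speed_m_s
    return current_speed_m_s * time_to_bottom_s


# Hypothetical values: 20 m of water and a 0.5 m/s current.
fast_sinker = horizontal_drift(20.0, sink_speed_m_s=0.25, current_speed_m_s=0.5)
slow_sinker = horizontal_drift(20.0, sink_speed_m_s=0.05, current_speed_m_s=0.5)
print(fast_sinker, slow_sinker)
```

Even in this toy version, an item that sinks five times more slowly lands five times farther from where it entered the water, which hints at why different kinds of litter pool in different places.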

Their robotic system, which is being tested near Venice, is composed of a floating platform with eight cables connected to a mobile robot that moves around on the seafloor beneath it. The robot collects waste items in a box, using a gripper, hook or suction device depending on the size of the litter. Its position and orientation can be controlled by adjusting the length and tension of the cables, and it will initially be operated by a human on the platform. However, using artificial intelligence, the robot will learn to recognise different objects and will eventually be able to function independently.
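The relationship between cable length and robot position can be sketched with basic geometry. The example below is a minimal illustration that treats the robot as a single point and each cable as a straight line; the anchor layout, the platform size, and the function name are all assumptions, and cable tension, sag, and the robot's orientation are ignored.

```python
import math


def cable_lengths(anchors, robot_position):
    """Straight-line cable length (m) from each platform anchor to the robot.

    Treats the robot as a point and ignores cable sag and stretch.
    """
    return [math.dist(anchor, robot_position) for anchor in anchors]


# Eight hypothetical anchors: corners and edge midpoints of a
# 4 m x 4 m platform floating at z = 0.
anchors = [
    (x, y, 0.0)
    for x in (-2.0, 0.0, 2.0)
    for y in (-2.0, 0.0, 2.0)
    if (x, y) != (0.0, 0.0)
]

# Robot hanging 5 m directly below the centre of the platform.
lengths = cable_lengths(anchors, robot_position=(0.0, 0.0, -5.0))
print([round(length, 2) for length in lengths])
```

Moving the target position and recomputing the list gives the lengths each winch would need to pay out or reel in for this idealised geometry.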

Repurposing underwater litter

Dr Madricardo and her colleagues are also aiming to recycle all the litter that is picked up. A second robot will be tasked with sorting through the retrieved waste and classifying it based on what it is made of, such as organic material, plastic or textiles. Then, the project is teaming up with industrial partners involved in different types of recycling, from plastic to chemical to fibreglass, to transform what they have recovered.

Dirty and mixed waste plastics are difficult to recycle, so the team used a portable pyrolysis plant developed under the earlier marGnet project to turn waste plastic into fuel to power their removal technology. This fits with the EU’s goal to move towards a circular economy, where existing products and materials are repurposed for as long as possible, as part of the European Green Deal and Plastics Strategy. ‘We want to demonstrate that you can really try to recycle everything, which is not easy,’ said Dr Madricardo.

Harnessing bubbles to clean up rivers

Dr Madricardo and her colleagues are also developing a second technology focussed on removing litter floating in rivers so that it can be intercepted before it reaches the sea. A curtain of bubbles, called a Bubble Barrier, will be created by pumping air through a perforated tube placed on the bottom of a river, which produces an upwards current to direct litter towards the surface and eventually to the banks where it is collected.

The system has been tested in canals in the Netherlands and is currently being trialled in a river north of Porto in Portugal, where it is expected to be implemented in June. ‘It’s a simple idea that does not have an impact on (boat) navigation,’ said Dr Madricardo. ‘We believe it will not have a negative impact on fauna either, but we will check that.’

Although new technologies will help tackle underwater litter, Dr Madricardo and her team are also aiming to reduce the amount of waste that ends up in water bodies in the first place. The Maelstrom project therefore involves outreach efforts, such as organised coastal clean-up campaigns, to inform and engage citizens about what they can do to limit marine litter. ‘We really believe that a change (in society) is needed,’ said Dr Madricardo. ‘There are technologies (available) but we also need to make a collective effort to solve this problem.’

The research in this article was funded by the EU.

Maria Gini wins the 2022 ACM/SIGAI Autonomous Agents Research Award

Congratulations to Professor Maria Gini on winning the ACM/SIGAI Autonomous Agents Research Award for 2022! This prestigious prize recognises years of research and leadership in the field of robotics and multi-agent systems.

Maria Gini is Professor of Computer Science and Engineering at the University of Minnesota, and has been at the forefront of the field of robotics and multi-agent systems for many years, consistently bringing AI into robotics.

Her work includes the development of:

- novel algorithms to connect the logical and geometric aspects of robot motion and learning,

- novel robot programming languages to bridge the gap between high-level programming languages and programming by guidance,

- pioneering novel economic-based multi-agent task planning and execution algorithms.

Her work has spanned both the design of novel algorithms and practical applications. These applications have been utilized in settings as varied as warehouses and hospitals, with uses such as surveillance, exploration, and search and rescue.

Maria has been an active member and leader of the agents community since its inception. She has been a consistent mentor and role model, deeply committed to bringing diversity to the fields of AI, robotics, and computing. She is also the former President of the International Foundation for Autonomous Agents and Multiagent Systems (IFAAMAS).

Maria will be giving an invited talk at AAMAS 2022. More details on this will be available soon on the conference website.

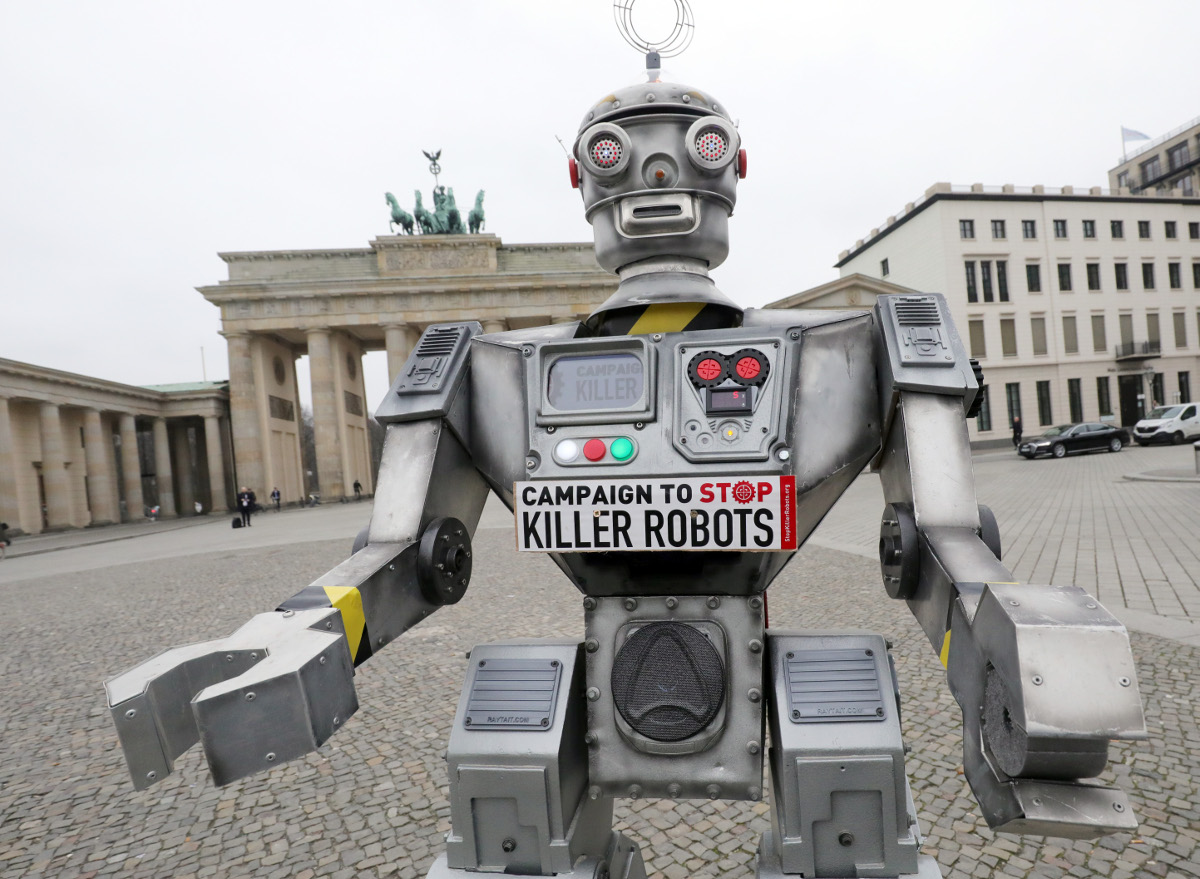

UN fails to agree on ‘killer robot’ ban as nations pour billions into autonomous weapons research

Humanitarian groups have been calling for a ban on autonomous weapons. Wolfgang Kumm/picture alliance via Getty Images

By James Dawes

Autonomous weapon systems – commonly known as killer robots – may have killed human beings for the first time ever last year, according to a recent United Nations Security Council report on the Libyan civil war. History could well identify this as the starting point of the next major arms race, one that has the potential to be humanity’s final one.

The United Nations Convention on Certain Conventional Weapons debated the question of banning autonomous weapons at its once-every-five-years review meeting in Geneva Dec. 13-17, 2021, but didn’t reach consensus on a ban. Established in 1983, the convention has been updated regularly to restrict some of the world’s cruelest conventional weapons, including land mines, booby traps and incendiary weapons.

Autonomous weapon systems are robots with lethal weapons that can operate independently, selecting and attacking targets without a human weighing in on those decisions. Militaries around the world are investing heavily in autonomous weapons research and development. The U.S. alone budgeted US$18 billion for autonomous weapons between 2016 and 2020.

Meanwhile, human rights and humanitarian organizations are racing to establish regulations and prohibitions on such weapons development. Without such checks, foreign policy experts warn that disruptive autonomous weapons technologies will dangerously destabilize current nuclear strategies, both because they could radically change perceptions of strategic dominance, increasing the risk of preemptive attacks, and because they could be combined with chemical, biological, radiological and nuclear weapons themselves.

As a specialist in human rights with a focus on the weaponization of artificial intelligence, I find that autonomous weapons make the unsteady balances and fragmented safeguards of the nuclear world – for example, the U.S. president’s minimally constrained authority to launch a strike – more unsteady and more fragmented. Given the pace of research and development in autonomous weapons, the U.N. meeting might have been the last chance to head off an arms race.

Lethal errors and black boxes

I see four primary dangers with autonomous weapons. The first is the problem of misidentification. When selecting a target, will autonomous weapons be able to distinguish between hostile soldiers and 12-year-olds playing with toy guns? Between civilians fleeing a conflict site and insurgents making a tactical retreat?

Killer robots, like the drones in the 2017 short film ‘Slaughterbots,’ have long been a major subgenre of science fiction. (Warning: graphic depictions of violence.)

The problem here is not that machines will make such errors and humans won’t. It’s that the difference between human error and algorithmic error is like the difference between mailing a letter and tweeting. The scale, scope and speed of killer robot systems – ruled by one targeting algorithm, deployed across an entire continent – could make misidentifications by individual humans like a recent U.S. drone strike in Afghanistan seem like mere rounding errors by comparison.

Autonomous weapons expert Paul Scharre uses the metaphor of the runaway gun to explain the difference. A runaway gun is a defective machine gun that continues to fire after a trigger is released. The gun continues to fire until ammunition is depleted because, so to speak, the gun does not know it is making an error. Runaway guns are extremely dangerous, but fortunately they have human operators who can break the ammunition link or try to point the weapon in a safe direction. Autonomous weapons, by definition, have no such safeguard.

Importantly, weaponized AI need not even be defective to produce the runaway gun effect. As multiple studies on algorithmic errors across industries have shown, the very best algorithms – operating as designed – can generate internally correct outcomes that nonetheless spread terrible errors rapidly across populations.

For example, a neural net designed for use in Pittsburgh hospitals identified asthma as a risk-reducer in pneumonia cases; image recognition software used by Google identified Black people as gorillas; and a machine-learning tool used by Amazon to rank job candidates systematically assigned negative scores to women.

The problem is not just that when AI systems err, they err in bulk. It is that when they err, their makers often don’t know why they did and, therefore, how to correct them. The black box problem of AI makes it almost impossible to imagine morally responsible development of autonomous weapons systems.

The proliferation problems

The next two dangers are the problems of low-end and high-end proliferation. Let’s start with the low end. The militaries developing autonomous weapons now are proceeding on the assumption that they will be able to contain and control the use of autonomous weapons. But if the history of weapons technology has taught the world anything, it’s this: Weapons spread.

Market pressures could result in the creation and widespread sale of what can be thought of as the autonomous weapon equivalent of the Kalashnikov assault rifle: killer robots that are cheap, effective and almost impossible to contain as they circulate around the globe. “Kalashnikov” autonomous weapons could get into the hands of people outside of government control, including international and domestic terrorists.

The Kargu-2, made by a Turkish defense contractor, is a cross between a quadcopter drone and a bomb. It has artificial intelligence for finding and tracking targets, and might have been used autonomously in the Libyan civil war to attack people. Ministry of Defense of Ukraine, CC BY

High-end proliferation is just as bad, however. Nations could compete to develop increasingly devastating versions of autonomous weapons, including ones capable of mounting chemical, biological, radiological and nuclear arms. The moral dangers of escalating weapon lethality would be amplified by escalating weapon use.

High-end autonomous weapons are likely to lead to more frequent wars because they will decrease two of the primary forces that have historically prevented and shortened wars: concern for civilians abroad and concern for one’s own soldiers. The weapons are likely to be equipped with expensive ethical governors designed to minimize collateral damage, using what U.N. Special Rapporteur Agnes Callamard has called the “myth of a surgical strike” to quell moral protests. Autonomous weapons will also reduce both the need for and risk to one’s own soldiers, dramatically altering the cost-benefit analysis that nations undergo while launching and maintaining wars.

Asymmetric wars – that is, wars waged on the soil of nations that lack competing technology – are likely to become more common. Think about the global instability caused by Soviet and U.S. military interventions during the Cold War, from the first proxy war to the blowback experienced around the world today. Multiply that by every country currently aiming for high-end autonomous weapons.

Undermining the laws of war

Finally, autonomous weapons will undermine humanity’s final stopgap against war crimes and atrocities: the international laws of war. These laws, codified in treaties reaching as far back as the 1864 Geneva Convention, are the international thin blue line separating war with honor from massacre. They are premised on the idea that people can be held accountable for their actions even during wartime, that the right to kill other soldiers during combat does not give the right to murder civilians. A prominent example of someone held to account is Slobodan Milosevic, former president of the Federal Republic of Yugoslavia, who was indicted on charges of crimes against humanity and war crimes by the U.N.’s International Criminal Tribunal for the Former Yugoslavia.

But how can autonomous weapons be held accountable? Who is to blame for a robot that commits war crimes? Who would be put on trial? The weapon? The soldier? The soldier’s commanders? The corporation that made the weapon? Nongovernmental organizations and experts in international law worry that autonomous weapons will lead to a serious accountability gap.

To hold a soldier criminally responsible for deploying an autonomous weapon that commits war crimes, prosecutors would need to prove both actus reus and mens rea, Latin terms describing a guilty act and a guilty mind. This would be difficult as a matter of law, and possibly unjust as a matter of morality, given that autonomous weapons are inherently unpredictable. I believe the distance separating the soldier from the independent decisions made by autonomous weapons in rapidly evolving environments is simply too great.

The legal and moral challenge is not made easier by shifting the blame up the chain of command or back to the site of production. In a world without regulations that mandate meaningful human control of autonomous weapons, there will be war crimes with no war criminals to hold accountable. The structure of the laws of war, along with their deterrent value, will be significantly weakened.

A new global arms race

Imagine a world in which militaries, insurgent groups and international and domestic terrorists can deploy theoretically unlimited lethal force at theoretically zero risk at times and places of their choosing, with no resulting legal accountability. It is a world where the sort of unavoidable algorithmic errors that plague even tech giants like Amazon and Google can now lead to the elimination of whole cities.

In my view, the world should not repeat the catastrophic mistakes of the nuclear arms race. It should not sleepwalk into dystopia.

This is an updated version of an article originally published on September 29, 2021.

James Dawes does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.