The IEEE International Conference on Robotics and Automation (ICRA) kicks off with the largest in-person participation and number of represented countries ever

Photo credit: Wise Owl Multimedia

The IEEE International Conference on Robotics and Automation (ICRA), taking place simultaneously at the Pennsylvania Convention Center in Philadelphia and virtually, has just kicked off. ICRA 2022 brings together the world’s top researchers and most important companies to share ideas and advances in the fields of robotics and automation.

This is the first time the ICRA community has reunited since the pandemic, resulting in record-breaking attendance, with over 7,000 registrations and 95 countries represented. As the ICRA 2022 Co-Chair Vijay Kumar (University of Pennsylvania, USA) states, “we could not be happier to host the largest robotics conference in the world in Philadelphia, and the beginning of the re-emergence from the pandemic after a three year hiatus. We are back!”

Many important developments in robotics and automation have historically been first presented at ICRA, and in its 39th year, ICRA 2022 promises to take this trend one step further. As the practical and socio-economic impact of our field continues to expand, robotics and automation are increasingly taking center stage in our lives and will play an important role in the Future of Work and Society, the theme of this year’s conference. Indeed, the Future of Work Forum Session being held on Thursday May 26th will specifically address the impact of automation on the future of employment, featuring panelists Jeff Burnstein (A3), Erik Brynjolfsson (Stanford), Moshe Vardi (Rice University), Michael Lotito (Littler), Bernd Liepert (EuRobotics), Cecilia Laschi (NUS), and Ioana Marinescu (University of Pennsylvania). Five more Forums will be happening from Tuesday to Thursday, including an Industry Forum on Tuesday May 24th and a Startup Forum on Wednesday May 25th.

The conference will also feature Plenaries and Keynotes by world-renowned roboticists on topics such as Robot Ethics, Legged Robots for Industrial and Search & Rescue Applications, Robot Farming, Autonomous Logistics and Smart Sensing, as well as 1,500 Technical Talks on the state of the art in robotics. A total of 39 researchers have been nominated for the 13 awards that ICRA 2022 is presenting on Thursday, for their outstanding research contributions in categories such as Automation, Coordination, Interaction, Learning, Locomotion, Manipulation, Navigation and Planning, among others. As the ICRA 2022 Program Chair Hadas Kress-Gazit (Cornell University, USA) comments, “we are so excited to see the latest and greatest in robotics research and meet old and new friends!”

Furthermore, a robot exhibition hall has been prepared with nearly 100 confirmed Exhibitors offering robot displays and demos from companies like Dyson, Motional, Built Robotics, NVIDIA, Technology Innovation Institute, Boston Dynamics, Pal-Robotics, KUKA Robotics, Toyota Research Institute, Tesla and Waymo, among others.

Competitions are also a big part of ICRA 2022. A total of 10 exciting Competitions will be taking place from Monday May 23rd to Friday May 27th, on the following topics: Autonomous Ground & Aerial Robots Navigation, Localization and Mapping, Robot Manipulation, Autonomous Racing, Roboethics, and LEGO League for 12th grade students.

To complete the program of the largest worldwide robotics conference, there will also be several Industry Tech Huddles led by industry experts, Technical Tours to the Singh Center for Nanotechnology, Penn Medicine and the General Robotics, Automation, Sensing and Perception (GRASP) Laboratory, and several Networking Events.

ICRA 2022 is also proud to partner with the RAD Lab and several Philadelphia-based art galleries to offer a central space for art in its program. Building on the previous ICRA robotic art programs, this year’s installment explores aesthetic and creative influences on robot motion through interactive, expressive, and meditative robotic art installations. The exhibition and the associated workshop will provide new perspectives on imagining new technology futures.

“This is a very special conference for the majority of ICRA attendees,” ICRA 2022 General Co-Chair George J. Pappas (University of Pennsylvania, USA) comments. “The reason? 64% of all registrants and 56% of all in-person registrants are attending ICRA for the very first time! Given this influx of fantastic talent to our field, the future of ICRA is as bright as it has ever been.”

Not everyone can attend ICRA in person. That’s why the ICRA Organizing Committee, the IEEE Robotics and Automation Society and OhmniLabs have teamed up to offer access to OhmniLabs’ telepresence robots that will be on site. Three OhmniBots will be in the main exhibition hall (with all the other robots) from opening to closing on Tuesday May 24th, Wednesday May 25th and Thursday May 26th, with time slots aligning with Poster Sessions, networking breaks and Expo Hall hours.

No matter where you are, we hope you enjoy the conference either in person or virtually!

We would like to thank ICRA 2022 Partners, which have also supported us in record numbers this year.

Researchers develop algorithm to divvy up tasks for human-robot teams

Study explores how older adults react while interacting with humanoid robots

Talking Automate 2022 with TM Robotics

ep.353: Autonomous Flight Demo with CMU AirLab – ICRA Day 1, with Sebastian Scherer

Sebastian Scherer from CMU’s AirLab gives us a behind-the-scenes demo at ICRA of their Autonomous Flight Control AI. Their approach aims to cooperate with human pilots and act the way they would.

The team took this approach to create a more natural, less intrusive process for co-habiting human and AI pilots at a single airport. They describe it as a Turing Test, where ideally the human pilot will be unable to distinguish an AI from a person operating the plane.

Their communication system works in parallel with a 6-camera hardware package based on the NVIDIA AGX Dev Kit. This kit measures the angular speed of objects flying across the videos.

In this scheme, high angular velocity means low risk, since the object is moving quickly across the camera’s field of view rather than toward it.

Low angular velocity indicates high risk since the object could be flying directly at the plane, headed for a collision.
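The heuristic described above can be illustrated with a small geometric sketch. This is not the AirLab's implementation; it only shows why an object on a collision course produces almost no angular motion in the image. The function names, the 2D simplification, and the `threshold` value are assumptions made for illustration.

```python
def line_of_sight_angular_speed(rel_pos, rel_vel):
    """Angular speed (rad/s) of the line of sight to an object, in 2D.

    rel_pos: (x, y) position of the object relative to the camera, in metres.
    rel_vel: (vx, vy) velocity of the object relative to the camera, in m/s.
    """
    x, y = rel_pos
    vx, vy = rel_vel
    range_sq = x * x + y * y
    if range_sq == 0.0:
        raise ValueError("object is at the camera position")
    # |r x v| / |r|^2: only the velocity component across the line of sight
    # rotates the bearing; motion straight along it contributes nothing.
    return abs(x * vy - y * vx) / range_sq


def risk_label(angular_speed, threshold=0.01):
    """Hypothetical rule: low angular speed is treated as high risk."""
    return "high" if angular_speed < threshold else "low"


# Object 1 km ahead, flying straight at the camera at 60 m/s.
head_on = line_of_sight_angular_speed((1000.0, 0.0), (-60.0, 0.0))
# Same object, same speed, but crossing the field of view.
crossing = line_of_sight_angular_speed((1000.0, 0.0), (0.0, 60.0))

print("head-on:", head_on, risk_label(head_on))
print("crossing:", crossing, risk_label(crossing))
```

Note that a very distant or slow object also has a low angular speed, so a rule like this flags candidates for attention rather than confirming a collision course.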

Links

- Download mp3 (19.3 MB)

- Subscribe to Robohub using iTunes, RSS, or Spotify

- Support us on Patreon

Building a culture of pioneering responsibly

Twisted soft robots navigate mazes without human or computer guidance

Industrial robots – the perfect worker?

Open-sourcing MuJoCo

ep.352: Robotics Grasping and Manipulation Competition Spotlight, with Yu Sun

Yu Sun, Professor of Computer Science and Engineering at the University of South Florida, created and organized the Robotic Grasping and Manipulation Competition. Yu talks about the impact robots will have in domestic environments, the disparity between industry and academia showcased by competitions, and the commercialization of research.

Yu Sun

Yu Sun is a Professor in the Department of Computer Science and Engineering at the University of South Florida (Assistant Professor 2009-2015, Associate Professor 2015-2020, Associate Chair of Graduate Affairs 2018-2020). He was a Visiting Associate Professor at Stanford University from 2016 to 2017, and received his Ph.D. degree in Computer Science from the University of Utah in 2007. He then completed postdoctoral training at Mitsubishi Electric Research Laboratories (MERL), Cambridge, MA (2007-2008) and the University of Utah (2008-2009).

He initiated the IEEE RAS Technical Committee on Robotic Hands, Grasping, and Manipulation and served as its first co-Chair. Yu Sun also served on several editorial boards as an Associate Editor and Senior Editor, including IEEE Transactions on Robotics, IEEE Robotics and Automation Letters (RA-L), ICRA, and IROS.

Links

- Download mp3 (49.0 MB)

ep.351: Early Days of ICRA Competitions, with Bill Smart

Bill Smart, Professor of Mechanical Engineering and Robotics at Oregon State University, helped start competitions as part of ICRA. In this episode, Bill dives into the high-level decisions involved with creating a meaningful competition. The conversation explores how competitions are there to showcase research, potential ideas for future competitions, the exciting phase of robotics we are currently in, and the intersection of robotics, ethics, and law.

Bill Smart

Dr. Smart does research in the areas of robotics and machine learning. In robotics, Smart is particularly interested in improving the interactions between people and robots; enabling robots to be self-sufficient for weeks and months at a time; and determining how they can be used as personal assistants for people with severe motor disabilities. In machine learning, Smart is interested in developing strategies for teaching robots to act effectively (or even optimally), based on long-term interactions with the world and given intermittent and at times incorrect feedback on their performance.

Links

- Download mp3 (29.1 MB)

Soft Grippers Can Handle Small and Delicate Parts With Greater Ease

New imaging method makes tiny robots visible in the body

By Florian Meyer

How can a blood clot be removed from the brain without any major surgical intervention? How can a drug be delivered precisely into a diseased organ that is difficult to reach? Those are just two examples of the countless innovations envisioned by researchers in the field of medical microrobotics. Tiny robots promise to fundamentally change future medical treatments: one day, they could move through a patient’s vasculature to eliminate malignancies, fight infections or provide precise diagnostic information entirely noninvasively. In principle, so the researchers argue, the circulatory system might serve as an ideal delivery route for the microrobots, since it reaches all organs and tissues in the body.

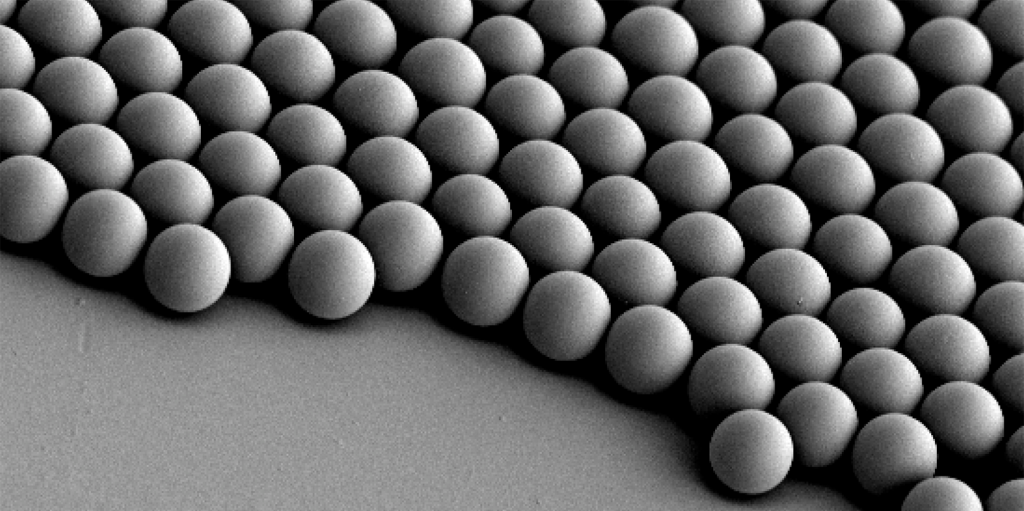

For such microrobots to be able to perform the intended medical interventions safely and reliably, they must not be larger than a biological cell. In humans, a cell has an average diameter of 25 micrometres – a micrometre is one millionth of a metre. The smallest blood vessels in humans, the capillaries, are even thinner: their average diameter is only 8 micrometres. The microrobots must be correspondingly small if they are to pass through the smallest blood vessels unhindered. However, such a small size also makes them invisible to the naked eye – and science, too, has not yet found a technical solution to detect and track the micron-sized robots individually as they circulate in the body.

Tracking circulating microrobots for the first time

“Before this future scenario becomes reality and microrobots are actually used in humans, the precise visualisation and tracking of these tiny machines is absolutely necessary,” says Paul Wrede, who is a doctoral fellow at the Max Planck ETH Center for Learning Systems (CLS). “Without imaging, microrobotics is essentially blind,” adds Daniel Razansky, Professor of Biomedical Imaging at ETH Zurich and the University of Zurich and a member of the CLS. “Real-time, high-resolution imaging is thus essential for detecting and controlling cell-sized microrobots in a living organism.” Further, imaging is also a prerequisite for monitoring therapeutic interventions performed by the robots and verifying that they have carried out their task as intended. “The lack of ability to provide real-time feedback on the microrobots was therefore a major obstacle on the way to clinical application.”

Together with Metin Sitti, a world-leading microrobotics expert who is also a CLS member as Director at the Max Planck Institute for Intelligent Systems (MPI-IS) and ETH Professor of Physical Intelligence, and other researchers, the team has now achieved an important breakthrough in efficiently merging microrobotics and imaging. In a study just published in the scientific journal Science Advances, they managed for the first time to clearly detect and track tiny robots as small as five micrometres in real time in the brain vessels of mice using a non-invasive imaging technique.

The researchers used microrobots with sizes ranging from 5 to 20 micrometres. The tiniest robots are about the size of red blood cells, which are 7 to 8 micrometres in diameter. This size makes it possible for the intravenously injected microrobots to travel even through the thinnest microcapillaries in the mouse brain.
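As a rough illustration of the size figures quoted in this article, the following sketch compares microrobot diameters with the 8-micrometre average human capillary diameter mentioned earlier. It treats the robot as a rigid sphere and the vessel as a rigid tube, which is a simplifying assumption and not the analysis performed in the study. The constant and function names are hypothetical.

```python
# Average human capillary diameter quoted in the article, in micrometres.
CAPILLARY_DIAMETER_UM = 8.0


def fits_rigidly(robot_diameter_um, vessel_diameter_um=CAPILLARY_DIAMETER_UM):
    """Return True if a rigid sphere is no wider than a rigid vessel."""
    return robot_diameter_um <= vessel_diameter_um


# Smallest, intermediate, and largest robot sizes within the 5-20 um range.
for diameter_um in (5.0, 10.0, 20.0):
    print(f"{diameter_um} um robot fits: {fits_rigidly(diameter_um)}")
```

Under these assumptions, only robots at the small end of the 5 to 20 micrometre range pass through an average capillary, which is consistent with the article's emphasis on the 5-micrometre robots.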

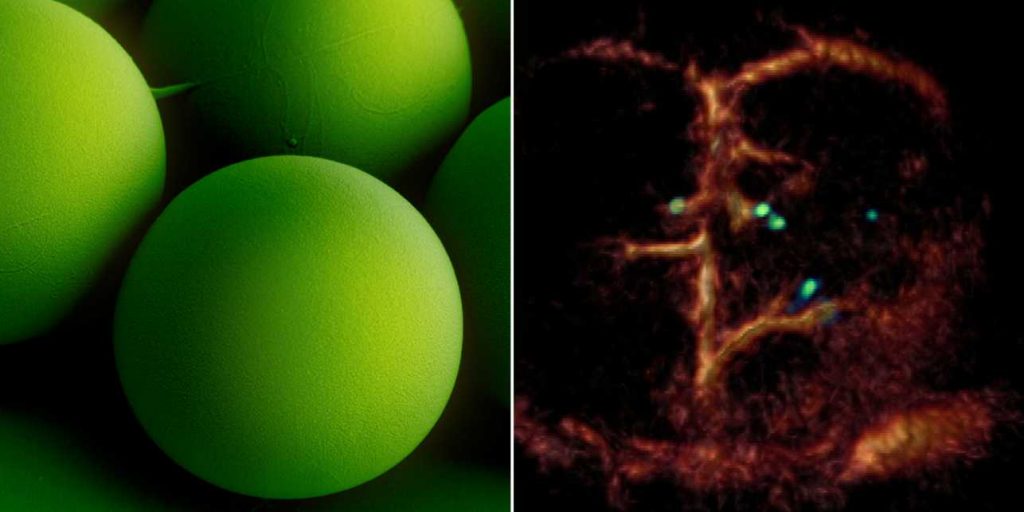

A breakthrough: Tiny circulating microrobots, which are as small as red blood cells (left picture), were visualised one-by-one in the blood vessels of mice with optoacoustic imaging (right picture). Image: ETH Zurich / Max Planck Institute for Intelligent Systems

The researchers also developed a dedicated optoacoustic tomography technology in order to actually detect the tiny robots one by one, in high resolution and in real time. This unique imaging method makes it possible to detect the tiny robots in deep and hard-to-reach regions of the body and brain, which would not have been possible with optical microscopy or any other imaging technique. The method is called optoacoustic because light is first emitted and absorbed by the respective tissue. The absorption then produces tiny ultrasound waves that can be detected and analysed to produce high-resolution volumetric images.
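The light-in, sound-out principle can be made concrete with a one-line time-of-flight relation: because the light pulse reaches the absorber almost instantly, the delay before the ultrasound arrives at a detector is roughly the distance divided by the speed of sound. The sketch below is not the reconstruction method used in the study. It assumes a uniform speed of sound of 1540 m/s, a commonly used average for soft tissue, and the function name is hypothetical.

```python
# Commonly assumed average speed of sound in soft tissue, in m/s.
SPEED_OF_SOUND_TISSUE_M_S = 1540.0


def absorber_distance_mm(arrival_time_us, speed_m_s=SPEED_OF_SOUND_TISSUE_M_S):
    """Distance from absorber to detector, given the one-way ultrasound delay.

    arrival_time_us: time between the light pulse and the detected
    ultrasound signal, in microseconds.
    """
    if arrival_time_us < 0:
        raise ValueError("arrival time must be non-negative")
    seconds = arrival_time_us * 1e-6
    return speed_m_s * seconds * 1e3  # metres to millimetres


# A signal arriving 5 microseconds after the pulse originates
# a little under 8 mm from the detector.
print(absorber_distance_mm(5.0))
```

A tomographic system combines many such delays, recorded by detectors at different positions, to localise absorbers in three dimensions.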

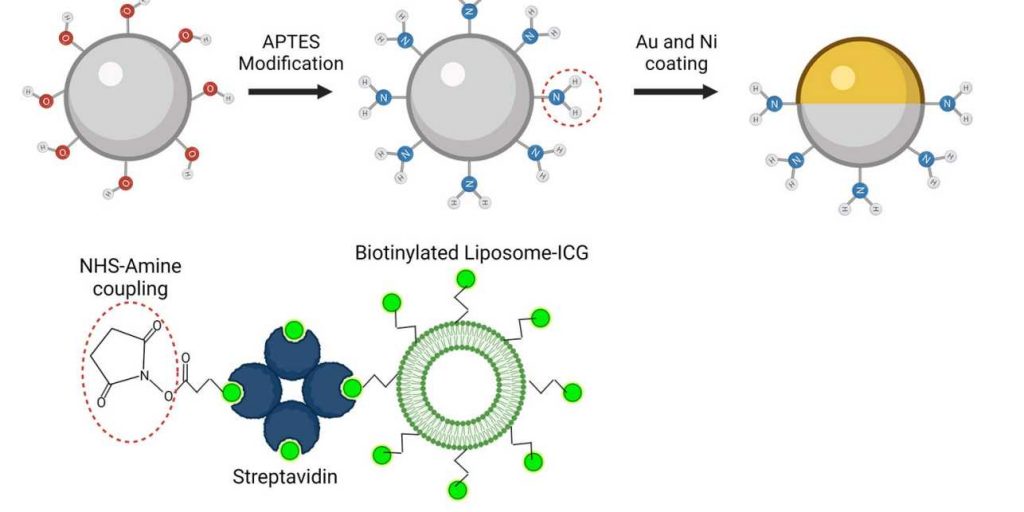

Janus-faced robots with gold layer

To make the microrobots highly visible in the images, the researchers needed a suitable contrast material. For their study, they therefore used spherical, silica particle-based microrobots with a so-called Janus-type coating. This type of robot has a very robust design and is well suited to complex medical tasks. It is named after the Roman god Janus, who had two faces. In the robots, the two halves of the sphere are coated differently. In the current study, the researchers coated one half of the robot with nickel and the other half with gold.

The spherical microrobots consist of silica-based particles and have been coated half with nickel (Ni) and half with gold (Au) and loaded with green-dyed nanobubbles (liposomes). In this way, they can be detected individually with the new optoacoustic imaging technique. Image: ETH Zurich / MPI-IS

“Gold is a very good contrast agent for optoacoustic imaging,” explains Razansky. “Without the golden layer, the signal generated by the microrobots is just too weak to be detected.” In addition to gold, the researchers also tested the use of small bubbles called nanoliposomes, which contained a fluorescent green dye that also served as a contrast agent. “Liposomes also have the advantage that you can load them with potent drugs, which is important for future approaches to targeted drug delivery,” says Wrede, the first author of the study. The potential uses of liposomes will be investigated in a follow-up study.

Furthermore, the gold also helps to minimise the cytotoxic effect of the nickel coating – after all, if in the future microrobots are to operate in living animals or humans, they must be made biocompatible and non-toxic, which is the subject of ongoing research. In the present study, the researchers used nickel as a magnetic drive medium and a simple permanent magnet to pull the robots. In follow-up studies, they want to test the optoacoustic imaging with more complex manipulations using rotating magnetic fields.

“This would give us the ability to precisely control and move the microrobots even in strongly flowing blood,” says Metin Sitti. “In the present study we focused on visualising the microrobots. The project was tremendously successful thanks to the excellent collaborative environment at the CLS that allowed combining the expertise of the two research groups at MPI-IS in Stuttgart for the robotic part and ETH Zurich for the imaging part,” Sitti concludes.