Workers have long confronted dangerous and dirty jobs. They’ve had to dig to the bottom of mines, or put themselves in harm’s way to decommission ageing nuclear sites. It’s time to make these jobs safer and more efficient, and robots are just starting to provide the necessary tools.

Mining

Mining has become much safer, yet workers continue to die every year in accidents across Europe, highlighting the perils of this genuinely needed industry. Everyday products rely on mined minerals, and 30 million jobs in the EU depend on their supply. Robots are a way to modernise an industry under constant pressure from falling commodity prices and the lack of safe access to hard-to-reach resources. Making mining greener is also a key concern.

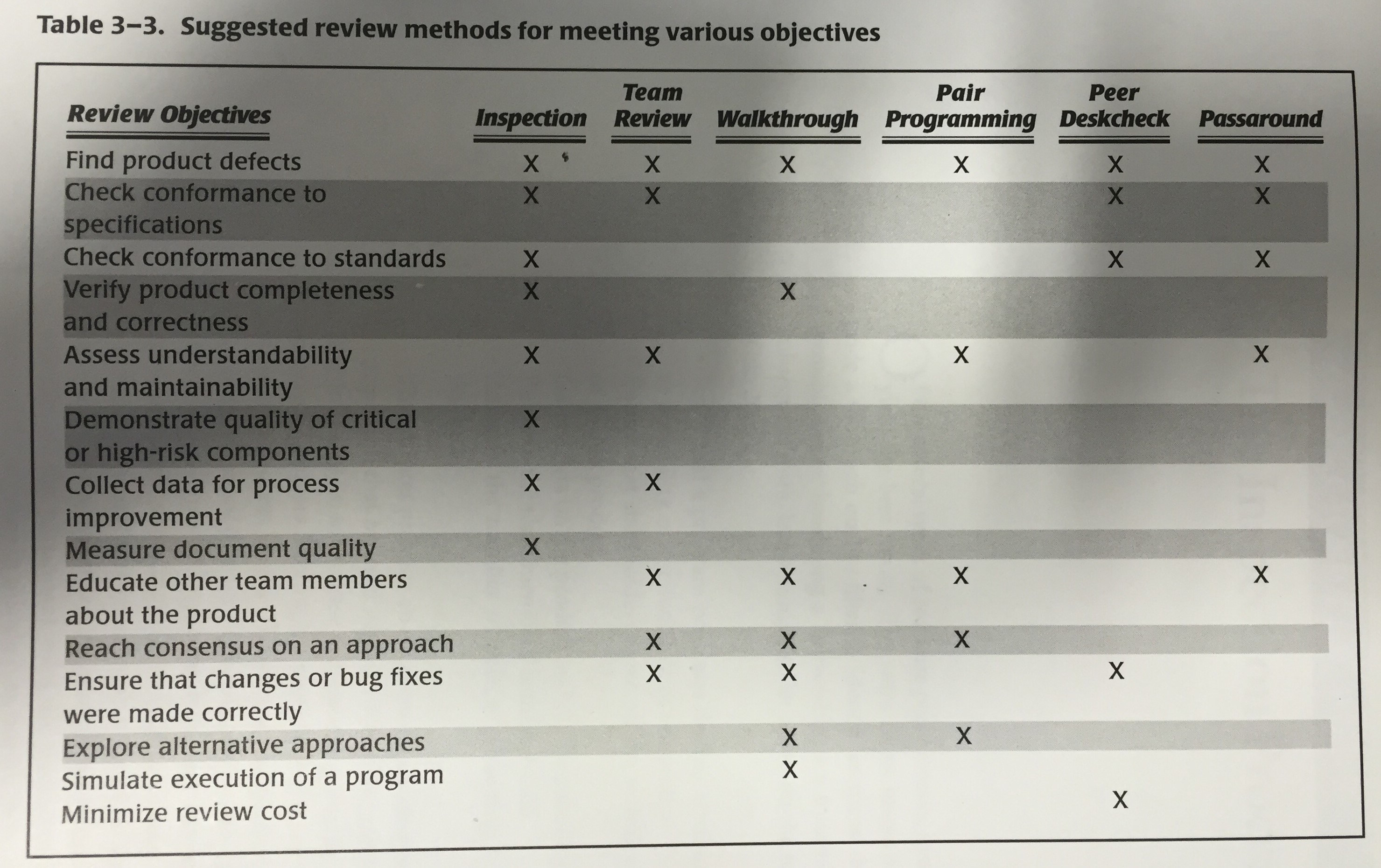

The vision is for people to move away from the rock face and onto the surface. In an ideal world where mining 4.0 is the norm, a central control room will run all operations in the mine, which will become a zero-entry zone for workers. Robots will take care of safety-critical tasks such as drilling, extracting, crushing and transporting excavated material. The mine could operate continuously while experts on the surface manage, monitor, optimise and maintain the systems, essentially making mining a high-tech job.

A recent report from IDC echoes this vision, saying: “The future of mining is to create the capability to manage the mine as a system – through an integrated web of technologies such as virtualization, robotics, Internet of Things (IoT), sensors, connectivity, and mobility – to command, control, and respond.”

And companies are betting their money on it: “69% of mining companies globally are looking for remote operation and monitoring centres, 56% at new mine methods, 29% at robotics and 27% at unmanned drones.”

Europe is also investing heavily, with several large projects over the past 15 years. The European project I2Mine, which finished last year, focused on technologies suitable for underground mining at depths greater than 1,500m. With €23M invested, it was the biggest EU RTD project funded in the mining sector.

Project Manager, Dr Horst Hejny, said: “The overall objective of the project was the development of innovative technologies and methods for sustainable mining at greater depths.”

Results of the project include sensors for material recognition, boundary-layer detection and sorting, as well as a new cutting head that allows continuous operation.

Full automation, and even remote operation, is still a long way off. Like any robotic system, automated mining will have to deal with a plethora of real-world challenges, and navigating underground mines or manipulating rock is very far from an idealised laboratory setting. As an intermediate step, researchers are looking to set up test sites where they can experiment with the technology outside the lab, before deployment in safety-critical areas. Juha Röning from the University of Oulu in Finland uses Oulu Zone, a race track that could prove useful for testing automated driving for the mining industry. His laboratory has previous experience in this area, having tested an automated dumper robot for excavated material. It’s an obvious application for a country that, according to Juha, “invented mining”. There is more to it than autonomous driving, however: his laboratory has been thinking about ways to improve the infrastructure around the deployment of mobile robots, including using advanced positioning systems to increase the precision of robot tracking and control.

Another test site, RACE, which stands for Remote Applications in Challenging Environments, was recently opened by the UK Atomic Energy Authority. The facility conducts R&D and commercial activities focused on developing robots and autonomous systems for challenging environments.

On its website, RACE explains its focus on ‘challenging environments’: “Challenging Environments exist in numerous sectors, nuclear, petrochemical, space exploration, construction and mining are examples. The technical hurdle is different for different physical environments and includes radiation, extreme temperature, limited access, vacuum and magnetic fields, but solutions will have common features. The commercial imperative is to enable safe and cost efficient operations.”

Rather than developing full turnkey solutions for mines, many European companies have been providing automation solutions for very specific tasks. Swedish company Sandvik, for example, demonstrated a fully automated LHD (load-haul-dump) vehicle.

Atlas Copco, also based in Sweden, has its own autonomous LHD system called Scooptram.

Polish company KGHM, a leader in copper and silver production, has been deeply involved in many R&D projects across Europe. Its mines in Lubin and Polkowice-Sieroszowice have served as test sites for recent developments. KGHM and the Swedish mining companies Boliden and LKAB joined forces with several major global suppliers and academia to develop a common vision for the future of mining, covering 2011 to 2020.

The resulting report discusses how to make the deep mining of the future “safer, leaner and greener.” The short answer: “we need an innovative organisation that attracts talented young men and women to meet the grand challenges and opportunities of future mineral supply.” They add, however, that “by 2030 we will not yet have achieved invisible mining, zero waste, or the fully intelligent, automated mine without any human presence”. More time is needed.

Robotics technology also opens a new frontier, making it possible to mine areas beyond human reach. The new push is towards mining the deep sea, and even space, in a responsible manner. UK-based company Soil Machine Dynamics Ltd recently developed three vehicles for Nautilus Minerals that operate at depths of up to 2,500m on seafloor massive sulphide (SMS) deposits. The subsea mining machines weigh up to 310t and have vessel-based power and control systems, pilot consoles, umbilical systems and launch and recovery systems.

As for the space race, asteroids provide an untapped resource for metallic elements such as iron, nickel and cobalt. Although space robots have been shown to navigate and drill in space, scaling up to meaningful extraction quantities will be a challenge. And it’s still unclear whether the returns from space mining will justify its cost and complexity.

Nuclear Decommissioning

Like mining, nuclear decommissioning requires zero-entry operations. Across Europe, there are plans to close up to 80 civilian nuclear power reactors in the next ten years.

“The total cost of nuclear decommissioning in the UK alone is currently estimated at £60 billion. Analysis by the National Nuclear Laboratory indicates that 20% of the cost of complex decommissioning will be spent on RAS (Robotics and Autonomous Systems) technology.” – RAS UK Strategy.

Designing robots for the nuclear environment is especially challenging because they need to be robust, reliable and safe, while also withstanding high levels of radiation.

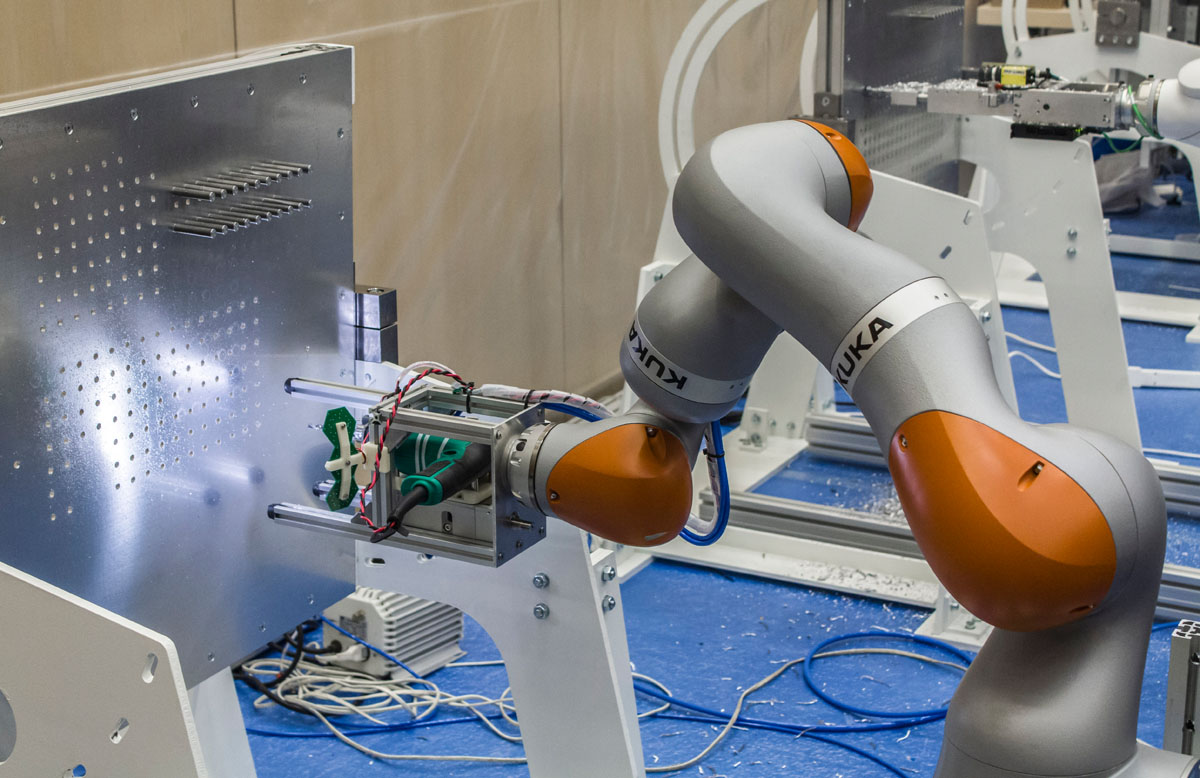

In 2012, one of the high-hazard plants at Sellafield, UK, used a custom-made, remotely operated robot arm to reduce the risk posed by the 60-year-old First Generation Magnox Storage Pond. The arm had to isolate and remove redundant pipework in a high-radiation area, and then clean and seal a contaminated pond wall. The redundant pipework was first isolated with special sealants and then removed remotely. The robotic arm then scabbled the pond wall, stripping away its surface layer, and applied a specialist coating to seal the concrete.

Over 80,000 hours of testing in a separate test facility were needed before the team was confident the robot would perform flawlessly on such a high-risk task.

The Sellafield site has since added a “Riser” (Remote Intelligence Survey Equipment for Radiation) quadcopter developed by Blue Bear Systems Research Ltd and Createc Ltd. It is equipped with a system that allows it to map both the interior of a building and its radiation levels.

Small underwater vehicles have also been deployed in the nuclear storage ponds. Supplied by James Fisher Nuclear, these robots build on existing technology developed for inspection work in the offshore oil and gas industry. They were initially sent in to image the environment but are now used for basic manipulation tasks.

In Marcoule, France, Maestro, a teleoperated robot arm, is being used to decommission a site. The robot can laser-cut 4m x 2m metal structures into smaller pieces. Humans could do this faster, but 30 minutes on the site would be lethal.

And with so many robot arms entering the field, KUKA has also developed a suite of robots specifically for nuclear decommissioning.

Given the high-risk nature of nuclear decommissioning, traditional robotic solutions seem to be favoured for now, as they are tested and understood. However, a new wave of innovative solutions is also making its way to the market.

Swiss startup Rovenso, for example, developed ROVéo, a robot whose unique four-wheel design allows it to climb over obstacles up to two-thirds its height. They aim to produce a larger-scale model equipped with a robotic arm for use in dismantling nuclear plants.

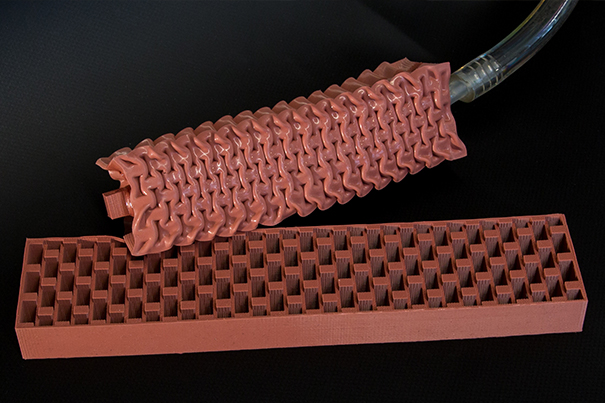

OC Robotics in the UK is also working closely with the nuclear industry to build robots with greater reach than conventional industrial robot arms. Their snake arms can be fitted with lasers or other tools, and can slither through nearly any structure.

Andy Graham, Technical Director at OC Robotics, said: “Robots have the potential to improve everyone’s quality of life. Reducing the need for people to enter hazardous workplaces and confined spaces is central to what we do at OC Robotics, whether the application is in manufacturing industries, inspection and maintenance in the oil and gas sector, or decommissioning nuclear power stations. Users are becoming more and more aware of the potential for robots to enable their workers to work more comfortably and safely from outside these spaces”.

“The Lasersnake 2 project, led by OC Robotics and part-funded by the UK government, has developed and is currently testing a snake-arm robot equipped with a powerful laser capable of cutting effortlessly through 60mm thick steel. The same snake-arm robot can be equipped with a gripper enabling it to lift 25kg at a reach of about 5m, and has also been demonstrated underwater in an environment similar to a nuclear storage pond. With this cutting capability and the ability to snake through small holes and around obstacles, this enables ‘keyhole surgery’ for nuclear decommissioning, leaving containment structures, shielding and cells intact while dismantling the processing equipment inside them”.

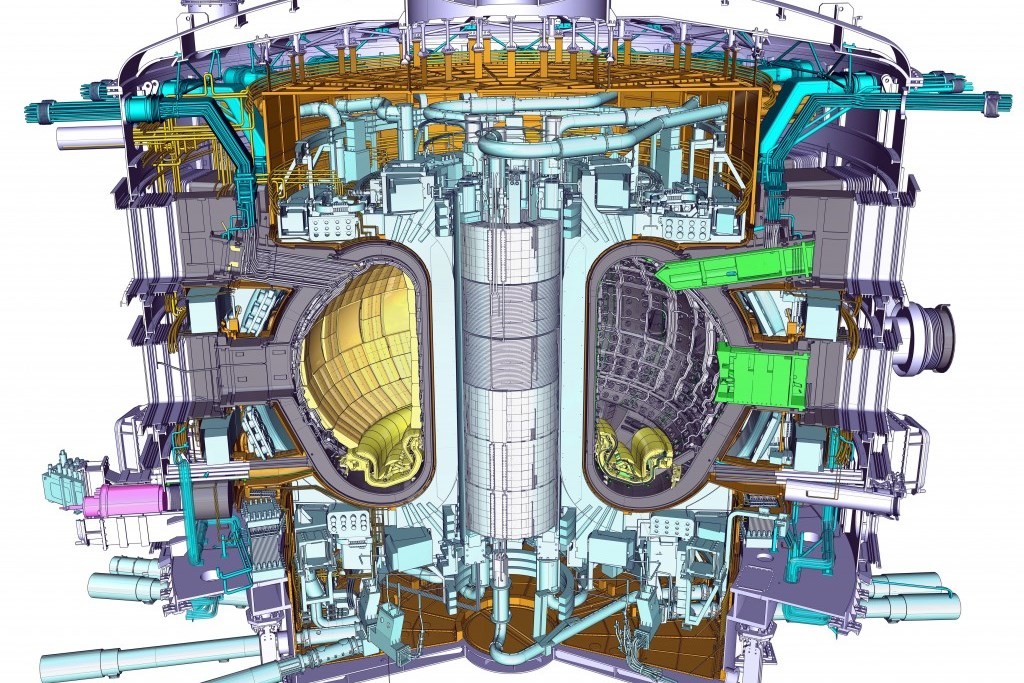

Beyond decommissioning, robots are also being used in new energy infrastructure, including ITER, the next-generation fusion research device being built in the south of France, which aims to achieve a ‘burning plasma’, one of the steps required for fusion power. Remote handling is critical to ITER, says project partner RACE.

“Cutting and welding large diameter stainless steel pipes is a fundamental process for remote maintenance of ITER.” RACE has been developing concepts to remotely replace the beam sources of the neutral beam heating system, which injects high-energy beams of neutral particles to heat the plasma to 200 million °C.

According to its website, “A monorail crane was designed with high lift in a compact space, with an innovative control system for high radiation environments. The beam line transporter operates along the full length of the beam line, like an industrial production line. It has a load capacity of many tonnes, haptic feedback and is fully remotely operated and remotely recoverable.”

“ITER provides some seriously challenging environments for robotics: high radiation dose; elevated temperatures; limited access; large, compact equipment and some very challenging inspection and maintenance procedures to implement fast and reliably, without failure.”

These projects are just part of a worldwide effort to improve the safety of nuclear applications. Japan has also been building up its robot fleet in response to the Fukushima disaster and the clean-up efforts still ahead. These robots take different shapes and forms depending on their task: they can blast dry ice, inspect vents, cut pipes and remove debris.

Competitions like the recent DARPA Robotics Challenge, or the European Robotics League (ERL) Emergency Challenge, have been driving the state of the art forward. ERL Emergency is an outdoor, multi-domain robotics competition inspired by the 2011 Fukushima accident. The challenge requires teams of land, underwater and flying robots to work together to survey the scene, collect environmental data and identify critical hazards.

“Robotics competitions are not just for testing a robot outside a laboratory, or engaging with an audience; they are events that get people together, inspire younger generations and facilitate cooperation and exchange of knowledge between multiple research groups. Robotics competitions provide a perfect platform for challenging, developing and showcasing robotics technologies,” said Marta Palau, ERL Emergency Project Manager.

Similar scenarios are also being explored by Juha Röning from the University of Oulu in Finland. With support from the Nordic Nuclear Safety Research Agency, he aims to use flying robots to map radiation levels after a nuclear accident. He says: “In the future, flying robots could be used to map radiation levels, and then a second team of ground robots could be sent in for the cleanup”.

Overall, robots are helping workers avoid dirty and dangerous areas, while making the work more efficient and potentially more fulfilling. We are only at the early stages, however, as many of these high-risk tasks require years of testing before new technologies can be deployed.