This guide to ‘colliding opposite disciplines with your research’ is intended to help students and researchers, or indeed anyone looking for ideas on how to approach research or for methods of designing concepts and solutions, to broaden their thinking and approach to research. The guide focuses mainly on science and engineering and on the idea of collaborating with other, distinct disciplines. However, the overall principles apply to any multidisciplinary research.

The guide is divided into three sections:

- Is it all just hot STEAM?

- When worlds collide.

- The common goal – how can I develop my multidisciplinary research?

This guide will help to open new ways of thinking about research and highlight the ‘unseen’ benefits of multidisciplinary approaches, showing how they can be extremely advantageous and lead to better delivery. It will help you to contemplate how, when, and why you should open up your research to other disciplines.

Is it all just hot STEAM?

If we think of the Arts and Science, I believe that most people would see them as opposites. People are either ‘arty’ or ‘science-y’. A large factor in this thinking may be the popular idea that people are left-brained or right-brained, where one side of the brain is dominant. Left-brained thinkers are said to be methodical and analytical, whereas right-brained thinkers are said to be creative or artistic. What would happen, though, if you were able to work across these two supposedly separate sides and create whilst you analyse?

Do we need to move away from the idea that art and science don’t belong together? I would firmly argue that we do.

The acronym STEM is widely known to stand for Science, Technology, Engineering and Mathematics. However, another acronym, possibly less well known, is STEAM: Science, Technology, Engineering, (liberal) Arts and Mathematics. The ‘A’ represents all aspects of the arts, such as drama, music, design, media and visual arts. STEM focuses primarily on scientific concepts, whilst STEAM investigates the same concepts through inquiry and problem-based learning methods used in an imaginative process.

The application of the arts to science is not a new practice. Leonardo da Vinci is an early example of someone using STEAM to make discoveries and explain them to several generations.

There are many advantages to applying the arts to science and engineering. For example, would greater application of the arts make more young people want to do science and engineering, because it looks more visually attractive and significant? Could it help them develop a love for the STEM subjects and support them in seeing these subjects as more relatable than a Bunsen burner in a school laboratory?

A prime, and very recent, example of STEAM being applied was the crewed SpaceX launch of the Dragon capsule in 2020. This launch represents the essence of advanced technology that is at the forefront of both science and engineering development and technological aesthetics. From the design of the sleek logos, through to the futuristic spacesuits and even the matt black launch platform, it was clear throughout this launch that every single detail had been considered.

Some may argue that by adding this artistic touch to technology, the ‘nitty-gritty science’ behind the design and aesthetic becomes lost. If something doesn’t ‘look’ complicated and complex, can it really be advanced or sophisticated? Well, yes! Have you ever heard the saying “when someone makes something look simple, they have spent hours perfecting it”? Take, for example, the spacesuits and touch-screen controls of the SpaceX Dragon launch. The suits look as though they were designed for the set of a Hollywood rendition of space travel: they appear to have no bulky oxygen inlets or cumbersome pressurised helmets, and they are fitted to individual body contours.

To the layman, these may simply look like pleasant visuals. To the trained eye and relevantly knowledgeable mind, however, the very fact that these two components of the flight look so streamlined and simple not only nods to but reinforces the point that all aspects of the flight were saturated in superior engineering from hundreds of magnificent minds. That is the beauty of STEAM.

When worlds collide

There are several advantages to multidisciplinary research. Now more than ever, there is a focus on research becoming more multidisciplinary. As the world is in the fourth industrial revolution (Industry 4.0), with constant and significant advances in technology and AI, there is an increased necessity for research to meet complex and substantial global challenges in science and engineering. Consider a real-world problem, either one you know something about or one you are researching. When you consider all aspects of this problem and its application, you will notice that, repeatedly, it cannot be confined to one single discipline.

A multidisciplinary environment in research allows different theories, methodologies, modes of thinking (convergent, divergent and lateral) and perspectives to come together for one common goal and purpose. The beauty of multidisciplinary research lies in this divergence of thinking, approaches, and theories, which provides a much broader context in which to create innovative and bespoke discoveries and solutions.

In research, students or academics often end up working in quite a niche area, which of course has great advantages of its own, as one can become a leading expert in a particular field. However, when a piece of research needs expert knowledge and experience from another field or discipline, it can be difficult for an individual to become skilled or versed enough (often within pressing timelines) to lead on that area of the work. Here, a multidisciplinary environment or team allows other disciplines to contribute their skills and knowledge without every researcher having to master each other’s expertise; they need only understand it.

Often, an effective way to further show value and demonstrate a concept is to lead by example…

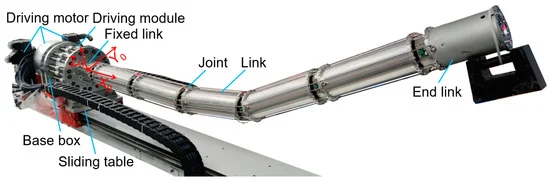

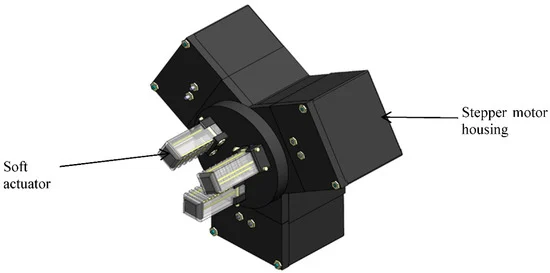

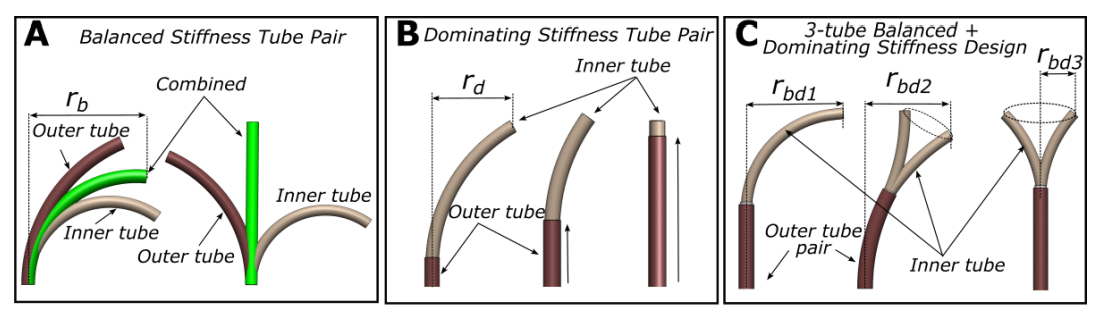

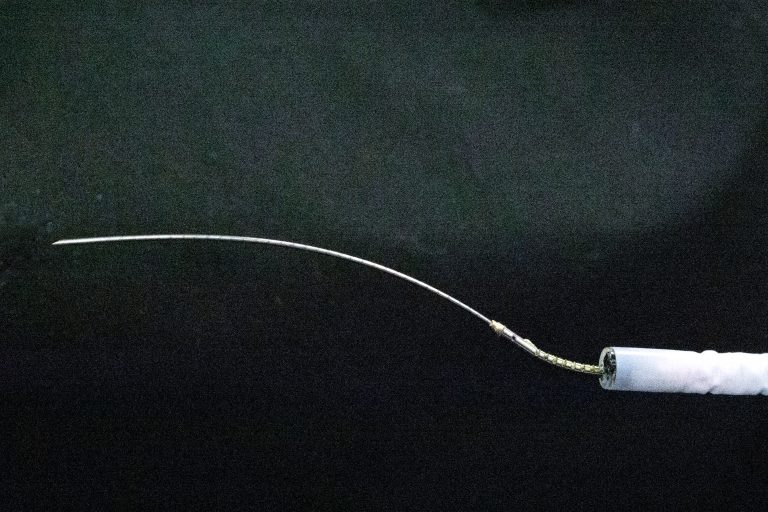

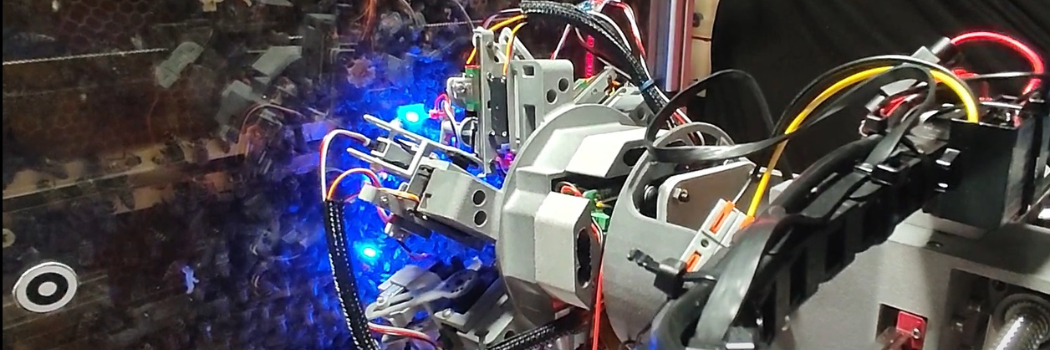

Consider the discipline of robotics. A robot represents a wide array of disciplines.

In a true multidisciplinary approach, there is great research capability in designing and building new robotic systems, which offers the ability to apply bespoke robotics-based solutions to a range of applications.

Take the case of social and healthcare robotics. Here, robotics draws on aspects of electrical and mechanical engineering, material science, psychology, and medicine. In these environments, robots are much more than circuitry and AI. Other disciplines are brought in by the end-user requirements, the operational environments, and bespoke requirements or purposes.

Design in robotics is often overlooked. However, it is extremely important in creating robotic solutions that meet both end-user and client requirements. The physical appearance of a robot can affect presumptions and expectations of how a robotic system should or will perform.

Aesthetics influence our lives and the decisions we make more than we are conscious of. Collaboration between the arts/design and robotics can be particularly effective in increasing trust in robots. Establishing trust in human-robot interaction (HRI) is especially pertinent where robots are used in personal and healthcare roles.

For several years, it has been recognised and understood that trust is a crucial aspect of effective human-robot interaction for social robots as it closes the discipline gap between human psychology and artificial intelligence (AI).

A robot’s physical appearance can positively or negatively affect a human’s interaction with it. Just as humans can make a decision upon first meeting someone, a human can make a similar decision based on the first impression when interacting with a robot for the first time. For this reason, co-design improves the engagement and the quality of the interaction between (multidisciplinary) researchers and the end user. In the case of healthcare or surgical robotics, it should be instinctive for a researcher to involve people from a medical background, such as surgeons and/or medical device developers, to co-create a robotic solution fitted to the healthcare issue. To create a solution ‘sightless’ to the knowledge and experience of real-life practice, and to the key issues that must be considered, would be to develop from a position of ignorance of both the challenge and its effective solution.

Sustainability is a major current area of research: sustainability for the world, and sustainability for technology research and development. When considering sustainability in robotic technology development, the factors to take into account include the choice and quantity of materials, the possibility of using recycled materials (e.g. in soft robotics), and effective design that limits single-use robots and inefficient processes. This research topic is truly multidisciplinary in nature. How can it be considered or deciphered? It can be broken down in the following way:

- Considerations – material choices, quantity of materials, effective & efficient design.

- Following considerations – where do the materials come from, how does the technology affect the environment and society.

- Draw out research points – lithium batteries, mining, supporting or displacing job roles, damage to the environment, displacement of local communities.

- What disciplines do we need? – material scientists, chemical scientists, robotics engineers, policy makers, lawyers, sociologists, economists.

The common goal – how can I develop my multidisciplinary research?

A multidisciplinary research environment may be outside of your established or traditional research approaches or considerations. If so, several questions about working in these types of collaborations may immediately come to mind. For example, how do you put together a multidisciplinary research group? Will the terminology and language across our disciplines be the same? How will other disciplines approach the problem – ‘will there be too many cooks’?

With change, new methodologies and new approaches to working (especially if you have been set in a certain way of doing things for many years), it is common for there to be some initial challenges and a period of adaptation.

If you are interested in working in or creating a multidisciplinary team, here are some tips for developing this research approach.

- Identify and acknowledge your dominant discipline/perspective (and all that it encompasses) – this can seem like an obvious point to make. However, considering your discipline will make you think of the fields that sit with it, and this will help you to identify disciplines and fields that are completely outside your area. It will also help you to identify groups or researchers that do not sit within your department, as well as researchers in another group within your department whom you had not considered working with before.

- Consider the research you are conducting. What are the underlying theories? Where are the natural overlaps? This can create a research ecosystem that will help you identify areas that you and others can co-create within. As a brief example, physics provides the fundamental basis for biology.

- Identify the areas of knowledge you are lacking in. What areas of knowledge do you need strengthened? Where are the gaps in your research? This will help you to identify what type of expertise you need and where to get it from.

- Next, identify who the end users are and what the applications are. This will help you think of the bigger picture of your research and what expertise should have input into the work. For example, in the case of healthcare, surgeons should have input into medical device robotics, and in the case of assisted living, the end user must provide input about their requirements and daily living situation.

- Lastly, make sure you know what you are talking about. Put together a short brief of the purpose of the work and an outline addressing the key points listed here. Ensure this conveys the purpose and end goal of the work, the gaps, and where the other disciplines can add value. Identify the group or individual you think could help to create a beneficial multidisciplinary team, and contact them to present this information. Of course, in certain circumstances this can lead to the development of research grants!

Recognise that there are certain points to consider, such as:

- When collaborating with people from other disciplines, there can be initial hurdles to overcome in conveying your ideas to them, in the language they speak and in the ways they communicate. Take time to familiarise yourself with others’ ways of doing things, their language and their methods of communicating.

- Other disciplines may not work in a factually driven way; their approach may be more creative or holistic. Be open minded, and be open to adapting to new ways of doing things. This is advantageous to you too!

- It may take some brainstorming sessions and design workshops (for instance) to get some momentum going in the work. However, take time to reflect on what has been done so far and always move forward with the same purpose and goal. Remember there was a reason that you created this team. Reflect on this.

Lastly, do not let these considerations stop or hinder your ideas of working in a multidisciplinary environment. There is so much to learn in these types of research teams, and it is guaranteed to be interesting. There is no research quite like the output from a team that is not confined to one discipline.

So, the next time you are designing, creating, or innovating, consider: am I letting off enough STEAM, and are worlds colliding?

This work by Dr Karen Donaldson is licensed under a Creative Commons Attribution licence 4.0.