Tesla’s fatal crash

Tesla can do better than its current public response to the recent fatal crash involving one of its vehicles. I would like to see more introspection, credibility, and nuance.

Introspection

Over the last few weeks, Tesla has blamed the deceased driver and a damaged highway crash attenuator while lauding the performance of Autopilot, its SAE level 2 driver assistance system that appears to have directed a Model X into the attenuator. The company has also disavowed its own responsibility: “The fundamental premise of both moral and legal liability is a broken promise, and there was none here.”

In Tesla’s telling, the driver knew he should pay attention, he did not pay attention, and he died. End of story. The same logic would seem to apply if the driver had hit a pedestrian instead of a crash barrier. Or if an automaker had marketed an outrageously dangerous car accompanied by a warning that the car was, in fact, outrageously dangerous. In the 1980 comedy Airplane!, a television commentator dismisses the passengers on a distressed airliner: “They bought their tickets. They knew what they were getting into. I say let ‘em crash.” As a rule, it’s probably best not to evoke a character in a Leslie Nielsen movie.

It may well turn out that the driver in this crash was inattentive, just as the US National Transportation Safety Board (NTSB) concluded that the Tesla driver in an earlier fatal Florida crash was inattentive. But driver inattention is foreseeable (and foreseen), and “[j]ust because a driver does something stupid doesn’t mean they – or others who are truly blameless – should be condemned to an otherwise preventable death.” Indeed, Ralph Nader’s argument that vehicle crashes are foreseeable and could be survivable led Congress to establish the National Highway Traffic Safety Administration (NHTSA).

Airbags are a particularly relevant example. Airbags are unquestionably a beneficial safety technology. But early airbags were designed for average-size male drivers—a design choice that endangered children and lighter adults. When this risk was discovered, responsible companies did not insist that because an airbag is safer than no airbag, nothing more should be expected of them. Instead, they designed second-generation airbags that are safer for everyone.

Similarly, an introspective company—and, for that matter, an inquisitive jury—would ask whether and how Tesla’s crash could have been reasonably prevented. Tesla has appropriately noted that Autopilot is neither “perfect” nor “reliable,” and the company is correct that the promise of a level 2 system is merely that the system will work unless and until it does not. Furthermore, individual autonomy is an important societal interest, and driver responsibility is a critical element of road traffic safety. But it is because driver responsibility remains so important that Tesla should consider more effective ways of engaging and otherwise managing the imperfect human drivers on which the safe operation of its vehicles still depends.

Such an approach might include other ways of detecting driver engagement. NTSB has previously expressed its concern over using only steering wheel torque as a proxy for driver attention. And GM’s own level 2 system, Super Cruise, tracks driver head position.

Such an approach may also include more aggressive measures to deter distraction. Tesla could alert law enforcement when drivers are behaving dangerously. It could also distinguish safety features from convenience features—and then more stringently condition convenience on the concurrent attention of the driver. For example, active lane keeping (which might ping pong the vehicle between lane boundaries) could enhance safety even if active lane centering is not operative. Similarly, automatic deceleration could enhance safety even if automatic acceleration is inoperative.

NTSB’s ongoing investigation is an opportunity to credibly address these issues. Unfortunately, after publicly stating its own conclusions about the crash, Tesla is no longer formally participating in NTSB’s investigation. Tesla faults NTSB for this outcome: “It’s been clear in our conversations with the NTSB that they’re more concerned with press headlines than actually promoting safety.” That is not my impression of the people at NTSB. Regardless, Tesla’s argument might be more credible if it did not continue what seems to be the company’s pattern of blaming others.

Credibility

Tesla could also improve its credibility by appropriately qualifying and substantiating what it says. Unfortunately, Tesla’s claims about the relative safety of its vehicles still range from “lacking” to “ludicrous on their face.” (Here are some recent views.)

Tesla repeatedly emphasizes that “our first iteration of Autopilot was found by the U.S. government to reduce crash rates by as much as 40%.” NHTSA reached its conclusion after (somehow) analyzing Tesla’s data—data that both Tesla and NHTSA have kept from public view. Accordingly, I don’t know whether the underlying math actually took only five minutes, but I can attempt some crude reverse engineering to complement the thoughtful analyses already done by others.

Let’s start with NHTSA’s summary: The Office of Defects Investigation (ODI) “analyzed mileage and airbag deployment data supplied by Tesla for all MY 2014 through 2016 Model S and 2016 Model X vehicles equipped with the Autopilot Technology Package, either installed in the vehicle when sold or through an OTA update, to calculate crash rates by miles travelled prior to and after Autopilot installation. [An accompanying chart] shows the rates calculated by ODI for airbag deployment crashes in the subject Tesla vehicles before and after Autosteer installation. The data show that the Tesla vehicles crash rate dropped by almost 40 percent after Autosteer installation”—from 1.3 to 0.8 crashes per million miles.
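The "almost 40 percent" figure can be checked directly from the two rates that NHTSA reports. A minimal Python sketch of that arithmetic follows; the function and variable names are illustrative and do not come from NHTSA or Tesla.

```python
def relative_reduction(rate_before: float, rate_after: float) -> float:
    """Fractional drop from rate_before to rate_after."""
    return (rate_before - rate_after) / rate_before


# Airbag deployment crashes per million miles, as quoted from NHTSA's summary.
rate_before_autosteer = 1.3
rate_after_autosteer = 0.8

reduction = relative_reduction(rate_before_autosteer, rate_after_autosteer)
print(f"Relative reduction: {reduction:.1%}")
```

The result is a little under 40 percent, consistent with NHTSA's wording. It says nothing about what caused the decline.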

This raises at least two questions. First, how do these rates compare to those for other vehicles? Second, what explains the asserted decline?

Comparing Tesla’s rates is especially difficult because of a qualification that NHTSA’s report mentions only once and that Tesla’s statements do not acknowledge at all. The rates calculated by NHTSA are for “airbag deployment crashes” only, a category that NHTSA does not generally track for nonfatal crashes.

NHTSA does estimate rates at which vehicles are involved in crashes. (For a fair comparison, I look at crashed vehicles rather than crashes.) With respect to crashes resulting in injury, 2015 rates were 0.88 crashes per million miles for light trucks and 1.26 for passenger cars. And with respect to property-damage only crashes, they were 2.35 for light trucks and 3.12 for passenger cars. This means that, depending on the correlation between airbag deployment and crash injury (and accounting for the increasing number and sophistication of airbags), Tesla’s rates could be better than, worse than, or comparable to these national estimates.

Airbag deployment is a complex topic, but the upshot is that, by design, airbags do not always inflate. An analysis by the Pennsylvania Department of Transportation suggests that airbags deploy in less than half of the airbag-equipped vehicles that are involved in reported crashes, which are generally crashes that cause physical injury or significant property damage. (The report’s shift from reportable crashes to reported crashes creates some uncertainty, but let’s assume that any crash that results in the deployment of an airbag is serious enough to be counted.)

Data from the same analysis show about two reported crashed vehicles per million miles traveled. Assuming a deployment rate of 50 percent suggests that a vehicle deploys an airbag in a crash about once every million miles that it travels, which is roughly comparable to Tesla’s post-Autopilot rate.
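The back-of-the-envelope estimate above can be written out in a few lines of Python. This is only a sketch of the reasoning in the text: the 50 percent deployment share is the stated assumption, and the variable names are illustrative.

```python
# Figures taken from the text; variable names are illustrative.
reported_crashed_vehicles_per_million_miles = 2.0  # Pennsylvania analysis, approximate
assumed_airbag_deployment_share = 0.5              # assumption: "less than half", taken as 50 percent

airbag_deployments_per_million_miles = (
    reported_crashed_vehicles_per_million_miles * assumed_airbag_deployment_share
)

# NHTSA's figures for Tesla, in airbag deployment crashes per million miles.
tesla_rate_before_autosteer = 1.3
tesla_rate_after_autosteer = 0.8

print(f"Estimated general rate: {airbag_deployments_per_million_miles:.1f} per million miles")
print(f"Tesla before / after:   {tesla_rate_before_autosteer} / {tesla_rate_after_autosteer}")
```

Under these assumptions, the general estimate falls between Tesla's before and after rates, which is why the comparison is inconclusive.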

Indeed, at least two groups with access to empirical data—the Highway Loss Data Institute and AAA – The Auto Club Group—have concluded that Tesla vehicles do not have a low claim rate (in addition to having a high average cost per claim), which suggests that these vehicles do not have a low crash rate either.

Tesla offers fatality rates as another point of comparison: “In the US, there is one automotive fatality every 86 million miles across all vehicles from all manufacturers. For Tesla, there is one fatality, including known pedestrian fatalities, every 320 million miles in vehicles equipped with Autopilot hardware. If you are driving a Tesla equipped with Autopilot hardware, you are 3.7 times less likely to be involved in a fatal accident.”

In 2016, there was one fatality for every 85 million vehicle miles traveled—close to the number cited by Tesla. For that same year, NHTSA’s FARS database shows 14 fatalities across 13 crashes involving Tesla vehicles. (Ten of these vehicles were model year 2015 or later; I don’t know whether Autopilot was equipped at the time of the crash.) By the end of 2016, Tesla vehicles had logged about 3.5 billion miles worldwide. If we accordingly assume that Tesla vehicles traveled 2 billion miles in the United States in 2016 (less than one tenth of one percent of US VMT), we can estimate one fatality for every 150 million miles traveled.
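For readers who want to retrace this estimate, here is a short Python sketch. The 2 billion US miles is the assumption made in the text rather than a measured value, and the variable names are illustrative.

```python
MILLION = 1_000_000

tesla_fatalities_2016 = 14           # FARS count cited in the text
assumed_tesla_us_miles_2016 = 2e9    # assumption from the text, not a measured value
us_miles_per_fatality_2016 = 85 * MILLION

tesla_miles_per_fatality = assumed_tesla_us_miles_2016 / tesla_fatalities_2016
ratio_estimate = tesla_miles_per_fatality / us_miles_per_fatality_2016

# Tesla's own claim, for comparison: 320 million vs. 86 million miles per fatality.
tesla_claimed_ratio = 320 / 86

print(f"Estimated Tesla miles per fatality: {tesla_miles_per_fatality / MILLION:.0f} million")
print(f"Ratio to the national figure:       {ratio_estimate:.2f}")
print(f"Ratio in Tesla's statement:         {tesla_claimed_ratio:.1f}")
```

The division gives roughly 143 million miles per fatality, which the text rounds to 150 million. Either way, the ratio to the national figure is well below the 3.7 in Tesla's statement, although the two calculations cover different vehicles and periods.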

It is not surprising if Tesla’s vehicles are less likely to be involved in a fatal crash than the US vehicle fleet in its entirety. That fleet, after all, has an average age of more than a decade. It includes vehicles without electronic stability control, vehicles with bald tires, vehicles without airbags, and motorcycles. Differences between crashes involving a Tesla vehicle and crashes involving no Tesla vehicles could therefore have nothing to do with Autopilot.

More surprising is the statement that Tesla vehicles equipped with Autopilot are much safer than Tesla vehicles without Autopilot. At the outset, we don’t know how often Autopilot was actually engaged (rather than merely equipped), we don’t know the period of comparison (even though crash and injury rates fluctuate over the calendar year), and we don’t even know whether this conclusion is statistically significant. Nonetheless, on the assumption that the unreleased data support this conclusion, let’s consider three potential explanations:

First, perhaps Autopilot is incredibly safe. If we assume (again, because we just don’t know otherwise) that Autopilot is actually engaged for half of the miles traveled by vehicles on which it is installed, then a 40 percent reduction in airbag deployments per million miles really means an 80 percent reduction in airbag deployments while Autopilot is engaged. Pennsylvania data show that about 20 percent of vehicles in reported crashes are struck in the rear, and if we further assume that Autopilot would rarely prevent another vehicle from rear-ending a Tesla, then Autopilot would essentially need to prevent every other kind of crash while engaged in order to achieve such a result.
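The step from 40 percent to 80 percent can be made explicit with a small Python sketch. It assumes, as the text does, that Autopilot is engaged for half of the miles. It adds one simplification: that the crash rate on miles without Autopilot engaged is unchanged. The function name is illustrative.

```python
def reduction_while_engaged(overall_reduction: float, engaged_share: float) -> float:
    """Reduction needed on engaged miles to produce the overall reduction,
    assuming the crash rate on non-engaged miles does not change."""
    return overall_reduction / engaged_share


overall_reduction = 0.40        # NHTSA's "almost 40 percent"
assumed_engaged_share = 0.50    # assumption from the text
rear_struck_share = 0.20        # Pennsylvania data: vehicles struck in the rear

needed = reduction_while_engaged(overall_reduction, assumed_engaged_share)

# If Autopilot rarely prevents a Tesla from being rear-ended, this is the largest
# reduction it could achieve while engaged.
max_achievable = 1.0 - rear_struck_share

print(f"Reduction needed while engaged: {needed:.0%}")
print(f"Maximum achievable reduction:   {max_achievable:.0%}")
print(f"Share of other crashes to prevent: {needed / max_achievable:.0%}")
```

With these inputs, the needed reduction equals the maximum achievable one, which is the sense in which Autopilot would have to prevent essentially every other kind of crash.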

Second, perhaps Tesla’s vehicles had a significant performance issue that the company corrected in an over-the-air update at or around the same time that it introduced Autopilot. I doubt this—but the data released are as consistent with this conclusion as with a more favorable one.

Third, perhaps Tesla introduced or upgraded other safety features in one of these OTA updates. Indeed, Tesla added automatic emergency braking and blind spot warning about half a year before releasing Autopilot, and Autopilot itself includes side collision avoidance. Because these features may function even when Autopilot is not engaged and might not induce inattention to the same extent as Autopilot, they should be distinguished from rather than conflated with Autopilot. I can see an argument that more people will be willing to pay for convenience plus safety than for just safety alone, but I have not seen Tesla make this more nuanced argument.

Nuance

In general, Tesla should embrace more nuance. Currently, the company’s explicit and implicit messages regarding this fatal crash have tended toward the absolute. The driver was at fault—and therefore Tesla was not. Autopilot improves safety—and therefore criticism is unwarranted. The company needs to be able to communicate with the public about Autopilot—and therefore it should share specific and, in Tesla’s view, exculpatory information about the crash that NTSB is investigating.

Tesla understands nuance. Indeed, in its statement regarding its relationship with NTSB, the company noted that “we will continue to provide technical assistance to the NTSB.” Tesla should embrace a systems approach to road traffic safety and acknowledge the role that the company can play in addressing distraction. It should emphasize the limitations of Autopilot as vigorously as it highlights the potential of automation. And it should cooperate with NTSB while showing that it “believe[s] in transparency” by releasing data that do not pertain specifically to this crash but that do support the company’s broader safety claims.

For good measure, Tesla should also release a voluntary safety self-assessment. (Waymo and General Motors have.) Autopilot is not an automated driving system, but that is where Tesla hopes to go. And by communicating with introspection, credibility, and nuance, the company can help make sure the public is on board.

Robots in Depth with Frank Tobe

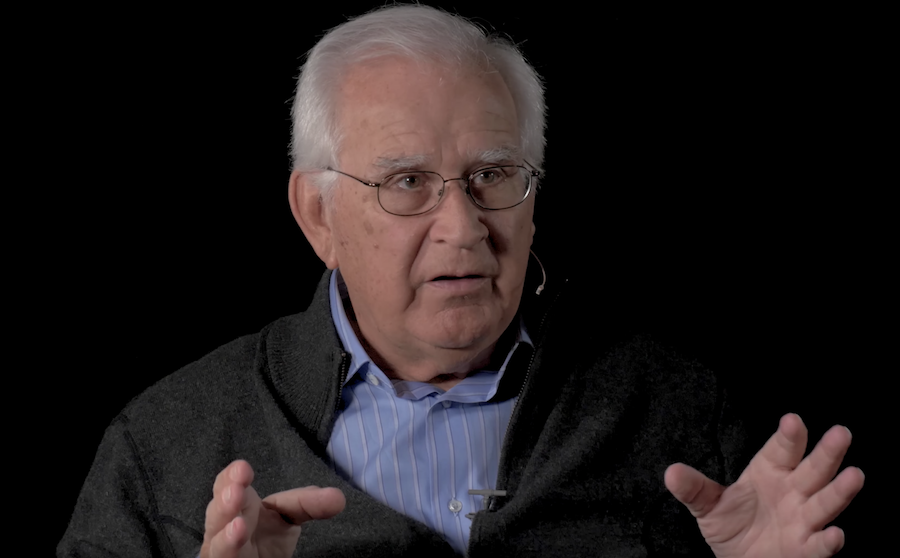

In this episode of Robots in Depth, Per Sjöborg speaks with Frank Tobe about his experience covering robotics in The Robot Report and creating the index Robo-Stox.

Frank talks about how and why he shifted to robotics and how looking for an investment opportunity in robotics led him to start Robo-Stox (since renamed ROBO Global), a robotics-focused index company.

Both companies have given Frank a unique perspective on the robotics scene as a whole, over a significant period of time.

Teradyne acquires MiR for $272M, continues robotics spree

In a surprise but smart move, Teradyne (NYSE:TER), the American test solutions provider that acquired Universal Robots in 2015 and Energid Technologies earlier in 2018, acquired Danish MiR (Mobile Industrial Robots) for $148 million with an additional $124 million predicated on very achievable milestones between now and 2020.

MiR was just returning from celebrating its 300% growth in 2017 at a company get-together in Barcelona with 70 MiR employees from all over the world when the announcement was made. It tripled its revenue from autonomous mobile robots (AMRs) in 2017. MiR co-founder and CEO Thomas Visti said that growth in 2017 was primarily due to multinational companies that returned with orders for larger fleets of mobile robots after they tested and analyzed the results of their initial MiR robot orders.

Another factor in MiR’s 2017 growth was the launch of the MiR200, which can lift 440 pounds and pull 1,100 pounds and is ESD approved and cleanroom certified. “The MiR200 has been very well received and represents a large part of our sales. The product meets clear needs in the market and increases potential applications for autonomous mobile robots. Combined with our new and extremely user-friendly interface – which even employees without programming experience can use – it makes it even simpler for our customers to implement and use our robots,” Visti said.

Another reason for MiR’s rapid growth has been its initial strategic decision to develop and market solely to a growing network of integrator/distributors originally developed by Visti when he was VP of Sales at Universal Robots. MiR presently has 132 distributors in 40 countries with regional offices in New York, San Diego, Singapore, Dortmund, Barcelona and Shanghai – and the lists are growing.

Teradyne, by this acquisition, is hoping to capitalize on the synergies between MiR and UR as shown in the chart above. Both offer end users fast ROI and low cost of entry and provide Teradyne with attractive gross margins and rapid growth.

“We are excited to have MiR join Teradyne’s widening portfolio of advanced, intelligent, automation products,” said Mark Jagiela, President and CEO of Teradyne. “MiR is the market leader in the nascent, but fast growing market for collaborative autonomous mobile robots (AMRs). Like Universal Robots’ collaborative robots, MiR collaborative AMRs lower the barrier for both large and small enterprises to incrementally automate their operations without the need for specialty staff or a re-layout of their existing workflow. This, combined with a fast return on investment, opens a vast new automation market. Following the path proven with Universal Robots, we expect to leverage Teradyne’s global capabilities to expand MiR’s reach.”

Earlier this year, Teradyne acquired Energid for $25 million in a talent and intellectual property acquisition. Energid, which is exhibiting and speaking at the Robotics Summit & Showcase, developed the robot control and tasking framework Actin which is used in industrial, commercial, collaborative, medical and space-based robotic systems and is a UR partner. Teradyne sees Actin as an enabling technology for advanced motion control and collision avoidance.

MiR founder and CSO Niels Jul Jacobsen, Visti, and investors Esben Østergaard, Torben Frigaard Rasmussen, and Søren Michael Juul Jørgensen should all be proud of their achievement thus far. Congratulations to all!

Teradyne and Mobile Industrial Robots (MiR) Announce Teradyne’s Acquisition of MiR

What’s the Difference Between Analog and Neuromorphic Chips in Robots?

Securing The Robots

Fido funeral: In Japan, a send-off for robot dogs

New robot for skull base surgery alleviates surgeon’s workload

MODEX 2018: Old and new have never been so far apart

MODEX, ProMat and CeMAT are the biggest global material handling and logistics supply chain tech trade shows. But as I walked the corridors of this year’s MODEX in Atlanta, I was particularly aware of the widening disparity between the old and new.

Stats: Material Handling and Logistics

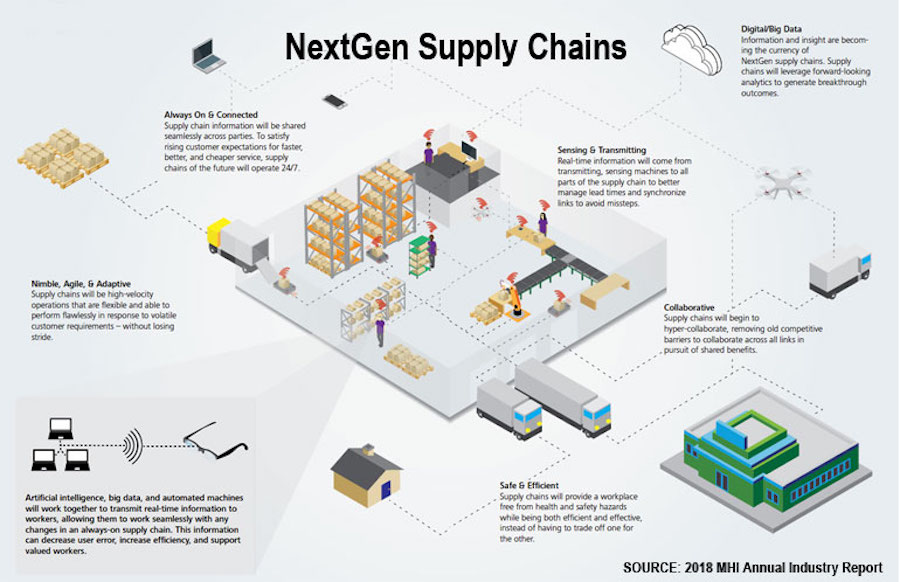

The 2018 MHI Annual Industry Report found that the 2018 adoption rate for driverless vehicles in material handling was only 10% and the adoption rate for AI was just 5% while the rate for robotics and automation was 35%.

The report indicated the top technologies expected to be a source of either disruption or competitive advantage within the next 3-5 years to be: (shown in order of importance)

- Robotics and Automation – picking, packing, sorting orders; loading, unloading, stacking; receiving and put-away; assembly operations; QC and inspection processing

- Predictive Analytics – manage lead times; synchronize links; avoid missteps

- Internet-of-Things (IoT) – enable real-time info flow; predictive analytics; and QC

- Artificial Intelligence – faster deliveries; reduced redundancies; improved analytics

- Driverless Vehicles – autonomous vehicles (and conversion kits for existing AGVs, tows and lifts); SLAM and point-to-point navigation; some with manipulators

The report also listed the key barriers to adoption of driverless vehicles and AI: (shown in order of importance)

- lack of a clear business case

- lack of adequate talent

- lack of understanding of the technology landscape

- lack of access to capital to make investments

- cybersecurity

Other research reports were more optimistic in their forecasts:

- A $3,500 report from QY Research made rosy forecasts for global parcel sorting robots and last mile delivery robots

- A $10,000 report from Interact Analysis forecast five years of double-digit growth for AMRs and AGVs converted to AMRs

- A $4,450 report from Grand View Research forecasts significant growth through 2024 driven by increasing demand for autonomous and safe point-to-point material handling equipment

Transforming lifts, tows, carts and AGVs to AMRs and VGVs

Human-operated AGVs, tows, lifts and other warehouse and factory vehicles have been a staple in material movement for decades. Now, with low-cost cameras, sensors and advanced vision systems, they are slowly transitioning to more flexible autonomous mobile robots that can tow, lift and carry. AMRs are Automated Mobile Robots which can be human operated or autonomous or a combination of both.

- Vendors providing conversion systems for existing lifts and carts to Vision Guided Vehicles (VGVs) for line-side replenishment, pallet movement, etc. include:

- Vendors providing grasping capabilities in addition to autonomous mobility include:

- Vendors providing high speed random grasping from moving conveyors or bins:

- RightHand Robotics

- Universal Logic

- Kinema Systems

- Vendors providing AMRs, VGVs and AIVs (Autonomous Intelligent Vehicles) for goods-to-person, load transfer, restocking, etc. include:

Navigation systems have changed as well and often don’t require floor grid markings, barcodes or extensive indoor localization and segregation systems such as those used by Kiva Systems (and subsequently Amazon). SLAM and combinations of floor grids, SLAM, path planning, and collision avoidance systems are adding flexibility to swarms of point-to-point mobile robots.

Kiva look-alikes emerge

In March 2012, in an effort to make their fulfillment centers as efficient as possible, Amazon acquired Kiva Systems for $775 million and almost immediately took them in-house, leaving a disgruntled set of Kiva customers who couldn’t expand and a larger group of prospective clients who were left with a technological gap and no solutions. I wrote about this gap and about the whole community of new providers that had sprung up to fill the void and were beginning to offer and demonstrate their solutions. Many of those new providers are listed above.

Recently, another set of competitors has emerged in this space:

- Companies started in China who copied the Kiva Systems formula to provide Kiva-like goods-to-person robot services and dynamic free-form warehousing to the major Chinese e-commerce vendors such as:

- Now some of those companies are expanding outside of China and SE Asia to Europe and America:

Bottom Line

There are many forms of warehousing. But the area where NextGen tools are needed the most is high-turn distribution and fulfillment centers.

The rate of acceptance of e-commerce is changing warehousing forever, particularly distribution and fulfillment centers. Total e-commerce sales for 2017 were $453.5 billion, an increase of 16.0% from 2016. E-commerce sales in 2017 accounted for 8.9% of total sales versus 8.0% of total sales in 2016 according to the U.S. Department of Commerce.

Consequently, flexibility and an ability to handle an ever-increasing number of parcels are paramount, and fixed costs for conveyors, elevators and old-style AS/RS systems have become anathema to warehouse executives worldwide. Hence the need to invest in NextGen supply chain methods such as those exhibited at shows like MODEX.

Although 31,000 people went to this year’s MODEX, I wonder how many share my view about the disparity between the old and new shown at the show. Certainly there were enough new tech vendors offering “a mixed fleet of intelligent, collaborative mobile robots and fully-autonomous, zero-infrastructure AGVs designed specifically for safe and flexible material flows in dynamic, human-centric environments.” Yet the emphasis at the show – and the favorable booth space placement – went to the old-line vendors rather than the NextGen companies listed above.

Go figure!

Shared autonomy via deep reinforcement learning

By Siddharth Reddy

Imagine a drone pilot remotely flying a quadrotor, using an onboard camera to navigate and land. Unfamiliar flight dynamics, terrain, and network latency can make this system challenging for a human to control. One approach to this problem is to train an autonomous agent to perform tasks like patrolling and mapping without human intervention. This strategy works well when the task is clearly specified and the agent can observe all the information it needs to succeed. Unfortunately, many real-world applications that involve human users do not satisfy these conditions: the user’s intent is often private information that the agent cannot directly access, and the task may be too complicated for the user to precisely define. For example, the pilot may want to track a set of moving objects (e.g., a herd of animals) and change object priorities on the fly (e.g., focus on individuals who unexpectedly appear injured). Shared autonomy addresses this problem by combining user input with automated assistance; in other words, augmenting human control instead of replacing it.

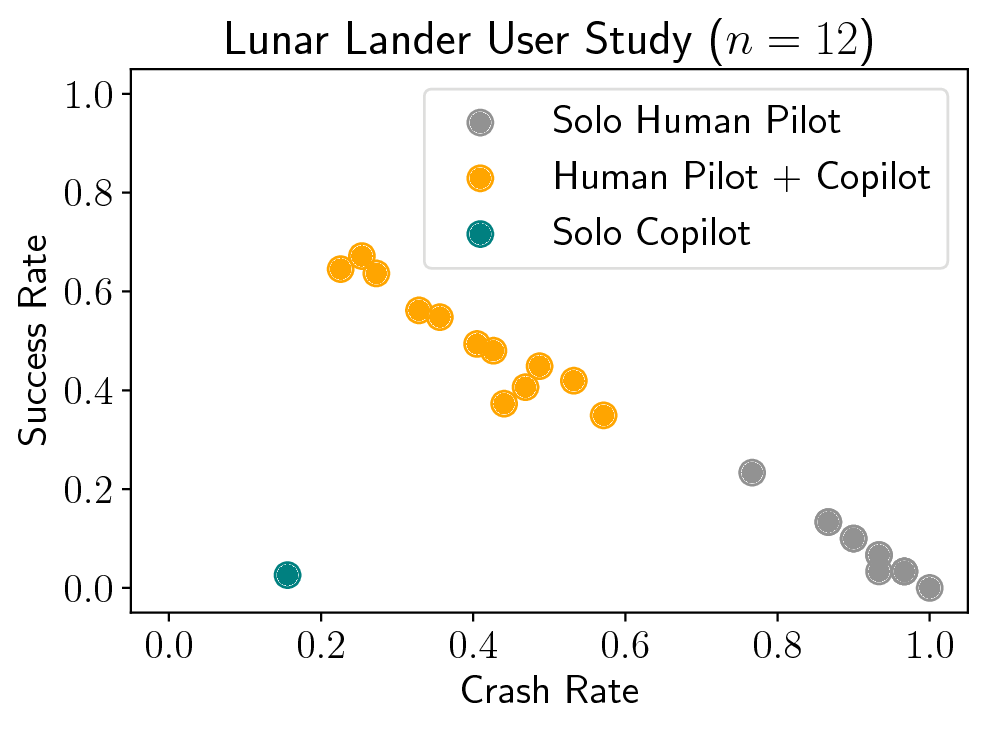

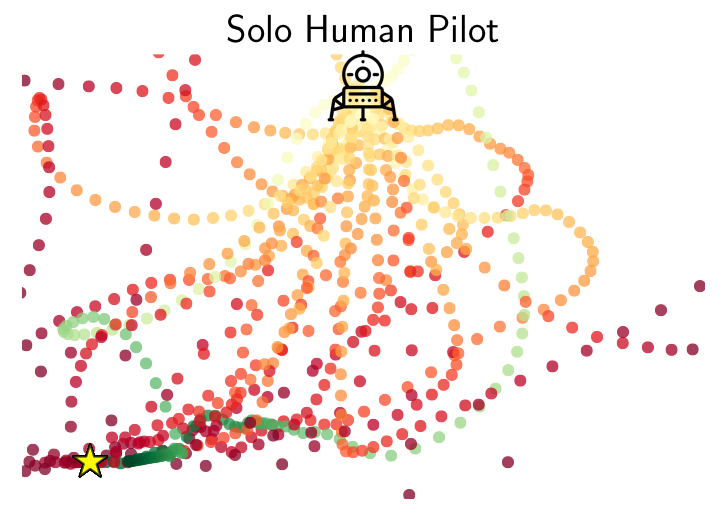

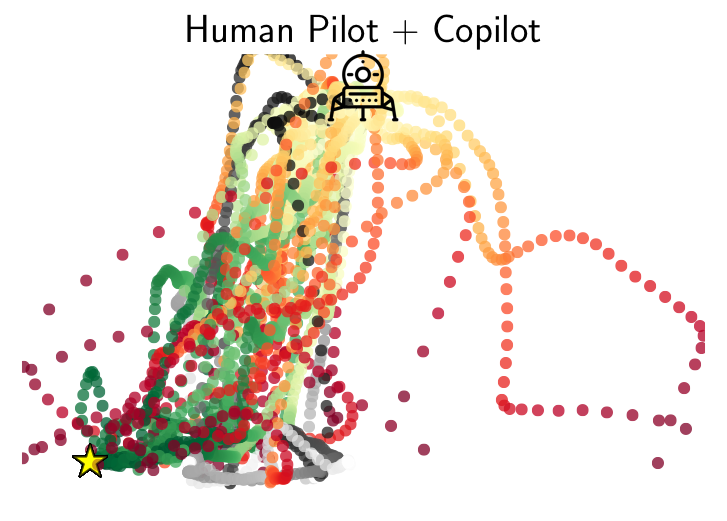

A blind, autonomous pilot (left), suboptimal human pilot (center), and combined human-machine team (right) play the Lunar Lander game.

Background

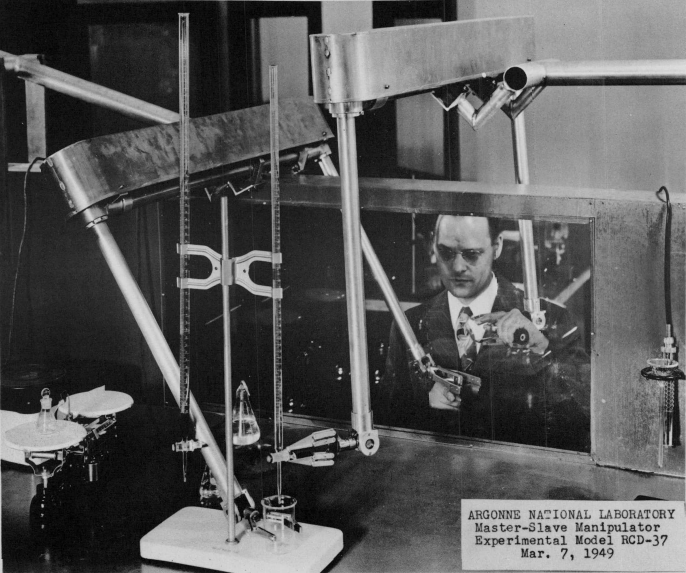

The idea of combining human and machine intelligence in a shared-control system goes back to the early days of Ray Goertz’s master-slave manipulator in 1949, Ralph Mosher’s Hardiman exoskeleton in 1969, and Marvin Minsky’s call for telepresence in 1980. After decades of research in robotics, human-computer interaction, and artificial intelligence, interfacing between a human operator and a remote-controlled robot remains a challenge. According to a review of the 2015 DARPA Robotics Challenge, “the most cost effective research area to improve robot performance is Human-Robot Interaction. The biggest enemy of robot stability and performance in the DRC was operator errors. Developing ways to avoid and survive operator errors is crucial for real-world robotics. Human operators make mistakes under pressure, especially without extensive training and practice in realistic conditions.”

|

|

|

Master-slave robotic manipulator (Goertz, 1949) |

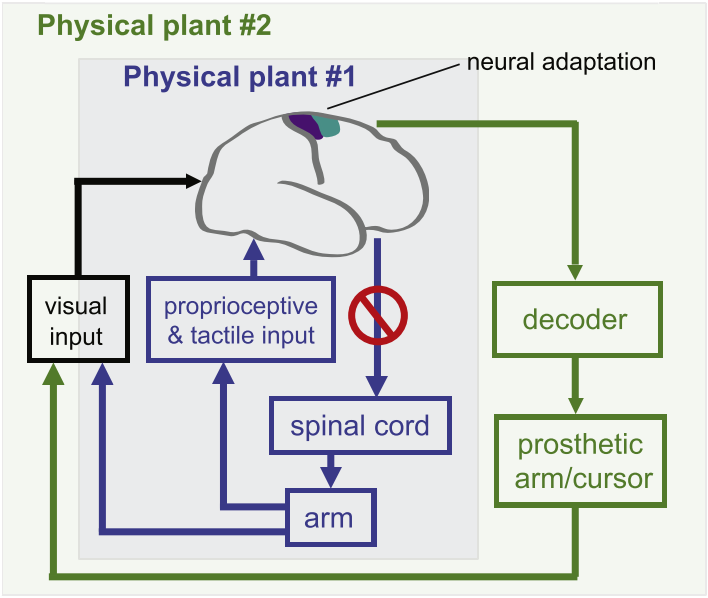

Brain-computer interface for neural prosthetics (Shenoy & Carmena, 2014) |

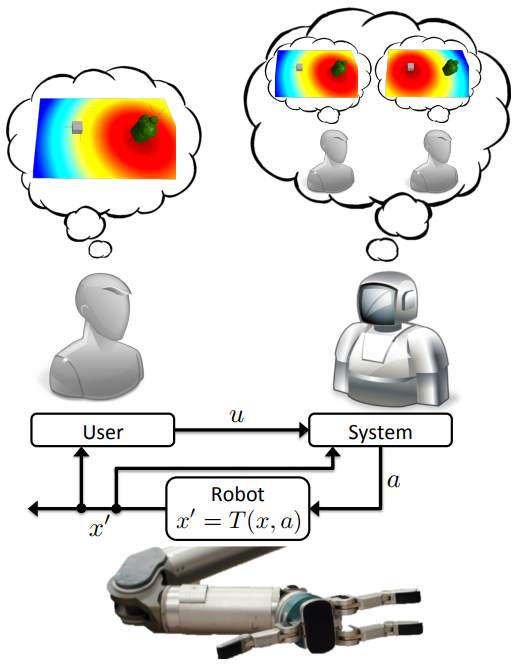

Formalism for model-based shared autonomy (Javdani et al., 2015) |

One research thrust in shared autonomy approaches this problem by inferring the user’s goals and autonomously acting to achieve them. Chapter 5 of Shervin Javdani’s Ph.D. thesis contains an excellent review of the literature. Such methods have made progress toward better driver assist, brain-computer interfaces for prosthetic limbs, and assistive teleoperation, but tend to require prior knowledge about the world; specifically, (1) a dynamics model that predicts the consequences of taking a given action in a given state of the environment, (2) the set of possible goals for the user, and (3) an observation model that describes the user’s behavior given their goal. Model-based shared autonomy algorithms are well-suited to domains in which this knowledge can be directly hard-coded or learned, but are challenged by unstructured environments with ill-defined goals and unpredictable user behavior. We approached this problem from a different angle, using deep reinforcement learning to implement model-free shared autonomy.

Deep reinforcement learning uses neural network function approximation to tackle the curse of dimensionality in high-dimensional, continuous state and action spaces, and has recently achieved remarkable success in training autonomous agents from scratch to play video games, defeat human world champions at Go, and control robots. We have taken preliminary steps toward answering the following question: can deep reinforcement learning be useful for building flexible and practical assistive systems?

Model-Free RL with a Human in the Loop

To enable shared-control teleoperation with minimal prior assumptions, we devised a model-free deep reinforcement learning algorithm for shared autonomy. The key idea is to learn an end-to-end mapping from environmental observation and user input to agent action, with task reward as the only form of supervision. From the agent’s perspective, the user acts like a prior policy that can be fine-tuned, and an additional sensor generating observations from which the agent can implicitly decode the user’s private information. From the user’s perspective, the agent behaves like an adaptive interface that learns a personalized mapping from user commands to actions that maximizes task reward.

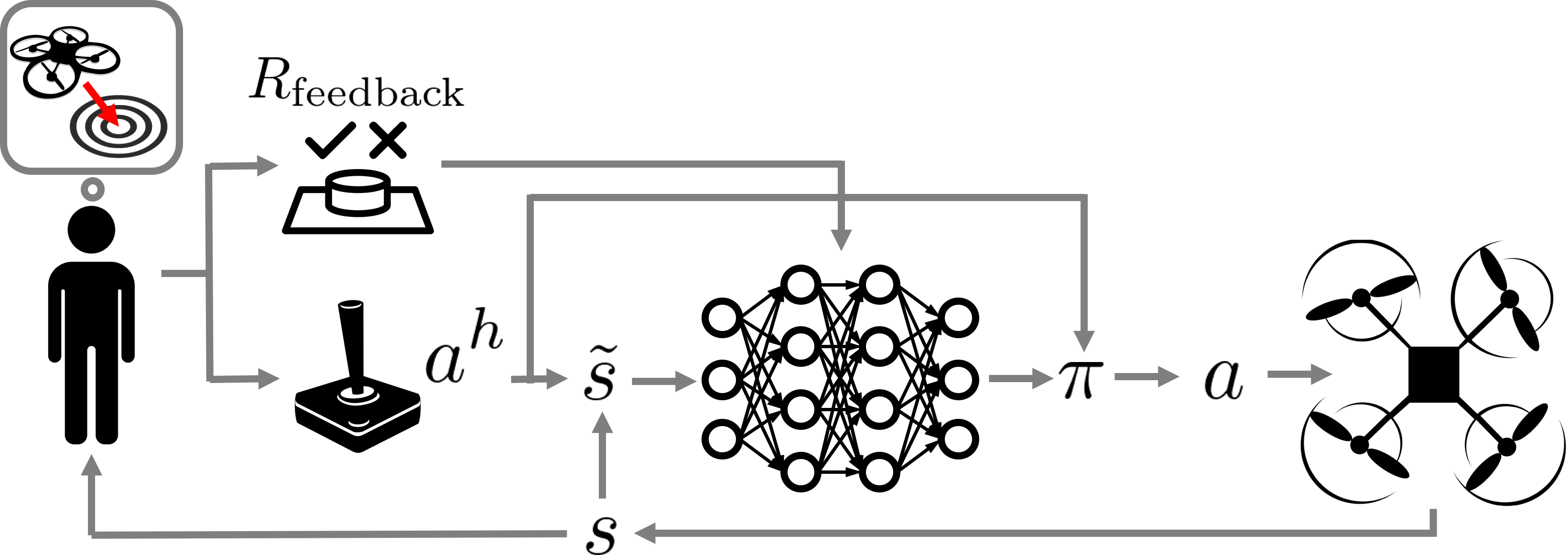

Fig. 1: An overview of our human-in-the-loop deep Q-learning algorithm for model-free shared autonomy

One of the core challenges in this work was adapting standard deep RL techniques to leverage control input from a human without significantly interfering with the user’s feedback control loop or tiring them with a long training period. To address these issues, we used deep Q-learning to learn an approximate state-action value function that computes the expected future return of an action given the current environmental observation and the user’s input. Equipped with this value function, the assistive agent executes the closest high-value action to the user’s control input. The reward function for the agent is a combination of known terms computed for every state, and a terminal reward provided by the user upon succeeding or failing at the task. See Fig. 1 for a high-level schematic of this process.
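The action-selection step described above can be sketched in Python for a discrete action space. This is a minimal illustration under stated assumptions, not the authors' implementation. The tolerance parameter `alpha`, the rule that an action is "high-value" when its shifted Q-value is at least `(1 - alpha)` times the best one, and the fallback to the greedy action are all assumptions introduced here. "Closest" is taken to mean identical to the user's action, which is the simplest choice when actions are unordered.

```python
from typing import Sequence


def assisted_action(q_values: Sequence[float], user_action: int, alpha: float) -> int:
    """Return the user's action if it is close enough to optimal, else the greedy action.

    q_values    -- hypothetical Q-value estimate for each discrete action
    user_action -- index of the action suggested by the user
    alpha       -- tolerance in [0, 1]; 0 ignores the user unless optimal, 1 always obeys
    """
    q_min = min(q_values)
    # Shift so the worst action has value 0, making the threshold scale-aware.
    shifted = [q - q_min for q in q_values]
    threshold = (1.0 - alpha) * max(shifted)

    if shifted[user_action] >= threshold:
        return user_action  # the suggestion is "high-value": do not intervene
    # Otherwise fall back to the highest-value action.
    return max(range(len(q_values)), key=lambda a: q_values[a])


q = [1.0, 5.0, 4.5, 0.0]
print(assisted_action(q, user_action=2, alpha=0.2))  # suggestion kept
print(assisted_action(q, user_action=0, alpha=0.2))  # suggestion overridden
```

The tolerance trades off the two concerns raised in the text. A larger `alpha` preserves more of the user's feedback loop, while a smaller one lets the agent intervene more often.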

Learning to Assist

Prior work has formalized shared autonomy as a partially-observable Markov decision process (POMDP) in which the user’s goal is initially unknown to the agent and must be inferred in order to complete the task. Existing methods tend to assume the following components of the POMDP are known ex-ante: (1) the dynamics of the environment, or the state transition distribution $T$; (2) the set of possible goals for the user, or the goal space $\mathcal{G}$; and (3) the user’s control policy given their goal, or the user model $\pi_h$. In our work, we relaxed these three standard assumptions. We introduced a model-free deep reinforcement learning method that is capable of providing assistance without access to this knowledge, but can also take advantage of a user model and goal space when they are known.

In our problem formulation, the transition distribution $T$, the user’s policy $\pi_h$, and the goal space $\mathcal{G}$ are no longer all necessarily known to the agent. The reward function, which depends on the user’s private information, is

$$

R(s, a, s') = \underbrace{R_{\text{general}}(s, a, s')}_{\text{known}} + \underbrace{R_{\text{feedback}}(s, a, s')}_{\text{unknown, but observed}}.

$$

This decomposition follows a structure typically present in shared autonomy: there are some terms in the reward that are known, such as the need to avoid collisions. We capture these in $R_{\text{general}}$. $R_{\text{feedback}}$ is user-generated feedback that depends on their private information. We do not know this function. We merely assume the agent is informed when the user provides feedback (e.g., by pressing a button). In practice, the user might simply indicate once per trial whether the agent succeeded or not.
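As a rough illustration of this decomposition, the following Python sketch adds a known collision penalty to whatever feedback the user provides. The penalty value, the function names, and the choice to treat a step without feedback as contributing zero are assumptions made for the example, not details from the original work.

```python
from typing import Optional

COLLISION_PENALTY = -1.0  # hypothetical value for a known reward term


def general_reward(collided: bool) -> float:
    """Known part of the reward, e.g. a penalty for collisions."""
    return COLLISION_PENALTY if collided else 0.0


def total_reward(collided: bool, user_feedback: Optional[float]) -> float:
    """R = R_general + R_feedback.

    user_feedback is None on steps where the user gives no signal; the agent only
    observes the feedback value, never the function that generates it.
    """
    feedback = 0.0 if user_feedback is None else user_feedback
    return general_reward(collided) + feedback


print(total_reward(collided=False, user_feedback=None))  # ordinary step
print(total_reward(collided=False, user_feedback=1.0))   # user signals success at the end
print(total_reward(collided=True, user_feedback=-1.0))   # crash plus negative feedback
```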

Incorporating User Input

Our method jointly embeds the agent’s observation of the environment $s_t$ with the information from the user $u_t$ by simply concatenating them. Formally,

$$
\tilde{s}_t = \left[ \begin{array}{c} s_t \\ u_t \end{array} \right].
$$

The particular form of $u_t$ depends on the available information. When we do not know the set of possible goals $\mathcal{G}$ or the user’s policy given their goal $\pi_h$, as is the case for most of our experiments, we set $u_t$ to the user’s action $a^h_t$. When we know the goal space $\mathcal{G}$, we set $u_t$ to the inferred goal $\hat{g}_t$. In particular, for problems with known goal spaces and user models, we found that using maximum entropy inverse reinforcement learning to infer $\hat{g}_t$ led to improved performance. For problems with known goal spaces but unknown user models, we found that under certain conditions we could improve performance by training an LSTM recurrent neural network to predict $\hat{g}_t$ given the sequence of user inputs using a training set of rollouts produced by the unassisted user.
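A minimal sketch of the concatenation, assuming the simplest case where $u_t$ is the user's discrete action $a^h_t$. The one-hot encoding of the action and the helper names are illustrative assumptions, not necessarily how the released code represents user input.

```python
from typing import List, Sequence


def one_hot(index: int, size: int) -> List[float]:
    """Encode a discrete user action as a one-hot vector (assumed encoding)."""
    if not 0 <= index < size:
        raise ValueError(f"action index {index} out of range for size {size}")
    return [1.0 if i == index else 0.0 for i in range(size)]


def augment_observation(s_t: Sequence[float], u_t: Sequence[float]) -> List[float]:
    """Build the augmented state by stacking s_t on top of u_t."""
    return list(s_t) + list(u_t)


# Example: a 2-dimensional observation and user action 2 out of 4 actions.
s_tilde = augment_observation([0.1, -0.2], one_hot(2, 4))
```

When the goal space is known, the same helper could take an inferred goal vector $\hat{g}_t$ in place of the one-hot action.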

Q-Learning with User Control

Model-free reinforcement learning with a human in the loop poses two challenges: (1) maintaining informative user input and (2) minimizing the number of interactions with the environment. If the user input is a suggested control, consistently ignoring the suggestion and taking a different action can degrade the quality of user input, since humans rely on feedback from their actions to perform real-time control tasks. Popular on-policy algorithms like TRPO are difficult to deploy in this setting since they give no guarantees on how often the user’s input is ignored. They also tend to require a large number of interactions with the environment, which is impractical for human users. Motivated by these two criteria, we turned to deep Q-learning.

Q-learning is an off-policy algorithm, enabling us to address (1) by modifying the behavior policy used to select actions given their expected returns and the user’s input. Drawing inspiration from the minimal intervention principle embodied in recent work on parallel autonomy and outer-loop stabilization, we execute a feasible action closest to the user’s suggestion, where an action is feasible if its expected return is not much worse than that of the optimal action. Formally,

$$
\pi_{\alpha}(a \mid \tilde{s}, a^h) = \delta\left(a = \mathop{\arg\max}\limits_{\{a : Q'(\tilde{s}, a) \geq (1 - \alpha) Q'(\tilde{s}, a^\ast)\}} f(a, a^h)\right),
$$

where $f$ is an action-similarity function and $Q'(\tilde{s}, a) = Q(\tilde{s}, a) - \min_{a' \in \mathcal{A}} Q(\tilde{s}, a')$ shifts the Q values to be non-negative, which keeps the comparison meaningful when Q values are negative. The constant $\alpha \in [0, 1]$ is a hyperparameter that controls the tolerance of the system to suboptimal human suggestions, or equivalently, the amount of assistance.
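The behavior policy can be sketched for a discrete action space as follows. This is an illustrative sketch rather than the released implementation: the function names, the exact-match similarity function, and the rule of breaking similarity ties in favor of the higher Q value are assumptions.

```python
from typing import Callable, Sequence


def exact_match(a: int, a_h: int) -> float:
    """Hypothetical similarity f: 1 if the action equals the user's, else 0."""
    return 1.0 if a == a_h else 0.0


def select_action(
    q_values: Sequence[float],
    user_action: int,
    alpha: float,
    similarity: Callable[[int, int], float] = exact_match,
) -> int:
    """Pick the feasible action most similar to the user's suggestion."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    # Q'(s, a) = Q(s, a) - min_a' Q(s, a'), so every shifted value is >= 0.
    q_min = min(q_values)
    shifted = [q - q_min for q in q_values]
    # Feasible set: shifted value within a (1 - alpha) factor of the best.
    threshold = (1.0 - alpha) * max(shifted)
    feasible = [a for a, q in enumerate(shifted) if q >= threshold]
    # Maximize similarity; ties go to the higher Q value (an assumption).
    return max(feasible, key=lambda a: (similarity(a, user_action), shifted[a]))


q = [-3.0, -1.0, -2.0]          # made-up Q values for three actions
greedy = select_action(q, user_action=2, alpha=0.0)
shared = select_action(q, user_action=2, alpha=0.5)
```

With these made-up values, `alpha=0.0` leaves only the greedy action feasible, so the user's suggestion is overridden, while `alpha=0.5` admits the user's action into the feasible set and it is executed. The feasible set is never empty because the optimal action always meets the threshold.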

Mindful of (2), we note that off-policy Q-learning tends to be more sample-efficient than policy gradient and Monte Carlo value-based methods. The structure of our behavior policy also speeds up learning when the user is approximately optimal: for appropriately large $\alpha$, the agent learns to fine-tune the user’s policy instead of learning to perform the task from scratch. In practice, this means that during the early stages of learning, the combined human-machine team performs at least as well as the unassisted human instead of performing at the level of a random policy.

User Studies

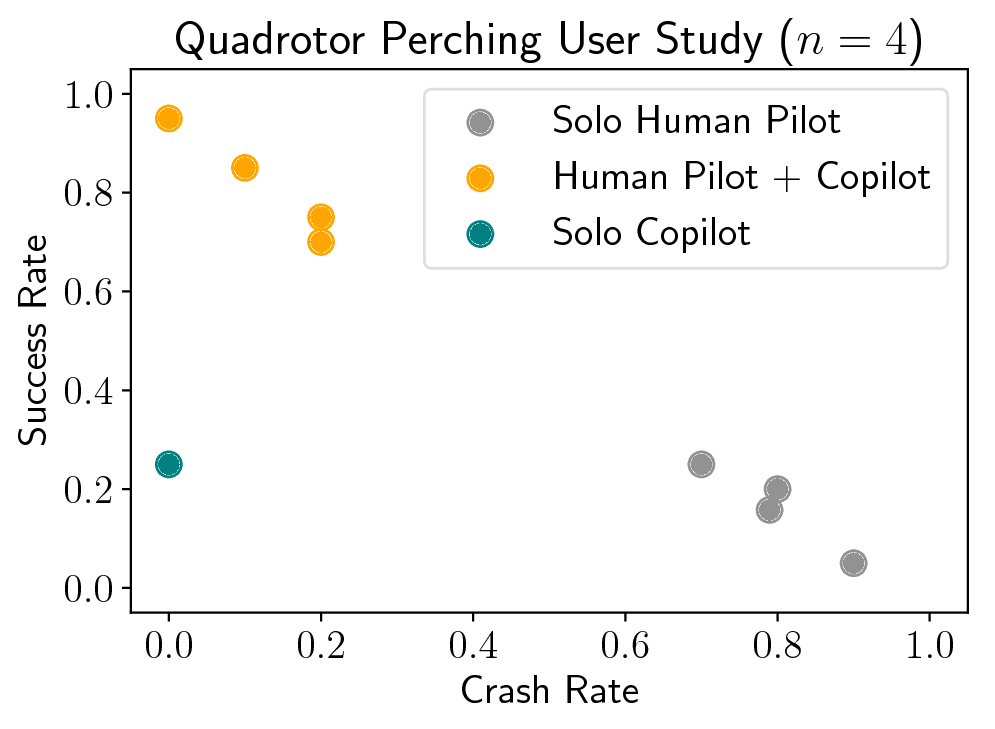

We applied our method to two real-time assistive control problems: the Lunar Lander game and a quadrotor landing task. Both tasks involved controlling motion using a discrete action space and low-dimensional state observations that include position, orientation, and velocity information. In both tasks, the human pilot had private information that was necessary to complete the task, but wasn’t capable of succeeding on their own.

The Lunar Lander Game

The objective of the game was to land the vehicle between the flags without crashing or flying out of bounds using two lateral thrusters and a main engine. The assistive copilot could observe the lander’s position, orientation, and velocity, but not the position of the flags.

|

|

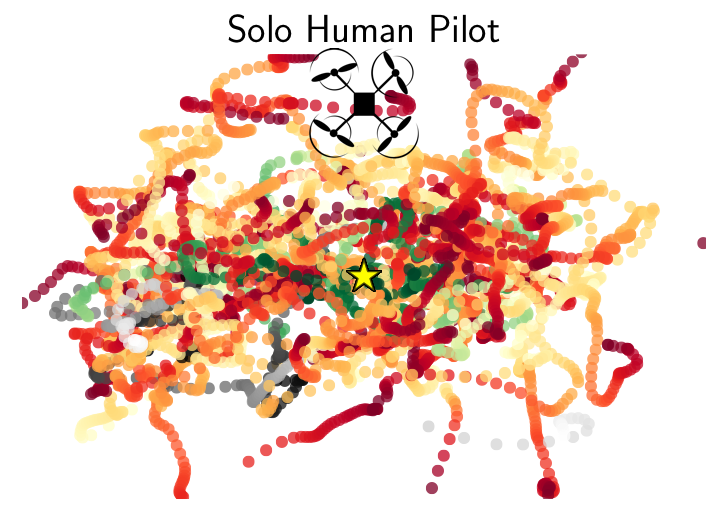

Human Pilot (Solo): The human pilot can’t stabilize and keeps crashing. |

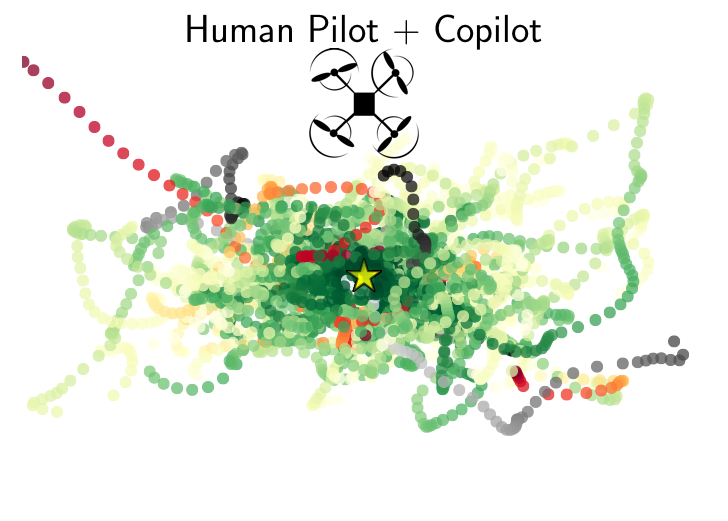

Human Pilot + RL Copilot: The copilot improves stability while giving the pilot enough freedom to land between the flags. |

Humans rarely beat the Lunar Lander game on their own, but with a copilot they did much better.

Fig. 2a: Success and crash rates averaged over 30 episodes.

Fig. 2b-c: Trajectories followed by human pilots with and without a copilot on Lunar Lander. Red trajectories end in a crash or out of bounds, green in success, and gray in neither. The landing pad is marked by a star. For the sake of illustration, we only show data for a landing site on the left boundary.

In simulation experiments with synthetic pilot models (not shown here), we also observed a significant benefit to explicitly inferring the goal (i.e., the location of the landing pad) instead of simply adding the user’s raw control input to the agent’s observations, suggesting that goal spaces and user models can and should be taken advantage of when they are available.

One of the drawbacks of analyzing Lunar Lander is that the game interface and physics do not reflect the complexity and unpredictability of a real-world robotic shared autonomy task.

To evaluate our method in a more realistic environment, we formulated a task for a human pilot flying a real quadrotor.

Quadrotor Landing Task

The objective of the task was to land a Parrot AR-Drone 2 on a small, square landing pad at some distance from its initial take-off position, such that the drone’s first-person camera was pointed at a random object in the environment (e.g., a red chair), without flying out of bounds or running out of time. The pilot used a keyboard to control velocity, and was blocked from getting a third-person view of the drone so that they had to rely on the drone’s first-person camera feed to navigate and land. The assistive copilot observed position, orientation, and velocity, but did not know which object the pilot wanted to look at.

Human Pilot (Solo): The pilot’s display only showed the drone’s first-person view, so pointing the camera was easy but finding the landing pad was hard.

Human Pilot + RL Copilot: The copilot didn’t know where the pilot wanted to point the camera, but it knew where the landing pad was. Together, the pilot and copilot succeeded at the task.

Humans found it challenging to simultaneously point the camera at the desired scene and navigate to the precise location of a feasible landing pad under time constraints.

The assistive copilot had little trouble navigating to and landing on the landing pad, but did not know where to point the camera because it did not know what the human wanted to observe after landing. Together, the human could focus on pointing the camera and the copilot could focus on landing precisely on the landing pad.

Fig. 3a: Success and crash rates averaged over 20 episodes.

Fig. 3b-c: A bird’s-eye view of trajectories followed by human pilots with and without a copilot on the quadrotor landing task. Red trajectories end in a crash or out of bounds, green in success, and gray in neither. The landing pad is marked by a star.

Our results showed that combined pilot-copilot teams significantly outperform individual pilots and copilots.

What’s Next?

Our method has a major weakness: model-free deep reinforcement learning typically requires lots of training data, which can be burdensome for human users operating physical robots. We mitigated this issue in our experiments by pretraining the copilot in simulation without a human pilot in the loop. Unfortunately, this is not always feasible for real-world applications due to the difficulty of building high-fidelity simulators and designing rich user-agnostic reward functions $R_{\text{general}}$. We are currently exploring different approaches to this problem.

If you want to learn more, check out our pre-print on arXiv: Siddharth Reddy, Anca Dragan, Sergey Levine, Shared Autonomy via Deep Reinforcement Learning, arXiv, 2018.

The paper will appear at Robotics: Science and Systems 2018 from June 26-30. To encourage replication and extensions, we have released our code. Additional videos are available through the project website.

This article was initially published on the BAIR blog, and appears here with the authors’ permission.

ROBOTT-NET use case: Danfoss automated assembly line

Short delivery time, high flexibility and reduced costs for handling parts before assembly. These are the main goals that Danfoss Drives wanted to achieve by creating an automated assembly line. But while the goals were clear, the way to achieve them was cloudier.

“How to do it and with what technology, we haven’t decided yet. And that’s what we’re seeking help for”, says Technology Engineer Peter Lund Andersen from Danfoss Drives.

To find out which technologies and solutions are suitable for an automated assembly line, Danfoss Drives received assistance from the Danish Technological Institute’s Center for Robot Technology.

Danfoss Drives is one of the Danish companies that have received a so-called “voucher” through ROBOTT-NET, which offers access to a network of the leading European technological service institutes in robotics.

With the voucher, Danfoss Drives has easy access to high-tech solutions and robot experts outside of Denmark.

The challenge for Danfoss Drives has been that all their products are delivered in many different forms of packaging. They now want to pick the products automatically.

“Having more technological service institutes involved in the project means that we can draw on the core competence within each service institute and thereby combine each competence into one joint, great solution”, says Peter Lund Andersen. He adds: “We have given quite a few of our tasks to English MTC, that specializes in mechanical construction. In Odense at the Danish Technological Institute they are experts in vision technology, so they take care of that part”.

You can check out Danfoss Drives’ voucher page here and watch the video of the use case below.

The main purpose of ROBOTT-NET is to gather and share the latest knowledge about robot technology that can improve production in European companies.

Note: ROBOTT-NET will be at HANNOVER MESSE from April 24-27, 2018. If you are there, make sure you pass by Stand G46 in Hall 6 by the European Commission and see project results from EU-funded projects like nextgenio, ultraSURFACE, covr, fed4sae, DiFiCIL, IPP4CPPS, Smart Anything Everywhere (SAE), RADICLE, cloudSME, BEinCPPS, CloudiFacturing & Fortissimo.

Robot Launch 2018 in full swing – like Tennibot!

With the Robot Launch 2018 competition in full swing – deadline May 15 for entries wanting to compete on stage in Brisbane at ICRA 2018 – we thought it was time to look at last year’s Robot Launch finalists. And a very successful bunch they are too!

Tennibot won the CES 2018 Innovation Award and was covered in media like Times, Discovery Channel and LA Times. Tennibot also won $40,000 from the Alabama Launchpad competition and is launching a crowdfunding campaign today!

Tennibot uses computer vision and artificial intelligence to locate/pick up tennis balls and navigate on the court. Tennibot is the world’s first autonomous tennis ball collector. The Tennibot team has already won the Tennis Industry Innovation Challenge. So, if you think that Tennis + Robots = Your kind of sport – then head over to Tennibot.com to learn more and purchase your Tennibot before it’s too late!

Other 2017 finalists include

- Semio, from California, has a software platform for developing and deploying social robot skills.

- Apellix, from Florida, provides software-controlled aerial robotic systems that use tethered and untethered drones to move workers out of harm’s way.

- Mothership Aeronautics, from Silicon Valley, has a solar-powered drone capable of ‘infinity cruise’, where more power is generated than consumed.

- Kinema Systems, with an impressive approach to logistical challenges from the original Silicon Valley team that developed ROS.

- BotsAndUs, a highly awarded UK startup with a beautifully designed social robot for retail.

- Fotokite, a smart team from ETH Zurich with a unique approach to using drones in large-scale venues.

- C2RO, from Canada, is creating an expansive cloud-based AI platform for service robots.

- krtkl, from Silicon Valley, makes a high-end embedded board designed for both prototyping and deployment.

Apellix was also a winner of the Automate 2017 startup competition. Mothership has raised a $1.25 million seed round from the likes of Draper Ventures. Kinema Systems has just won the NVIDIA Inception Challenge out of more than 200 entrants and splits $1 million in prize money with two other AI startups. BotsAndUs has trialled Bo in more than 11,000 customer service interactions. Krtkl is focused on revenue, not fundraising, and C2RO is building partnerships with companies like Qihan. And Fotokite just won the $1 million Genius NY competition.

Fotokite CEO Christopher McCall holds a ceremonial $1 million check after the company received the top prize in the Genius NY business competition at the Marriott Syracuse Downtown on Monday. (Rick Moriarty | rmoriarty@syracuse.com) via Syracuse.com

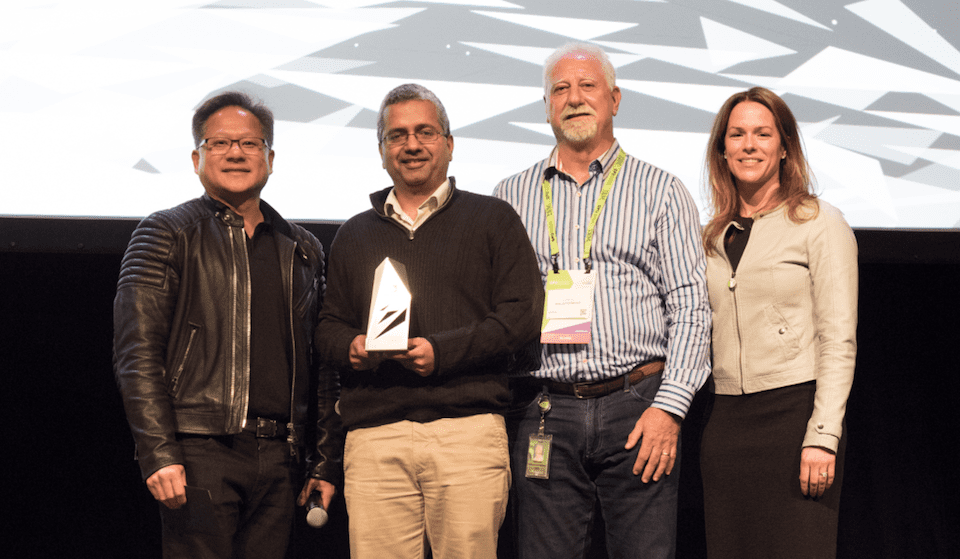

NVIDIA CEO and founder Jensen Huang, Kinema Systems CEO and founder Sachin Chitta, NVIDIA founder Chris Malachowsky, NVIDIA VP of healthcare and AI business development Kimberly Powell. via NVIDIA blog

You can watch the pitch presentation here: https://youtu.be/BzcrREvD8k0

You’ll also see some other familiar names from the shortlist for 2017, not to mention lots of success for our 2016 top startups. We can’t wait to see who will be finalists in 2018!

The Robot Launch startup competition has been running since 2014 and has helped robotics startups reach investors, build a reputation and grow their markets. We’ve had entries from all over the world, and one of the significant trends has been how rapidly the stage of startup entrants has advanced. We now judge startups in several divisions: Preseed, Seed and PostSeed (or Pre Series A).

Do you have a startup idea, a prototype or a seed stage startup in robotics, sensors or AI?

Submit your entry by May 15, 2018 if you want to be selected to pitch on the main stage of ICRA 2018 on May 22 in Brisbane, Australia, for a chance to win a $3000 AUD prize from QUT bluebox!

The top 10 startups will pitch live on stage to a panel of investors and mentors including:

- Martin Duursma, Main Sequence Ventures

- Chris Moehle, The Robotics Hub Fund

- Yotam Rosenbaum, QUT bluebox

- Roland Siegwart, ETH Zurich

Entries are also in the running for a place in the QUT bluebox accelerator*, the Silicon Valley Robotics Accelerator*, mentorship from all the VC judges and potential investment of up to $250,000 from The Robotics Hub Fund*. (*conditions apply – details on application)

CONDITIONS:

Pre Seed category consists of an idea and proof of concept or prototype – customer validation is also desirable.

Seed category consists of a startup younger than 24 months, with less than $250k previous investment.

Post Seed category consists of a startup younger than 36 months, with less than $2.5m previous investment.

CAN’T MAKE IT TO AUSTRALIA?

No problems, mate! We’ll be continuing the Robot Launch competition with additional rounds in the US and in Europe throughout the summer. Go ahead and enter now anyway!

Enter the Robot Launch Startup Competition at ICRA 2018 here.

FOR YOUR GUIDE ON GOOD PITCH DOCUMENTS:

A sample Investor One Pager can be seen here. And your pitch should cover the content described in Nathan Gold’s 13 slide format.