I took part in the first panel at the BSI conference The Digital World: Artificial Intelligence. The subject of the panel was AI Governance and Ethics. My co-panelist was Emma Carmel, and we were expertly chaired by Katherine Holden.

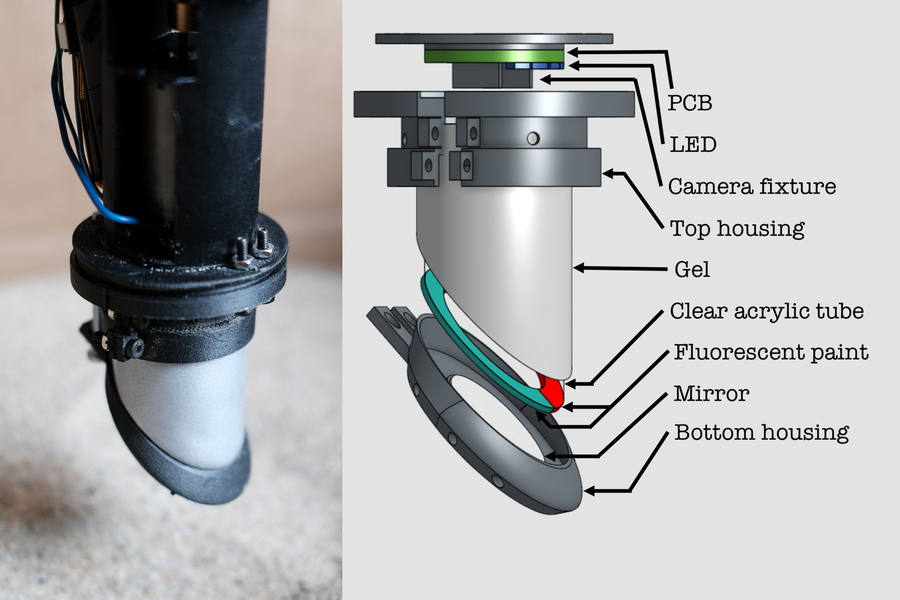

Emma and I each gave short opening presentations prior to the Q&A. The title of my talk was Why is Ethical Governance in AI so hard? Something I’ve thought about a lot in recent months.

Here are the slides exploring that question.

And here are my words.

Early in 2018 I wrote a short blog post with the title Ethical Governance: what is it and who’s doing it? Good ethical governance is important because in order for people to have confidence in their AI they need to know that it has been developed responsibly. I concluded my piece by asking for examples of good ethical governance. I had several replies, but none nominated AI companies.

So why is it that 3 years on we see some of the largest AI companies on the planet shooting themselves in the foot, ethically speaking? I’m not at all sure I can offer an answer but, in the next few minutes, I would like to explore the question: why is ethical governance in AI so hard?

But from a new perspective.

Slide 2

In the early 1970s I spent a few months labouring in a machine shop. The shop was chaotic and disorganised. It stank of machine oil and cigarette smoke, and the air was heavy with the coolant spray used to keep the lathe bits cool. It was dirty and dangerous, with piles of metal swarf cluttering the walkways. There seemed to be a minor injury every day.

Skip forward 40 years and machine shops look very different.

Slide 3

So what happened? Those of you old enough will recall that while British design was world class – think of the British Leyland Mini, or the Jaguar XJ6 – our manufacturing fell far short. “By the mid 1970s British cars were shunned in Europe because of bad workmanship, unreliability, poor delivery dates and difficulties with spares. Japanese car manufacturers had been selling cars here since the mid 60s but it was in the 1970s that they began to make real headway. Japanese cars lacked the style and heritage of the average British car. What they did have was superb build quality and reliability” [1].

What happened was Total Quality Management. The order and cleanliness of modern machine shops like this one is a strong reflection of TQM practices.

Slide 4

In the late 1970s manufacturing companies in the UK learned – many the hard way – that ‘quality’ is not something that can be introduced by appointing a quality inspector. Quality is not something that can be hired in.

This word cloud reflects the influence from Japan. The words Japan, Japanese and Kaizen – which roughly translates as continuous improvement – appear here. In TQM everyone shares the responsibility for quality. People at all levels of an organization participate in kaizen, from the CEO to assembly line workers and janitorial staff. Importantly, suggestions from anyone, no matter who, are valued and taken equally seriously.

Slide 5

In 2018 my colleague Marina Jirotka and I published a paper on ethical governance in robotics and AI. In that paper we proposed 5 pillars of good ethical governance. The top four are:

- have an ethical code of conduct,

- train everyone on ethics and responsible innovation,

- practice responsible innovation, and

- publish transparency reports.

The 5th pillar underpins these four and is perhaps the hardest: really believe in ethics.

Now, a couple of months ago I looked again at these 5 pillars and realised that they parallel good practice in Total Quality Management: something I became very familiar with when I founded and ran a company in the mid 1980s [2].

Slide 6

So, if we replace ethics with quality management, we see a set of key processes which exactly parallel our 5 pillars of good ethical governance, including the underpinning pillar: believe in total quality management.

I believe that good ethical governance needs the kind of corporate paradigm shift that was forced on UK manufacturing industry in the 1970s [3].

Slide 7

In a nutshell, I think ethics is the new quality.

Yes, setting up an ethics board or appointing an AI ethics officer can help, but on their own these are not enough. As with quality, everyone needs to understand and contribute to ethics. Those contributions should be encouraged, valued and acted upon. Nobody should be fired for calling out unethical practices.

Until corporate AI understands this we will, I think, struggle to find companies that practice good ethical governance.

Quality cannot be ‘inspected in’, and nor can ethics.

Thank you.

Notes.

[1] I’m quoting here from the excellent history of British Leyland by Ian Nicholls.

[2] My company did a huge amount of work for Motorola and – as a subcontractor – we became certified software suppliers within their six sigma quality management programme.

[3] It was competitive pressure that forced manufacturing companies in the 1970s to up their game by embracing TQM. Depressingly, the biggest AI companies face no such competitive pressures, which is why regulation is both necessary and inevitable.