#298: Cognitive Robotics Under Uncertainty, with Marlyse Reeves

In this episode, Lilly Clark interviews Marlyse Reeves, PhD student at MIT, about her work in cognitive robotics and hybrid activity-motion planning. Reeves discusses the role of robotics in space, the challenges of multi-vehicle missions, planning under uncertainty, and her work on an underwater exploration mission.

Marlyse Reeves

Marlyse is a third-year PhD student in the Computer Science and Artificial Intelligence Laboratory at MIT. She received her B.S. in Aeronautics and Astronautics from MIT in 2017. Her current research in the Model-based Embedded and Robotic Systems Group focuses on multi-vehicle online planning, incorporating complex dynamics and constraints. She is also interested in risk-aware planning, fault protection and diagnosis, and adaptive sampling. Outside of the lab, she enjoys playing soccer, dancing, and reading science fiction.

Engaging the public in robotics: 11 tips from 5,000 robotics events across Europe

Europe is focussed on making robots that work for the benefit of society. This requires empowering future roboticists and users of all ages and backgrounds. In its 9th edition, the European Robotics Week (#ERW2019) is expected to host more than 1000 events across Europe. Over the years, and over 5,000 events, the organisers have learned a thing or two about reaching the public, and ultimately making the robots people want.

Demystify robotics

For many, robots are only seen in the media or science fiction. The robotics community promises ubiquitous robots, yet most people don’t encounter robots in their work or daily lives. This matters: the Eurobarometer 2017 survey of attitudes towards the impact of digitisation found that the more familiar people are with robots, the more positive they are about the technology. A recent workshop for ERW organisers highlighted the “importance of being able to touch, feel, see and enjoy the presence of robots in order to remove the ‘fear factor’ and improve the image of robots.” People need to interact with real robots to understand their potential and limitations.

Bring robots to public places

Most robotics events happen where roboticists and their robots already are: in universities and industry. This works well for those who are interested in the field and have the means to attend. To reach a broader audience, robots need to be brought to public places, such as city centres or shopping malls. ERW organisers said “don’t expect ordinary people to come to universities.” In Ghent, Belgium, for example, space was found in the city library to give visitors an opportunity to interact with robots. More recently, the Smart Cities Robotics (SciRoc) challenge held an international robot competition in a shopping mall in the UK.

Tackle global challenges

Robots have a role to play in tackling today’s most pressing challenges, whether in the environment, healthcare, assistive living, or education. Robots can also improve efficiency in industry and take over 4D (dangerous, dirty, difficult, drudgerous) jobs. This is rarely highlighted explicitly; robots are often presented as fun gadgets for their own sake instead of as useful tools. By positioning robots as the helpers of tomorrow, we empower users to imagine their applications, and roboticists receive meaningful feedback on their use. Such applications may also appeal to a more diverse range of people.

The ‘Blue-Eyed Dragon’ robot by Biljana Vicković (with the University of Belgrade, Mihajlo Institute, Robotics Laboratory Belgrade, Serbia), for example, introduced an innovative and socially useful robotic artwork with a tin recycling function into a public space. It integrates robotics into an artwork with a demonstrable ecological, social and cultural impact. “The essence of this innovative work of art is that it enables the public to interact with it. As such people are direct participants and not merely an audience. In this way contemplation is replaced by action,” says its creator.

Tell stories about people who work with robots

Useful robots will ultimately be embedded in society, in our work and in our lives. Their role is often presented from the developers’ or industry’s perspective. This leaves the public with the sense that robots are being “done to them”, rather than with them. By bringing users into the discussion, we hear how they use the technology and what their hopes and concerns are. This ultimately helps us design better robots and inspires future users to make use of robots themselves.

Bring a diversity of people together

Making robots requires a wide range of backgrounds, from social sciences, law, and business, to hardware and software engineering. Domain expertise will also be key: assistive robots, for example, will require input from nurse carers. Engaging with a diverse population of makers and users will help ensure the technology is developed for everyone. The ERW2019 central event in Poznan features a panel dedicated to women in digital and robotics.

Carmela Sánchez from Hisparob in Spain says “this year, our motto for ERW is Robotic Thinking and Inclusion. We focus on how robotics and computational thinking can help inclusion: inclusion of different abilities, social, and economic backgrounds, and genders.”

Avoid hype and exaggerations

Inflated expectations about robotics may lead to disappointment when robots are deployed, or to unfounded fears about their use. A recent ERW organiser commented “Robots are not prevalent or visible in society at large and so prevailing perceptions about robots are largely shaped by media presentation, which too often resort to negative stereotypes.” It’s worth noting that robots are typically made for a single task, and many do not look like humanoids. Seen this way, robots no longer seem impossibly difficult to engineer, and are far from science fiction depictions. This could empower those who would like to become roboticists, and could help users imagine robots that would be helpful to them. The Smart Cities Robotics challenge, for example, showed the crowds how robots could help them take a lift, or deliver emergency medicine in a mall.

Teach teachers

By teaching teachers to teach robotics, we can reach many more students than all European Robotics Week events combined. Lia Garcia, founder of Logix5 and a national coordinator of ERW in Spain, underscored the need to engage the education sector: “We have to work with teachers. We need to get robotics onto the school curriculum, onto the teaching college curriculum and to get to teachers who teach teachers.” Workshops that teach educators and help spread the word among local teachers are essential. As an added incentive, teachers could receive CPD (continuing professional development) credits for taking part in robotics workshops. The ERW2019 central event in Poznan features a workshop dedicated to robotics education in Europe on 15 November.

Run competitions

Competitions are an important way of bringing students into robotics. They are fun and exciting, and show students they can build something that works in the real world. Europe now hosts several large robotics competitions, including the European Robotics League (Emergency, Consumer, Professional, and Smart Cities). While these competitions are tailored to university students, others are run for kids. The ERW event page already lists over 100 robot competitions and challenges for this year. Fiorella Operto from Scuola di Robotica has coordinated more than 100 teams from all over Italy committed to using a humanoid robot to promote Italian cultural heritage. The 2020 edition of the NAO Challenge is devoted to “Arts&Cultures”, asking teams to use robotics to improve knowledge of, and promote, beautiful Italian art.

Keep it fun

More than ever, we have a broad range of tools to engage with the public, from something as simple as drawing pictures of robots, to developing robot-themed escape rooms, to engaging on social media including YouTube, Twitter, Instagram and TikTok. Robots are fun, which is why they are such good tools in education. Be creative with demos and activities: make robots dance, let people decorate them, play games. The University of Bristol, for example, will be running a swarm-themed escape room called Swarm Escape!

Engage with stakeholders

Events with the public are a good opportunity to engage with stakeholders, including government, industry, and users. This is important because stakeholders will ultimately be the ones making robots a reality. Taking part in such events helps them understand the potential, invest in technology and skills, and shape policy. Stakeholders could also fund some of the more ambitious events. “For the first time since 2012, Robotics Place, the cluster of Occitanie, organizes a one day meeting with its members on November 20th in Toulouse. Robotics Place members will meet with press, politics, students, partners and professional customers,” says Philippe Roussel, a local coordinator for France.

Act regionally, connect across Europe

Events take place across Europe, organised regionally for the local community. Connecting these events at a European scale increases impact, raises awareness, builds momentum, and allows lessons to be shared across the continent. euRobotics and Digital Innovation Hubs provide valuable resources for these purposes.

Yet there is a divide in access: cities are better served than rural or poorer areas. The challenge is to provide everyone with access and exposure to robotics and its opportunities. Extra effort should be made to reach out to underserved communities, for example with a “robot roadshow”. Organisers of ERW said “a further benefit of this cross-border approach would be to enhance the European dimension.” As an example, from May 2020, a 105 m long floating Science Center called the MS Experimenta will be touring southern Germany, bringing science from port to port.

Get involved

Feeling inspired, ready to make a difference? Organise your own European Robotics Week event, big or small, and register it here along with the over 900 events already announced.

#IROS2019 videos and exhibit floor – update

The 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (#IROS2019) was held in Macau earlier this month. The theme this year was “robots connecting people”.

For those who couldn’t make it in person, or couldn’t possibly see everything, IROS launched IROS TV. You can catch all 28 videos here, or watch the three summary videos below.

And here’s my quick tour of the exhibit floor.

Finally, follow #IROS2019 or @IROS2019MACAU on Twitter.

Did you publish at IROS? Send your stories to me and we’ll get them on Robohub.

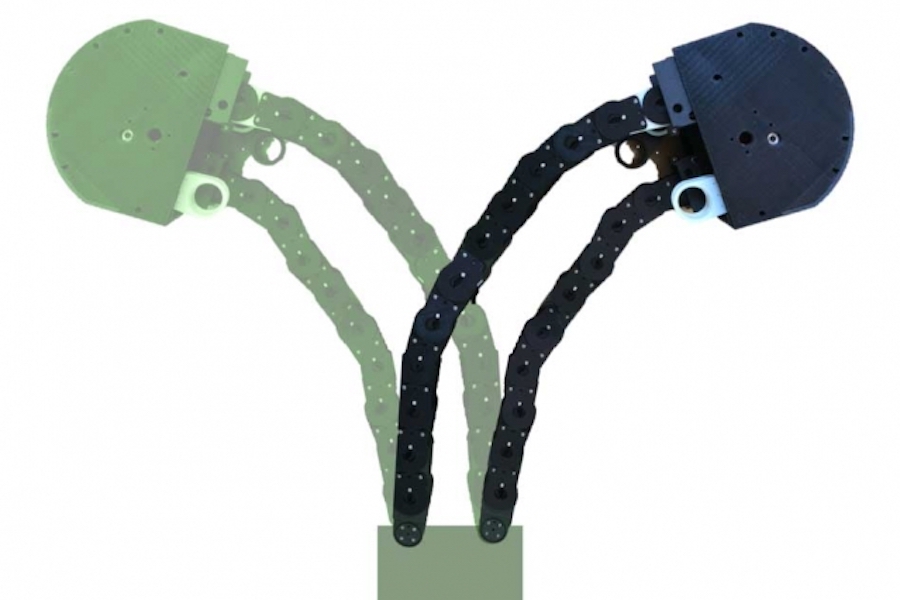

Flexible yet sturdy robot is designed to “grow” like a plant

The new “growing robot” can be programmed to grow, or extend, in different directions, based on the sequence of chain units that are locked and fed out from the “growing tip,” or gearbox.

Image courtesy of researchers, edited by MIT News

In today’s factories and warehouses, it’s not uncommon to see robots whizzing about, shuttling items or tools from one station to another. For the most part, robots navigate pretty easily across open layouts. But they have a much harder time winding through narrow spaces to carry out tasks such as reaching for a product at the back of a cluttered shelf, or snaking around a car’s engine parts to unscrew an oil cap.

Now MIT engineers have developed a robot designed to extend a chain-like appendage flexible enough to twist and turn in any necessary configuration, yet rigid enough to support heavy loads or apply torque to assemble parts in tight spaces. When the task is complete, the robot can retract the appendage and extend it again, at a different length and shape, to suit the next task.

The appendage design is inspired by the way plants grow, which involves the transport of nutrients, in a fluidized form, up to the plant’s tip. There, they are converted into solid material to produce, bit by bit, a supportive stem.

Likewise, the robot consists of a “growing point,” or gearbox, that pulls a loose chain of interlocking blocks into the box. Gears in the box then lock the chain units together and feed the chain out, unit by unit, as a rigid appendage.

The researchers presented the plant-inspired “growing robot” this week at the IEEE International Conference on Intelligent Robots and Systems (IROS) in Macau. They envision that grippers, cameras, and other sensors could be mounted onto the robot’s gearbox, enabling it to meander through an aircraft’s propulsion system and tighten a loose screw, or to reach into a shelf and grab a product without disturbing the organization of surrounding inventory, among other tasks.

“Think about changing the oil in your car,” says Harry Asada, professor of mechanical engineering at MIT. “After you open the engine roof, you have to be flexible enough to make sharp turns, left and right, to get to the oil filter, and then you have to be strong enough to twist the oil filter cap to remove it.”

“Now we have a robot that can potentially accomplish such tasks,” says Tongxi Yan, a former graduate student in Asada’s lab, who led the work. “It can grow, retract, and grow again to a different shape, to adapt to its environment.”

The team also includes MIT graduate student Emily Kamienski and visiting scholar Seiichi Teshigawara, who presented the results at the conference.

The last foot

The design of the new robot is an offshoot of Asada’s work in addressing the “last one-foot problem” — an engineering term referring to the last step, or foot, of a robot’s task or exploratory mission. While a robot may spend most of its time traversing open space, the last foot of its mission may involve more nimble navigation through tighter, more complex spaces to complete a task.

Engineers have devised various concepts and prototypes to address the last one-foot problem, including robots made from soft, balloon-like materials that grow like vines to squeeze through narrow crevices. But Asada says such soft extendable robots aren’t sturdy enough to support “end effectors,” or add-ons such as grippers, cameras, and other sensors that would be necessary in carrying out a task, once the robot has wormed its way to its destination.

“Our solution is not actually soft, but a clever use of rigid materials,” says Asada, who is the Ford Foundation Professor of Engineering.

Chain links

Once the team had defined the general functional elements of plant growth, they set out to mimic them, in a general sense, in an extendable robot.

“The realization of the robot is totally different from a real plant, but it exhibits the same kind of functionality, at a certain abstract level,” Asada says.

The researchers designed a gearbox to represent the robot’s “growing tip,” akin to the bud of a plant, where, as more nutrients flow up to the site, the tip feeds out more rigid stem. Within the box, they fit a system of gears and motors, which works to pull up a fluidized material — in this case, a bendy sequence of 3-D-printed plastic units interlocked with each other, similar to a bicycle chain.

As the chain is fed into the box, it turns around a winch, which feeds it through a second set of motors programmed to lock certain units in the chain to their neighboring units, creating a rigid appendage as it is fed out of the box.

The researchers can program the robot to lock certain units together while leaving others unlocked, to form specific shapes, or to “grow” in certain directions. In experiments, they were able to program the robot to turn around an obstacle as it extended or grew out from its base.
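The lock-and-feed idea can be illustrated with a toy 2-D model. The Python sketch below assumes, purely for illustration, that every unit leaving the gearbox is locked either straight or at a fixed bend, and that each unit advances the tip by a fixed length. `grow`, `retract`, `UNIT_LENGTH`, and `BEND_ANGLE` are hypothetical names and values, not the MIT team’s controller or the prototype’s dimensions.

```python
import math

# Hypothetical, simplified 2-D model of the growing robot; the unit length
# and the fixed joint angle are illustrative assumptions, not the prototype's.
UNIT_LENGTH = 1.0               # distance the tip advances per fed-out unit
BEND_ANGLE = math.radians(30)   # heading change when a unit is locked bent

# Each command says how the next unit is locked to its neighbor:
# "S" = locked straight, "L" = locked bent left, "R" = locked bent right.
TURNS = {"S": 0.0, "L": BEND_ANGLE, "R": -BEND_ANGLE}


def grow(commands):
    """Feed out one unit per command; return the tip position after each unit."""
    x, y, heading = 0.0, 0.0, 0.0
    path = [(x, y)]
    for cmd in commands:
        heading += TURNS[cmd]  # the lock pattern sets the new direction
        x += UNIT_LENGTH * math.cos(heading)
        y += UNIT_LENGTH * math.sin(heading)
        path.append((round(x, 3), round(y, 3)))
    return path


def retract(path, n_units):
    """Pull n_units back into the gearbox; the base point always remains."""
    return path[: max(1, len(path) - n_units)]


if __name__ == "__main__":
    print(grow("SSS"))               # straight reach
    detour = grow("SLLLRRRS")        # turn upward around an obstacle, then level off
    print(detour[-1])
    print(retract(detour, 3)[-1])    # partially retracted, ready to regrow
```

Retracting and then calling `grow` with a different command string mirrors how the robot can pull its appendage back in and extend it again in a new shape for the next task.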

“It can be locked in different places to be curved in different ways, and have a wide range of motions,” Yan says.

When the chain is locked and rigid, it is strong enough to support a heavy, one-pound weight. If a gripper were attached to the robot’s growing tip, or gearbox, the researchers say the robot could potentially grow long enough to meander through a narrow space, then apply enough torque to loosen a bolt or unscrew a cap.

Auto maintenance is a good example of the tasks the robot could assist with, according to Kamienski. “The space under the hood is relatively open, but it’s that last bit where you have to navigate around an engine block or something to get to the oil filter, that a fixed arm wouldn’t be able to navigate around. This robot could do something like that.”

This research was funded, in part, by NSK Ltd.

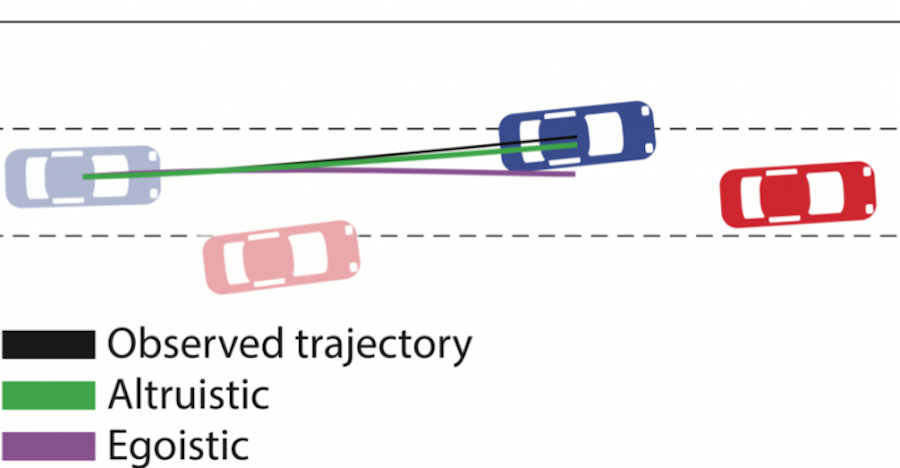

Predicting people’s driving personalities

Image courtesy of the researchers.

Self-driving cars are coming. But for all their fancy sensors and intricate data-crunching abilities, even the most cutting-edge cars lack something that (almost) every 16-year-old with a learner’s permit has: social awareness.

While autonomous technologies have improved substantially, they still ultimately view the drivers around them as obstacles made up of ones and zeros, rather than human beings with specific intentions, motivations, and personalities.

But recently a team led by researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has been exploring whether self-driving cars can be programmed to classify the social personalities of other drivers, so that they can better predict what different cars will do — and, therefore, be able to drive more safely among them.

In a new paper, the scientists integrated tools from social psychology to classify driving behavior with respect to how selfish or selfless a particular driver is.

Specifically, they used something called social value orientation (SVO), which represents the degree to which someone is selfish (“egoistic”) versus altruistic or cooperative (“prosocial”). The system then estimates drivers’ SVOs to create real-time driving trajectories for self-driving cars.
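To make SVO concrete, here is a minimal Python sketch of one common way to express it in the SVO literature: an angle φ that weights a driver’s own reward by cos φ and the other driver’s reward by sin φ. The function names, the reward numbers, and the 22.5°/67.5° category cut-offs are illustrative assumptions, not the CSAIL team’s code.

```python
import math


def svo_utility(own_reward, other_reward, phi_deg):
    """Utility of a driver whose social value orientation is phi_deg.

    Assumed weighting: cos(phi) * own reward + sin(phi) * other's reward.
    phi = 0 deg cares only about itself (egoistic), phi = 45 deg weights
    both equally, and phi = 90 deg cares only about the other driver.
    """
    phi = math.radians(phi_deg)
    return math.cos(phi) * own_reward + math.sin(phi) * other_reward


def svo_category(phi_deg):
    """Coarse labels; the 22.5/67.5 deg cut-offs are illustrative assumptions."""
    if phi_deg < 22.5:
        return "egoistic"
    if phi_deg < 67.5:
        return "cooperative"
    return "altruistic"


def is_prosocial(phi_deg):
    # Following the article, cooperative and altruistic are grouped as prosocial.
    return svo_category(phi_deg) != "egoistic"


if __name__ == "__main__":
    # Merging example with hypothetical payoffs: blocking the merge earns the
    # driver 1.0 and the other car 0.0; yielding earns 0.6 and 1.0.
    for phi in (0, 45, 90):
        block = svo_utility(1.0, 0.0, phi)
        yield_ = svo_utility(0.6, 1.0, phi)
        choice = "yield" if yield_ > block else "block"
        print(phi, svo_category(phi), choice)
```

A single angle is convenient because it places every driver on one continuum between caring for themselves and caring for others, which matches how the article describes SVO scores.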

Testing their algorithm on the tasks of merging lanes and making unprotected left turns, the team showed that they could predict the behavior of other cars 25 percent better. For example, in the left-turn simulations their car knew to wait when the approaching car had a more egoistic driver, and to make the turn when the other car was more prosocial.

While not yet robust enough to be implemented on real roads, the system could have some intriguing use cases, and not just for the cars that drive themselves. Say you’re a human driving along and a car suddenly enters your blind spot — the system could give you a warning in the rear-view mirror that the car has an aggressive driver, allowing you to adjust accordingly. It could also allow self-driving cars to actually learn to exhibit more human-like behavior that will be easier for human drivers to understand.

“Working with and around humans means figuring out their intentions to better understand their behavior,” says graduate student Wilko Schwarting, who was lead author on the new paper that will be published this week in the latest issue of the Proceedings of the National Academy of Sciences. “People’s tendencies to be collaborative or competitive often spills over into how they behave as drivers. In this paper, we sought to understand if this was something we could actually quantify.”

Schwarting’s co-authors include MIT professors Sertac Karaman and Daniela Rus, as well as research scientist Alyssa Pierson and former CSAIL postdoc Javier Alonso-Mora.

A central issue with today’s self-driving cars is that they’re programmed to assume that all humans act the same way. This means that, among other things, they’re quite conservative in their decision-making at four-way stops and other intersections.

While this caution reduces the chance of fatal accidents, it also creates bottlenecks that can frustrate other drivers, not to mention confuse them. (This may be why the majority of traffic incidents involving self-driving cars have involved them getting rear-ended by impatient drivers.)

“Creating more human-like behavior in autonomous vehicles (AVs) is fundamental for the safety of passengers and surrounding vehicles, since behaving in a predictable manner enables humans to understand and appropriately respond to the AV’s actions,” says Schwarting.

To try to expand the car’s social awareness, the CSAIL team combined methods from social psychology with game theory, a theoretical framework for conceiving social situations among competing players.

The team modeled road scenarios where each driver tried to maximize their own utility, and analyzed their “best responses” given the decisions of all other agents. Based on a small snippet of motion from other cars, the team’s algorithm could then predict the surrounding cars’ behavior as cooperative, altruistic, or egoistic, grouping the first two as “prosocial.” People’s scores for these qualities lie on a continuum reflecting how much a person cares for themselves versus for others.

In the merging and left-turn scenarios, the two outcome options were to either let somebody merge into your lane (“prosocial”) or not (“egoistic”). The team’s results showed that, not surprisingly, merging cars are deemed more competitive than non-merging cars.
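The merge decision can be turned into a toy SVO estimator. The Python sketch below is a deliberately simplified stand-in, not the paper’s game-theoretic method: it assumes a “noisily rational” softmax choice between the two outcomes, uses made-up payoffs, and updates a belief over a grid of candidate SVO angles with Bayes’ rule after one observed action. The payoffs, the `rationality` parameter, and the `should_wait` threshold (echoing the left-turn example) are all hypothetical.

```python
import math

# Hypothetical payoffs (own reward, other car's reward) for a driver already
# in the lane when another car tries to merge in front of it.
ACTIONS = {
    "yield": (0.6, 1.0),  # lose a little time; the merging car gets in
    "keep": (1.0, 0.0),   # hold speed; the merging car is blocked
}
CANDIDATE_PHIS = [0, 15, 30, 45, 60, 75, 90]  # SVO angles, in degrees


def utility(action, phi_deg):
    own, other = ACTIONS[action]
    phi = math.radians(phi_deg)
    return math.cos(phi) * own + math.sin(phi) * other


def action_probs(phi_deg, rationality=5.0):
    """Softmax (noisily rational) best response: higher utility, likelier action."""
    weights = {a: math.exp(rationality * utility(a, phi_deg)) for a in ACTIONS}
    total = sum(weights.values())
    return {a: w / total for a, w in weights.items()}


def update_belief(belief, observed_action):
    """Bayes' rule over candidate SVO angles after observing one action."""
    posterior = {phi: p * action_probs(phi)[observed_action]
                 for phi, p in belief.items()}
    total = sum(posterior.values())
    return {phi: p / total for phi, p in posterior.items()}


def expected_svo(belief):
    return sum(phi * p for phi, p in belief.items())


def should_wait(belief, egoistic_below_deg=22.5):
    """Hypothetical left-turn rule: wait if the other driver looks egoistic."""
    return expected_svo(belief) < egoistic_below_deg


if __name__ == "__main__":
    prior = {phi: 1 / len(CANDIDATE_PHIS) for phi in CANDIDATE_PHIS}
    for seen in ("keep", "yield"):
        belief = update_belief(prior, seen)
        print(seen, round(expected_svo(belief), 1), should_wait(belief))
```

Observing a driver refuse the merge shifts the belief toward egoistic angles, so the sketch would wait; observing a yield shifts it toward prosocial angles. The softmax model is used here because it tolerates imperfect human choices instead of assuming every driver always plays the exact best response.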

The system was trained to try to better understand when it’s appropriate to exhibit different behaviors. For example, even the most deferential of human drivers knows that certain types of actions — like making a lane change in heavy traffic — require a moment of being more assertive and decisive.

For the next phase of the research, the team plans to apply its model to pedestrians, bicycles, and other agents in driving environments. In addition, they will be investigating other robotic systems acting among humans, such as household robots, and integrating SVO into their prediction and decision-making algorithms. Pierson says that the ability to estimate SVO distributions directly from observed motion, instead of in laboratory conditions, will be important for fields far beyond autonomous driving.

“By modeling driving personalities and incorporating the models mathematically using the SVO in the decision-making module of a robot car, this work opens the door to safer and more seamless road-sharing between human-driven and robot-driven cars,” says Rus.

The Toyota Research Institute supported the research for the MIT team. The Netherlands Organization for Scientific Research provided support specifically for Alonso-Mora’s participation.