Novel 3-D printing method embeds sensing capabilities within robotic actuators

Modular-based Systems for Defense Drones

U.T.SEC: UAS Will Change Our Lives Fundamentally – Including in the Security Field

Lack of Machine Guarding Again Named to OSHA’s Top 10 Most Cited Violations List

Designers envision robots helping chronically ill children

The Machines Are Taking Over Space

Humanoid robot supports emergency response teams

Robots in Depth with Henrik Christensen

In this episode of Robots in Depth, Per Sjöborg speaks with Henrik Christensen, the Qualcomm Chancellor’s Chair of Robot Systems and a Professor of Computer Science in the Department of Computer Science and Engineering at UC San Diego. He is also the director of the Institute for Contextual Robotics. Prior to UC San Diego, he was the founding director of the Institute for Robotics and Intelligent Machines (IRIM) at Georgia Institute of Technology (2006-2016).

Christensen shares stories from his life in European robotics research, his views on the robot revolution, and experience developing robotics roadmaps.

IDS NXT – Novel Vision app-based sensors and cameras

New brain computer interfaces lead many to ask, is Black Mirror real?

It’s called the “grain,” a small IoT device implanted into the back of people’s skulls to record their memories. Human experiences are simply played back on “redo mode” using a smart button remote. The technology promises to reduce crime and terrorism and to simplify human relationships through greater transparency. While this is a description of Netflix’s Black Mirror episode, “The Entire History of You,” in reality the concept is not as far-fetched as it may seem. This week life came closer to imitating art with a $19 million grant from the US Department of Defense to a group of six universities to begin work on “neurograins.”

In the past, Brain-Computer Interfaces (BCIs) have relied on wearable technologies, such as headbands and helmets, to let people with severe disabilities control robots, machines and various household appliances. This new DARPA grant is focused on developing a “cortical intranet” for uplinks and downlinks directly to the cerebral cortex, potentially taking mind control to the next level. According to lead researcher Arto Nurmikko of Brown University, “What we’re developing is essentially a micro-scale wireless network in the brain enabling us to communicate directly with neurons on a scale that hasn’t previously been possible.”

Nurmikko points to the numerous medical outcomes of the research: “The understanding of the brain we can get from such a system will hopefully lead to new therapeutic strategies involving neural stimulation of the brain, which we can implement with this new neurotechnology.” The technology being developed by Nurmikko’s international team is intended to create a wireless neural communication platform that can record and stimulate brain activity at an unprecedented level of detail and precision. This will be accomplished by implanting a mesh network of tens of thousands of granular micro-devices into a person’s cranium. Surgeons will place this layer of neurograins around the cerebral cortex, where it will be controlled by a nearby electronic patch just below the person’s skin.

In describing how it will work, Nurmikko explains, “We aim to be able to read out from the brain how it processes, for example, the difference between touching a smooth, soft surface and a rough, hard one and then apply microscale electrical stimulation directly to the brain to create proxies of such sensation. Similarly, we aim to advance our understanding of how the brain processes and makes sense of the many complex sounds we listen to every day, which guide our vocal communication in a conversation and stimulate the brain to directly experience such sounds.”

Nurmikko further describes, “We need to make the neurograins small enough to be minimally invasive but with extraordinary technical sophistication, which will require state-of-the-art microscale semiconductor technology. Additionally, we have the challenge of developing the wireless external hub that can process the signals generated by large populations of spatially distributed neurograins at the same time.”

While current BCIs are able to process the activity of 100 neurons at once, Nurmikko’s objective is to work at a level of 100,000 simultaneous inputs. “When you increase the number of neurons tenfold, you increase the amount of data you need to manage by much more than that because the brain operates through nested and interconnected circuits,” Nurmikko remarks. “So this becomes an enormous big data problem for which we’ll need to develop new computational neuroscience tools.” The researchers plan to first test their theory in the sensory and auditory functions of mammals.
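One rough way to see why the data grows faster than the neuron count is to count possible pairwise links between recorded neurons. This is only an illustrative proxy, not the researchers’ model: the function name `pairwise_links` is hypothetical, and real neural circuits are neither fully nor only pairwise connected.

```python
def pairwise_links(neurons: int) -> int:
    """Number of distinct neuron pairs among `neurons` recorded inputs.

    Assumption: pair count is used here as a crude stand-in for
    "interconnected circuits"; it is not taken from the research itself.
    """
    return neurons * (neurons - 1) // 2


# Tenfold more neurons gives roughly a hundredfold more pairs.
small = pairwise_links(100)      # 4950
large = pairwise_links(1_000)    # 499500
print(large / small)             # about 100.9

# At the stated target of 100,000 simultaneous inputs:
print(pairwise_links(100_000))   # 4999950000
```

Under this simplified assumption, each tenfold increase in inputs multiplies the number of relationships to track by about a hundred.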

Brain-computer interfaces are one of the fastest-growing areas of healthcare technology; the market is valued at just under a billion dollars today and is forecast to grow to $2 billion in the next five years. According to the forecast, the uptick will be driven by an expected increase in the treatment of age-related conditions, fatal diseases and disabilities. The funder of Nurmikko’s project is DARPA’s Neural Engineering System Design program, which was formed to treat injured military personnel by “creating novel hardware and algorithms to understand how various forms of neural sensing and actuation might improve restorative therapeutic outcomes.” While DARPA’s project may yield discoveries that improve the quality of life for society’s most vulnerable, it also opens a Pandora’s box of ethical issues with the prospect of the US military potentially funding armies of cyborgs.

In response to rising ethical concerns, last month ethicists from the University of Basel in Switzerland drafted a new biosecurity framework for research in neurotechnology. The biggest concern expressed in the report was the implementation of “dual-use” technologies that have both military and medical benefits. The ethicists called for a complete ban on such innovations and strongly recommended fast tracking regulations to protect “the mental privacy and integrity of humans.”

The ethicists raise important questions about taking grant money from groups like DARPA: “This military research has raised concern about the risks associated with the weaponization of neurotechnology, sparking a debate about controversial questions: Is it legitimate to conduct military research on brain technology? And how should policy-makers regulate dual-use neurotechnology?” The suggested framework reads like a science fiction novel: “This has resulted in a rapid growth in brain technology prototypes aimed at modulating the emotions, cognition, and behavior of soldiers. These include neurotechnological applications for deception detection and interrogation as well as brain-computer interfaces for military purposes.” It is also a reminder that the development of BCIs is moving faster than public policy can debate its merits.

The framework’s lead author, Marcello Ienca of Basel’s Institute for Biomedical Ethics, understands the tremendous benefits of BCIs for a global aging population, especially for people suffering from Alzheimer’s and spinal cord injuries. In fact, the Swiss team calls for increased private investment in these neurotechnologies, not an outright prohibition. At the same time, Ienca stresses that in order to protect against misuse, such as brain manipulation by nefarious global actors, it is critical to raise awareness of and debate about the ethical issues of implanting neurograins into populations of humans. In an interview with the Guardian last year, Ienca summed up his concern succinctly: “The information in our brains should be entitled to special protections in this era of ever-evolving technology. When that goes, everything goes.”

In the spirit of open debate, our next RobotLab forum, “The Future of Robotic Medicine,” will feature Dr. Joel Stein of Columbia University and Kate Merton of JLabs on March 6th at 6pm in New York City.

How robot math and smartphones led researchers to a drug discovery breakthrough

By Ian Haydon, University of Washington

Robotic movement can be awkward.

For us humans, a healthy brain handles all the minute details of bodily motion without demanding conscious attention. Not so for brainless robots – in fact, calculating robotic movement is its own scientific subfield.

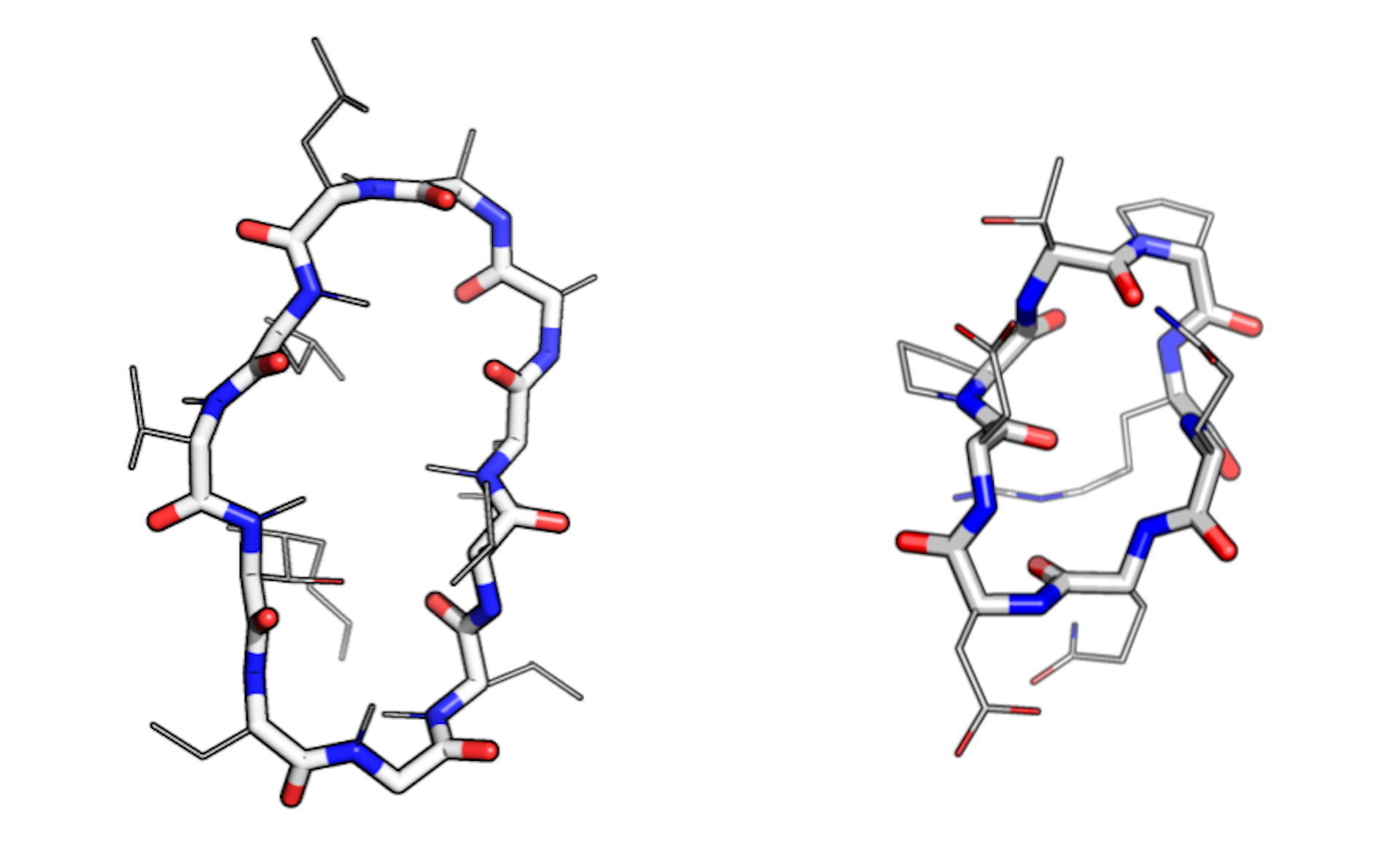

My colleagues here at the University of Washington’s Institute for Protein Design have figured out how to apply an algorithm originally designed to help robots move to an entirely different problem: drug discovery. The algorithm has helped unlock a class of molecules known as peptide macrocycles, which have appealing pharmaceutical properties.

One small step, one giant leap

Roboticists who program movement conceive of it in what they call “degrees of freedom.” Take a metal arm, for instance. The elbow, wrist and knuckles are movable and thus contain degrees of freedom. The forearm, upper arm and individual sections of each finger do not. If you want to program an android to reach out and grasp an object or take a calculated step, you need to know what its degrees of freedom are and how to manipulate them.

The more degrees of freedom a limb has, the more complex its potential motions. The math required to direct even simple robotic limbs is surprisingly abstruse; Ferdinand Freudenstein, a father of the field, once called the calculations underlying the movement of a limb with seven joints “the Mount Everest of kinematics.”
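To make “degrees of freedom” concrete, here is a minimal sketch of forward kinematics for a flat (2-D) arm, where each joint angle is one degree of freedom. The function name and the planar simplification are assumptions for illustration; real robot arms move in three dimensions and need more elaborate math.

```python
import math


def forward_kinematics(link_lengths, joint_angles):
    """Return the (x, y) position of the tip of a planar arm.

    Each joint angle (in radians) is measured relative to the previous
    link, so every joint contributes one degree of freedom.
    """
    x = y = 0.0
    heading = 0.0  # absolute direction of the current link
    for length, angle in zip(link_lengths, joint_angles):
        heading += angle
        x += length * math.cos(heading)
        y += length * math.sin(heading)
    return x, y


# Two links of length 1: a straight arm reaches (2, 0) ...
print(forward_kinematics([1.0, 1.0], [0.0, 0.0]))
# ... while bending the "shoulder" up and the "elbow" back puts the tip near (1, 1).
print(forward_kinematics([1.0, 1.0], [math.pi / 2, -math.pi / 2]))
```

Going from joint angles to a tip position is the easy direction. The hard problem the article describes runs the other way: finding joint angles that put the tip where you want it.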

Freudenstein developed his kinematics equations at the dawn of the computer era in the 1950s. Since then, roboticists have increasingly relied on algorithms to solve these complex kinematic puzzles. One algorithm in particular – known as “generalized kinematic closure” – bested the seven-joint problem, allowing roboticists to program fine control into mechanical hands.

Molecular biologists took notice.

Many molecules inside living cells can be conceived of as chains with pivot points, or degrees of freedom, akin to tiny robotic arms. These molecules flex and twist according to the laws of chemistry. Peptides and their elongated cousins, proteins, often must adopt precise three-dimensional shapes in order to function. Accurately predicting the complex shapes of peptides and proteins allows scientists like me to understand how they work.

Mastering macrocycles

While most peptides form straight chains, a subset, known as macrocycles, form rings. This shape offers distinct pharmacological advantages. Ringed structures are less flexible than floppy chains, making macrocycles extremely stable. And because they lack free ends, some can resist rapid degradation in the body – an otherwise common fate for ingested peptides.

Natural macrocycles such as cyclosporin are among the most potent therapeutics identified to date. They combine the stability benefits of small-molecule drugs, like aspirin, and the specificity of large antibody therapeutics, like Herceptin. Experts in the pharmaceutical industry regard this category of medicinal compounds as “attractive, albeit underappreciated.”

“There is a huge diversity of macrocycles in nature – in bacteria, plants, some mammals,” said Gaurav Bhardwaj, a lead author of the new report in Science, “and nature has evolved them for their own particular functions.” Indeed, many natural macrocycles are toxins. Cyclosporin, for instance, displays anti-fungal activity yet also acts as a powerful immunosuppressant in the clinic, making it useful as a treatment for rheumatoid arthritis or to prevent rejection of transplanted organs.

A popular strategy for producing new macrocycle drugs involves grafting medicinally useful features onto otherwise safe and stable natural macrocycle backbones. “When it works, it works really well, but there’s a limited number of well-characterized structures that we can confidently use,” said Bhardwaj. In other words, drug designers have only had access to a handful of starting points when making new macrocycle medications.

To create additional reliable starting points, his team used generalized kinematic closure – the robot joint algorithm – to explore the possible conformations, or shapes, that macrocycles can adopt.
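The core idea of closing a chain into a ring can be sketched in a toy 2-D setting: pick most of the link directions at random, then solve the last two analytically so the chain returns to its starting point. This is not the team’s generalized kinematic closure code, which works on real three-dimensional molecular geometry. The function `close_ring`, the unit-length links and the planar setup are all assumptions made for illustration.

```python
import math
import random


def close_ring(n_links, rng, max_tries=1000):
    """Sample a closed planar ring made of `n_links` unit-length links.

    The first n_links - 2 link directions are sampled freely; the final
    two links are then solved geometrically so the ring closes exactly.
    Returns the list of vertex coordinates, starting at the origin.
    """
    for _ in range(max_tries):
        angles = [rng.uniform(-math.pi, math.pi) for _ in range(n_links - 2)]
        vertices = [(0.0, 0.0)]
        x = y = 0.0
        for a in angles:
            x += math.cos(a)
            y += math.sin(a)
            vertices.append((x, y))
        d = math.hypot(x, y)  # gap the last two links must bridge
        if d > 2.0 or d < 1e-9:
            continue  # two unit links cannot close this gap; resample
        # The missing vertex lies on the perpendicular bisector of the gap,
        # at distance 1 from both the chain end and the origin.
        h = math.sqrt(max(0.0, 1.0 - (d / 2.0) ** 2))
        px, py = -y / d, x / d  # unit vector perpendicular to the gap
        vertices.append((x / 2.0 + h * px, y / 2.0 + h * py))
        return vertices
    raise RuntimeError("no closed conformation found")


ring = close_ring(7, random.Random(0))
for i, (vx, vy) in enumerate(ring):
    print(i, round(vx, 3), round(vy, 3))
```

Because closure is solved exactly rather than found by trial and error, every accepted sample is a valid ring, which hints at why this style of algorithm can sample conformations efficiently.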

Adaptable algorithms

As with keys, the exact shape of a macrocycle matters. Build one with the right conformation and you may unlock a new cure.

Modeling realistic conformations is “one of the hardest parts” of macrocycle design, according to Vikram Mulligan, another lead author of the report. But thanks to the efficiency of the robotics-inspired algorithm, the team was able to achieve “near-exhaustive sampling” of plausible conformations at “relatively low computational cost.”

The calculations were so efficient, in fact, that most of the work did not require a supercomputer, as is usually the case in the field of molecular engineering. Instead, thousands of smartphones belonging to volunteers were networked together to form a distributed computing grid, and the scientific calculations were doled out in manageable chunks.
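Doling out work in manageable chunks can be pictured with a small sketch like the one below. It is a generic illustration, not the project’s actual distributed computing software; the function name `split_into_work_units` and the unit size are hypothetical.

```python
def split_into_work_units(jobs, unit_size):
    """Split a list of jobs into consecutive chunks of at most `unit_size`.

    Each chunk stands for a work unit small enough to send to one
    volunteer device (an assumption made for this illustration).
    """
    if unit_size < 1:
        raise ValueError("unit_size must be at least 1")
    return [jobs[i:i + unit_size] for i in range(0, len(jobs), unit_size)]


# Ten hypothetical conformation-sampling jobs, four per device.
units = split_into_work_units(list(range(10)), 4)
print(units)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```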

With the initial smartphone number crunching complete, the team pored over the results – a collection of hundreds of never-before-seen macrocycles. When a dozen such compounds were chemically synthesized in the lab, nine were shown to actually adopt the predicted conformation. In other words, the smartphones were accurately rendering molecules that scientists can now optimize for their potential as targeted drugs.

The team estimates the number of macrocycles that can confidently be used as starting points for drug design has jumped from fewer than 10 to over 200, thanks to this work. Many of the newly designed macrocycles contain chemical features that have never been seen in biology.

To date, macrocyclic peptide drugs have shown promise in battling cancer, cardiovascular disease, inflammation and infection. Thanks to the mathematics of robotics, a few smartphones and some cross-disciplinary thinking, patients may soon see even more benefits from this promising class of molecules.

Ian Haydon, Doctoral Student in Biochemistry, University of Washington

This article was originally published on The Conversation. Read the original article.

New Horizon 2020 robotics projects: ROSIN

In 2016, the European Union co-funded 17 new robotics projects from the Horizon 2020 Framework Programme for research and innovation. 16 of these resulted from the robotics work programme, and 1 project resulted from the Societal Challenges part of Horizon 2020. The robotics work programme implements the robotics strategy developed by SPARC, the Public-Private Partnership for Robotics in Europe (see the Strategic Research Agenda).

EuRobotics regularly publishes video interviews with projects, so that you can find out more about their activities. You can also see many of these projects at the upcoming European Robotics Forum (ERF) in Tampere, Finland, on March 13-15.

This week features ROSIN: ROS-Industrial quality-assured robot software.

Objectives

Make ROS-Industrial the open-source industrial standard for intelligent industrial robots, and put Europe in a leading position within this global initiative.

Presently, potential users are waiting for improved quality and quantity of ROS-Industrial components, but both can improve only when more parties contribute and use ROS-Industrial. We will apply European funding to address both sides of this stalemate:

- improving the availability of high-quality components, through Focused Technical Projects and software quality assurance.

- increasing the community size, until ROS becomes self-sustaining as an industrial standard, through an education program and dissemination.

Expected Impact

ROSIN will propel the open-source robot software project ROS-Industrial beyond the critical mass required for further autonomous growth. As a result, it will become a widely adopted standard for industrial intelligent robot software components, e.g. for 3D perception and motion planning. System integrators, software companies, and robot producers will use the open-source framework and the rich choice in libraries to build their own closed-source derivatives which they will sell and for which they will provide support to industrial customers.

Partners

TECHNISCHE UNIVERSITEIT DELFT (TU Delft)

FRAUNHOFER GESELLSCHAFT ZUR FOERDERUNG DER ANGEWANDTEN FORSCHUNG E.V. (FHG)

IT-UNIVERSITETET I KOBENHAVN (ITU)

FACHHOCHSCHULE AACHEN (FHA)

FUNDACION TECNALIA RESEARCH & INNOVATION (TECNALIA)

ABB AB (ABB AB)

Coordinator: Prof. Martijn Wisse

Contact: Dr. Carlos Hernandez

Delft University of Technology

Project website: www.rosin-project.eu

Robo-picker grasps and packs

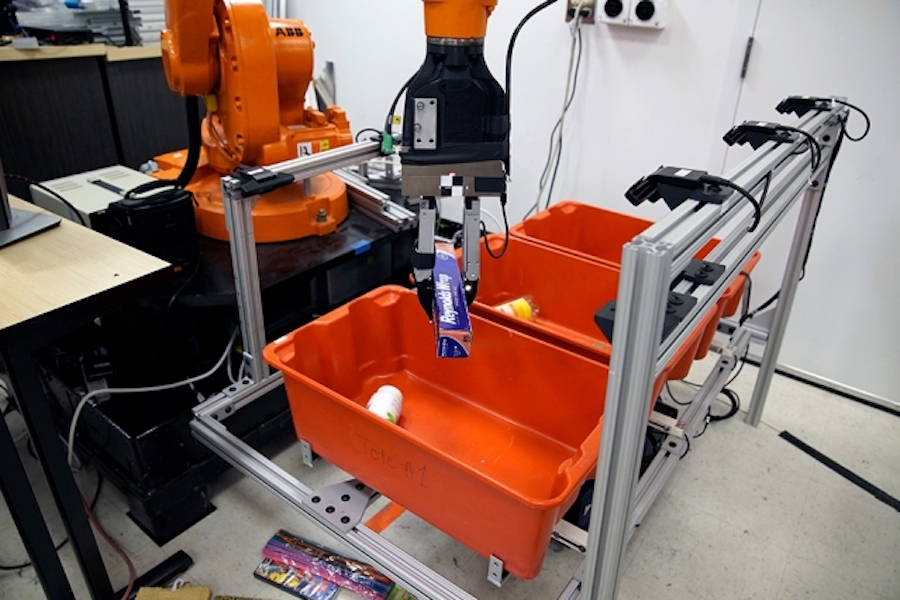

Image: Melanie Gonick/MIT

Unpacking groceries is a straightforward albeit tedious task: You reach into a bag, feel around for an item, and pull it out. A quick glance will tell you what the item is and where it should be stored.

Now engineers from MIT and Princeton University have developed a robotic system that may one day lend a hand with this household chore, as well as assist in other picking and sorting tasks, from organizing products in a warehouse to clearing debris from a disaster zone.

The team’s “pick-and-place” system consists of a standard industrial robotic arm that the researchers outfitted with a custom gripper and suction cup. They developed an “object-agnostic” grasping algorithm that enables the robot to assess a bin of random objects and determine the best way to grip or suction onto an item amid the clutter, without having to know anything about the object before picking it up.

Once it has successfully grasped an item, the robot lifts it out from the bin. A set of cameras then takes images of the object from various angles, and with the help of a new image-matching algorithm the robot can compare the images of the picked object with a library of other images to find the closest match. In this way, the robot identifies the object, then stows it away in a separate bin.

In general, the robot follows a “grasp-first-then-recognize” workflow, which turns out to be an effective sequence compared to other pick-and-place technologies.
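The recognition half of this workflow, comparing views of the grasped object against a library and taking the closest match, can be sketched as follows. This is a simplified stand-in, not the team’s image-matching algorithm: it assumes each image has already been reduced to a short feature vector, and the names `cosine_similarity` and `identify` and the sample library are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def identify(observed_views, library):
    """Return (label, score) of the library entry closest to any view.

    `observed_views` holds feature vectors from several camera angles;
    `library` maps a product label to its reference feature vectors.
    """
    best_label, best_score = None, -1.0
    for label, references in library.items():
        for view in observed_views:
            for ref in references:
                score = cosine_similarity(view, ref)
                if score > best_score:
                    best_label, best_score = label, score
    return best_label, best_score


# Toy library with made-up three-number "features" per product image.
library = {
    "duct_tape": [[1.0, 0.0, 0.0]],
    "masking_tape": [[0.0, 1.0, 0.0]],
}
label, score = identify([[0.9, 0.1, 0.0], [0.8, 0.3, 0.1]], library)
print(label)
```

Using several views helps because one camera angle may hide the features that distinguish similar products.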

“This can be applied to warehouse sorting, but also may be used to pick things from your kitchen cabinet or clear debris after an accident. There are many situations where picking technologies could have an impact,” says Alberto Rodriguez, the Walter Henry Gale Career Development Professor in Mechanical Engineering at MIT.

Rodriguez and his colleagues at MIT and Princeton will present a paper detailing their system at the IEEE International Conference on Robotics and Automation, in May.

Building a library of successes and failures

While pick-and-place technologies may have many uses, existing systems are typically designed to function only in tightly controlled environments.

Today, most industrial picking robots are designed for one specific, repetitive task, such as gripping a car part off an assembly line, always in the same, carefully calibrated orientation. However, Rodriguez is working to design robots as more flexible, adaptable, and intelligent pickers, for unstructured settings such as retail warehouses, where a picker may consistently encounter and have to sort hundreds, if not thousands, of novel objects each day, often amid dense clutter.

The team’s design is based on two general operations: picking — the act of successfully grasping an object, and perceiving — the ability to recognize and classify an object, once grasped.

The researchers trained the robotic arm to pick novel objects out from a cluttered bin, using any one of four main grasping behaviors: suctioning onto an object, either vertically, or from the side; gripping the object vertically like the claw in an arcade game; or, for objects that lie flush against a wall, gripping vertically, then using a flexible spatula to slide between the object and the wall.

Rodriguez and his team showed the robot images of bins cluttered with objects, captured from the robot’s vantage point. They then showed the robot which objects were graspable, with which of the four main grasping behaviors, and which were not, marking each example as a success or failure. They did this for hundreds of examples, and over time, the researchers built up a library of picking successes and failures. They then incorporated this library into a “deep neural network” — a class of learning algorithms that enables the robot to match the current problem it faces with a successful outcome from the past, based on its library of successes and failures.
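Once a network has scored the options, choosing what to do comes down to picking the most promising behavior and location. The sketch below shows only that final selection step, with made-up scores in place of a trained network; the behavior names, the `GraspCandidate` type and the `min_score` cutoff are assumptions for illustration.

```python
from dataclasses import dataclass

# Hypothetical names for the four grasping behaviors described above.
BEHAVIORS = ("suction_down", "suction_side", "grasp_down", "flush_grasp")


@dataclass(frozen=True)
class GraspCandidate:
    behavior: str   # one of BEHAVIORS
    pixel: tuple    # (row, col) in the bin image where the action is aimed
    score: float    # predicted chance of success, between 0 and 1


def best_grasp(candidates, min_score=0.5):
    """Return the highest-scoring viable candidate, or None if none qualify."""
    viable = [
        c for c in candidates
        if c.behavior in BEHAVIORS and c.score >= min_score
    ]
    if not viable:
        return None
    return max(viable, key=lambda c: c.score)


# Made-up predictions standing in for the output of a trained network.
candidates = [
    GraspCandidate("suction_down", (120, 88), 0.62),
    GraspCandidate("grasp_down", (45, 200), 0.81),
    GraspCandidate("flush_grasp", (10, 230), 0.40),
]
print(best_grasp(candidates))
```

Returning `None` when nothing clears the cutoff leaves room for a fallback, such as taking a new image of the bin before trying again.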

“We developed a system where, just by looking at a tote filled with objects, the robot knew how to predict which ones were graspable or suctionable, and which configuration of these picking behaviors was likely to be successful,” Rodriguez says. “Once it was in the gripper, the object was much easier to recognize, without all the clutter.”

From pixels to labels

The researchers developed a perception system in a similar manner, enabling the robot to recognize and classify an object once it’s been successfully grasped.

To do so, they first assembled a library of product images taken from online sources such as retailer websites. They labeled each image with the correct identification — for instance, duct tape versus masking tape — and then developed another learning algorithm to relate the pixels in a given image to the correct label for a given object.

“We’re comparing things that, for humans, may be very easy to identify as the same, but in reality, as pixels, they could look significantly different,” Rodriguez says. “We make sure that this algorithm gets it right for these training examples. Then the hope is that we’ve given it enough training examples that, when we give it a new object, it will also predict the correct label.”

Last July, the team packed up the 2-ton robot and shipped it to Japan, where, a month later, they reassembled it to participate in the Amazon Robotics Challenge, a yearly competition sponsored by the online megaretailer to encourage innovations in warehouse technology. Rodriguez’s team was one of 16 taking part in a competition to pick and stow objects from a cluttered bin.

In the end, the team’s robot had a 54 percent success rate in picking objects up using suction and a 75 percent success rate using grasping, and was able to recognize novel objects with 100 percent accuracy. The robot also stowed all 20 objects within the allotted time.

For his work, Rodriguez was recently granted an Amazon Research Award and will be working with the company to further improve pick-and-place technology — foremost, its speed and reactivity.

“Picking in unstructured environments is not reliable unless you add some level of reactiveness,” Rodriguez says. “When humans pick, we sort of do small adjustments as we are picking. Figuring out how to do this more responsive picking, I think, is one of the key technologies we’re interested in.”

The team has already taken some steps toward this goal by adding tactile sensors to the robot’s gripper and running the system through a new training regime.

“The gripper now has tactile sensors, and we’ve enabled a system where the robot spends all day continuously picking things from one place to another. It’s capturing information about when it succeeds and fails, and how it feels to pick up, or fails to pick up objects,” Rodriguez says. “Hopefully it will use that information to start bringing that reactiveness to grasping.”

This research was sponsored in part by ABB Inc., Mathworks, and Amazon.