A Guide to Self-Healing Robots

#302: Robots That Can See, Do, and Win, with Juxi Leitner

In this episode, Lilly interviews Juxi Leitner, a Postdoctoral Research Fellow at the Queensland University of Technology and Co-Founder/CEO of LYRO Robotics. LYRO spun out of the 2017 win of the Amazon Robotics Challenge by Team ACRV. Here Juxi discusses deep learning, computer vision, intent in grasping and manipulation, and bridging the gap between abstract and low-level understandings of the world. He also discusses why robotics is really an integration field, the Amazon and other robotics challenges, and what’s important to consider when spinning an idea into a company.

Juxi Leitner

Juxi Leitner is co-founder of LYRO Robotics, a deep-tech startup based in Brisbane, Australia, creating robotic picking and packing solutions. LYRO is a spin-out of the Australian Centre of Excellence for Robotic Vision (ACRV), where Juxi is the research lead for the manipulation research stream (previously Vision and Action project). His research focus is on integrating Robotics, Computer Vision and Machine Learning/Artificial Intelligence (AI) for robust grasping and manipulation in real-world scenarios. In 2017 his team won the Amazon Robotics Challenge. Juxi is active in the local Brisbane deep-tech ecosystem and started Brisbane.AI and the Brisbane robotics interest group.

Links

Robotics by Pilz – open and compatible

How Robots Are Changing the Maritime Industry

Trends in Industrial Robotics to Watch in 2020

RoboticsTomorrow – Special Tradeshow Coverage: ATX West, MD&M and Design & Manufacturing

Automation Technology Expo (ATX) West and Design & Manufacturing (D&M) Pacific to Showcase Latest Innovations Highlighting the Future of Factory 4.0

How Do You Train a Retail Robot?

Emergent behavior by minimizing chaos

By Glen Berseth

All living organisms carve out environmental niches within which they can maintain relative predictability amidst the ever-increasing entropy around them (1), (2). Humans, for example, go to great lengths to shield themselves from surprise — we band together in millions to build cities with homes, supplying water, food, gas, and electricity to control the deterioration of our bodies and living spaces amidst heat and cold, wind and storm. The need to discover and maintain such surprise-free equilibria has driven great resourcefulness and skill in organisms across very diverse natural habitats. Motivated by this, we ask: could the motive of preserving order amidst chaos guide the automatic acquisition of useful behaviors in artificial agents?

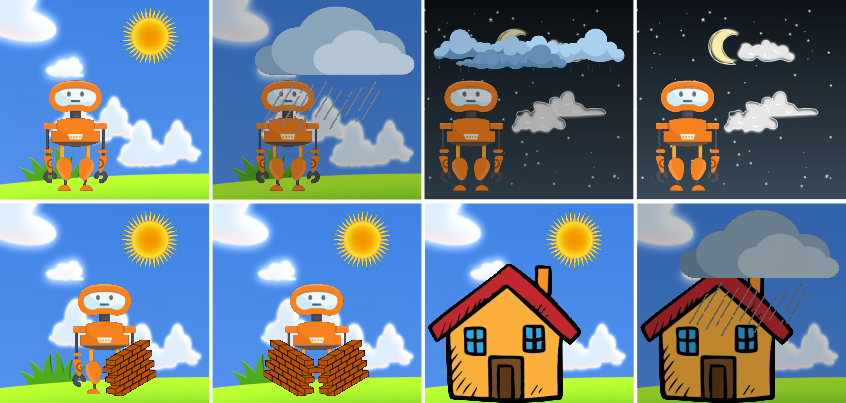

How might an agent in an environment acquire complex behaviors and skills with no external supervision? This central problem in artificial intelligence has evoked several candidate solutions, largely focusing on novelty-seeking behaviors (3), (4), (5). In simulated worlds, such as video games, novelty-seeking intrinsic motivation can lead to interesting and meaningful behavior. However, these environments may be fundamentally lacking compared to the real world. In the real world, natural forces and other agents offer bountiful novelty. Instead, the challenge in natural environments is allostasis: discovering behaviors that enable agents to maintain an equilibrium (homeostasis), for example to preserve their bodies, their homes, and avoid predators and hunger. The example below shows an agent experiencing random events caused by changing weather. If the agent learns to build a shelter, in this case a house, it reduces the observed effects of the weather.

We formalize homeostasis as an objective for reinforcement learning based on surprise minimization (SMiRL). In entropic and dynamic environments with undesirable forms of novelty, minimizing surprise (i.e., minimizing novelty) causes agents to naturally seek an equilibrium that can be stably maintained.

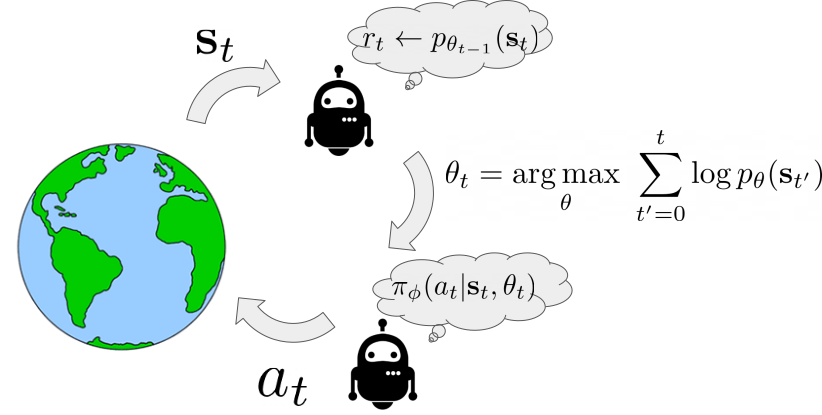

Here we show an illustration of the agent interaction loop using SMiRL. When the agent observes a state $\mathbf{s}$, it computes the probability of this new state given the belief the agent has $r_{t} \leftarrow p_{\theta_{t-1}}(\mathbf{s})$. This belief models the states the agent is most familiar with – i.e., the distribution of states it has seen in the past. Experiencing states that are more familiar will result in higher reward. After the agent experiences a new state, it updates its belief $p_{\theta_{t-1}}(\mathbf{s})$ over states to include the most recent experience. Then, the goal of the action policy $\pi(a|\mathbf{s}, \theta_{t})$ is to choose actions that will result in the agent consistently experiencing familiar states. Crucially, the agent understands that its beliefs will change in the future. This means that it has two mechanisms by which to maximize this reward: taking actions to visit familiar states, and taking actions to visit states that will change its beliefs such that future states are more familiar. It is this latter mechanism that results in complex emergent behavior. Below, we visualize a policy trained to play the game of Tetris. On the left, the blocks the agent chooses are shown, and on the right is a visualization of $p_{\theta_{t}}(\mathbf{s})$. We can see how, as the episode progresses, the belief over possible locations to place blocks tends to favor only the bottom row. This encourages the agent to eliminate blocks to prevent the board from filling up.
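The interaction loop described above can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the authors' implementation: the belief $p_{\theta}$ is assumed to be an independent Gaussian per state dimension, the reward is taken as the log-probability rather than the raw probability (a monotonic transform, chosen here for numerical convenience), and the names `GaussianBelief`, `smirl_step`, and `min_var` are hypothetical.

```python
import math


class GaussianBelief:
    """Running diagonal-Gaussian model of visited states (assumed form of p_theta)."""

    def __init__(self, dim, min_var=1e-2):
        self.dim = dim
        self.min_var = min_var          # variance floor, a hypothetical safeguard
        self.count = 0
        self.total = [0.0] * dim        # running sum of each state dimension
        self.total_sq = [0.0] * dim     # running sum of squares

    def _moments(self):
        # With no data yet, fall back to a unit Gaussian centered at zero.
        if self.count == 0:
            return [0.0] * self.dim, [1.0] * self.dim
        mean = [t / self.count for t in self.total]
        var = [max(sq / self.count - m * m, self.min_var)
               for sq, m in zip(self.total_sq, mean)]
        return mean, var

    def log_prob(self, state):
        # Sum of per-dimension Gaussian log-densities.
        mean, var = self._moments()
        return sum(-0.5 * (math.log(2.0 * math.pi * v) + (x - m) ** 2 / v)
                   for x, m, v in zip(state, mean, var))

    def update(self, state):
        # Fold the newest state into the sufficient statistics.
        self.count += 1
        for i, x in enumerate(state):
            self.total[i] += x
            self.total_sq[i] += x * x


def smirl_step(belief, state):
    """Score the new state under the previous belief, then update the belief."""
    reward = belief.log_prob(state)     # uses theta_{t-1}
    belief.update(state)                # theta_{t-1} -> theta_t
    return reward


# Usage: after many visits to one state, that state is rewarded far more
# than an unfamiliar one.
belief = GaussianBelief(dim=2)
for _ in range(50):
    smirl_step(belief, [0.0, 0.0])
familiar = belief.log_prob([0.0, 0.0])
surprising = belief.log_prob([5.0, 5.0])
```

Because the reward is computed before the update, each state is scored against what the agent believed before seeing it, which matches the $\theta_{t-1}$ subscript in the text.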

Left: Tetris. Right: HauntedHouse.

Emergent behavior

The SMiRL agent demonstrates meaningful emergent behaviors in a number of different environments. In the Tetris environment, the agent is able to learn proactive behaviors to eliminate rows and properly play the game. The agent also learns emergent game-playing behavior in the VizDoom environment, acquiring an effective policy for dodging the fireballs thrown by the enemies. In both of these environments, stochastic and chaotic events force the SMiRL agent to take a coordinated course of action to avoid unusual states, such as full Tetris boards or fireball explosions.

Left: Doom Hold The Line. Right: Doom Defend The Line.

Biped

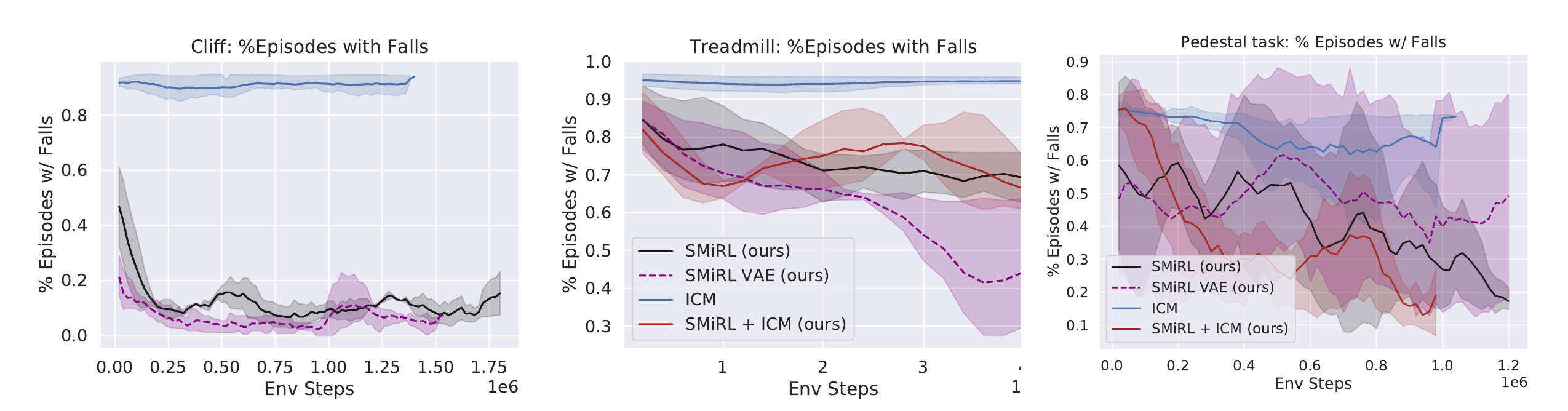

In the Cliff environment, the agent learns a policy that greatly reduces the probability of falling off the cliff by bracing against the ground and stabilizing itself at the edge, as shown in the figure below. In the Treadmill environment, SMiRL learns a more complex locomotion behavior, jumping forward to increase the time it stays on the treadmill, as shown in the figure below.

Left: Cliff. Right: Treadmill.

Comparison to Intrinsic motivation:

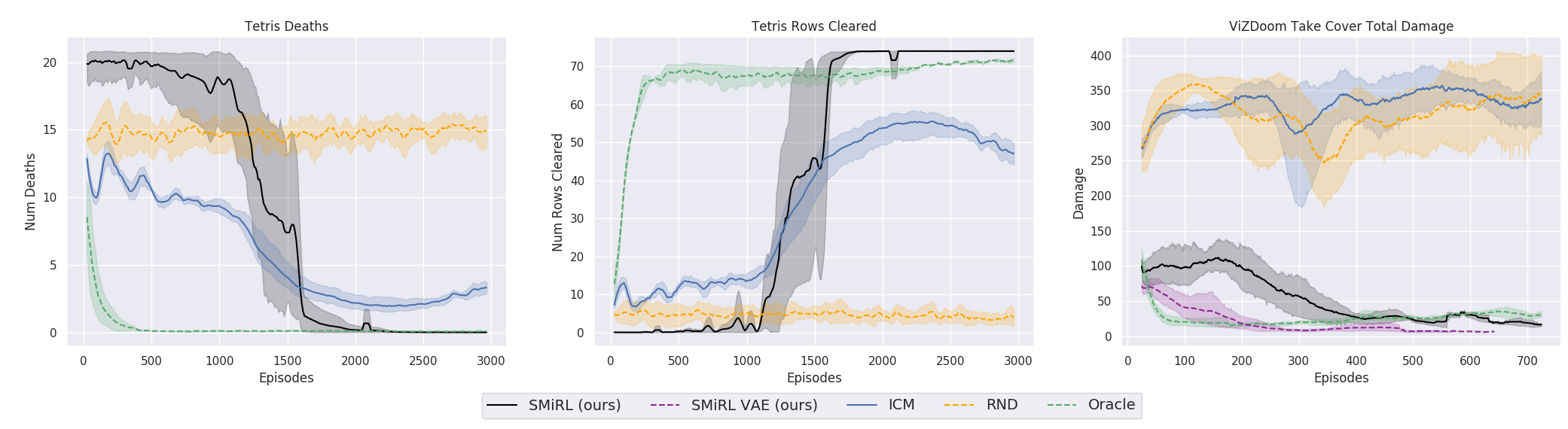

Intrinsic motivation is the idea that behavior is driven by internal reward signals that are task independent. Below, we show plots of the environment-specific rewards over time on Tetris, VizDoomTakeCover, and the humanoid domains. In order to compare SMiRL to more standard intrinsic motivation methods, which seek out states that maximize surprise or novelty, we also evaluated ICM (5) and RND (6). We include an oracle agent that directly optimizes the task reward. On Tetris, after training for $2000$ epochs, SMiRL achieves near-perfect play, on par with the oracle reward-optimizing agent, with no deaths. ICM seeks novelty by creating more and more distinct patterns of blocks rather than clearing them, leading to deteriorating game scores over time. On VizDoomTakeCover, SMiRL effectively learns to dodge fireballs thrown by the adversaries.

The baseline comparisons for the Cliff and Treadmill environments have a similar outcome. The novelty seeking behavior of ICM causes it to learn a type of irregular behavior that causes the agent to jump off the Cliff and roll around on the Treadmill, maximizing the variety (and quantity) of falls.

SMiRL + Curiosity:

On the surface, SMiRL minimizes surprise while curiosity approaches like ICM maximize novelty, but the two are in fact compatible. In particular, while ICM maximizes novelty with respect to a learned transition model, SMiRL minimizes surprise with respect to a learned state distribution. We can combine ICM and SMiRL to achieve even better results on the Treadmill environment.
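To make the distinction concrete, here is a minimal Python sketch of how the two signals could be summed into one reward. The additive form, the `bonus_weight` parameter, the diagonal-Gaussian state density, and the squared-error stand-in for the curiosity bonus are all assumptions made for illustration. The article does not specify how the two terms are combined, and real ICM computes its prediction error in a learned feature space.

```python
import math


def state_log_density(state, mean, var):
    """SMiRL-style term: log-density of a state under a diagonal Gaussian
    (an assumed form for the learned state distribution)."""
    return sum(-0.5 * (math.log(2.0 * math.pi * v) + (x - m) ** 2 / v)
               for x, m, v in zip(state, mean, var))


def transition_error(predicted_next, actual_next):
    """Curiosity-style term: squared error of a forward model's prediction
    (a simplified stand-in for ICM's feature-space error)."""
    return 0.5 * sum((p - a) ** 2 for p, a in zip(predicted_next, actual_next))


def combined_reward(next_state, mean, var, predicted_next, bonus_weight=0.1):
    """Hypothetical combination: familiarity of the state reached, plus a
    weighted bonus for transitions the forward model failed to predict."""
    familiarity = state_log_density(next_state, mean, var)
    curiosity = transition_error(predicted_next, next_state)
    return familiarity + bonus_weight * curiosity


# Usage: the same familiar state earns slightly more reward when the
# transition into it was mispredicted by the forward model.
mean, var = [0.0, 0.0], [1.0, 1.0]
well_predicted = combined_reward([0.0, 0.0], mean, var, predicted_next=[0.0, 0.0])
mispredicted = combined_reward([0.0, 0.0], mean, var, predicted_next=[1.0, 1.0])
```

The two terms measure different things: one depends only on where the agent ends up, the other on how well the dynamics were anticipated. That is why they can be optimized together without cancelling out.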

Left: Treadmill+ICM. Right: Pedestal.

Insights:

The key insight utilized by our method is that, in contrast to simple simulated domains, realistic environments exhibit dynamic phenomena that gradually increase entropy over time. An agent that resists this growth in entropy must take active and coordinated actions, thus learning increasingly complex behaviors. This is different from commonly proposed intrinsic exploration methods based on novelty, which instead seek to visit novel states and increase entropy. SMiRL holds promise for a new kind of unsupervised RL method that produces behaviors that are closely tied to the prevailing disruptive forces, adversaries, and other sources of entropy in the environment.

This article was initially published on the BAIR blog, and appears here with the author’s permission.

CES 2020: A smart city oasis

Like the city that hosts the Consumer Electronics Show (CES), the show floor is full of noise. Sifting through the lights, sounds and people can be an arduous task even for the most experienced CES attendees. Hidden past the North Hall of the Las Vegas Convention Center (LVCC) is a walkway to a tech oasis housed in the Westgate Hotel. This new area hosting SmartCity/IoT innovations is reminiscent of the old Eureka Park, complete with folding tables and ballroom carpeting. The fact that such enterprises require their own area separate from the main halls of the LVCC and the startup pavilions of the Sands Hotel is an indication of how urbanization is being redefined by artificial intelligence.

Many executives naively group AI into its own category with SmartCity inventions as a niche use case. However, as Akio Toyoda, Chief Executive of Toyota, presented at CES, it is the reverse. The “Woven City” initiative by the car manufacturer illustrates that autonomous cars, IoT devices and intelligent robots are subservient to society and, hence, require their own “living laboratory”. Toyoda boldly described a novel construction project for a city of the future 60 miles from Tokyo: “With people, buildings and vehicles all connected and communicating with each other through data and sensors, we will be able to test AI technology, in both the virtual and the physical world, maximizing its potential. We want to turn artificial intelligence into intelligence amplified.” Woven City will include 2,000 residents (mostly existing and former employees) on a 175-acre site (formerly a Toyota factory) at the foothills of Mount Fuji, providing academics, scientists and inventors a real-life test environment.

Toyota has hired the Danish architectural firm Bjarke Ingels Group (BIG) to design its urban biosphere. According to Bjarke Ingels, “Homes in the Woven City will serve as test sites for new technology, such as in-home robotics to assist with daily life. These smart homes will take advantage of full connectivity using sensor-based AI to do things automatically, like restocking your fridge, or taking out your trash – or even taking care of how healthy you are.” While construction is set to begin in 2021, the architect is already boasting: “In an age when technology, social media and online retail is replacing and eliminating our natural meeting places, the Woven City will explore ways to stimulate human interaction in the urban space. After all, human connectivity is the kind of connectivity that triggers wellbeing and happiness, productivity and innovation.”

Walking back into the LVCC from the Westgate, I heard Toyoda’s keynote in my head – “mobility for all” – forming a prism through which to view the rest of the show. Past Hyundai/Uber’s massive Air Taxi and Omron’s ping-pong-playing robot, thousands of suited executives led me under LG’s television waterfall to the Central Hall. Hidden behind an out-of-place Delta Airlines lounge, I discovered a robotics startup already fulfilling aspects of the Woven City. Vancouver-based A&K Robotics displayed a proprietary autonomous mobility solution serving the ballooning geriatric population. The U.S. Census Bureau projects that the number of citizens over the age of 65 will nearly double from “52 million in 2018 to 95 million by 2060” (or close to a quarter of the country’s population). This statistic parallels demographic trends in most first world countries. In Japan, the elderly already make up more than 28% of the citizenry, with more than 70,000 people over the age of 100. When A&K first launched, it marketed conversion kits for turning manual industrial machines into autonomous vehicles. Today, the Canadian team is applying its passion for unmanned systems to improve the lives of the most vulnerable – people with disabilities. As Jessica Yip, A&K COO, explains, “When we founded the company we set out to develop and prove our technology first in industrial environments moving large cleaning machines that have to be accurate because of their sheer size. Now we’re applying this proven system to working with people who face mobility challenges.” The company plans to initially sell its elegant self-driving wheelchair (shown below) to airports, a $2 billion opportunity serving 63 million passengers worldwide.

In the United States, the federal government mandates that airlines provide ‘free, prompt wheelchair assistance between curbside and cabin seat’ as part of the 1986 Air Carrier Access Act. Since the bill passed, demand for airport wheelchair assistance has mushroomed to an almost unserviceable level as carriers struggle to fulfill the mandated free service. In reviewing the airlines’ performance, Eric Lipp, of the disability advocacy group Open Doors, complains, “Ninety percent of the wheelchair problems exist because there’s no money in it. I’m not 100% convinced that airline executives are really willing to pay for this service.” In balancing profits with accessibility, airlines have employed unskilled, underpaid workers to push disabled fliers to their seats. A&K’s solution has the potential to both liberate passengers and improve the airlines’ bottom-line performance. Yip contends, “We’re embarking on moving people, starting in airports to help make traveling long distances more enjoyable and empowering.”

A&K joins a growing fleet of technology companies in tackling the airport mobility issue. Starting in 2017, Panasonic partnered with All Nippon Airways (ANA) to pilot self-driving wheelchairs in Tokyo’s Narita International Airport. As Juichi Hirasawa, Senior Vice President of ANA, states: “ANA’s partnership with Panasonic will make Narita Airport more welcoming and accessible, both of which are crucial to maintaining the airport’s status as a hub for international travel in the years to come. The robotic wheelchairs are just the latest element in ANA’s multi-faceted approach to improving hospitality in the air and on the ground.” Last December, the Abu Dhabi International Airport publicly demonstrated for a week autonomous wheelchairs manufactured by US-based WHILL. Ahmed Al Shamisi, Acting Chief Operations Officer of Abu Dhabi Airports, asserted: “Convenience is one of the most important factors in the traveller experience today. We want to make it even easier for passengers to enjoy our airports with ease. Through these trials, we have shown that restricted mobility passengers and their families can enjoy greater freedom of movement while still ensuring that the technology can be used safely and securely in our facilities.” Takeshi Ueda of WHILL enthusiastically added, “Seeing individuals experience the benefits of the seamless travel experience from security to boarding is so rewarding, and we are eager to translate this experience to airports across the globe.”

At the end of Toyoda’s remarks, he joked, “So by now, you may be thinking has this guy lost his mind? Is he a Japanese version of Willy Wonka?” As laughter permeated the theater, he excitedly confessed, “Perhaps, but I truly believe that THIS is the project that can benefit everyone, not just Toyota.” Flying home, I left Vegas more encouraged about the future: entrepreneurs today are focused on something bigger than robots. In the words of Yip, “As a company we’re looking to serve all people, and are strategically focused on airports as a step towards smart cities where everyone has the opportunity to participate fully in society in whatever way they are interested. Regardless of age, physical challenges, or other, we want people to be able to get out of their homes and into their communities. To be able to see each other, interact, go to work or travel whenever they want to.”

Sign up today for the next RobotLab event forum, Automating Farming: From The Holy Land To The Golden State, on February 6th in New York City.