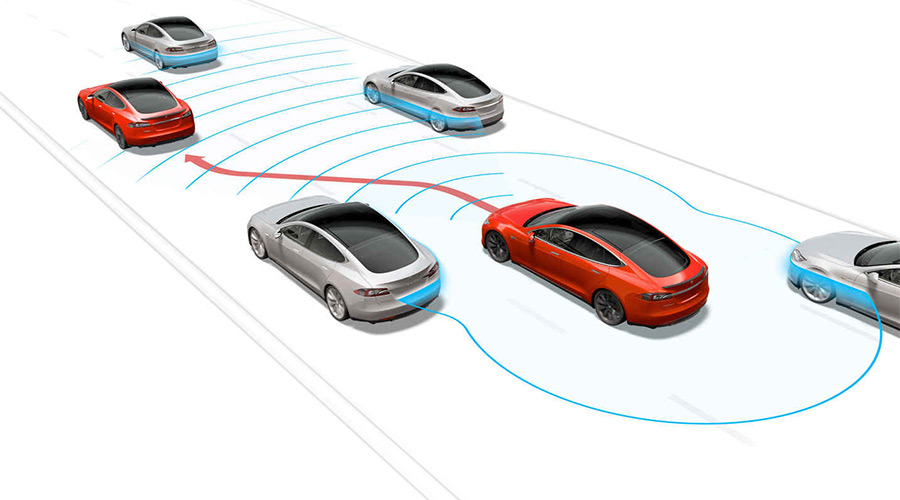

Tesla Motors Autopilot (photo: Tesla)

Five years to the day after I criticized Uber for testing its self-proclaimed “self-driving” vehicles on California roads without complying with the testing requirements of California’s automated driving law, I find myself criticizing Tesla for testing its self-proclaimed “full self-driving” vehicles on California roads without complying with the testing requirements of California’s automated driving law.

As I emphasized in 2016, California’s rules for “autonomous technology” necessarily apply to inchoate automated driving systems that, in the interest of safety, still use human drivers during on-road testing. “Autonomous vehicles testing with a driver” may be an oxymoron, but as a matter of legislative intent it cannot be a null set.

There is even a way to mortar the longstanding linguistic loophole in California’s legislation: Automated driving systems undergoing development arguably have the “capability to drive a vehicle without the active physical control or monitoring by a human operator” even though they do not yet have the demonstrated capability to do so safely. Hence the human driver.

(An imperfect analogy: Some kids can drive vehicles, but it’s less clear they can do so safely.)

When supervised by that (adult) human driver, these nascent systems function like the advanced driver assistance features available in many vehicles today: They merely work unless and until they don’t. This is why I distinguish between the aspirational level (what the developer hopes its system can eventually achieve) and the functional level (what the developer assumes its system can currently achieve).

(SAE J3016, the source for the (in)famous levels of driving automation, similarly notes that “it is incorrect to classify” an automated driving feature as a driver assistance feature “simply because on-road testing requires” driver supervision. The version of J3016 referenced in regulations issued by the California Department of Motor Vehicles does not contain this language, but subsequent versions do.)

The second part of my analysis has developed as Tesla’s engineering and marketing have become more aggressive.

Back in 2016, I distinguished Uber’s AVs from Tesla’s Autopilot. While Uber’s AVs were clearly on the automated-driving side of a blurry line, the same was not necessarily true of Tesla’s Autopilot:

In some ways, the two are similar: In both cases, a human driver is (supposed to be) closely supervising the performance of the driving automation system and intervening when appropriate, and in both cases the developer is collecting data to further develop its system with a view toward a higher level of automation.

In other ways, however, Uber and Tesla diverge. Uber calls its vehicles self-driving; Tesla does not. Uber’s test vehicles are on roads for the express purpose of developing and demonstrating its technologies; Tesla’s production vehicles are on roads principally because their occupants want to go somewhere.

Like Uber then, Tesla now uses the term “self-driving.” And not just self-driving: full self-driving. (This may have pushed Waymo to call its vehicles “fully driverless,” a term that is questionable yet still far more defensible. Perhaps “fully” is the English language’s new “very.”)

Tesla’s use of “FSD” is, shall we say, very misleading. After all, its “full self-driving” cars still need human drivers. In a letter to the California DMV, the company characterized “FSD” as a level two driver assistance feature. And I agree, to a point: “FSD” is functionally a driver assistance system. For safety reasons, it clearly requires supervision by an attentive human driver.

At the same time, “FSD” is aspirationally an automated driving system. The name unequivocally communicates Tesla’s goal for development, and the company’s “beta” qualifier communicates the stage of that development. Tesla intends for its “full self-driving” to become, well, full self-driving, and its limited beta release is a key step in that process.

And so while Tesla’s vehicles are still on roads principally because their occupants want to go somewhere, “FSD” is on a select few of those vehicles because Tesla wants to further develop—we might say “test”—it. In the words of Tesla’s CEO: “It is impossible to test all hardware configs in all conditions with internal QA, hence public beta.”

Tesla’s instructions to its select beta testers show that Tesla is enlisting them in this testing. Since the beta software “may do the wrong thing at the worst time,” drivers should “always keep your hands on the wheel and pay extra attention to the road. Do not become complacent…. Use Full Self-Driving in limited Beta only if you will pay constant attention to the road, and be prepared to act immediately….”

California’s legislature envisions a similar role for the test drivers of “autonomous vehicles”: They “shall be seated in the driver’s seat, monitoring the safe operation of the autonomous vehicle, and capable of taking over immediate manual control of the autonomous vehicle in the event of an autonomous technology failure or other emergency.” These drivers, by the way, can be “employees, contractors, or other persons designated by the manufacturer of the autonomous technology.”

Putting this all together:

- Tesla is developing an automated driving system that it calls “full self-driving.”

- Tesla’s development process involves testing “beta” versions of “FSD” on public roads.

- Tesla carries out this testing at least in part through a select group of designated customers.

- Tesla instructs these customers to carefully supervise the operation of “FSD.”

Tesla’s “FSD” has the “capability to drive a vehicle without the active physical control or monitoring by a human operator,” but it does not yet have the capability to do so safely. Hence the human drivers. And the testing. On public roads. In California. For which the state has a specific law. That Tesla is not following.

As I’ve repeatedly noted, the line between testing and deployment is not clear—and is only getting fuzzier in light of over-the-air updates, beta releases, pilot projects, and commercial demonstrations. Over the last decade, California’s DMV has performed admirably in fashioning rules, and even refashioning itself, to do what the state’s legislature told it to do. The issues that it now faces with Tesla’s “FSD” are especially challenging and unavoidably contentious.

But what is increasingly clear is that Tesla is testing its inchoate automated driving system on California roads. And so it is reasonable—and indeed prudent—for California’s DMV to require Tesla to follow the same rules that apply to every other company testing an automated driving system in the state.

Tesla’s fatal crash

Tesla can do better than its current public response to the recent fatal crash involving one of its vehicles. I would like to see more introspection, credibility, and nuance.

Introspection

Over the last few weeks, Tesla has blamed the deceased driver and a damaged highway crash attenuator while lauding the performance of Autopilot, its SAE level 2 driver assistance system that appears to have directed a Model X into the attenuator. The company has also disavowed its own responsibility: “The fundamental premise of both moral and legal liability is a broken promise, and there was none here.”

In Tesla’s telling, the driver knew he should pay attention, he did not pay attention, and he died. End of story. The same logic would seem to apply if the driver had hit a pedestrian instead of a crash barrier. Or if an automaker had marketed an outrageously dangerous car accompanied by a warning that the car was, in fact, outrageously dangerous. In the 1980 comedy Airplane!, a television commentator dismisses the passengers on a distressed airliner: “They bought their tickets. They knew what they were getting into. I say let ’em crash.” As a rule, it’s probably best not to evoke a character in a Leslie Nielsen movie.

It may well turn out that the driver in this crash was inattentive, just as the US National Transportation Safety Board (NTSB) concluded that the Tesla driver in an earlier fatal Florida crash was inattentive. But driver inattention is foreseeable (and foreseen), and “[j]ust because a driver does something stupid doesn’t mean they – or others who are truly blameless – should be condemned to an otherwise preventable death.” Indeed, Ralph Nader’s argument that vehicle crashes are foreseeable and could be survivable led Congress to establish the National Highway Traffic Safety Administration (NHTSA).

Airbags are a particularly relevant example. Airbags are unquestionably a beneficial safety technology. But early airbags were designed for average-size male drivers—a design choice that endangered children and lighter adults. When this risk was discovered, responsible companies did not insist that because an airbag is safer than no airbag, nothing more should be expected of them. Instead, they designed second-generation airbags that are safer for everyone.

Similarly, an introspective company (and, for that matter, an inquisitive jury) would ask whether and how Tesla’s crash could have been reasonably prevented. Tesla has appropriately noted that Autopilot is neither “perfect” nor “reliable,” and the company is correct that the promise of a level 2 system is merely that the system will work unless and until it does not. Furthermore, individual autonomy is an important societal interest, and driver responsibility is a critical element of road traffic safety. But it is because driver responsibility remains so important that Tesla should consider more effective ways of engaging and otherwise managing the imperfect human drivers on whom the safe operation of its vehicles still depends.

Such an approach might include other ways of detecting driver engagement. NTSB has previously expressed its concern over using only steering wheel torque as a proxy for driver attention. And GM’s own level 2 system, Super Cruise, tracks driver head position.

Such an approach may also include more aggressive measures to deter distraction. Tesla could alert law enforcement when drivers are behaving dangerously. It could also distinguish safety features from convenience features and then more stringently condition convenience on the concurrent attention of the driver. For example, active lane keeping (which might ping-pong the vehicle between lane boundaries) could enhance safety even if active lane centering is not operative. Similarly, automatic deceleration could enhance safety even if automatic acceleration is inoperative.
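The safety/convenience distinction proposed above can be sketched as a simple gating rule. This is a hypothetical illustration only: the `Feature` class, the `active_features` function, and the classification of each feature are assumptions drawn from the examples in the preceding paragraph, not a description of how Tesla or any other automaker actually implements its systems. Safety features stay available regardless of driver state, while convenience features are offered only when the driver is judged attentive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    """A driver assistance feature (hypothetical model for illustration)."""
    name: str
    is_safety: bool  # True for a safety feature, False for a convenience feature


# Hypothetical classification drawn from the examples in the text.
FEATURES = [
    Feature("lane keeping", is_safety=True),
    Feature("lane centering", is_safety=False),
    Feature("automatic deceleration", is_safety=True),
    Feature("automatic acceleration", is_safety=False),
]


def active_features(features: list[Feature], driver_attentive: bool) -> list[str]:
    """Keep safety features on; offer convenience features only to an attentive driver."""
    return [f.name for f in features if f.is_safety or driver_attentive]


# An inattentive driver keeps only the safety features.
print(active_features(FEATURES, driver_attentive=False))
# prints ['lane keeping', 'automatic deceleration']
```

How a real system would judge attentiveness (steering wheel torque, head position, or something else) is the open design question raised above; the sketch simply takes it as an input.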

NTSB’s ongoing investigation is an opportunity to credibly address these issues. Unfortunately, after publicly stating its own conclusions about the crash, Tesla is no longer formally participating in NTSB’s investigation. Tesla faults NTSB for this outcome: “It’s been clear in our conversations with the NTSB that they’re more concerned with press headlines than actually promoting safety.” That is not my impression of the people at NTSB. Regardless, Tesla’s argument might be more credible if it did not continue what seems to be the company’s pattern of blaming others.

Credibility

Tesla could also improve its credibility by appropriately qualifying and substantiating what it says. Unfortunately, Tesla’s claims about the relative safety of its vehicles still range from “lacking” to “ludicrous on their face.” (Here are some recent views.)

Tesla repeatedly emphasizes that “our first iteration of Autopilot was found by the U.S. government to reduce crash rates by as much as 40%.” NHTSA reached its conclusion after (somehow) analyzing Tesla’s data—data that both Tesla and NHTSA have kept from public view. Accordingly, I don’t know whether the underlying math actually took only five minutes, but I can attempt some crude reverse engineering to complement the thoughtful analyses already done by others.

Let’s start with NHTSA’s summary: The Office of Defects Investigation (ODI) “analyzed mileage and airbag deployment data supplied by Tesla for all MY 2014 through 2016 Model S and 2016 Model X vehicles equipped with the Autopilot Technology Package, either installed in the vehicle when sold or through an OTA update, to calculate crash rates by miles travelled prior to and after Autopilot installation. [An accompanying chart] shows the rates calculated by ODI for airbag deployment crashes in the subject Tesla vehicles before and after Autosteer installation. The data show that the Tesla vehicles crash rate dropped by almost 40 percent after Autosteer installation”—from 1.3 to 0.8 crashes per million miles.
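The “almost 40 percent” figure follows directly from the two rates NHTSA reported. A minimal check, assuming only the rounded rates of 1.3 and 0.8 airbag deployment crashes per million miles quoted above (NHTSA’s unrounded figures have not been published, so the exact percentage may differ slightly); the function name is illustrative:

```python
# Rates reported by NHTSA's ODI for airbag deployment crashes in the subject
# Tesla vehicles, in crashes per million miles (rounded as published).
BEFORE_AUTOSTEER = 1.3
AFTER_AUTOSTEER = 0.8


def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after` (0.25 means a 25 percent drop)."""
    return (before - after) / before


reduction = relative_reduction(BEFORE_AUTOSTEER, AFTER_AUTOSTEER)
print(f"{reduction:.1%}")  # prints 38.5%
```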

This raises at least two questions. First, how do these rates compare to those for other vehicles? Second, what explains the asserted decline?

Comparing Tesla’s rates is especially difficult because of a qualification that NHTSA’s report mentions only once and that Tesla’s statements do not acknowledge at all. The rates calculated by NHTSA are for “airbag deployment crashes” only, a category that NHTSA does not generally track for nonfatal crashes.

NHTSA does estimate rates at which vehicles are involved in crashes. (For a fair comparison, I look at crashed vehicles rather than crashes.) With respect to crashes resulting in injury, 2015 rates were 0.88 crashes per million miles for light trucks and 1.26 for passenger cars. And with respect to property-damage only crashes, they were 2.35 for light trucks and 3.12 for passenger cars. This means that, depending on the correlation between airbag deployment and crash injury (and accounting for the increasing number and sophistication of airbags), Tesla’s rates could be better than, worse than, or comparable to these national estimates.

Airbag deployment is a complex topic, but the upshot is that, by design, airbags do not always inflate. An analysis by the Pennsylvania Department of Transportation suggests that airbags deploy in less than half of the airbag-equipped vehicles that are involved in reported crashes, which are generally crashes that cause physical injury or significant property damage. (The report’s shift from reportable crashes to reported crashes creates some uncertainty, but let’s assume that any crash that results in the deployment of an airbag is serious enough to be counted.)

Data from the same analysis show about two reported crashed vehicles per million miles traveled. Assuming a deployment rate of 50 percent suggests that a vehicle deploys an airbag in a crash about once every million miles that it travels, which is roughly comparable to Tesla’s post-Autopilot rate.
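The back-of-the-envelope estimate above can be written out explicitly. This sketch assumes, as the text does, about two reported crashed vehicles per million miles and a 50 percent deployment share; the function name is illustrative. Because the Pennsylvania data suggest deployment in less than half of reported crashes, the resulting baseline is, if anything, on the high side.

```python
def estimated_deployment_rate(crashed_vehicles_per_million_miles: float,
                              deployment_share: float) -> float:
    """Airbag deployments per million miles = crash involvement rate x deployment share."""
    return crashed_vehicles_per_million_miles * deployment_share


# Inputs from the Pennsylvania analysis discussed above; the 50% share is an assumption.
baseline = estimated_deployment_rate(2.0, 0.5)

# NHTSA/ODI airbag deployment crash rates for the subject Tesla vehicles.
tesla_rates = {"pre-Autopilot": 1.3, "post-Autopilot": 0.8}

print(f"Estimated baseline: {baseline:.1f} deployments per million miles")
for label, rate in tesla_rates.items():
    print(f"Tesla {label}: {rate:.1f} ({rate / baseline:.0%} of baseline)")
```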

Indeed, at least two groups with access to empirical data—the Highway Loss Data Institute and AAA – The Auto Club Group—have concluded that Tesla vehicles do not have a low claim rate (in addition to having a high average cost per claim), which suggests that these vehicles do not have a low crash rate either.

Tesla offers fatality rates as another point of comparison: “In the US, there is one automotive fatality every 86 million miles across all vehicles from all manufacturers. For Tesla, there is one fatality, including known pedestrian fatalities, every 320 million miles in vehicles equipped with Autopilot hardware. If you are driving a Tesla equipped with Autopilot hardware, you are 3.7 times less likely to be involved in a fatal accident.”

In 2016, there was one fatality for every 85 million vehicle miles traveled—close to the number cited by Tesla. For that same year, NHTSA’s FARS database shows 14 fatalities across 13 crashes involving Tesla vehicles. (Ten of these vehicles were model year 2015 or later; I don’t know whether Autopilot was equipped at the time of the crash.) By the end of 2016, Tesla vehicles had logged about 3.5 billion miles worldwide. If we accordingly assume that Tesla vehicles traveled 2 billion miles in the United States in 2016 (less than one tenth of one percent of US VMT), we can estimate one fatality for every 150 million miles traveled.
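The per-mile arithmetic in the last two paragraphs can be reproduced as follows. The 2 billion US miles is the rough assumption made above, not a reported figure, and the 14 fatalities come from FARS as cited; the helper name is illustrative. The exact quotient is about 143 million miles per fatality, consistent with the estimate of roughly 150 million in the text, and Tesla’s own figures (320 million versus 86 million) yield its “3.7 times” ratio.

```python
def miles_per_fatality(miles: float, fatalities: int) -> float:
    """Average vehicle miles traveled per fatality."""
    return miles / fatalities


# Assumption from the text: roughly 2 billion US miles by Tesla vehicles in 2016.
ASSUMED_US_TESLA_MILES_2016 = 2e9
TESLA_FATALITIES_2016 = 14  # FARS, 2016, as cited above

tesla = miles_per_fatality(ASSUMED_US_TESLA_MILES_2016, TESLA_FATALITIES_2016)
print(f"Tesla (estimated): one fatality per {tesla / 1e6:.0f} million miles")
print("US fleet (2016): one fatality per 85 million miles")

# Ratio implied by Tesla's claim of 320 million vs. 86 million miles per fatality.
print(f"Tesla's claimed ratio: {320e6 / 86e6:.1f}")
```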

It is not surprising if Tesla’s vehicles are less likely to be involved in a fatal crash than the US vehicle fleet in its entirety. That fleet, after all, has an average age of more than a decade. It includes vehicles without electronic stability control, vehicles with bald tires, vehicles without airbags, and motorcycles. Differences between crashes involving a Tesla vehicle and crashes involving no Tesla vehicles could therefore have nothing to do with Autopilot.

More surprising is the statement that Tesla vehicles equipped with Autopilot are much safer than Tesla vehicles without Autopilot. At the outset, we don’t know how often Autopilot was actually engaged (rather than merely equipped), we don’t know the period of comparison (even though crash and injury rates fluctuate over the calendar year), and we don’t even know whether this conclusion is statistically significant. Nonetheless, on the assumption that the unreleased data support this conclusion, let’s consider three potential explanations:

First, perhaps Autopilot is incredibly safe. If we assume (again, because we just don’t know otherwise) that Autopilot is actually engaged for half of the miles traveled by vehicles on which it is installed, then a 40 percent reduction in airbag deployments per million miles really means an 80 percent reduction in airbag deployments while Autopilot is engaged. Pennsylvania data show that about 20 percent of vehicles in reported crashes are struck in the rear, and if we further assume that Autopilot would rarely prevent another vehicle from rear-ending a Tesla, then Autopilot would essentially need to prevent every other kind of crash while engaged in order to achieve such a result.
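The first explanation rests on two steps of arithmetic, sketched below under the same assumptions the text makes: that Autopilot is engaged for half of the miles on equipped vehicles (the actual share is unknown), that the entire 40 percent reduction occurs while it is engaged, and that the roughly 20 percent of crashed vehicles struck in the rear represent crashes Autopilot would rarely prevent. The function name is illustrative.

```python
def reduction_while_engaged(overall_reduction: float, engaged_share: float) -> float:
    """If an overall reduction comes entirely from engaged miles, the reduction
    per engaged mile must be overall_reduction / engaged_share."""
    return overall_reduction / engaged_share


OVERALL_REDUCTION = 0.4  # the ~40% drop in airbag deployments per million miles
ENGAGED_SHARE = 0.5      # assumption: Autopilot engaged for half of miles
REAR_STRUCK_SHARE = 0.2  # share of crashed vehicles struck in the rear (Pennsylvania data)

needed = reduction_while_engaged(OVERALL_REDUCTION, ENGAGED_SHARE)
preventable = 1 - REAR_STRUCK_SHARE  # assume rear-end crashes are essentially unpreventable

print(f"Reduction needed while engaged: {needed:.0%}")
print(f"Share of other crashes that must be prevented: {needed / preventable:.0%}")
```

Changing `ENGAGED_SHARE` shows how sensitive the conclusion is: at any engaged share below 0.5, the required reduction exceeds what is possible under these assumptions.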

Second, perhaps Tesla’s vehicles had a significant performance issue that the company corrected in an over-the-air update at or around the same time that it introduced Autopilot. I doubt this—but the data released are as consistent with this conclusion as with a more favorable one.

Third, perhaps Tesla introduced or upgraded other safety features in one of these OTA updates. Indeed, Tesla added automatic emergency braking and blind spot warning about half a year before releasing Autopilot, and Autopilot itself includes side collision avoidance. Because these features may function even when Autopilot is not engaged and might not induce inattention to the same extent as Autopilot, they should be distinguished from rather than conflated with Autopilot. I can see an argument that more people will be willing to pay for convenience plus safety than for safety alone, but I have not seen Tesla make this more nuanced argument.

Nuance

In general, Tesla should embrace more nuance. Currently, the company’s explicit and implicit messages regarding this fatal crash have tended toward the absolute. The driver was at fault—and therefore Tesla was not. Autopilot improves safety—and therefore criticism is unwarranted. The company needs to be able to communicate with the public about Autopilot—and therefore it should share specific and, in Tesla’s view, exculpatory information about the crash that NTSB is investigating.

Tesla understands nuance. Indeed, in its statement regarding its relationship with NTSB, the company noted that “we will continue to provide technical assistance to the NTSB.” Tesla should embrace a systems approach to road traffic safety and acknowledge the role that the company can play in addressing distraction. It should emphasize the limitations of Autopilot as vigorously as it highlights the potential of automation. And it should cooperate with NTSB while showing that it “believe[s] in transparency” by releasing data that do not pertain specifically to this crash but that do support the company’s broader safety claims.

For good measure, Tesla should also release a voluntary safety self-assessment. (Waymo and General Motors have.) Autopilot is not an automated driving system, but that is where Tesla hopes to go. And by communicating with introspection, credibility, and nuance, the company can help make sure the public is on board.

The Senate’s automated driving bill could squash state authority

My previous post on the House and Senate automated driving bills (HB 3388 and SB 1885) concluded by noting that, in addition to the federal government, states and the municipalities within them also play an important role in regulating road safety. Their functions include, among others, designing and maintaining roads, setting and enforcing traffic laws, licensing and punishing drivers, registering and inspecting vehicles, requiring and regulating automotive insurance, and enabling victims to recover from the drivers or manufacturers responsible for their injuries.

Unfortunately, the Senate bill could preempt many of these functions. The House bill contains modest preemption language and a savings clause that admirably tries to clarify the line between federal and state roles. The Senate bill, in contrast, currently contains a breathtakingly broad preemption provision that was proposed in committee markup by, curiously, a Democratic senator.

(I say “currently” for two reasons. First, a single text of the bill is not available online; only the original text plus the marked-up texts for the Senate Commerce Committee’s amendments to that original have been posted. Second, whereas HB 3388 has passed the full House, SB 1885 is still making its way through the Senate.)

Under one of these amendments to the Senate bill, “[n]o State or political subdivision of a State may adopt, maintain, or enforce any law, rule, or standard regulating the design, construction, or performance of a highly automated vehicle or automated driving system with respect to any of the safety evaluation report subject areas.” These areas are system safety, data recording, cybersecurity, human-machine interface, crashworthiness, capabilities, post-crash behavior, accounting for applicable laws, and automation function.

A savings provision like the one in the House bill was in the original Senate bill but was apparently dropped in committee.

A plain reading of this language suggests that all kinds of state and local laws would be void in the context of automated driving. Restrictions on what kind of data can be collected by motor vehicles? Fine for conventional driving, but preempted for automated driving. Penalties for speeding? Fine for conventional driving, but preempted for automated driving. Deregistration of an unsafe vehicle? Same.

The Senate language could have an even more subtly dramatic effect on state personal injury law. Under existing federal law, FMVSS compliance “does not exempt a person from liability at common law.” (The U.S. Supreme Court has fabulously muddied what this provision actually means by, in two cases, reaching essentially opposite conclusions about whether a jury could find a manufacturer liable under state law for injuries caused by a vehicle design that was consistent with applicable FMVSS.)

The Senate bill preserves this statutory language (whatever it means) and even adds a second sentence providing that “nothing” in the automated driving preemption section “shall exempt a person from liability at common law or under a State statute authorizing a civil remedy for damages or other monetary relief.”

Although this would seem to reinforce the power of a jury to determine what is reasonable in a civil suit, the Senate bill makes this second sentence “subject to” the breathtakingly broad preemption language described above. On its plain meaning, this language accordingly restricts rather than respects state tort and product liability law.

This is confusing (whether intentionally or unintentionally), so consider a stylized illustration:

1) You may not use the television.

2) Subject to (1), you may watch The Simpsons.

This language probably bars you from watching The Simpsons (at least on the television). If the intent were instead to permit you to do so, the language would be:

1) You may not use the television.

2) Notwithstanding (1), you may watch The Simpsons.

The amendment as proposed could have said “notwithstanding” instead of “subject to.” It did not.
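The difference between the two connectors can be modeled as a rule of precedence. This is a toy model for illustration only, not a statement of how courts actually construe these phrases; the `allowed` function and its parameters are hypothetical. With “subject to,” the prohibition in (1) controls wherever the two clauses conflict; with “notwithstanding,” the permission in (2) controls.

```python
def allowed(prohibited_by_1: bool, permitted_by_2: bool, connector: str) -> bool:
    """Decide whether conduct is allowed when clause (2) is introduced by `connector`.

    Conduct that no clause prohibits is allowed by default.
    """
    if connector == "subject to":
        # Clause (2) yields to clause (1): where (1) prohibits, (2) cannot help.
        return not prohibited_by_1
    if connector == "notwithstanding":
        # Clause (2) overrides clause (1): conduct permitted by (2) is allowed
        # even if (1) prohibits it.
        return permitted_by_2 or not prohibited_by_1
    raise ValueError(f"unknown connector: {connector!r}")


# Watching The Simpsons on the television is barred by (1) and allowed by (2).
for connector in ("subject to", "notwithstanding"):
    print(connector, "->", allowed(True, True, connector))
```

On the plain-meaning reading described above, clause (1) corresponds to the broad preemption language and clause (2) to the sentence preserving liability at common law, so “subject to” leaves the preempted subject areas outside the savings sentence.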

I do not know the intent of the senators who voted for this automated driving bill and for this amendment to it. They may have intended a result other than the one suggested by their language. Indeed, they may have even addressed these issues without recording the result in the documents subsequently released. If so, they should either make these changes or make public the changes they have already made.

And if not, everyone from Congress to City Hall should consider what this massive preemption would mean.

Congress’ automated driving bills are both more and less than they seem

Bills being considered by Congress deserve our attention—but not our full attention. To wit: When it comes to safety-related regulation of automated driving, existing law is at least as important as the bills currently in Congress (HB 3388 and SB 1885). Understanding why involves examining all the ways that the developer of an automated driving system might deploy its system in accordance with federal law as well as all the ways that governments might regulate that system. And this examination reveals some critical surprises.

As automated driving systems get closer to public deployment, their developers are closely evaluating how the full set of Federal Motor Vehicle Safety Standards (FMVSS) will apply to these systems and to the vehicles on which they are installed. Rather than specifying a comprehensive regulatory framework, these standards impose requirements on only some automotive features and functions. Furthermore, manufacturers of vehicles and of components thereof self-certify that their products comply with these standards. In other words, unlike its European counterparts (and a small number of federal agencies overseeing products deemed more dangerous than motor vehicles), the National Highway Traffic Safety Administration (NHTSA) does not prospectively approve most of the products it regulates.

There are at least seven (!) ways that the developer of an automated driving system could conceivably navigate this regulatory regime.

First, the developer might design its automated driving system to comply with a restrictive interpretation of the FMVSS. The attendant vehicle would likely have conventional braking and steering mechanisms as well as other accoutrements for an ordinary human driver. (These conventional mechanisms could be usable, as on a vehicle with only part-time automation, or they might be provided solely for compliance.) NHTSA implied this approach in its 2016 correspondence with Google, while another part of the US Department of Transportation even highlighted those specific FMVSS provisions that a developer would need to design around. Once the developer self-certifies that its system in fact complies with the FMVSS, it can market it.

Second, the developer might ask NHTSA to clarify the agency’s understanding of these provisions with a view toward obtaining a more accommodating interpretation. Previously—and, more to the point, under the previous administration—NHTSA was somewhat restrictive in its interpretation, but a new chief counsel might reach a different conclusion about whether and how the existing standards apply to automated driving. In that case, the developer could again simply self-certify that its system indeed complies with the FMVSS.

Third, the developer might petition NHTSA to amend the FMVSS to more clearly address (or expressly abstain from addressing) automated driving systems. This rulemaking process would be lengthy (measured in years rather than months), but a favorable result would give the developer even more confidence in self-certifying its system.

Fourth, the developer could lobby Congress to shorten this process—or preordain the result—by expressly accommodating automated driving systems in a statute rather than in an agency rule. This is not, by the way, what the bills currently in Congress would do.

Fifth, the developer could request that NHTSA exempt some of its vehicles from portions of the FMVSS. This exemption process, which is prospective approval by another name, requires the applicant to demonstrate that the safety level of its feature or vehicle “at least equals the safety level of the standard.” Under existing law, the developer could exempt no more than 2,500 new vehicles per year. Notably, however, this could include heavy trucks as well as passenger cars.

Sixth, the developer could initially deploy its vehicles “solely for purposes of testing or evaluation” without self-certifying that those vehicles comply with the FMVSS. Although this exception is available only to established automotive manufacturers, a new or recent entrant could partner with or outright buy one of the companies in that category. Many kinds of large-scale pilot and demonstration projects could be plausibly described as “testing or evaluation,” particularly by companies that are comfortable losing money (or comfortable describing their services as “beta”) for years on end.

Seventh, the developer could ignore the FMVSS altogether. Under federal law, “a person may not manufacture for sale, sell, offer for sale, introduce or deliver for introduction in interstate commerce, or import into the United States, any [noncomplying] motor vehicle or motor vehicle equipment.” But under the plain meaning of this provision (and a related definition of “interstate commerce”), a developer could operate a fleet of vehicles equipped with its own automated driving system within a state without certifying that those vehicles comply with the FMVSS.

This is the background law against which Congress might legislate—and against which its bills should be evaluated.

Both bills would dramatically expand the number of exemptions that NHTSA could grant to each manufacturer, eventually reaching 100,000 per year in the House version. Some critics of the bills have suggested that this would give free rein to manufacturers to deploy tens of thousands of automated vehicles without any prior approval.

But considering this provision in context provides two key insights. First, automated driving developers may already be able to lawfully deploy tens of thousands of their vehicles without any prior approval—by designing them to comply with the FMVSS, by claiming testing or evaluation, or by deploying an in-state service. Second, the exemption process gives NHTSA far more power than it otherwise has: The applicant must convince the agency to affirmatively permit it to market its system.

Both bills would also require the manufacturer of an automated driving system to submit a “safety evaluation report” to NHTSA that “describes how the manufacturer is addressing the safety of such vehicle or system.” This requirement would formalize the safety assessment letters that NHTSA encouraged in its 2016 and 2017 automated vehicle policies. These three frameworks all evoke my earlier proposal for what I call the “public safety case,” wherein an automated driving developer tells the rest of us what they are doing, why they think it is reasonably safe, and why we should believe them.

Unsurprisingly, I think this is a fine idea. It encourages innovation in safety assurance and regulation, informs regulators, and—if disclosure is meaningful—helps educate the public at large. Congress could strengthen these provisions as currently drafted, and it could give NHTSA the resources needed to effectively engage with these reports. Regardless, in evaluating the bills, it is important to understand that these provisions increase rather than decrease what an automated driving system developer must do under federal law. They are an addition rather than an alternative to each of the seven pathways described above.

Both bills would also exclude heavy trucks and buses from their definitions of automated vehicle. This exclusion, added at the behest of labor groups concerned about the eventual implications of commercial truck automation, means that NHTSA cannot exempt tens of thousands of heavy vehicles per manufacturer from a safety standard. But each truck manufacturer can still seek to exempt up to 2,500 vehicles per year—if such an exemption is even required. And, depending on how language relating to the safety evaluation reports is interpreted, this exemption might even relieve automated truck manufacturers of the obligation to submit these reports.

Finally, these bills largely preserve NHTSA’s existing regulatory authority—and that authority involves much more than making rules and granting exemptions to those rules. Crucially, the agency can conduct investigations and pursue recalls—even if a vehicle fully complies with the applicable FMVSS. This is because ensuring motor vehicle safety requires more than satisfying specific safety standards. And this broader definition of safety—“the performance of a motor vehicle or motor vehicle equipment in a way that protects the public against unreasonable risk of accidents occurring because of the design, construction, or performance of a motor vehicle, and against unreasonable risk of death or injury in an accident, and includes nonoperational safety of a motor vehicle”—gives NHTSA great power.

States and the municipalities within them also play an important role in regulating road safety—and my next post considers the effect of the Senate bill in particular on this state and local authority.