The UK Robotics Growth Partnership (RGP) aims to set the conditions for success to empower the UK to be a global leader in Robotics and Autonomous Systems whilst delivering a smarter, safer, more prosperous, sustainable and competitive UK. The aim is for smart machines to become ubiquitous, woven into the fabric of society, in every sector, every workplace, and at home. If done right, this could lead to increased productivity and improved quality of life. It could enable us to meet Net Zero targets, and support workers as their roles transition away from menial tasks.

One thing that’s striking is that although robotics holds so much potential, robots are not yet ready. The Covid crisis has made this very clear. Had they been ready, we could have deployed robots at scale to sanitise hospitals, enable doctor-patient communication through telepresence, or connect patients with loved ones. Robots could have produced, organised, and delivered much-needed samples, tests, PPE, medicine, and food across the UK, and many businesses could have reopened with a robotic interface. Robots could have powered a low-touch economy, where activities continue even when humans can’t be in close physical contact, driving recovery and resilience.

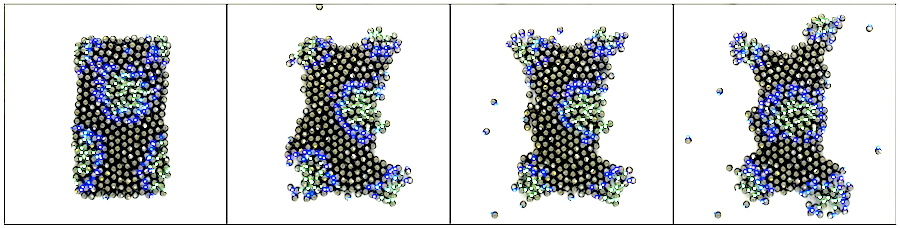

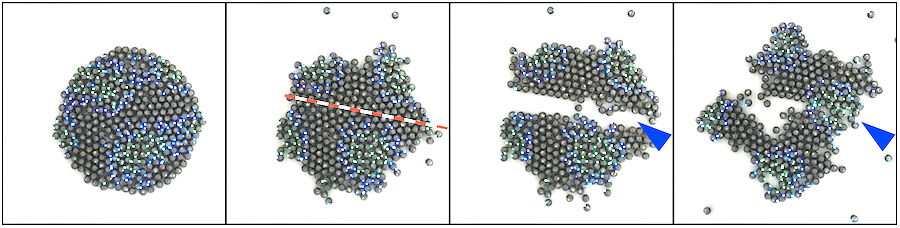

For the past year, we’ve been thinking about this at the RGP. How could we have done things better? What would it take to make an armada of disinfecting robots for a Covid pop-up hospital (called a Nightingale hospital in the UK)? Ideally, we would have been able to log into a digital twin of the hospital, port in a model of a robot platform from a database, work up a solution in the virtual world, and readily port it to an actual testbed to demonstrate its function in the physical world. We could then trial the solution in a living lab, maybe a dedicated Nightingale, before scaling it up to other hospitals. Others developing telepresence robots could follow the same methodology, checking that their solutions are interoperable and work within the same virtual and physical environments.

We have many pieces of the puzzle in the UK – great research and industry, plus government buy-in – but what we need is to bring them together.

To explore this further, we spent the last few months hosting ThinkIns with Tortoise Media to get feedback from Academia, Industry, Policy and the Public. You can read all the blog posts and watch the videos here:

The future of smart machines: reflections from academia https://ukrgp.org/the-future-of-smart-machines-reflections-from-academia/

Building an ecosystem to make useful robots https://ukrgp.org/building-an-ecosystem-to-make-useful-robots/

Musings with the public about their future with smart machines https://ukrgp.org/musings-with-the-public-about-their-future-with-smart-machines/

Keeping up with the pace of change – positioning the UK as a leader in smart machines https://ukrgp.org/keeping-up-with-the-pace-of-change-positioning-the-uk-as-a-leader-in-smart-machines/

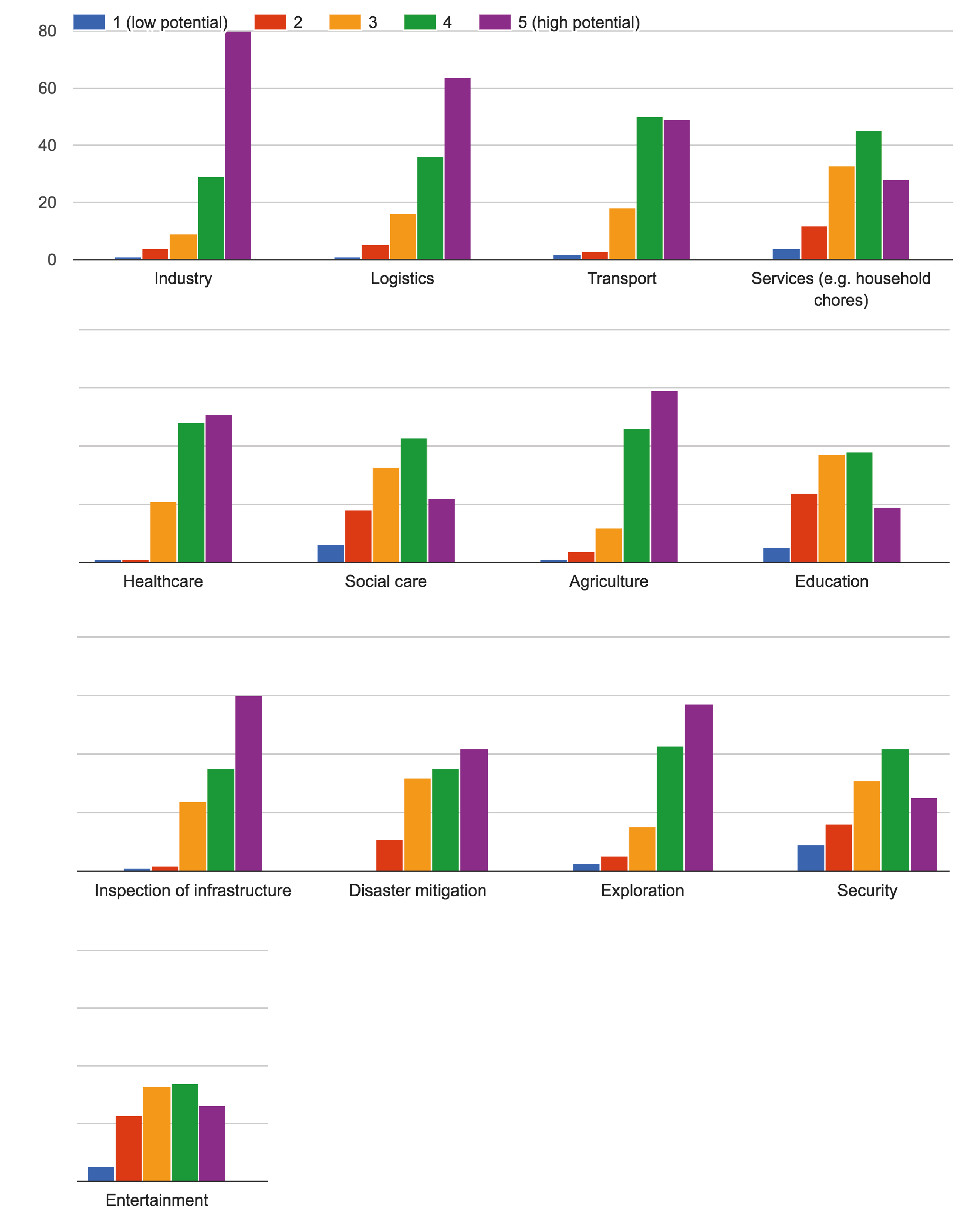

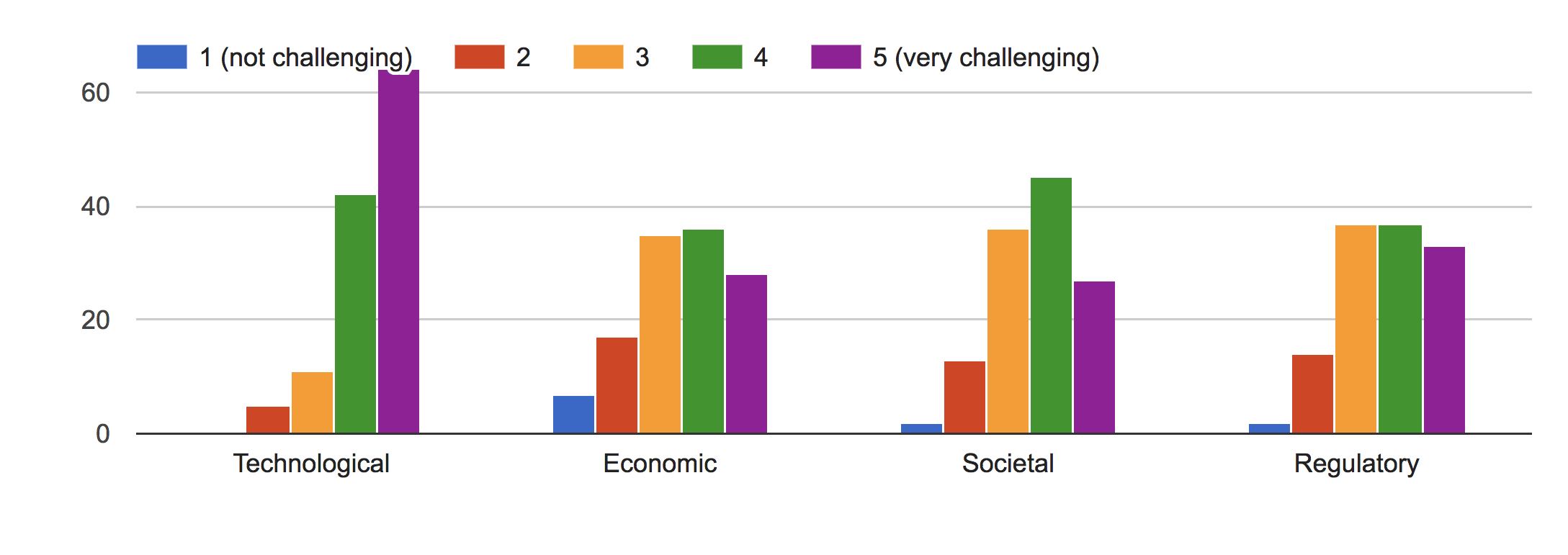

Below are some preliminary findings.

From digital twins to living labs

In his blog, James Kell from Rolls-Royce says: “The UK is small enough to collaborate well, but big enough to be a global leader. But to be successful we will need new tools, in particular better, cheaper digital twins – synthetic environments where we can develop and test new approaches before we test them in real world environments on our $35m engines.”

Professor Samia Nefti-Meziani from Salford University made a similar point: “Society needs better tools to support a sustainable future, with a new network of synthetic environments to build and fine-tune new technologies, to ensure they work in the real world and to reduce the time from their inception to deployment from years to months. Digital platforms and accessible, open-source software tools will empower SMEs, academics and the public sector to engage and benefit from these new solutions.”

Collaboration across academia and industry

As Samia highlights, “Collaboration was a recurring theme in the ThinkIns, with academic and industry partnerships essential to ensure we target the most pressing challenges and drive innovation in the sectors that need its solutions. As smart machines become more capable and cheaper, their adoption and development within the UK business ecosystem will broaden across sectors and applications.”

Government support to unlock incentives

James continued, “Collaboration needs coordination: Government is critical to convening and leading, creating new ways and incentives to work better together. The new tools will only equip our researchers and SMEs to accelerate product development, validation and speed to market if they can trust each other and all contribute and benefit. We need new ways for big industry (companies like mine) to have their challenges understood and find new partners to work with, to learn together to develop solutions and put them in place quicker. And if we join up the academics and link across our innovation infrastructure and existing test areas, we will accelerate the adoption of smart machines and unleash the multiple benefits they bring.”

The human element

‘Taking the public with us’ is critical to mass adoption, says Samia. “Key will be:

– Involving the public in co-creating research and industry ambitions, to help them understand and engage with what RAS can offer

– Engaging with those who distrust RAS, to understand their concerns and gain their confidence

– Improving RAS education and lifelong learning, so those with interest and capability can be trained in RAS and directly involved in shaping their future.”

“The sector must ‘show its workings’ and be clear about the problems and challenges to prioritise. Ensuring standards and protocols are developed to protect the input of the public and the quality of the outputs is vital to buy-in in the long term.”

David Bisset, a Robotics Consultant, commented on the Public session, highlighting that “Smart machines are already with us: cars, aeroplanes, vacuum cleaners. We don’t call them robots, but they all use that technology. To make them work requires many skills: industrial designers, AI people, sensor experts, interaction designers… and many more. At a human level we need to be able to trust, to know it’s built right and safe.”

He highlights the issue of “Tech Wash” mentioned by the public. “Is ‘smart machine’ just some clever rebranding? The needless selling of technology as a solution to every senior manager’s need to outshine their peers? We need to stop and think about the consequences of forcing through technology driven organisational change without evidence and stop needless disruption. We need to know these things will work!”

Overall, to make smart machines a success, we need to bring the discussion to a human level, to where this makes a difference to people.

Bringing it all together

Rob Buckingham, Head of RACE at the UK Atomic Energy Authority, commented on the policy ThinkIn: “Robotics includes both tools that are physically discrete from us and physical augmentation. In either case the interface between person and machine is going to be a field of rapid development driven at least in part by gaming and zooming.

The much bigger part is the informed discussion with people, with society, about the world we want to live in. Are we Canute (spoiler alert – it doesn’t end well) or are we the voice of sustainable democracy that values both people and nature?

I think Living Labs are going to sit at the heart of this… physical places where we explore the issues and opportunities together. In my field of nuclear, mock-ups have always been sensible because experimenting with the real thing is only allowed in exceptional circumstances. My hope is that we will invest in many Living Labs around the country that enable us, collectively, to explore the benefits and unintended consequences of our creativity. Of course, we might expect all of the Living Labs to be connected by data and the management of data; indeed we might expect common tech platforms and digital models of ‘nearly everything’ to be one of the highest value spin-offs.”

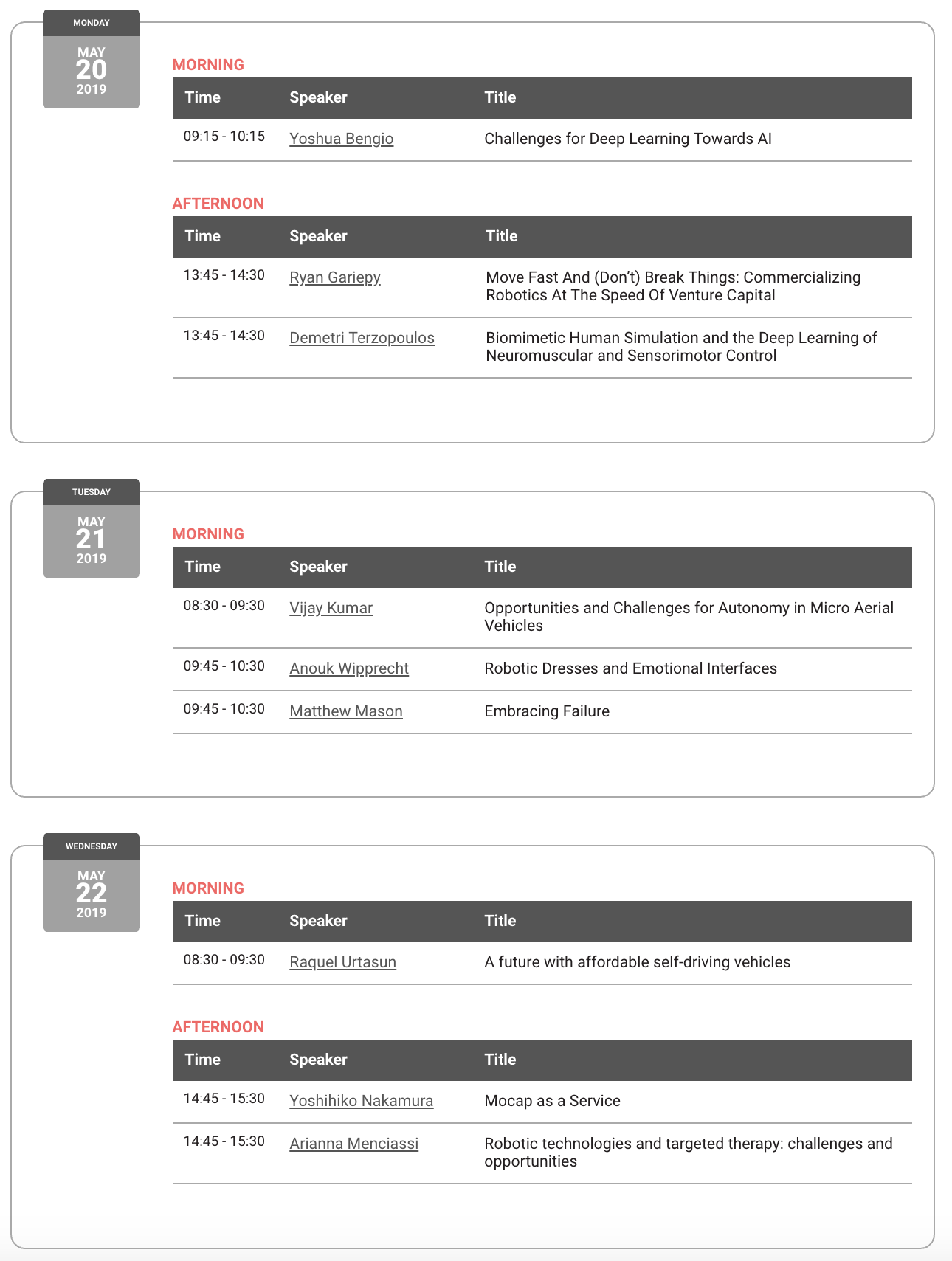

The IEEE International Conference on Robotics and Automation (

The IEEE International Conference on Robotics and Automation (

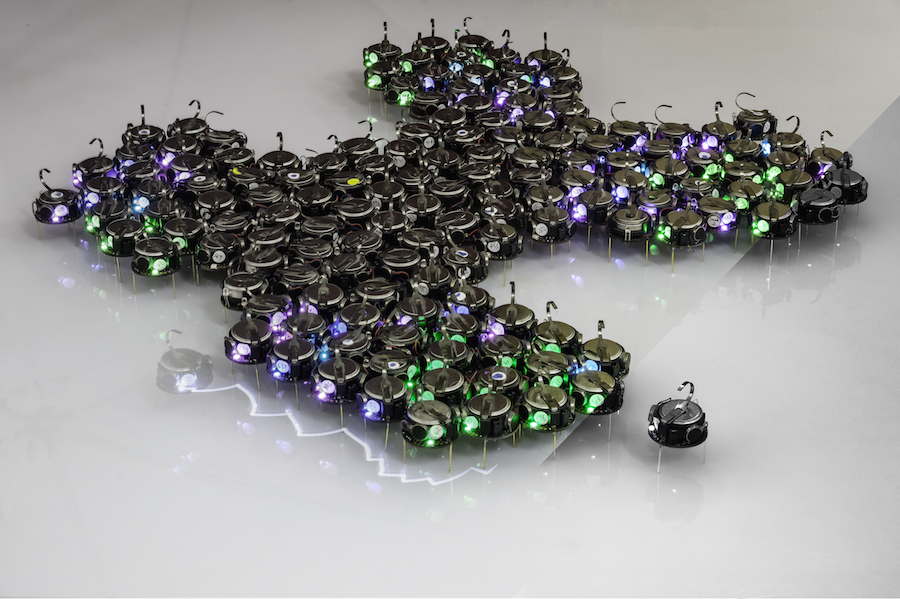

Why is a Robotics Flagship needed?

Why is a Robotics Flagship needed?

The 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (#IROS2018) will be held for the first time in Spain in the lively capital city of Madrid from 1 to 5 October. This year’s motto is “Towards a Robotic Society”.

The 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (#IROS2018) will be held for the first time in Spain in the lively capital city of Madrid from 1 to 5 October. This year’s motto is “Towards a Robotic Society”.