Robot Talk Episode 151 – Robots to study the ocean, with Simona Aracri

Claire chatted to Simona Aracri from the National Research Council of Italy about innovative robot designs for oceanography and environmental monitoring.

Simona Aracri is a researcher in the Institute of Marine Engineering at the National Research Council of Italy. Previously, she was a postdoctoral research associate at the University of Edinburgh, working on the award-winning ORCA Hub project and focusing on offshore robotic sensors. Her research uses innovative sensors and robotic platforms to push the boundaries of observational oceanography and environmental monitoring. She has spent more than six months at sea on oceanographic sampling campaigns in the Mediterranean Sea, the Pacific Ocean, and the North Sea.

UniX AI introduces Panther, the world’s first service humanoid robot to enter real household deployment, powered by its differentiated wheeled dual-arm architecture

Electrofluidic fiber muscles could enable silent robotic systems

Origami-inspired robot built from printable polymers uses electric current to move

KNF Introduces Intelligent Pump Features for Flow, Pressure and Vacuum Control and Versatile Dosing

Best agentic AI platforms: Why unified platforms win

Search “best agentic AI platform,” and you’ll drown in a sea of vendor comparisons, feature matrices, and tool catalogs. The real enemy isn’t picking the wrong vendor, though. Building your own AI solution can kill your ambitions before they even get off the ground.

In most enterprises, teams are cobbling together their own mix-and-match stack of open-source tools, cloud services, and point solutions. Marketing has its chatbot builder, IT is experimenting with some hyperscaler’s agent framework, and data science is spinning up vector databases on whatever cloud credits they can scrounge up.

That’s shadow AI in a nutshell, with governance gaps that no compliance audit can easily untangle.

Everyone loves talking about building agents. That’s the easy part.

The part nobody wants to admit is that most of those agents will never make it out of a demo. Siloed teams don’t have a unified way to run them, govern them, or keep them from stepping on each other’s toes.

Enterprises don’t need more pet projects. They need a governed agent workforce: AI that works across teams, clouds, and business systems without falling apart at the slightest disruption.

Key takeaways

- Fragmented AI stacks slow enterprises down. Tool sprawl and shadow AI make agents brittle, hard to govern, and difficult to scale.

- End-to-end means unifying build, deploy, and govern. A single control plane eliminates handoff failures and gets agents into production faster.

- The blank-slate problem is real. Reference architectures, agent templates, and pre-built starter patterns help teams deliver value quickly instead of rebuilding from zero.

- Openness only works with governance. Supporting any tool or model means nothing without consistent security, lineage, and policy controls traveling with every agent.

- Structural partnerships accelerate enterprise readiness. Co-engineered integrations with infrastructure and application providers give teams production-grade agentic workflows without months of manual setup.

Why fragmentation is the real enemy to enterprise AI

Walk into any enterprise today and ask how many different AI tools are running across the organization. The honest answer is usually, “We have no idea.” That’s not incompetence. It’s the natural result of teams trying to perform their jobs as quickly and accurately as possible.

Shadow AI, duplicated efforts, and niche point solutions are all part of the problem.

This leads to two common failure modes that kill more AI initiatives than any vendor selection mistake ever could:

- Tool sprawl and “LEGO block” architectures: Somewhere along the way, “shipping an AI use case” turned into a scavenger hunt. Teams are stitching together 10–14 tools, like vector stores, orchestrators, log aggregators, and governance band-aids, just to get a single agent out the door. Each API and integration point is one more place for a failure, a security exposure, or a performance meltdown to start. A project that should take weeks dissolves into a multi-month integration saga nobody signed up for.

- Siloed, cloud-specific stacks that don’t interoperate: Speed over flexibility is how most teams end up locked into a hyperscaler ecosystem. It’s smooth sailing until you try to plug into a system you don’t control, deploy in a regulated environment, or collaborate with a partner on a different platform. Then you end up choosing between two painful paths: move fast and lose control, or keep control and fall behind.

Any serious conversation about agentic AI platforms has to start with eliminating this fragmentation. Everything else is secondary.

What “end-to-end” actually means for agentic AI

“End-to-end” gets thrown around by nearly every vendor in the space. But in an enterprise context, it has a specific meaning that most tool collections fail to meet.

Real end-to-end coverage spans three critical stages, each with specific requirements that fragmented tool chains struggle to address:

- Build: Teams shouldn’t start from scratch every time they need an agent. That means reference architectures, reusable patterns, and starter kits aligned with real enterprise workflows.

- Operate: Single agents are proofs of concept. Production systems need dozens or hundreds of agents coordinating across systems, sharing memory, handling errors gracefully, and optimizing for cost and latency. That requires sophisticated orchestration, continuous evaluation, and the ability to adjust behavior based on real-world performance.

- Govern: Lineage, access control, policy enforcement, and auditability are needed the moment agents start making decisions and interacting with real business systems. Governance isn’t a checklist. It’s the operating system.

Stitching together separate tools for each stage creates drift, governance gaps, and extended time-to-production. Teams spend more time on integration than innovation, and by the time they’re ready to deploy, the business requirements have already moved on.

From building agents to running an agent workforce

Most platform conversations go off the rails by focusing on building individual agents instead of running a workforce of agents at scale.

That shift changes everything. Running a workforce means you need:

- Shared memory so agents can learn from each other’s interactions

- Consistent reasoning behavior so agents don’t make contradictory decisions

- Centralized policies that update across the entire workforce without redeploying everything

- Unified observability so you can debug multi-agent workflows without chasing logs across a dozen different systems

Most importantly, you need agent lifecycle management at the workforce level. New agents should automatically inherit organizational knowledge and policies. Updates should roll out consistently across related agents to prevent coordination failures.
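One way to picture "centralized policies that update across the entire workforce without redeploying everything" is a policy store that every agent reads by reference at decision time. The sketch below is illustrative only: `PolicyStore`, `Agent`, and the `allowed_tools` rule are hypothetical names, not part of any platform described here.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyStore:
    """Hypothetical central policy registry shared by every agent."""
    rules: dict = field(default_factory=dict)
    version: int = 0

    def update(self, **changes):
        # A single update is visible to every agent holding this store.
        self.rules.update(changes)
        self.version += 1


@dataclass
class Agent:
    """Hypothetical agent that consults the shared store on each decision."""
    name: str
    policies: PolicyStore  # a reference to the shared store, not a copy

    def may_call(self, tool):
        return tool in self.policies.rules.get("allowed_tools", set())


store = PolicyStore()
store.update(allowed_tools={"search"})
billing = Agent("billing", store)
support = Agent("support", store)

# Changing the policy once changes it for both agents; nothing is redeployed.
store.update(allowed_tools={"search", "email"})
```

Because agents look the rule up at call time rather than caching it at construction, new agents inherit the current policy simply by being handed the same store.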

Building individual agents is a development problem. Running an agent workforce is an operational challenge that requires platform-level thinking. The two require fundamentally different approaches.

How to solve the blank slate problem

The industry loves to offer infinite flexibility, as if giving teams a blank canvas is a gift. It isn’t. Without a starting point, teams spend months making foundational decisions that have already been solved elsewhere, while time-to-value slips straight into the next fiscal year.

What teams actually need is momentum.

That means starting with fully formed agent templates and reference architectures shaped around real enterprise workflows. Not hypotheticals or academic examples, but real document pipelines, supply chain agents, and customer service automations with the hard edge cases already accounted for.

The best templates aren’t code samples polished for a conference demo. They’re production-ready patterns co-engineered with the infrastructure and application providers enterprises already run on, covering security, governance, error handling, and integrations from the start.

The difference in outcome is significant. Teams that start from proven patterns ship in weeks. Teams that start from scratch are still building foundations when the business requirements change.

When the question becomes “What has AI actually delivered?”, blank slates won’t have an answer. Proven patterns will.

Why a unified, vendor-neutral control plane matters

Enterprise AI teams face a structural tension: the tools and infrastructure they need to move fast are rarely the same ones IT needs to maintain control, security, and compliance.

That tension doesn’t resolve itself. It has to be designed around.

A unified control plane gives every team — AI developers, IT, security, and business owners — a single operating environment, without forcing them to abandon the tools they already use. Models, databases, frameworks, and deployment targets remain flexible. Governance, lineage, and policy enforcement travel with every agent, regardless of where it runs.

This matters most at the edges: sovereign cloud deployments, regulated industries, air-gapped environments, and hybrid infrastructure. These are precisely the situations where tool-by-tool governance breaks down, and where a single control plane proves its value.

Vendor neutrality isn’t a feature. It’s the prerequisite for enterprise AI that can scale beyond a single team, a single cloud, or a single use case. As AI becomes more deeply embedded in enterprise systems, the ability to govern across any environment becomes the only sustainable path forward.

What deep infrastructure partnerships actually enable

Not all technology partnerships are equal. Logo-level integrations add a name to a slide. Structural, co-engineered partnerships shape platform architecture and change what’s actually possible for enterprise teams.

The practical difference shows up in time and complexity. When infrastructure capabilities like inference microservices, reasoning models, guardrail frameworks, GPU optimizations, and decision engines are co-engineered into a platform rather than bolted on, teams get access to them without months of manual setup, validation, and tuning.

That acceleration unlocks use cases that require combining reasoning, simulation, and optimization together:

- Supply chain routing that considers real-time constraints and optimizes across multiple objectives

- Digital twins that simulate complex scenarios and recommend actions

- Clinical workflows that reason through patient data while maintaining strict privacy controls

Operational reliability matters as much as technical depth. Production-grade architectures need to be validated across cloud, on-premises, sovereign, and air-gapped environments. Co-engineered integrations carry that validation with them. Teams inherit it rather than having to build it themselves.

The technical and organizational impact of unifying build, deploy, and govern

The technical case for unifying build, deploy, and govern is well understood. The organizational impact is where the real breakthroughs happen.

Assumptions stay intact through every handoff. The entire multi-agent workflow is traceable in one place, so when something misbehaves, teams can diagnose and fix it without hunting through scattered logs across disconnected systems.

Organizationally, a unified platform creates shared clarity. AI teams, IT, security, compliance, and business owners operate from the same source of truth. Governance stops being a bureaucratic burden passed between teams and becomes a shared operating language built into the platform itself.

That shift has a direct effect on shadow AI. When the official platform is easier to use than rogue alternatives, teams stop building around it. Fragmentation recedes, not because it was mandated away, but because the better path became obvious.

What multi-agent orchestration actually requires

Single-agent demos make AI look straightforward. Multi-agent systems reveal the real complexity.

The moment you move beyond one agent, the gaps in most toolchains become obvious. Shared memory, consistent governance, workflow supervision, and unified debugging aren’t optional features. They’re the foundation that keeps multi-agent systems from becoming unmanageable.

Effective multi-agent orchestration requires several capabilities working together: dependency management and retries to handle failures gracefully, dynamic workload optimization to balance cost and performance across agents, and consistent safety and reasoning guardrails applied uniformly across the entire system.
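As a rough illustration of "dependency management and retries," the sketch below runs a small workflow in dependency order and retries a step that fails transiently. It uses only the Python standard library (`graphlib`, Python 3.9+). The function name `run_workflow`, its parameters, and the convention that each task receives the results so far are assumptions made for this example, not a description of any vendor's orchestrator.

```python
import time
from graphlib import TopologicalSorter


def run_workflow(tasks, dependencies, max_attempts=3, backoff_s=0.0):
    """Run tasks in dependency order, retrying each task on failure.

    tasks: dict mapping a task name to a callable that takes the dict of
           results produced so far (hypothetical convention for this sketch).
    dependencies: dict mapping a task name to the set of names it depends on.
    """
    graph = {name: dependencies.get(name, set()) for name in tasks}
    results = {}
    # static_order() yields every task after all of its dependencies.
    for name in TopologicalSorter(graph).static_order():
        for attempt in range(1, max_attempts + 1):
            try:
                results[name] = tasks[name](results)
                break
            except Exception:
                if attempt == max_attempts:
                    raise  # give up and surface the failure to the caller
                # Exponential backoff between attempts.
                time.sleep(backoff_s * 2 ** (attempt - 1))
    return results
```

A real orchestrator would also need timeouts, idempotency guarantees, and per-task retry policies; this sketch only shows how ordering and retries fit together.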

Without these, multi-agent workflows create more operational risk than they eliminate. With them, a coordinated agent workforce becomes possible: one where agents share context, operate under consistent policies, and escalate appropriately when they reach the boundaries of their autonomy.

The workforce analogy holds here. A functioning workforce, human or AI, needs coordination, shared knowledge, guardrails, and clear escalation paths. Orchestration is what makes that possible at scale.

What a unified platform actually delivers

At some point, the architecture discussion has to give way to outcomes. Here’s what enterprises consistently see when the AI lifecycle is properly unified:

- Production timelines collapse. Teams that used to spend 12–18 months on build cycles ship in weeks when they’re not rebuilding foundational infrastructure from scratch. The difference isn’t effort — it’s starting position.

- Inference costs stay manageable. Multi-agent systems can burn through budgets faster than they generate insights. Real-time workload optimization and GPU-aware scheduling keep performance high and costs predictable.

- Resilience increases. When orchestration, retries, and error handling are handled at the platform level, a single failure can’t topple an entire workflow. Issues surface before they become customer-visible outages.

- Governance risk shrinks. Lineage, access control, and policy enforcement remain consistent across all agents. No blind spots, no mystery systems, no surprises in production. Audits become routine rather than disruptive.

These outcomes share a common cause: when the full lifecycle is unified, teams spend their energy on problems that matter to the business instead of problems created by their own infrastructure.

Build an agent workforce, not another tool stack

There’s a point where collecting more tools stops being a strategy and starts being a liability. Every addition creates another integration to maintain, another governance gap to close, and another point of failure to debug at the worst possible moment.

The enterprises making real progress with agentic AI aren’t the ones with the longest tool lists. They’re the ones that stopped stitching and started operating — with platforms that handle coordination, governance, and lifecycle management as core functions rather than afterthoughts.

An agent workforce needs to behave like a real team: coordinated, reliable, scalable, and aligned with business outcomes. That doesn’t happen by accident. It happens by design.

Ready to move from experiments to production-grade impact? See how the Agent Workforce Platform works.

FAQs

What makes an agentic AI platform truly “end-to-end”?

An end-to-end agentic AI platform unifies the entire lifecycle: building agents, orchestrating multi-agent workflows, deploying them across environments, and governing them with consistent policies. Most vendors offer a collection of tools that must be stitched together manually.

A true end-to-end platform provides a single control plane with shared lineage, observability, and governance, so teams can move from prototype to production without rebuilding everything.

Why is fragmentation such a major problem for enterprises?

When teams use different tools, LLMs, and workflows, enterprises end up with brittle agents, inconsistent policies, duplicated infrastructure, and security blind spots. Most production failures happen at the handoff between AI, IT, and DevOps.

Fragmentation also fuels shadow AI, where teams build unmanaged agents without oversight. A unified platform removes these gaps by giving all stakeholders a shared environment and the governance guardrails they need.

How does DataRobot differ from hyperscalers or open-source toolchains?

Hyperscalers and open-source stacks provide components such as vector stores, LLMs, gateways, and observability tools, but customers must assemble, integrate, and secure them themselves. DataRobot provides a single platform that unifies these pieces, supports any model or framework, and embeds governance from day one.

The difference is agent lifecycle management, multi-agent orchestration, and vendor-neutral governance that scales across the business.

How does the NVIDIA partnership improve enterprise readiness?

DataRobot is co-engineered with NVIDIA, giving customers day-zero access to NVIDIA NIMs, NeMo Guardrails, decision optimizers like cuOpt, and industry-specific SDKs without manual setup.

These integrations turn advanced models and infrastructure into usable, production-grade agentic patterns that would otherwise require months of assembly and validation.

Why does governance need to be embedded from the start?

Governance added at the end creates gaps in lineage, security, access control, and auditability, especially when agents move between tools. DataRobot embeds governance into every stage of the lifecycle: versioning, approvals, policy enforcement, monitoring, and runtime controls are applied automatically. This prevents drift, ensures reproducibility, and gives AI leaders visibility across all agents and workloads, even in highly regulated environments.

How does DataRobot support multi-agent systems at scale?

Multi-agent systems break easily when orchestrators, tools, and safety frameworks aren’t aligned. DataRobot handles coordination, retries, shared memory, policy consistency, and debugging across agents through Covalent orchestration, syftr optimization, and NVIDIA guardrails. Instead of running isolated agent demos, enterprises can run a governed, scalable workforce of agents that collaborate reliably across systems.

The post Best agentic AI platforms: Why unified platforms win appeared first on DataRobot.

These AI-powered guide dogs don’t just lead, they talk

Revolutionizing Cheese Production with AI and Machine Vision: A Success Story from Eberle Automatische Systeme

Generative AI improves a wireless vision system that sees through obstructions

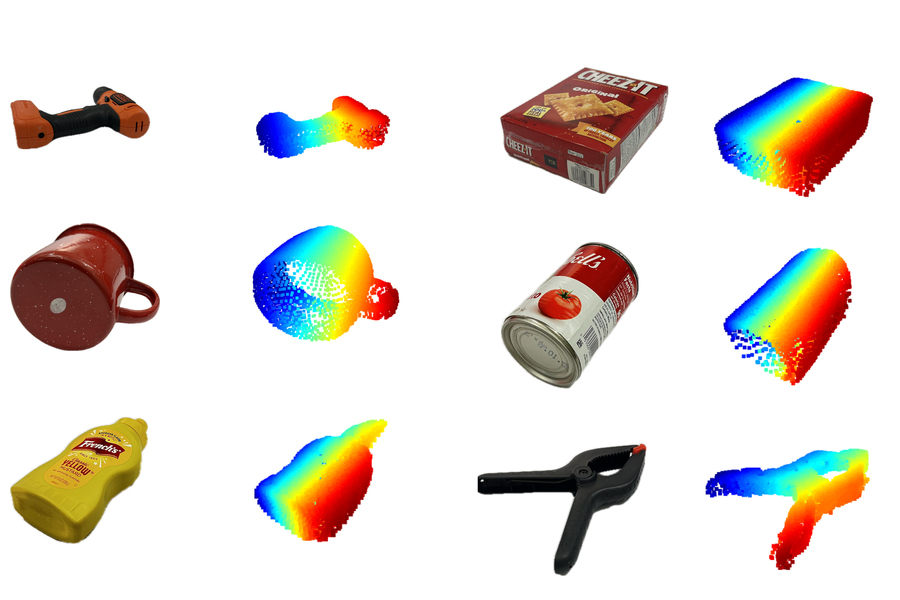

MIT researchers utilized specially trained generative AI models to create a system that can complete the shape of hidden 3D objects, like the ones pictured. Credit: Courtesy of the researchers.

By Adam Zewe

MIT researchers have spent more than a decade studying techniques that enable robots to find and manipulate hidden objects by “seeing” through obstacles. Their methods utilize surface-penetrating wireless signals that reflect off concealed items.

Now, the researchers are leveraging generative artificial intelligence models to overcome a longstanding bottleneck that limited the precision of prior approaches. The result is a new method that produces more accurate shape reconstructions, which could improve a robot’s ability to reliably grasp and manipulate objects that are blocked from view.

This new technique builds a partial reconstruction of a hidden object from reflected wireless signals and fills in the missing parts of its shape using a specially trained generative AI model.

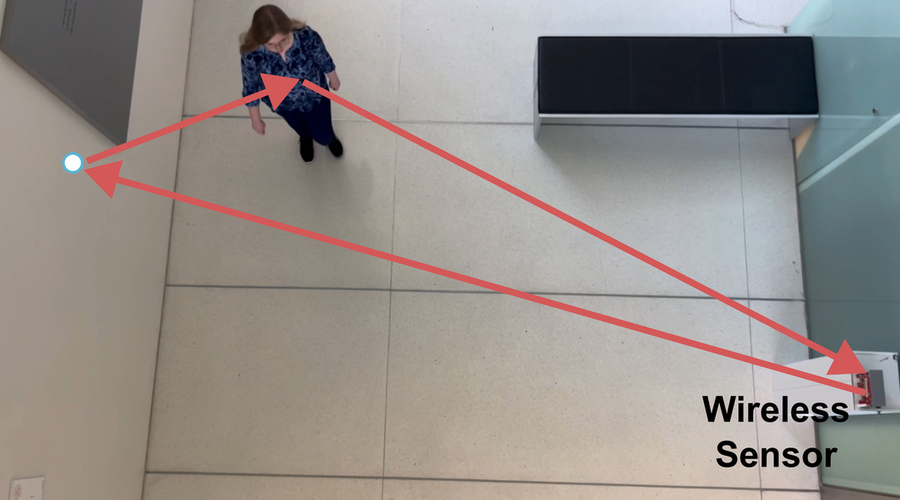

The researchers also introduced an expanded system that uses generative AI to accurately reconstruct an entire room, including all the furniture. The system utilizes wireless signals sent from one stationary radar, which reflect off humans moving in the space.

This overcomes one key challenge of many existing methods, which require a wireless sensor to be mounted on a mobile robot to scan the environment. And unlike some popular camera-based techniques, their method preserves the privacy of people in the environment.

These innovations could enable warehouse robots to verify packed items before shipping, eliminating waste from product returns. They could also allow smart home robots to understand someone’s location in a room, improving the safety and efficiency of human-robot interaction.

“What we’ve done now is develop generative AI models that help us understand wireless reflections. This opens up a lot of interesting new applications, but technically it is also a qualitative leap in capabilities, from being able to fill in gaps we were not able to see before to being able to interpret reflections and reconstruct entire scenes,” says Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics group in the MIT Media Lab, and senior author of two papers on these techniques. “We are using AI to finally unlock wireless vision.”

Adib is joined on the first paper by lead author and research assistant Laura Dodds; as well as research assistants Maisy Lam, Waleed Akbar, and Yibo Cheng; and on the second paper by lead author and former postdoc Kaichen Zhou; Dodds; and research assistant Sayed Saad Afzal. Both papers will be presented at the IEEE Conference on Computer Vision and Pattern Recognition.

Surmounting specularity

The Adib Group previously demonstrated the use of millimeter wave (mmWave) signals to create accurate reconstructions of 3D objects that are hidden from view, like a lost wallet buried under a pile.

These waves, which are the same type of signals used in Wi-Fi, can pass through common obstructions like drywall, plastic, and cardboard, and reflect off hidden objects.

But mmWaves usually reflect in a specular manner, which means a wave reflects in a single direction after striking a surface. So large portions of the surface will reflect signals away from the mmWave sensor, making those areas effectively invisible.
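The geometry behind this can be sketched with the standard mirror-reflection formula, r = d − 2(d·n)n, where d is the incoming direction and n is the unit surface normal. The helper names and the `tolerance_deg` parameter (a stand-in for the sensor's angular aperture) are assumptions for illustration and are not taken from the researchers' system.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def reflect(direction, normal):
    """Mirror an incoming ray direction about a surface normal."""
    n = normalize(normal)
    k = 2.0 * dot(direction, n)
    return tuple(d - k * c for d, c in zip(direction, n))


def returns_to_sensor(sensor, point, normal, tolerance_deg=5.0):
    """True if the specular bounce at `point` heads back toward `sensor`.

    tolerance_deg is a hypothetical angular aperture for the sensor.
    """
    incoming = normalize(tuple(p - s for p, s in zip(point, sensor)))
    outgoing = reflect(incoming, normal)
    back = tuple(-c for c in incoming)  # direction from the surface to the sensor
    cos_angle = max(-1.0, min(1.0, dot(outgoing, back)))
    return math.degrees(math.acos(cos_angle)) <= tolerance_deg


# A surface patch facing the sensor is visible; one tilted 45 degrees is not.
facing = returns_to_sensor((0, 0, 1), (0, 0, 0), (0, 0, 1))
tilted = returns_to_sensor((0, 0, 1), (0, 0, 0), (1, 0, 1))
```

In this idealized model only patches whose normals point almost directly at the sensor return energy to it, which is why sides and undersides of an object go missing from the raw reconstruction.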

“When we want to reconstruct an object, we are only able to see the top surface and we can’t see any of the bottom or sides,” Dodds explains.

The researchers previously used principles from physics to interpret reflected signals, but this limits the accuracy of the reconstructed 3D shape.

In the new papers, they overcame that limitation by using a generative AI model to fill in parts that are missing from a partial reconstruction.

“But the challenge then becomes: How do you train these models to fill in these gaps?” Adib says.

Usually, researchers use extremely large datasets to train a generative AI model, which is one reason models like Claude and Llama exhibit such impressive performance. But no mmWave datasets are large enough for training.

Instead, the researchers adapted the images in large computer vision datasets to mimic the properties in mmWave reflections.

“We were simulating the property of specularity and the noise we get from these reflections so we can apply existing datasets to our domain. It would have taken years for us to collect enough new data to do this,” Lam says.

The researchers embed the physics of mmWave reflections directly into these adapted data, creating a synthetic dataset they use to teach a generative AI model to perform plausible shape reconstructions.

The complete system, called Wave-Former, proposes a set of potential object surfaces based on mmWave reflections, feeds them to the generative AI model to complete the shape, and then refines the surfaces until it achieves a full reconstruction.

Wave-Former was able to generate faithful reconstructions of about 70 everyday objects, such as cans, boxes, utensils, and fruit, boosting accuracy by nearly 20 percent over state-of-the-art baselines. The objects were hidden behind or under cardboard, wood, drywall, plastic, and fabric.

The team also built an expanded system that fully reconstructs entire indoor scenes by leveraging wireless signal reflections off humans moving in a room. Credit: Courtesy of the researchers.

Seeing “ghosts”

The team used this same approach to build an expanded system that fully reconstructs entire indoor scenes by leveraging mmWave reflections off humans moving in a room.

Human motion generates multipath reflections. Some mmWaves reflect off the human, then reflect again off a wall or object, and then arrive back at the sensor, Dodds explains.

These secondary reflections create so-called “ghost signals,” which are reflected copies of the original signal that change location as a human moves. These ghost signals are usually discarded as noise, but they also hold information about the layout of the room.

“By analyzing how these reflections change over time, we can start to get a coarse understanding of the environment around us. But trying to directly interpret these signals is going to be limited in accuracy and resolution,” Dodds says.

They used a similar training method to teach a generative AI model to interpret those coarse scene reconstructions and understand the behavior of multipath mmWave reflections. This model fills in the gaps, refining the initial reconstruction until it completes the scene.

They tested their scene reconstruction system, called RISE, using more than 100 human trajectories captured by a single mmWave radar. On average, RISE generated reconstructions that were about twice as precise as those from existing techniques.

In the future, the researchers want to improve the granularity and detail in their reconstructions. They also want to build large foundation models for wireless signals, like the foundation models GPT, Claude, and Gemini for language and vision, which could open new applications.

This work is supported, in part, by the National Science Foundation (NSF), the MIT Media Lab, and Amazon.

Find out more

- Wave-Former: Through-Occlusion 3D Reconstruction via Wireless Shape Completion, Laura Dodds, Maisy Lam, Waleed Akbar, Yibo Cheng, Fadel Adib

- RISE: Single Static Radar-based Indoor Scene Understanding, Kaichen Zhou, Laura Dodds, Sayed Saad Afzal, Fadel Adib

Samsung Electronics (005930.KS) — AI Equity Research | April 2026

This analysis was produced by an AI financial research system. All data is sourced exclusively from publicly available filings, earnings transcripts, government data, and free financial aggregators — no proprietary data, paid research, or institutional tools are used. Every figure cited can be independently verified by the reader using the sources listed at the end...

The post Samsung Electronics (005930.KS) — AI Equity Research | April 2026 appeared first on 1redDrop.

Magnetic coil setup guides microrobots without seeing them

Wearable robots improve coordination between pairs of violin players

The Biggest Impact of Autonomous Capture and AI

Walmart Inc. (WMT) — AI Equity Research | April 2026

This analysis was produced by an AI financial research system. All data is sourced exclusively from publicly available filings, earnings transcripts, government data, and free financial aggregators — no proprietary data, paid research, or institutional tools are used. Every figure cited can be independently verified by the reader using the sources listed at the end...

The post Walmart Inc. (WMT) — AI Equity Research | April 2026 appeared first on 1redDrop.