Michael M. Lee

In early 19th-century England, the Luddites rebelled against the introduction of machinery in the textile industry. The Luddites’ name originates from the mythical tale of a weaver’s apprentice called Ned Ludd who, in an act of anger against increasingly dangerous and poor working conditions, supposedly destroyed two knitting machines. Contrary to popular belief, the Luddites were not against technology because they were ignorant or inept at using it (1). In fact, the Luddites were perceptive artisans who cared about their craft, and some even operated machinery. Moreover, they understood the consequences of introducing machinery to their craft and working conditions. Specifically, they were deeply concerned about how technology was being used to shift the balance of power between workers and owners of capital.

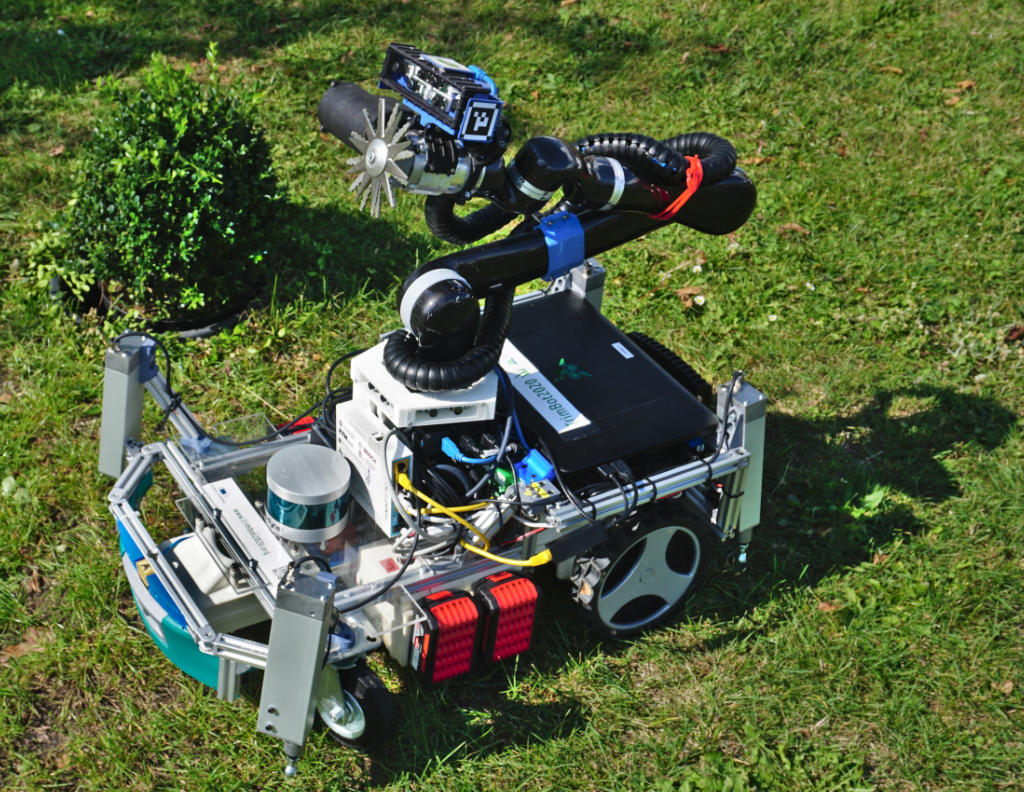

The problem is not the advent of technology; the problem is how technology is applied. This is the essence of the intensely polarizing debate on robotic labor. Too often the debate is oversimplified to two opposing factions: the anti-tech pessimist versus the pro-tech optimist. On the one hand, the deeply pessimistic make the case that there will be greatly diminished workers’ rights, mass joblessness, and a widening gulf between socioeconomic classes. On the other hand, the overly optimistic believe that technology will bring better jobs and unbridled economic wealth. The reality is that both sides, although extreme, have valid points. The debate in its present form lacks a middle ground, leaving little room for nuanced and thoughtful discussion. It is as simplistic to assume that those who are pessimistic about technological change do not understand the potential of technology as it is incorrect to conclude that those who are optimistic about technological change are not thinking about the consequences. Pessimists may fully understand the potential of technological change and still feel that the drawbacks outweigh the benefits. Optimists may not want change at any cost, but they feel that the costs are worthwhile.

There are various examples of how the introduction of machines has made industries more efficient and innovative, raising both the quality of work and the quality of output (for example, automated teller machines in banking, automated telephone exchanges in telecommunications, and industrial robots in manufacturing). An important detail in these success stories that is rarely mentioned, however, is the timeline. The first industrial revolution did lead to higher levels of urbanization and rises in output; however, crucially, it took several decades before workers saw higher wages. This period of constant wages against the backdrop of rising output per worker is known as Engels’ pause, named after Friedrich Engels, the philosopher who first observed it (2).

Timing matters because, although there will be gains in the long term, there will certainly be losses in the short term. Support for retraining those most at risk of job displacement is needed to bridge this gap. Unfortunately, progress is disappointingly slow on this front. On one level, there are those who are apathetic to the challenges facing the workforce and feel that the loss of jobs is part of the cut and thrust of technological change. On another level, it is possible that there is a lack of awareness of the challenges of transitioning people to a new era of work. We need to bring change to the first case and light to the second. Those at risk of being displaced by machines need to feel empowered by being a part of the change and not a by-product of it. Moreover, in developing the infrastructure to retrain and support those at risk, we must also recognize that retraining is itself a solution encased in many unsolved problems that include technical, economic, social, and even cultural challenges.

There is more that roboticists should be doing to advance the debate on robotic labor beyond the current obsessive focus on job-stealing robots. First, roboticists should provide a critical and fair assessment of the current technological state of robots. If the public were aware of just how far the field of robotics needs to advance to realize highly capable and truly autonomous robots, then they might be more reassured. Second, roboticists should openly communicate their research goals and aspirations. Understanding that, in the foreseeable future, robotics will be focused on task replacement, not comprehensive job replacement, changes the conversation from how robots will take jobs from workers to how robots can help workers do their jobs better. The ideas of collaborative robots and multiplicity are not new (3), but they seldom get the exposure that they deserve. Opening an honest and transparent dialogue between roboticists and the general public will go a long way toward building a middle ground that will elevate discussion on the future of work.

References

1. J. Sadowski, “I’m a Luddite. You should be one too,” The Conversation, 25 November 2021 [accessed 3 April 2022].

2. R. C. Allen, Engels’ pause: Technical change, capital accumulation, and inequality in the British industrial revolution. Explor. Econ. Hist. 46, 418–435 (2009).

3. K. Goldberg, Editorial multiplicity has more potential than singularity. IEEE Trans. Autom. Sci. Eng. 12, 395 (2015).

From “Lee, M. M., Robots will open more doors than they close. Science Robotics, 7, 65 (2022).” Reprinted with permission from AAAS. Further distribution or republication of this article is not permitted without prior written permission from AAAS.