Researchers develop interface for underwater robotic equipment

A Look Into HDS Global’s Ultra-Lean, Hyper-Personalized eCommerce Fulfillment Model

IEEE 17th International Conference on Automation Science and Engineering paper awards (with videos)

The IEEE International Conference on Automation Science and Engineering (CASE) is the flagship automation conference of the IEEE Robotics and Automation Society and constitutes the primary forum for cross-industry and multidisciplinary research in automation. Its goal is to provide broad coverage and dissemination of foundational research in automation among researchers, academics, and practitioners. Here we bring you the online presentations by the finalists of the four awards given at the conference. Congratulations to all the finalists and winners!

Best student paper award

Winner

- Designing a User-Centred and Data-Driven Controller for Pushrim-Activated Power-Assisted Wheels: A Case Study

Mahsa Khalili, H.F. Machiel Van der Loos and Jaimie Borisoff

Finalists

- Including Sparse Production Knowledge into Variational Autoencoders to Increase Anomaly Detection Reliability

Tom Hammerbacher, Markus Lange-Hegermann, Gorden Platz

- Synthesis and Implementation of Distributed Supervisory Controllers with Communication Delays

Lars Moormann, Reinier Hendrik Jacob Schouten, Joanna Maria Van de Mortel-Fronczak, Wan Fokkink, Jacobus E. Rooda

- Optimal Planning of Internet Data Centers Decarbonized by Hydrogen-Water-Based Energy Systems

Jinhui Liu, Zhanbo Xu, Jiang Wu, Kun Liu, Xunhang Sun, Xiaohong Guan

- Deep Reinforcement Learning for Prefab Assembly Planning in Robot-Based Prefabricated Construction

Zhu Aiyu, Gangyan Xu, Pieter Pauwels, Bauke de Vries, Meng Fang

- Singularity-Aware Motion Planning for Multi-Axis Additive Manufacturing

Charlie C.L. Wang, Tianyu Zhang, Xiangjia Chen, Guoxin Fang, Yingjun Tian

Best conference paper award

Winner

- Extended Fabrication-Aware Convolution Learning Framework for Predicting 3D Shape Deformation in Additive Manufacturing

Yuanxiang Wang, Cesar Ruiz, Qiang Huang

Finalists

- Probabilistic Movement Primitive Control Via Control Barrier Functions

Mohammadreza Davoodi, Asif Iqbal, Joe Cloud, William Beksi, Nicholas Gans

- Efficient Optimization-Based Falsification of Cyber-Physical Systems with Multiple Conjunctive Requirements

Logan Mathesen, Giulia Pedrielli, Georgios Fainekos

Best application paper award

Winner

- A Seamless Workflow for Design and Fabrication of Multimaterial Pneumatic Soft Actuators

Lawrence Smith, Travis Hainsworth, Zachary Jordan, Xavier Bell, Robert MacCurdy

Finalists

- Dynamic Multi-Goal Motion Planning with Range Constraints for Autonomous Underwater Vehicles Following Surface Vehicles

James McMahon, Erion Plaku

- OpenUAV Cloud Testbed: a Collaborative Design Studio for Field Robotics

Harish Anand, Stephen A. Rees, Zhiang Chen, Ashwin Jose Poruthukaran, Sarah Bearman, Lakshmi Gana Prasad Antervedi, Jnaneshwar Das

Best healthcare automation paper award

Winner

- Hospital Beds Planning and Admission Control Policies for COVID-19 Pandemic: A Hybrid Computer Simulation Approach

Yiruo Lu, Yongpei Guan, Xiang Zhong, Jennifer Fishe, Thanh Hogan

Finalists

- Rollout-Based Gantry Call-Back Control for Proton Therapy Systems

Feifan Wang, Yu-Li Huang, Feng Ju

- Progress in Development of an Automated Mosquito Salivary Gland Extractor: A Step Forward to Malaria Vaccine Mass Production

Wanze Li, Zhuoqun Zhang, Zhuohong He, Parth Vora, Alan Lai, Balazs Vagvolgyi, Simon Leonard, Anna Goodridge, Ioan Iulian Iordachita, Stephen L. Hoffman, Sumana Chakravarty, B Kim Lee Sim, Russell H. Taylor

Teaching robots to think like us: Brain cells, electrical impulses steer robot through maze

Commercial UAVs have potential to halve CO2 emissions for freight deliveries

Mobile Robots On The March – 53,000 Warehouses & Factories Will Have Deployed AMRs & AGVs By End Of 2025

Light-fueled torsional soft robot able to rapidly climb stairs

NVIDIA and ROS Teaming Up To Accelerate Robotics Development

Amit Goel, Director of Product Management for Autonomous Machines at NVIDIA, discusses the new collaboration between Open Robotics and NVIDIA. The collaboration will improve the way ROS and NVIDIA’s line of products such as Isaac SIM and the Jetson line of embedded boards operate together.

NVIDIA’s Isaac SIM lets developers build robust and scalable simulations, dramatically reducing the cost of capturing real-world data and shortening development time.

Their Jetson line of embedded boards is core to many robotics architectures, leveraging hardware-optimized chips for machine learning, computer vision, video processing, and more.

The improvements to ROS will allow robotics companies to better utilize the available computational power, while still developing on the robotics-centric platform familiar to many.

Amit Goel

Amit Goel is Director of Product Management for Autonomous Machines at NVIDIA, where he leads the product development of NVIDIA Jetson, the most advanced platform for AI computing at the edge.

Amit has more than 15 years of experience in the technology industry working in both software and hardware design roles. Prior to joining NVIDIA in 2011, he worked as a senior software engineer at Synopsys, where he developed algorithms for statistical performance modeling of digital designs.

Amit holds a Bachelor of Engineering in electronics and communication from Delhi College of Engineering, a Master of Science in electrical engineering from Arizona State University, and an MBA from the University of California at Berkeley.


One giant leap for the mini cheetah

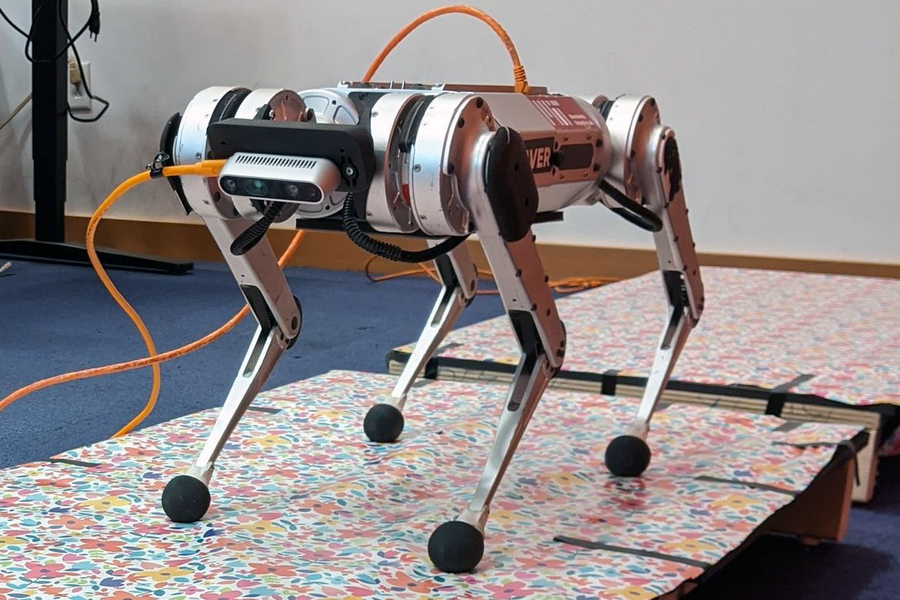

MIT researchers have developed a system that improves the speed and agility of legged robots as they jump across gaps in the terrain. Credits: Photo courtesy of the researchers

By Adam Zewe | MIT News Office

A loping cheetah dashes across a rolling field, bounding over sudden gaps in the rugged terrain. The movement may look effortless, but getting a robot to move this way is an altogether different prospect.

In recent years, four-legged robots inspired by the movement of cheetahs and other animals have made great leaps forward, yet they still lag behind their mammalian counterparts when it comes to traveling across a landscape with rapid elevation changes.

“In those settings, you need to use vision in order to avoid failure. For example, stepping in a gap is difficult to avoid if you can’t see it. Although there are some existing methods for incorporating vision into legged locomotion, most of them aren’t really suitable for use with emerging agile robotic systems,” says Gabriel Margolis, a PhD student in the lab of Pulkit Agrawal, professor in the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT.

Now, Margolis and his collaborators have developed a system that improves the speed and agility of legged robots as they jump across gaps in the terrain. The novel control system is split into two parts — one that processes real-time input from a video camera mounted on the front of the robot and another that translates that information into instructions for how the robot should move its body. The researchers tested their system on the MIT mini cheetah, a powerful, agile robot built in the lab of Sangbae Kim, professor of mechanical engineering.

Unlike other methods for controlling a four-legged robot, this two-part system does not require the terrain to be mapped in advance, so the robot can go anywhere. In the future, this could enable robots to charge off into the woods on an emergency response mission or climb a flight of stairs to deliver medication to an elderly shut-in.

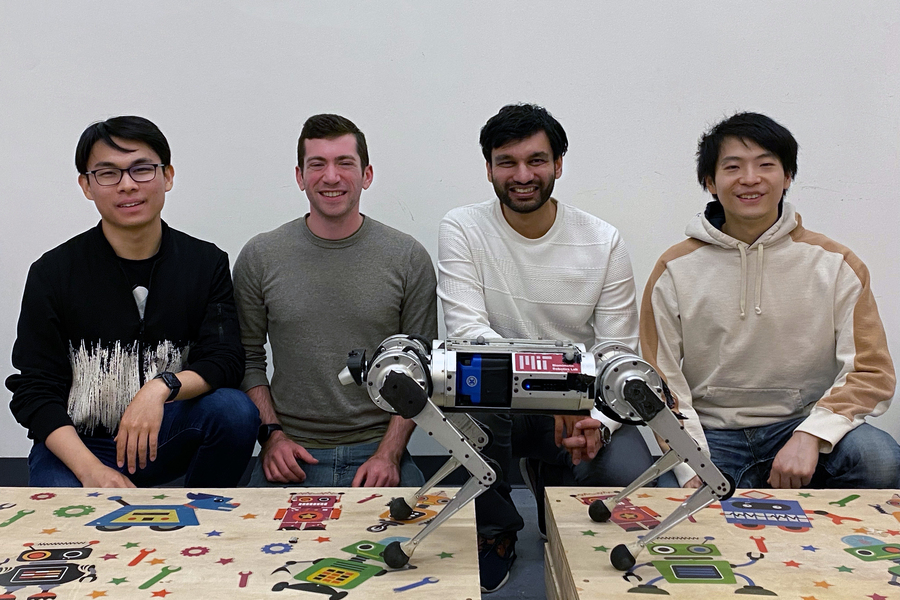

Margolis wrote the paper with senior author Pulkit Agrawal, who heads the Improbable AI lab at MIT and is the Steven G. and Renee Finn Career Development Assistant Professor in the Department of Electrical Engineering and Computer Science; Professor Sangbae Kim in the Department of Mechanical Engineering at MIT; and fellow graduate students Tao Chen and Xiang Fu at MIT. Other co-authors include Kartik Paigwar, a graduate student at Arizona State University; and Donghyun Kim, an assistant professor at the University of Massachusetts at Amherst. The work will be presented next month at the Conference on Robot Learning.

It’s all under control

The use of two separate controllers working together makes this system especially innovative.

A controller is an algorithm that will convert the robot’s state into a set of actions for it to follow. Many blind controllers — those that do not incorporate vision — are robust and effective but only enable robots to walk over continuous terrain.

Vision is such a complex sensory input to process that these algorithms are unable to handle it efficiently. Systems that do incorporate vision usually rely on a “heightmap” of the terrain, which must be either preconstructed or generated on the fly, a process that is typically slow and prone to failure if the heightmap is incorrect.

To develop their system, the researchers took the best elements from these robust, blind controllers and combined them with a separate module that handles vision in real time.

The robot’s camera captures depth images of the upcoming terrain, which are fed to a high-level controller along with information about the state of the robot’s body (joint angles, body orientation, etc.). The high-level controller is a neural network that “learns” from experience.

That neural network outputs a target trajectory, which the second controller uses to come up with torques for each of the robot’s 12 joints. This low-level controller is not a neural network and instead relies on a set of concise, physical equations that describe the robot’s motion.

“The hierarchy, including the use of this low-level controller, enables us to constrain the robot’s behavior so it is more well-behaved. With this low-level controller, we are using well-specified models that we can impose constraints on, which isn’t usually possible in a learning-based network,” Margolis says.
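The two-level structure described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration, not the researchers' implementation: `high_level_policy` is a hand-written placeholder for the learned network, the "trajectory" is reduced to a single set of target joint angles, and the low-level stage uses a simple proportional-derivative (PD) law with hypothetical gains and a hypothetical torque limit in place of the physical equations the article mentions. It only shows how a constrained low-level stage can bound whatever the high-level stage requests.

```python
import numpy as np

NUM_JOINTS = 12  # joint count stated in the article


def high_level_policy(depth_image: np.ndarray, body_state: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the learned network.

    A real policy would be a trained neural network that uses both inputs.
    This placeholder ignores body_state and requests a deeper crouch when
    the nearest depth reading is small.
    """
    nearest = float(depth_image.min())
    crouch = np.clip(1.0 - nearest, 0.0, 1.0)
    return np.full(NUM_JOINTS, 0.5 * crouch)  # target joint angles (rad)


def low_level_controller(
    target: np.ndarray,
    joint_angles: np.ndarray,
    joint_velocities: np.ndarray,
    kp: float = 20.0,            # hypothetical proportional gain
    kd: float = 0.5,             # hypothetical derivative gain
    torque_limit: float = 17.0,  # hypothetical actuator limit (N*m)
) -> np.ndarray:
    """PD tracking law (an assumed stand-in) with an explicit torque constraint."""
    torque = kp * (target - joint_angles) - kd * joint_velocities
    return np.clip(torque, -torque_limit, torque_limit)


def control_step(
    depth_image: np.ndarray,
    joint_angles: np.ndarray,
    joint_velocities: np.ndarray,
) -> np.ndarray:
    """One pass through the hierarchy: observations -> target -> joint torques."""
    body_state = np.concatenate([joint_angles, joint_velocities])
    target = high_level_policy(depth_image, body_state)
    return low_level_controller(target, joint_angles, joint_velocities)


if __name__ == "__main__":
    depth = np.full((4, 4), 0.2)  # fake depth image, arbitrary units
    print(control_step(depth, np.zeros(NUM_JOINTS), np.zeros(NUM_JOINTS)))
```

Because the clipping happens in the low-level stage, the commanded torques stay within the limit no matter what target the high-level stage produces, which is the kind of constraint Margolis describes as hard to impose on a purely learned controller.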

Teaching the network

The researchers used the trial-and-error method known as reinforcement learning to train the high-level controller. They conducted simulations of the robot running across hundreds of different discontinuous terrains and rewarded it for successful crossings.

Over time, the algorithm learned which actions maximized the reward.
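As a toy illustration of this trial-and-error idea, the sketch below learns which jump strength to use for each gap width from reward alone. It is not the researchers' training setup: the gap widths, jump strengths, reward values, and the tabular epsilon-greedy update are all assumptions chosen to keep the example small, whereas the article describes training a neural network in a full robot simulation.

```python
import random

GAPS = [1, 2, 3]      # hypothetical gap widths (arbitrary units)
JUMPS = [1, 2, 3, 4]  # hypothetical jump strengths the agent can choose


def reward(gap: int, jump: int) -> float:
    """Reward a successful crossing, minus a small effort cost; 0 for a fall."""
    return 1.0 - 0.1 * jump if jump >= gap else 0.0


def train(episodes: int = 20000, epsilon: float = 0.2, lr: float = 0.1, seed: int = 0):
    """Learn a value estimate for every (gap, jump) pair by trial and error."""
    rng = random.Random(seed)
    q = {(g, j): 0.0 for g in GAPS for j in JUMPS}
    for _ in range(episodes):
        gap = rng.choice(GAPS)
        if rng.random() < epsilon:
            jump = rng.choice(JUMPS)  # explore: try a random jump
        else:
            jump = max(JUMPS, key=lambda j: q[(gap, j)])  # exploit best estimate
        # Move the estimate a small step toward the observed reward.
        q[(gap, jump)] += lr * (reward(gap, jump) - q[(gap, jump)])
    return q


def greedy_jump(q, gap: int) -> int:
    """Pick the jump with the highest learned value for this gap."""
    return max(JUMPS, key=lambda j: q[(gap, j)])


if __name__ == "__main__":
    q = train()
    for gap in GAPS:
        print(f"gap {gap}: learned jump {greedy_jump(q, gap)}")
```

The agent is never told which jump clears which gap. It only sees the reward after each attempt, and its estimates gradually favor the weakest jump that still clears each gap.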

Then they built a physical, gapped terrain with a set of wooden planks and put their control scheme to the test using the mini cheetah.

“It was definitely fun to work with a robot that was designed in-house at MIT by some of our collaborators. The mini cheetah is a great platform because it is modular and made mostly from parts that you can order online, so if we wanted a new battery or camera, it was just a simple matter of ordering it from a regular supplier and, with a little bit of help from Sangbae’s lab, installing it,” Margolis says.

From left to right: PhD students Tao Chen and Gabriel Margolis; Pulkit Agrawal, the Steven G. and Renee Finn Career Development Assistant Professor in the Department of Electrical Engineering and Computer Science; and PhD student Xiang Fu. Credits: Photo courtesy of the researchers

Estimating the robot’s state proved to be a challenge in some cases. Unlike in simulation, real-world sensors encounter noise that can accumulate and affect the outcome. So, for some experiments that involved high-precision foot placement, the researchers used a motion capture system to measure the robot’s true position.

Their system outperformed others that only use one controller, and the mini cheetah successfully crossed 90 percent of the terrains.

“One novelty of our system is that it does adjust the robot’s gait. If a human were trying to leap across a really wide gap, they might start by running really fast to build up speed and then they might put both feet together to have a really powerful leap across the gap. In the same way, our robot can adjust the timings and duration of its foot contacts to better traverse the terrain,” Margolis says.

Leaping out of the lab

While the researchers were able to demonstrate that their control scheme works in a laboratory, they still have a long way to go before they can deploy the system in the real world, Margolis says.

In the future, they hope to mount a more powerful computer to the robot so it can do all its computation on board. They also want to improve the robot’s state estimator to eliminate the need for the motion capture system. In addition, they’d like to improve the low-level controller so it can exploit the robot’s full range of motion, and enhance the high-level controller so it works well in different lighting conditions.

“It is remarkable to witness the flexibility of machine learning techniques capable of bypassing carefully designed intermediate processes (e.g. state estimation and trajectory planning) that centuries-old model-based techniques have relied on,” Kim says. “I am excited about the future of mobile robots with more robust vision processing trained specifically for locomotion.”

The research is supported, in part, by MIT’s Improbable AI Lab, Biomimetic Robotics Laboratory, NAVER LABS, and the DARPA Machine Common Sense Program.

What Are the Benefits of Automated Plating?

A technique to automatically generate hardware components for robotic systems

Control system enables four-legged robots to jump across uneven terrain in real time

How to Select the Best Motor for a Jointed Arm Robot

Robotics Today latest talks – Raia Hadsell (DeepMind), Koushil Sreenath (UC Berkeley) and Antonio Bicchi (Istituto Italiano di Tecnologia)

Robotics Today has held three more online talks since we published the one from Amanda Prorok (Learning to Communicate in Multi-Agent Systems). In this post we bring you the last talks that Robotics Today (currently on hiatus) uploaded to their YouTube channel: Raia Hadsell from DeepMind talking about ‘Scalable Robot Learning in Rich Environments’, Koushil Sreenath from UC Berkeley talking about ‘Safety-Critical Control for Dynamic Robots’, and Antonio Bicchi from the Istituto Italiano di Tecnologia talking about ‘Planning and Learning Interaction with Variable Impedance’.

Raia Hadsell (DeepMind) – Scalable Robot Learning in Rich Environments

Abstract: As modern machine learning methods push towards breakthroughs in controlling physical systems, games and simple physical simulations are often used as the main benchmark domains. As the field matures, it is important to develop more sophisticated learning systems with the aim of solving more complex real-world tasks, but problems like catastrophic forgetting and data efficiency remain critical, particularly for robotic domains. This talk will cover some of the challenges that exist for learning from interactions in more complex, constrained, and real-world settings, and some promising new approaches that have emerged.

Bio: Raia Hadsell is the Director of Robotics at DeepMind. Dr. Hadsell joined DeepMind in 2014 to pursue new solutions for artificial general intelligence. Her research focuses on the challenge of continual learning for AI agents and robots, and she has proposed neural approaches such as policy distillation, progressive nets, and elastic weight consolidation to solve the problem of catastrophic forgetting. Dr. Hadsell is on the executive boards of ICLR (International Conference on Learning Representations), WiML (Women in Machine Learning), and CoRL (Conference on Robot Learning). She is a fellow of the European Lab on Learning Systems (ELLIS), a founding organizer of NAISys (Neuroscience for AI Systems), and serves as a CIFAR advisor.

Koushil Sreenath (UC Berkeley) – Safety-Critical Control for Dynamic Robots: A Model-based and Data-driven Approach

Abstract: Model-based controllers can be designed to provide guarantees on stability and safety for dynamical systems. In this talk, I will show how we can address the challenges of stability through control Lyapunov functions (CLFs), input and state constraints through CLF-based quadratic programs, and safety-critical constraints through control barrier functions (CBFs). However, the performance of model-based controllers is dependent on having a precise model of the system. Model uncertainty could lead not only to poor performance but could also destabilize the system as well as violate safety constraints. I will present recent results on using model-based control along with data-driven methods to address stability and safety for systems with uncertain dynamics. In particular, I will show how reinforcement learning as well as Gaussian process regression can be used along with CLF and CBF-based control to address the adverse effects of model uncertainty.

Bio: Koushil Sreenath is an Assistant Professor of Mechanical Engineering at UC Berkeley. He received a Ph.D. degree in Electrical Engineering and Computer Science and an M.S. degree in Applied Mathematics from the University of Michigan at Ann Arbor, MI, in 2011. He was a Postdoctoral Scholar at the GRASP Lab at University of Pennsylvania from 2011 to 2013 and an Assistant Professor at Carnegie Mellon University from 2013 to 2017. His research interest lies at the intersection of highly dynamic robotics and applied nonlinear control. His work on dynamic legged locomotion was featured on The Discovery Channel, CNN, ESPN, FOX, and CBS. His work on dynamic aerial manipulation was featured in IEEE Spectrum, New Scientist, and Huffington Post. His work on adaptive sampling with mobile sensor networks was published as a book. He received the NSF CAREER Award, a Hellman Fellowship, the Best Paper Award at Robotics: Science and Systems (RSS), and the Google Faculty Research Award in Robotics.

Antonio Bicchi (Istituto Italiano di Tecnologia) – Planning and Learning Interaction with Variable Impedance

Abstract: In animals and in humans, the mechanical impedance of their limbs changes not only depending on the task, but also during different phases of the execution of a task. Part of this variability is intentionally controlled, by either co-activating muscles or by changing the arm posture, or both. In robots, impedance can be varied by varying controller gains, stiffness of hardware parts, and arm postures. The choice of impedance profiles to be applied can be planned off-line, or varied in real time based on feedback from the environmental interaction. Planning and control of variable impedance can use insight from human observations, from mathematical optimization methods, or from learning. In this talk I will review the basics of human and robot variable impedance, and discuss how this impacts applications ranging from industrial and service robotics to prosthetics and rehabilitation.

Bio: Antonio Bicchi is a scientist interested in robotics and intelligent machines. After graduating in Pisa and receiving a Ph.D. from the University of Bologna, he spent a few years at the MIT AI Lab in Cambridge before becoming Professor in Robotics at the University of Pisa. In 2009 he founded the Soft Robotics Laboratory at the Italian Institute of Technology in Genoa. Since 2013 he has been Adjunct Professor at Arizona State University, Tempe, AZ. He has coordinated many international projects, including four grants from the European Research Council (ERC). He served the research community in several ways, including by launching the WorldHaptics conference and the IEEE Robotics and Automation Letters. He is currently the President of the Italian Institute of Robotics and Intelligent Machines. He has authored over 500 scientific papers cited more than 25,000 times. He supervised over 60 doctoral students and more than 20 postdocs, most of whom are now professors in universities and international research centers, or have launched their own spin-off companies. His students have received prestigious awards, including three first prizes and two nominations for the best theses in Europe on robotics and haptics. He has been a Fellow of IEEE since 2005. In 2018 he received the prestigious IEEE Saridis Leadership Award.