When it comes to robocars, new LIDAR products were the story of CES 2018. Far more companies showed off LIDAR products than can succeed, with a surprising variety of approaches. CES is now the 5th largest car show, with almost the entire north hall devoted to cars. In coming articles I will look at other sensors, software teams and non-car aspects of CES, but let’s begin with the LIDARs.

Velodyne

When it comes to robocar LIDAR, the pioneer was certainly Velodyne, who largely owned the market for close to a decade. Their $75,000 64-laser spinning drum has been the core product for many years, while most newer cars feature their 16 and 32 laser “puck” shaped units. The price was recently cut in half on the pucks, and they showed off the new $100K 128 laser unit as well as a new, more rectangular unit called the Veloray that uses a vibrating mirror to steer the beam for a forward view rather than a 360 degree view.

The Velodyne 64-laser unit has become such an icon that its physical properties have become a point of contention. The price has always been too much for any consumer car (and this is also true of the $100K unit of course) but teams doing development have wisely realized that they want to do R&D with the most high-end unit available, expecting those capabilities to be moderately priced when it’s time to go into production. The Velodyne is also large, heavy, and because it spins, quite distinctive. Many car companies and LIDAR companies have decried these attributes in their efforts to be different from Waymo (which uses its own in-house LIDAR now) and Velodyne. Most products out there are either clones of the Velodyne, or much smaller units with a 90 to 120 degree field of view.

Quanergy

I helped Quanergy get going, so I have an interest and won’t comment much. Their recent big milestone is going into production on their 8 line solid state LIDAR. Automakers are very big on having few to no moving parts so many companies are trying to produce that. Quanergy’s specifications are well below the Velodyne and many other units, but being in production makes a huge difference to automakers. If they don’t stumble on the production schedule, they will do well. With lower resolution instruments with smaller fields of view, you will need multiple units, so their cost must be kept low.

Luminar and 1.5 micron

The hot rising star of the hour is Luminar, with its high performance long range LIDAR. Luminar is part of the subset of LIDAR makers using infrared light in the 1.5 micron (1550nm) range. The eye does not focus this light into a spot on the retina, so you can emit a lot more power from your laser without being dangerous to the eye. (There are some who dispute this and think there may be more danger to the cornea than believed.)

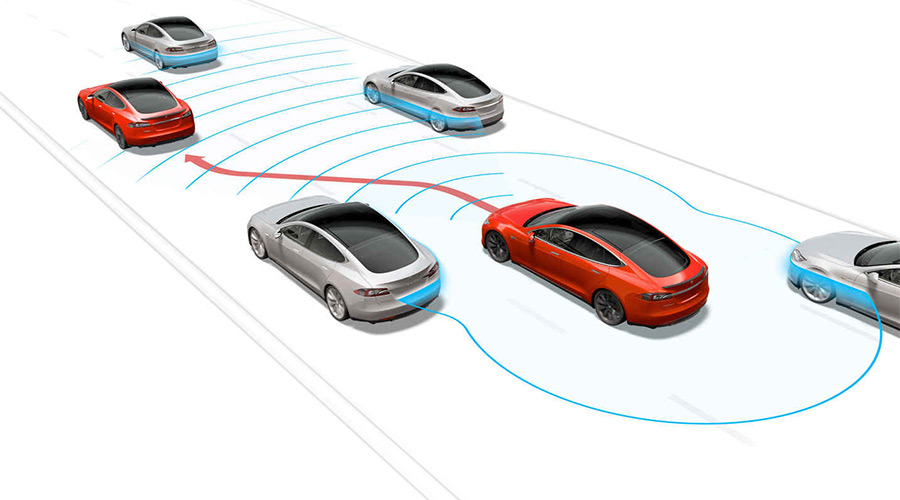

The ability to use more power allows longer range, and longer range is important, particularly getting a clear view of obstacles over 200m away. At that range, more conventional infrared LIDAR in the 900nm band has limitations, particularly on dark objects like a black car or pedestrian in black clothing. Even if the limitations are reduced, as some 900nm vendors claim, that’s not good enough for most teams if they are trying to make a car that goes at highway speeds.

At 80mph on dry pavement, you can hard brake in 100m. On wet pavement it’s 220m to stop, so you should not be going 80mph but people do. But a system, like a human, needs time to decide if it needs to brake, and it also doesn’t want to brake really hard. It usually takes at least 100ms just to receive a frame from sensors, and more time to figure out what it all means. No system figures it out from just one frame, usually a few frames have to be analyzed.
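
The braking figures above are close to what the textbook constant-deceleration formula d = v²/(2μg) gives. Here is a rough Python sketch of that arithmetic. The friction coefficients (0.7 dry, 0.3 wet) and the 0.5 second decision latency are assumptions picked for illustration, not measured values, and the function names are made up for this example.

```python
G = 9.81             # gravitational acceleration, m/s^2
MPH_TO_MS = 0.44704  # miles per hour to metres per second


def braking_distance_m(speed_mph, friction):
    """Distance to stop at constant deceleration friction * G (no reaction time)."""
    v = speed_mph * MPH_TO_MS
    return v * v / (2.0 * friction * G)


def stopping_distance_m(speed_mph, friction, latency_s):
    """Braking distance plus the distance covered while the system is still deciding."""
    v = speed_mph * MPH_TO_MS
    return v * latency_s + braking_distance_m(speed_mph, friction)


if __name__ == "__main__":
    # Assumed friction values: 0.7 for dry pavement, 0.3 for wet pavement.
    for label, mu in (("dry", 0.7), ("wet", 0.3)):
        print(label,
              round(braking_distance_m(80, mu)), "m braking,",
              round(stopping_distance_m(80, mu, 0.5)), "m with 0.5 s latency")
```

With these assumed values the dry figure lands a little under 100m and the wet figure a little under 220m, and every extra half second of thinking adds about 18m at 80mph.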

On top of that, 200m out, you really only get a few spots on a LIDAR from a car, and one spot from a pedestrian. That first spot is a warning but not enough to trigger braking. You need a few frames and want to see more spots and get more data to know what you’re seeing. And a safety margin on top of that. So people are very interested in what 1.5 micron LIDAR can see — as well as radar, which also sees that far.
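
To see why so few spots land on a distant target, you can work out the gap between neighbouring beams at a given range. The sketch below assumes a horizontal angular resolution of 0.1 degrees, a 1.8m wide car and a 0.5m wide pedestrian. Those numbers are illustrative assumptions, not the specifications of any particular unit.

```python
import math


def point_spacing_m(range_m, angular_res_deg):
    """Gap between neighbouring beams at the given range."""
    return range_m * math.tan(math.radians(angular_res_deg))


def min_spots_across(target_width_m, range_m, angular_res_deg):
    """Lower bound on how many beams in one scan line land on the target."""
    return int(target_width_m // point_spacing_m(range_m, angular_res_deg))


if __name__ == "__main__":
    # Assumed values: 0.1 degree resolution, 1.8 m car, 0.5 m pedestrian.
    print("spacing at 200 m:", round(point_spacing_m(200, 0.1), 3), "m")
    print("car:", min_spots_across(1.8, 200, 0.1), "spots per scan line")
    print("pedestrian:", min_spots_across(0.5, 200, 0.1), "spot per scan line")
```

Under those assumptions the beams are about 35cm apart at 200m, so a car gets a handful of spots per scan line and a pedestrian gets one.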

The problem with building 1.5 micron LIDAR is that light that red is not detected with silicon. You need more exotic stuff, like indium gallium arsenide. This is not really “exotic” but compared to silicon it is. Our world knows a lot about how to do things with silicon, and making things with it is super mature and cheap. Anything else is expensive in comparison. Even made in the millions, other materials won’t be as cheap.

The new Velodyne has 128 lasers and 128 photodetectors and costs a lot. 128 lasers and detectors for 1.5 micron would be super costly today. That’s why Luminar’s design uses two modules, each with a single laser and detector. The beam is very powerful, and moved super fast. It is steered by moving a mirror to sweep out rasters (scan lines) both back and forth and up and down. The faster you move your beam the more power you can put into it — what matters is how much energy it puts into your eye as it is sweeping over it, and how many times it hits your eye every second.

The Luminar can do a super detailed sweep if you only want one sweep per second, and the point clouds (collections of spots with the distance and brightness measured, projected into 3D) look extremely detailed and nice. To drive, you need at least 10 sweeps per second, and so the resolution drops a lot, but is still good.

Another limit which may surprise you is the speed of light. To see something 250m away, the light takes about 1.7 microseconds to go out and back. This limits how many points per second you can get from one laser. Speeding up light is not an option. There are also limits on how much power you can put through your laser before it overheats.
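
The speed of light limit is easy to put numbers on. This sketch assumes the simplest case, where a laser waits for each pulse to return from maximum range before firing the next one. Real devices may use tricks to relax that, so treat the result as a rough ceiling for a single laser rather than a property of any product.

```python
C = 299_792_458.0  # speed of light, m/s


def round_trip_s(range_m):
    """Time for a pulse to reach a target at range_m and come back."""
    return 2.0 * range_m / C


def max_points_per_second(range_m):
    """Pulse rate ceiling if each pulse must return before the next is sent."""
    return 1.0 / round_trip_s(range_m)


def points_per_sweep(range_m, sweeps_per_second):
    """Point budget for one sweep when the ceiling is shared across sweeps."""
    return max_points_per_second(range_m) / sweeps_per_second


if __name__ == "__main__":
    print("round trip for 250 m:", round(round_trip_s(250) * 1e6, 2), "microseconds")
    print("points per second:", round(max_points_per_second(250)))
    print("points per sweep at 10 sweeps/s:", round(points_per_sweep(250, 10)))
```

At 250m that is roughly 600,000 points per second from one laser, or about 60,000 points in each of 10 sweeps per second, which is why resolution drops so much at driving frame rates.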

(To avoid overheating, you can concentrate your point budget on the regions of greatest interest, such as those along the road or those that have known targets. I describe this in a US patent.) While I did not see it, several people reported to me that Luminar’s current units require a fairly hefty box to deliver power to their LIDAR and do the computational post processing to produce the pretty point clouds.

Luminar’s device is also very expensive (they won’t publish the price) but Toyota built a test vehicle with 4 of their double-laser units, one in each of the 4 directions. Some teams are expressing the desire to see over 200m in all directions, while others think it is really only necessary to see that far in the forward direction.

You do have to see far to the left and right when you are entering an intersection with cross traffic, and you also have to see far behind if you are changing lanes on a German autobahn and somebody might be coming up on your left 100km/h faster than you (it happens!) Many teams feel that radar is sufficient there, because the type of decision you need to make (do I go or don’t I) is not nearly so complex and needs less information.

As noted before, while most early LIDARS were in the 900nm bands, Google/Waymo built their own custom LIDAR with long range, and the effort of Uber to build the same is the subject of the very famous lawsuit between the two companies. Princeton Lightwave, another company making 1.5 micron LIDAR, was recently acquired by Ford/Argo AI — an organization run by Bryan Salesky, another Google car alumnus.

I saw a few other companies with 1.5 micron LIDARs. None were as far along as Luminar, but several pointed out that they did not need the large box for power and computing, suggesting they only needed about 20 to 40 watts for the whole device. One was Innovusion, which did not have a booth but showed me the device in their suite. However, in the suite it was not possible to test range claims.

Tetravue and new Time of Flight

Tetravue showed off their radically different time of flight technology. So far there have been only a few methods to measure how long it takes for a pulse of light to go out and come back, thus learning the distance.

The classic method is basic sub-nanosecond timing. To get 1cm accuracy, you need to measure the time to an accuracy of about 50 picoseconds. Circuits are getting better than that. This can be done either with scanning pulses, where you send out a pulse and then look in precisely that direction for the return, or with “flash” LIDAR, where you send out a wide, illuminating pulse and then have an array of detector/timers which count how long the light for each pixel took to get back. This method works at almost any distance.
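
The relationship between timing and distance is just the speed of light and a factor of two for the round trip. The small sketch below shows where picosecond figures come from. The function names are made up for this example.

```python
C = 299_792_458.0  # speed of light, m/s


def distance_from_round_trip_m(round_trip_s):
    """Distance to the target given the measured out-and-back time."""
    return C * round_trip_s / 2.0


def timing_resolution_s(distance_accuracy_m):
    """Round-trip timing step that corresponds to one step of distance accuracy."""
    return 2.0 * distance_accuracy_m / C


if __name__ == "__main__":
    print("1 cm of range is", round(timing_resolution_s(0.01) * 1e12, 1), "ps of round trip")
    print("1 microsecond of round trip is", round(distance_from_round_trip_m(1e-6), 1), "m")
```

One centimetre of range works out to roughly 67 picoseconds of round-trip time, so a timer good to around 50 picoseconds is in the right territory.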

The second method is to use phase. You send out a continuous beam but you modulate it. When the return comes back, it will be out of phase with the outgoing signal. How much out of phase depends on how long it took to come back, so if you can measure the phase, you can measure the time and distance. This method is much cheaper but tends to only be useful out to about 10m.
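
The phase method uses the standard relation d = c·Δφ/(4πf) for a beam modulated at frequency f. The phase wraps around every full cycle, so distances repeat beyond c/(2f). The sketch below assumes a 15MHz modulation frequency purely as an example. Phase wrap is only one of the things that can limit range in practice, so this is not a full explanation of the 10m figure.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def unambiguous_range_m(mod_freq_hz):
    """Largest distance before the measured phase wraps back to zero."""
    return C / (2.0 * mod_freq_hz)


def phase_to_distance_m(phase_rad, mod_freq_hz):
    """Distance implied by a phase shift, folded into one modulation cycle."""
    wrapped = phase_rad % (2.0 * math.pi)
    return C * wrapped / (4.0 * math.pi * mod_freq_hz)


if __name__ == "__main__":
    f = 15e6  # assumed modulation frequency, Hz
    print("unambiguous range:", round(unambiguous_range_m(f), 2), "m")
    print("half a cycle of phase:", round(phase_to_distance_m(math.pi, f), 2), "m")
```

A lower modulation frequency stretches the unambiguous range but makes each degree of phase cover more distance, so there is a trade between reach and precision.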

Tetravue offers a new method. They send out a flash, and put a decaying (or opening) shutter in front of an ordinary return sensor. Depending on when the light arrives, it is attenuated by the shutter. The amount it is attenuated tells you when it arrived.
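
Here is a toy model of the shutter idea, not Tetravue's actual design. It assumes an opening shutter whose transmission ramps linearly from 0 to 1 over a gate window that starts when the flash fires, and it assumes a second, unshuttered reading of the same pixel is available to cancel out how reflective the target is. Both assumptions, and all names, are hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s


def gate_duration_s(max_range_m):
    """Gate window long enough for light to return from max_range_m."""
    return 2.0 * max_range_m / C


def shutter_distance_m(gated, reference, gate_s):
    """Distance from the ratio of shuttered to unshuttered brightness.

    Assumes a linear opening shutter: the fraction of light passed equals
    the fraction of the gate window that had elapsed when it arrived.
    """
    if reference <= 0:
        raise ValueError("reference brightness must be positive")
    fraction = min(max(gated / reference, 0.0), 1.0)
    arrival_s = fraction * gate_s
    return C * arrival_s / 2.0


if __name__ == "__main__":
    gate = gate_duration_s(80.0)  # 80 m is the claimed maximum range
    print("gate window:", round(gate * 1e9), "ns")
    print("half attenuated pixel:", round(shutter_distance_m(50, 100, gate), 1), "m")
```

In this model a pixel that comes back at half its unshuttered brightness is halfway through the gate, so halfway to maximum range.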

I am interested in this because I played with such designs myself back in 2011, proposing the technique instead for a new type of flash camera with even illumination, but I did not feel you could get enough range. Indeed, Tetravue only claims a maximum range of 80m, which is challenging. That is not far enough for highway driving or even local expressway driving, but it could be useful for lower speed urban vehicles.

The big advantages of this method are cost (it uses mostly commodity hardware) and resolution. Their demo was a 1280×720 camera, and they said they were making a 4K camera. That’s actually too much resolution for most neural networks, but digital crops from within it could work very well, and make for the best object recognition results to be found, at least on closer targets. This might be a great tool for recognizing things like pedestrian body language and more.

At present the Tetravue uses light in the 800nm bands. That is easier to receive with more efficiency on silicon, but there is more ambient light from the sun in this band to interfere.

The different ways to steer

In addition to differing ways to measure the pulse, there are also many ways to steer it. Some of those ways include:

- Mechanical spinning. This is what the Velodyne and other round LIDARs do. This makes it easy to build 360 degree view LIDARs and, in the case of the Velodyne, it also stops rain from collecting on the instrument. One big issue is that people are afraid of the reliability of moving parts, especially on the grand scale.

- Moving, spinning or vibrating mirrors. These can be sealed inside a box and the movement can be fairly small.

- MEMS mirrors, which are microscopic mirrors on a chip. Still moving, but effectively solid state. These are how DLP projectors work. Some new companies like Innovision featured LIDARs steered this way.

- Phased arrays — you can steer a beam by having several emitters and adjusting the phase so the resulting beam goes where you want it. This is entirely solid state.

- Spectral deflection — it is speculated that some LIDARS do vertical steering by tuning the frequency of the beam, and then using a prism so this adjusts the angle of the beam.

- Flash LIDAR, which does not steer at all, and has an array of detectors.

There are companies using all these approaches, or combinations of them.

The range of 900nm LIDAR

The most common and cheapest LIDARs are, as noted, in the 900nm wavelength band. This is a near infrared band, but it is far enough from visible that not a lot of ambient light interferes. At the same time, up here, it’s harder to get silicon to trigger on the photons, so it’s a trade-off.

Because it acts like visible light and is focused by the lens of the eye, keeping it eye-safe is a problem. At a bit beyond 100m, at the maximum radiation level that is eye-safe, fewer and fewer photons reflect back to the detector from dark objects. Yet many vendors are claiming ranges of 200m or even 300m in this band, while others claim it is impossible. Only a hands-on analysis can tell how reliably these longer ranges can actually be delivered, but most feel it can’t be done at the level needed.

There are some tricks which can help, including increasing sensitivity, but there are physical limits. One technique that is being considered is dynamic adjustment of the pulse power, reducing it when the target is close to the laser. Right now, if you want to send out a beam that you will see back from 200m, it needs to be so powerful that it could hurt the eye of somebody close to the sensor. Most devices try for physical eye-safety; they don’t emit power that would be unsafe to anybody. The beam itself is at a dangerous level, but it moves so fast that the total radiation at any one spot is acceptable. They have interlocks so that the laser shuts down if it ever stops moving.

To see further, you would need to detect the presence of an object (such as the side of a person’s head) that is close to you, and reduce the power before the laser scanned over to their eyes, keeping it low until past the head, then boosting it immediately to see far away objects behind the head. This can work, but now a failure of the electronic power control circuits could turn the device into an unsafe one, which people are less willing to risk.
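
The power adjustment idea can be sketched as a planning step over the previous sweep. The version below is a hypothetical illustration only: the 20m threshold, the one-step guard band on each side and the power levels are all invented numbers, and a real device would need far more careful safety engineering than this.

```python
def plan_pulse_power(prev_ranges_m, near_m=20.0, guard=1, low=0.1, high=1.0):
    """Choose a relative pulse power for each beam direction in the next sweep.

    prev_ranges_m holds the range seen in each direction on the last sweep,
    or None where nothing came back. Any direction with a nearby object,
    plus `guard` neighbours on each side, is dropped to low power so the
    beam is already reduced before it reaches the object.
    """
    n = len(prev_ranges_m)
    power = [high] * n
    for i, r in enumerate(prev_ranges_m):
        if r is not None and r < near_m:
            for j in range(max(0, i - guard), min(n, i + guard + 1)):
                power[j] = low
    return power


if __name__ == "__main__":
    # A head 3 m away in the middle of an otherwise distant scene.
    print(plan_pulse_power([150.0, 150.0, 3.0, 150.0, None]))
```

The guard band is what reduces the power before the beam scans onto the nearby object, and full power resumes as soon as the beam is past it.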

The Price of LIDARs

LIDAR prices are all over the map, from the $100,000 Velodyne 128-line to solid state units forecast to drop close to $100. Who are the customers that will pay these prices?

High End

Developers working on prototypes often choose the very best (and thus most expensive) unit they can get their hands on. The cost is not very important on prototypes, and you don’t plan to release for a few years. These teams make the bet that high performance units will be much cheaper when it’s time to ship. You want to develop and test with the performance you can buy in the future.

That’s why a large fraction of teams drive around with $75,000 Velodynes or more. That’s too much for a production unit, but they don’t care about that. It led people to predict, incorrectly, that robocars are far in the future.

Middle End

Units in the $7,000 to $20,000 range are too expensive as an add-on feature for a personal car. There is no component of a modern car that is this expensive, except the battery pack in a Tesla. But for a taxi, it’s a different story. With so many people sharing it, the cost is not out of the question compared to the human driver which is taken out of the equation. In fact, some would argue the $100,000 LIDAR over 6 years is still cheaper than that.
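
To compare a sensor against a driver it helps to turn the purchase price into a cost per hour of service. The sketch below uses the $100,000 price and 6 year life from the text, and assumes the taxi works 12 hours a day, every day. That duty cycle is a guess for illustration only.

```python
def lidar_cost_per_hour(price_usd, service_years, hours_per_day):
    """Purchase price spread evenly over every hour the vehicle is in service."""
    service_hours = service_years * 365 * hours_per_day
    return price_usd / service_hours


if __name__ == "__main__":
    # Assumed duty cycle: 12 hours a day, 365 days a year.
    print("$100,000 unit:", round(lidar_cost_per_hour(100_000, 6, 12), 2), "dollars per hour")
    print("$20,000 unit:", round(lidar_cost_per_hour(20_000, 6, 12), 2), "dollars per hour")
```

Under that assumption even the most expensive unit costs a few dollars per operating hour, which you can set against whatever a human driver costs per hour.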

In this case, cost is not the big issue, it’s performance. Everybody wants to be out on the market first, and if a better sensor can get you out a year sooner, you’ll pay for it.

Low end

LIDARs that cost (or will cost) below $1,000, especially below $250, are the ones of interest to major automakers who still think the old way: Building cars for customers with a self-drive feature.

They don’t want to add a great deal to the bill of materials for their cars, and they are driving demand for the low end, typically solid state, devices.

None

None of these LIDARS are available today in automotive quantities or quality levels. Thus you see companies like Tesla, who want to ship a car today, designing without LIDAR. Those who imagine LIDAR as expensive believe that lower cost methods, like computer vision with cameras, are the right choice. They are right in the very short run (because you can’t get a LIDAR) and in the very long run (when cost will become the main driving factor) but probably wrong in the time scales that matter.

Some LIDARs at CES

Here is a list of some of the other LIDAR companies I came across at CES. There were even more than this.

AEye — MEMS LIDAR and software fused with visible light camera

Cepton — MEMS-like steering, claims 200 to 300m range

Robosense RS-lidar-m1 pro: claims 200m MEMS, 20fps, .09 deg by .2 deg, 63 x 20

Surestar R-Fans (16 and 32 laser, puck style), up to 20Hz

Leishen MX series — short range for robots, 8 to 48 line units, (puck style)

ETRI LaserEye — Korean research product

Benewake Flash Lidar, shorter range.

Infineon box LIDAR (Innoluce prototype)

Innoviz, mems mirror 900nm LIDAR with claimed longer range

Leddartech — older company from Quebec, now making a flash LIDAR

Ouster — $12,000 super light LIDAR.

To come: Thermal Sensors, Radars, and Computer Vision, better cameras

I have created a gallery in Google Photos with some of the more interesting items I saw at CES, with the bulk of them being related to robocars, robotic delivery and transportation.