How to Build a Domain-Specific Compliance Monitoring Agent?

In today’s rapidly evolving regulatory landscape, compliance is no longer just a checkbox, it’s a strategic necessity. As businesses expand globally and data privacy laws tighten, organizations face growing pressure to ensure continuous compliance with complex and domain-specific regulations. Traditional manual audits and fragmented monitoring tools can’t keep pace with the dynamic nature of modern compliance requirements.

That’s where domain-specific compliance monitoring agents come in. Using AI, machine learning (ML), and natural language processing (NLP), these smart systems automatically find, report, and handle compliance risks as they happen. They not only reduce human error but also enhance transparency, operational efficiency, and audit readiness.

What Is a Domain-Specific Compliance Monitoring Agent?

A domain-specific compliance monitoring agent is an AI system made to check and enforce compliance rules in a particular industry or business area, like finance, healthcare, manufacturing, or cybersecurity.

Unlike general compliance software, these agents are tailored to understand industry regulations, terminologies, and operational contexts. For example:

- In healthcare, they monitor adherence to HIPAA and data privacy laws.

- In finance, they track AML, KYC, and SOX compliance.

- In manufacturing, they ensure workplace safety and environmental standards.

By combining specialized knowledge with automated processes, these agents can understand regulatory documents, identify risks of not following the rules, and even recommend fixes, all instantly.

Key Challenges in Compliance Automation

Building a compliance agent is not just about adding AI on top of a rules engine. It involves tackling several challenges:

- Regulatory Complexity: Laws vary by region and industry, often changing frequently.

- Data Silos: Compliance data is often scattered across systems, making integration difficult.

- Unstructured Information: Most regulations exist in text documents that require NLP to interpret.

- False Positives: Inaccurate alerts can overwhelm compliance teams.

- Scalability: Monitoring multiple frameworks simultaneously demands scalable architecture.

Addressing these challenges requires a well-structured, domain-specific approach that blends AI automation with deep regulatory expertise.

Key Benefits of an AI-Powered Compliance Monitoring Agent

Implementing a compliance monitoring agent offers both immediate and long-term benefits:

- Real-Time Risk Detection

An AI-powered compliance monitoring agent enables real-time risk detection, continuously analyzing regulatory data and business operations. It instantly flags potential non-compliance issues before they escalate, allowing organizations to act proactively and avoid costly penalties.

- Reduced Manual Effort

Through regulatory automation, the system eliminates the need for repetitive manual audits and document reviews. By automating routine compliance checks, teams can focus on strategic initiatives that improve governance and operational efficiency.

- Improved Accuracy

Machine learning and natural language processing (NLP) enhance the accuracy of compliance monitoring by minimizing human error and false positives. This ensures consistent interpretation of complex regulations and builds confidence in compliance outcomes.

- Faster Audits

Automated data collection and intelligent reporting make audit preparation faster and simpler. Compliance teams can generate complete, ready-to-submit audit reports in minutes, improving audit readiness and reducing turnaround time.

- Enhanced Transparency

With centralized dashboards and visual reports, organizations gain end-to-end transparency into compliance performance. This visibility improves collaboration between departments and demonstrates accountability to auditors and regulators.

- Cost Efficiency

By leveraging AI automation and predictive analytics, businesses achieve cost-efficient compliance management. The system reduces manual workload, lowers audit expenses, and helps prevent costly compliance violations.

- Scalability

Built on a flexible architecture, the solution offers scalable compliance management that easily adapts to new frameworks, geographies, and regulatory changes. As business and legal environments evolve, the agent grows alongside them, ensuring long-term compliance resilience.

Step-by-Step Guide to Building a Domain-Specific Compliance Monitoring Agent

Step 1: Define the Domain and Compliance Frameworks

Start by clearly identifying the domain (e.g., healthcare, finance) and mapping out the applicable regulations, such as HIPAA, GDPR, or ISO standards. Collaborate with domain experts to define critical compliance KPIs and monitoring rules.

Step 2: Gather and Prepare Regulatory Data

Collect both structured and unstructured data from trusted sources, regulatory bodies, internal policies, and audit reports. Use AI tools to extract, clean, and normalize this data for analysis.

Step 3: Design the Knowledge Graph and Rules Engine

Build a knowledge graph that links obligations, policies, and operational processes. The rules engine translates compliance requirements into actionable logic that can be automatically checked against real-time data.

Step 4: Integrate AI and NLP Models

Implement NLP models to interpret legal text, detect compliance obligations, and classify documents. Machine learning models can identify anomalies and predict future compliance risks based on patterns in historical data.

Step 5: Develop Real-Time Monitoring Dashboards

Design dashboards that provide compliance officers with real-time visibility into the organization’s status. These should include alerts for violations, risk scores, and trend analysis.

Step 6: Test, Validate, and Deploy

Conduct pilot testing with real regulatory scenarios. Validate model accuracy, minimize false positives, and ensure seamless integration with existing enterprise systems before full deployment.

Key Features to Include in Your Compliance Monitoring Agent

Building a domain-specific compliance monitoring agent requires more than automation, it needs intelligent features that deliver accuracy, agility, and scalability. Below are the essential features that make your agent effective and future-ready:

- Intelligent Data Integration

The agent should seamlessly connect with multiple data sources, such as ERP systems, CRMs, audit logs, and external regulatory feeds, to gather, clean, and unify compliance data in real time.

- Natural Language Processing (NLP) Engine

Since most regulations are written in complex legal language, NLP helps the agent interpret and classify regulatory text, identify key obligations, and map them to internal policies automatically.

- Dynamic Rules Engine

A configurable rules engine allows businesses to define, update, and customize compliance policies without coding. It ensures the agent adapts quickly to changing regulations or new jurisdictions.

- Real-Time Risk Detection and Alerts

AI-driven risk models continuously analyze operations to detect anomalies, policy breaches, or deviations from regulatory norms. Real-time alerts help compliance teams take preventive action faster.

- Automated Reporting and Audit Trails

The agent should generate accurate, timestamped audit logs and compliance reports to simplify regulatory audits and demonstrate transparency to stakeholders and authorities.

- Dashboard and Visualization

An intuitive dashboard provides compliance officers with clear, real-time insights, including compliance status, violation trends, and overall risk exposure across business units.

- Self-Learning and Continuous Improvement

With built-in machine learning capabilities, the agent can learn from past incidents, feedback, and audit outcomes to continuously refine its detection models and improve accuracy.

- Role-Based Access Control (RBAC)

Security is crucial. Role-based access ensures that only authorized users can view, edit, or manage compliance data, maintaining privacy and control.

- Multi-Domain Scalability

As organizations grow, the agent should easily scale to monitor multiple domains, such as finance, healthcare, or HR, while maintaining performance and consistency.

- Integration with GRC and Workflow Systems

Seamless integration with Governance, Risk, and Compliance (GRC) platforms, ticketing tools, and workflow systems ensures smooth remediation and compliance management from detection to resolution.

Technologies and Tools Used for AI Compliance Agent Development

Building an AI compliance agent involves integrating multiple technologies, such as:

- AI & ML Frameworks: TensorFlow, PyTorch, scikit-learn

- NLP Libraries: SpaCy, Hugging Face Transformers, OpenAI APIs

- Data Management: Elasticsearch, Neo4j (for knowledge graphs), PostgreSQL

- Automation Tools: Apache Airflow, LangChain, or Rasa

- Visualization: Power BI, Tableau, or custom web dashboards

- Cloud Infrastructure: AWS, Azure, or GCP for scalability and security

Must-Know: Core Components of a Compliance Monitoring Agent

A robust AI-powered compliance monitoring agent typically includes the following components:

- Data Ingestion Layer: Gathers data from multiple sources, documents, databases, and APIs. It ensures continuous, real-time access to all relevant compliance data, reducing manual collection efforts and data silos.

- Knowledge Graph: Maps relationships between regulations, policies, and business processes. It enables a contextual understanding of compliance dependencies, helping organizations trace the impact of regulatory changes across departments.

- NLP Engine: Understands and classifies regulatory texts, identifying key obligations. It automates the extraction of complex legal requirements, saving time and minimizing interpretation errors.

- Rule-Based Engine: Applies specific compliance rules for monitoring and alerting. It provides immediate detection of non-compliance issues, ensuring faster remediation and reduced compliance risk.

- Machine Learning Models: Detects anomalies and predicts potential violations. It enables proactive compliance by forecasting risks before they escalate, improving decision-making and regulatory foresight.

- Dashboard & Reporting: Visualizes compliance status, alerts, and performance metrics. It offers clear, actionable insights for compliance officers and executives to monitor performance and demonstrate audit readiness.

- Integration Layer: Connects seamlessly with enterprise systems (ERP, CRM, GRC tools). It enhances interoperability and data consistency across business systems, streamlining compliance workflows end-to-end.

The Future of AI in Compliance Monitoring Agents

As regulations evolve and data volumes grow, the future of compliance monitoring will rely heavily on agentic AI agents capable of self-learning and adaptation. Emerging trends such as Generative AI, Explainable AI (XAI), and predictive compliance analytics will further enhance accuracy, accountability, and trust.

In the next few years, organizations that invest in intelligent, domain-specific compliance systems will be better equipped to navigate complex regulatory ecosystems—transforming compliance from a cost center into a competitive advantage.

USM Business Systems’ Best Practices in AI Development

At USM, AI development is driven by a structured, scalable, and ethical framework. Their best practices in AI agent development focus on the following pillars:

- Strategic Planning: Aligning AI initiatives with business goals and compliance objectives.

- Data Quality & Governance: Ensuring reliable, bias-free, and secure datasets.

- Scalable Architecture: Building modular, cloud-native AI systems for flexibility and growth.

- Agile Development: Using iterative, feedback-driven development cycles.

- Ethical AI: Embedding transparency, accountability, and fairness into every AI model.

- Continuous Optimization: Regularly retraining models and refining rules based on evolving regulations.

By combining deep domain knowledge with AI expertise, we help enterprises build intelligent compliance agents that deliver measurable ROI while maintaining regulatory confidence.

Conclusion

Building a domain-specific compliance monitoring agent is a strategic step toward smarter governance, reduced risk, and operational excellence. With the right mix of AI technologies, domain expertise, and ethical practices, businesses can move from reactive compliance to proactive, data-driven assurance.

Partnering with experts like USM ensures that every stage, from design to deployment, follows industry best practices for accuracy, scalability, and long-term success.

Ready to automate your compliance journey?

[contact-form-7]

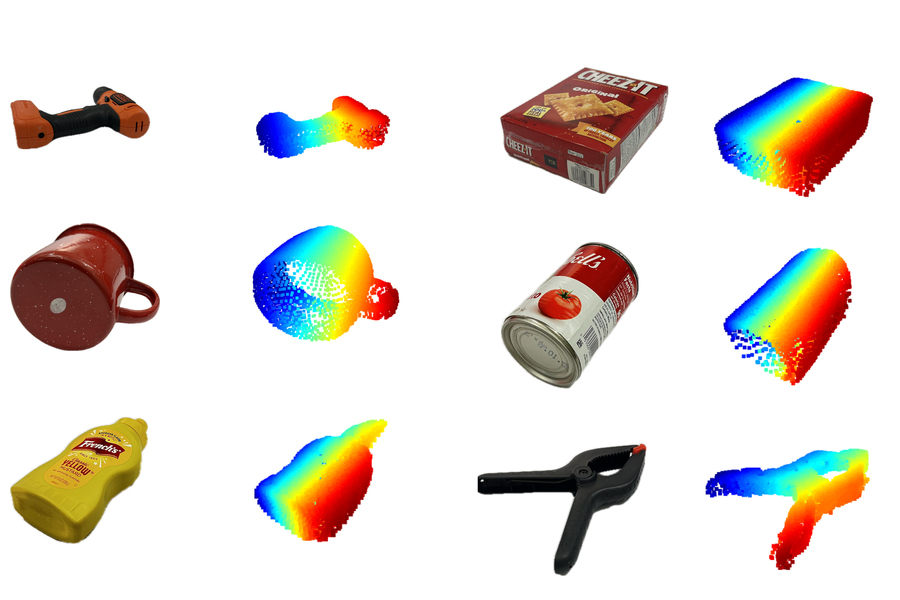

MIT researchers utilized specially trained generative AI models to create a system that can complete the shape of hidden 3D objects, like the ones pictured. Credit: Courtesy of the researchers.

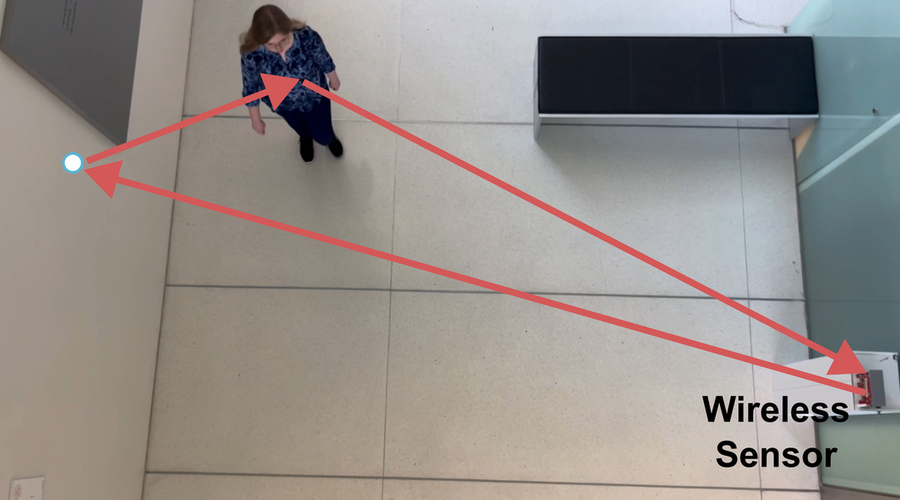

MIT researchers utilized specially trained generative AI models to create a system that can complete the shape of hidden 3D objects, like the ones pictured. Credit: Courtesy of the researchers. The team also built an expanded system that fully reconstructs entire indoor scenes by leveraging wireless signal reflections off humans moving in a room. Credit: Courtesy of the researchers.

The team also built an expanded system that fully reconstructs entire indoor scenes by leveraging wireless signal reflections off humans moving in a room. Credit: Courtesy of the researchers.