Engineers develop a robotic hand with a gecko-inspired grip

Robot hand moves closer to human abilities

A new untethered and insect-sized aerial vehicle

Robotic Research Announces $228 Million Series A Funding Round to Scale Autonomous Technology Commercially

Youssef Benmokhtar: Digitization of Touch and Meta AI Partnership | Sense Think Act Podcast #9

In this episode, Audrow Nash speaks to Youssef Benmokhtar, CEO of GelSight, a Boston-based company that makes high-resolution tactile sensors for several industries. They talk about how GelSight’s tactile sensors work, GelSight’s new collaboration with Meta AI (formerly Facebook AI) to manufacture a low-cost touch sensor called DIGIT, the digitization of touch, touch sensing in robotics, how GelSight is investing in community and open-source software, and Youssef’s professional path across several industries.

Episode Links

- Download the episode

- Youssef Benmokhtar’s LinkedIn

- GelSight’s Website

- DIGIT’s open source page

- PyTouch library

Podcast info

Wall climbing robot can reduce workplace accidents

Creating the human-robotic dream team

Big Trends in Offline Programming Software for Robot

Technique speeds up thermal actuation for soft robotics

Human-like brain helps robot out of a maze

How Commercial Robotics Could Improve the Inventory and Shipping Crisis of 2021

Interview with Huy Ha and Shuran Song: CoRL 2021 best system paper award winners

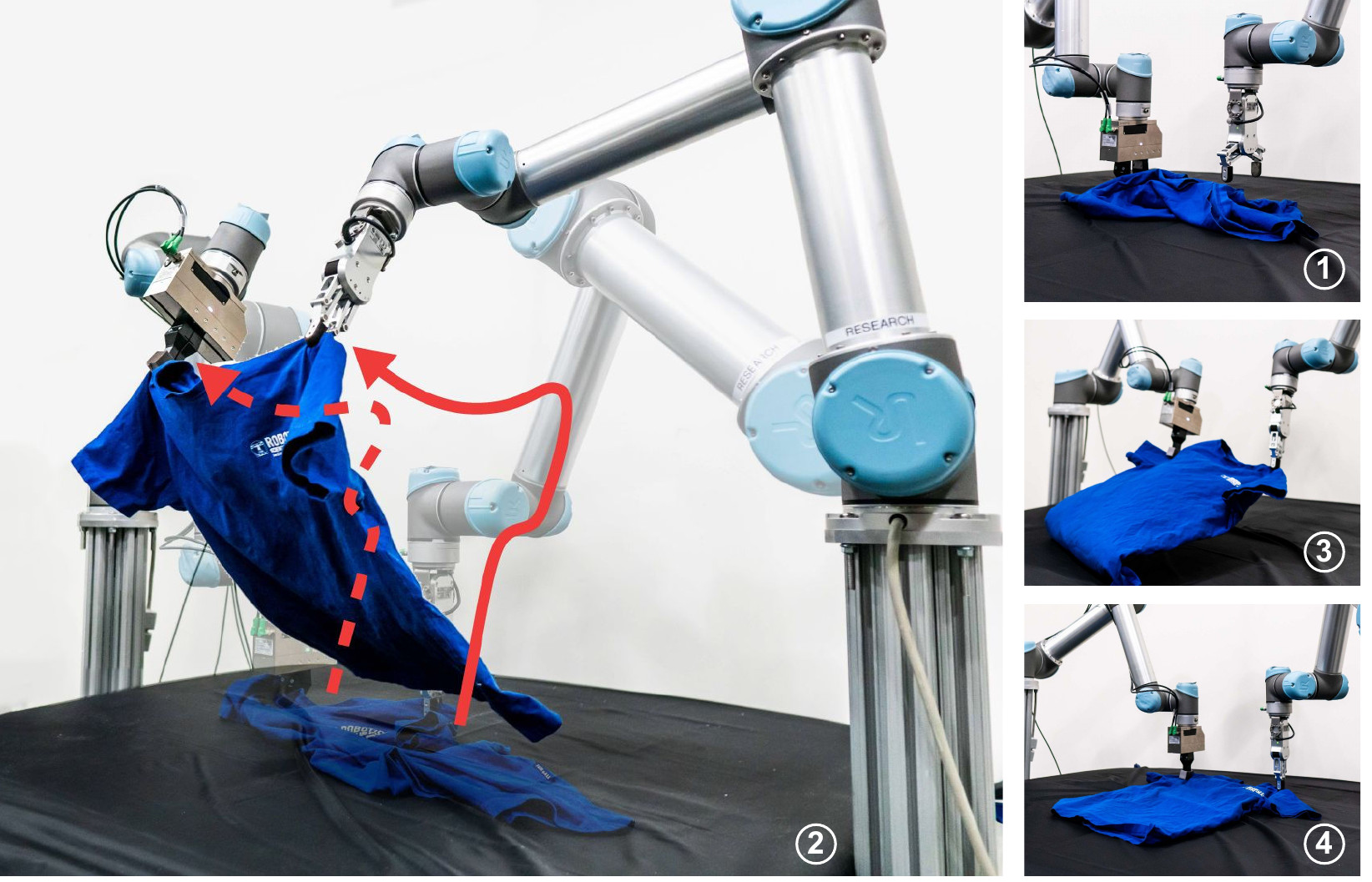

Congratulations to Huy Ha and Shuran Song, who have won the CoRL 2021 best system paper award!

Their work, FlingBot: the unreasonable effectiveness of dynamic manipulations for cloth unfolding, was highly praised by the judging committee. “To me, this paper constitutes the most impressive account of both simulated and real-world cloth manipulation to date,” commented one of the reviewers.

Below, the authors tell us more about their work, the methodology, and what they are planning next.

What is the topic of the research in your paper?

In my most recent publication with my advisor, Professor Shuran Song, we studied the task of cloth unfolding. The goal of the task is to manipulate a cloth from a crumpled initial state to an unfolded state, which is equivalent to maximizing the coverage of the cloth on the workspace.
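As a rough illustration of the objective described above (an editorial sketch, not the authors' code), coverage can be computed from a top-down binary mask of the workspace. The function name `cloth_coverage`, the mask layout, and the choice to normalize by the pixel area of the fully flattened cloth are assumptions made for this example.

```python
from typing import Sequence


def cloth_coverage(mask: Sequence[Sequence[int]], flattened_area_px: int) -> float:
    """Return the visible cloth area as a fraction of the flattened cloth area.

    mask: top-down grid where a truthy cell means "cloth is visible here".
    flattened_area_px: pixel area of the same cloth when fully unfolded
        (hypothetical normalizer, assumed known in advance).
    """
    if flattened_area_px <= 0:
        raise ValueError("flattened_area_px must be positive")
    visible_px = sum(1 for row in mask for cell in row if cell)
    # Clamp to 1.0 in case segmentation noise overshoots the flattened area.
    return min(visible_px / flattened_area_px, 1.0)


# A crumpled cloth that shows 4 pixels, out of 16 when fully unfolded.
crumpled = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
print(cloth_coverage(crumpled, flattened_area_px=16))  # 0.25
```

Under this reading, an unfolding policy is rewarded for actions that raise this number toward 1.0.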

Could you tell us about the implications of your research and why it is an interesting area for study?

Historically, most robotic manipulation research topics, such as grasp planning, are concerned with rigid objects, which have only 6 degrees of freedom since their geometry does not change. This allows one to apply the typical state estimation – task & motion planning pipeline in robotics. In contrast, deformable objects can bend and stretch in arbitrary directions, leading to infinite degrees of freedom. It’s unclear what the state of the cloth should even be. In addition, deformable objects such as clothes can experience severe self-occlusion – given a crumpled piece of cloth, it’s difficult to identify whether it’s a shirt, jacket, or pair of pants. Therefore, cloth unfolding is a typical first step of cloth manipulation pipelines, since it reveals key features of the cloth for downstream perception and manipulation.

Despite the abundance of sophisticated methods for cloth unfolding over the years, they typically only address the easy case (where the cloth already starts off mostly unfolded) or take upwards of a hundred steps for challenging cases. These prior works all use single-arm quasi-static actions, such as pick and place, which are slow and limited by the physical reach range of the system.

Could you explain your methodology?

In our daily lives, humans typically use both hands to manipulate cloths, and with as few as one or two high-velocity flings, we can unfold an initially crumpled cloth. Based on this observation, our key idea is simple: use dual-arm dynamic actions for cloth unfolding.

FlingBot is a self-supervised framework for cloth unfolding which uses a pick, stretch, and fling primitive for a dual-arm setup from visual observations. There are three key components to our approach. First is the decision to use a high-velocity dynamic action. By relying on the cloth’s mass combined with a high-velocity throw to do most of the work, a dynamic flinging policy can unfold cloths much more efficiently than a quasi-static policy. Second is a dual-arm grasp parameterization which makes satisfying collision safety constraints easy. By treating a dual-arm grasp not as two points but as a line with a rotation and length, we can directly constrain the rotation and length of the line to ensure arms do not cross over each other and do not try to grasp too close to each other. Third is our choice of using Spatial Action Maps, which learn translational, rotational, and scale equivariant value maps, and allow for sample-efficient learning.
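The line-based grasp parameterization described above can be sketched roughly as follows. This is an editorial illustration, not the FlingBot implementation: the class `DualArmGrasp`, the helper `is_safe`, the angle range, and the width limits are all hypothetical choices made for the example.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class DualArmGrasp:
    """A dual-arm grasp as a line segment: center, rotation, and length."""
    cx: float      # line center, x (workspace units)
    cy: float      # line center, y
    theta: float   # line rotation in radians
    width: float   # distance between the two grasp points

    def endpoints(self) -> Tuple[Point, Point]:
        """Return the (left, right) grasp points implied by the line."""
        dx = 0.5 * self.width * math.cos(self.theta)
        dy = 0.5 * self.width * math.sin(self.theta)
        return (self.cx - dx, self.cy - dy), (self.cx + dx, self.cy + dy)


def is_safe(grasp: DualArmGrasp, min_width: float, max_width: float) -> bool:
    """Check the two constraints directly on the line parameters.

    Assumed rules for this sketch:
    - theta strictly inside (-90, 90) degrees keeps the left point left of
      the right point, so the arms never cross;
    - width >= min_width keeps the grippers from grasping too close together;
    - width <= max_width stands in for a reach limit.
    """
    no_crossing = -math.pi / 2 < grasp.theta < math.pi / 2
    width_ok = min_width <= grasp.width <= max_width
    return no_crossing and width_ok


grasp = DualArmGrasp(cx=0.0, cy=0.0, theta=0.0, width=0.4)
print(grasp.endpoints())                              # ((-0.2, 0.0), (0.2, 0.0))
print(is_safe(grasp, min_width=0.1, max_width=0.7))   # True
```

The point of the design is that both safety checks become simple range tests on two scalars, rather than a geometric test on two independent grasp points.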

What were your main findings?

We found that dynamic actions have three desirable properties over quasi-static actions for the task of cloth unfolding. First, they are efficient – FlingBot achieves over 80% coverage within 3 actions on novel cloths. Second, they are generalizable – trained on only square cloths, FlingBot also generalizes to T-shirts. Third, they expand the system’s effective reach range – even when FlingBot can’t fully lift or stretch a cloth larger than the system’s physical reach range, it’s able to use high velocity flings to unfold the cloth.

After training and evaluating our model in simulation, we deployed and finetuned it on a real-world dual-arm system, which achieves above 80% coverage for all cloth categories. Meanwhile, the quasi-static pick & place baseline was only able to achieve around 40% coverage.

What further work are you planning in this area?

Although we motivated cloth unfolding as a precursor for downstream modules such as cloth state estimation, unfolding could also benefit from state estimation. For instance, if the system is confident it has identified the shoulders of the shirt in its state estimation, the unfolding policy could directly grasp the shoulders and unfold the shirt in one step. Based on this observation, we are currently working on a cloth unfolding and state estimation approach which can learn in a self-supervised manner in the real world.

About the authors

Huy Ha is a Ph.D. student in Computer Science at Columbia University. He is advised by Professor Shuran Song and is a member of the Columbia Artificial Intelligence and Robotics (CAIR) lab.

Shuran Song is an assistant professor in the computer science department at Columbia University, where she directs the Columbia Artificial Intelligence and Robotics (CAIR) Lab. Her research focuses on computer vision and robotics. She’s interested in developing algorithms that enable intelligent systems to learn from their interactions with the physical world, and autonomously acquire the perception and manipulation skills necessary to execute complex tasks and assist people.

Find out more

- Read the paper on arXiv.

- The videos of the real-world experiments and code are available here, as is a video of the authors’ presentation at CoRL.

- Read more about the winning and shortlisted papers for the CoRL awards here.

Pietro Valdastri’s Plenary Talk – Medical capsule robots: a Fantastic Voyage

At the beginning of the new millennium, wireless capsule endoscopy was introduced as a minimally invasive method of inspecting the digestive tract. The possibility of collecting images deep inside the human body just by swallowing a “pill” revolutionized the field of gastrointestinal endoscopy and sparked a brand-new field of research in robotics: medical capsule robots. These are self-contained robots that leverage extreme miniaturization to access and operate in environments that are out of reach for larger devices. In medicine, capsule robots can enter the human body through natural orifices or small incisions, and detect and cure life-threatening diseases in a non-invasive manner. This talk provides a perspective on how this field has evolved in the last ten years. We explore what was accomplished, what failed, and what lessons were learned. We also discuss enabling technologies, intelligent control, and possible levels of computer assistance, and highlight future challenges in this ongoing Fantastic Voyage.

Bio: Pietro Valdastri (Senior Member, IEEE) received the master’s degree (Hons.) from the University of Pisa in 2002, and the Ph.D. degree in biomedical engineering from Scuola Superiore Sant’Anna in 2006. He is a Professor and a Chair of Robotics and Autonomous Systems with the University of Leeds. His research interests include robotic surgery, robotic endoscopy, design of magnetic mechanisms, and medical capsule robots. He is a recipient of the Wolfson Research Merit Award from the Royal Society.