How LAPP Rebuilt Its Inventory Process with Autonomous Drones

Bio-hybrid robots turn food waste into functional machines

Teaching robot policies without new demonstrations: interview with Jiahui Zhang and Jesse Zhang

The ReWiND method, which consists of three phases: learning a reward function, pre-training, and using the reward function and pre-trained policy to learn a new language-specified task online.

The ReWiND method, which consists of three phases: learning a reward function, pre-training, and using the reward function and pre-trained policy to learn a new language-specified task online.

In their paper ReWiND: Language-Guided Rewards Teach Robot Policies without New Demonstrations, which was presented at CoRL 2025, Jiahui Zhang, Yusen Luo, Abrar Anwar, Sumedh A. Sontakke, Joseph J. Lim, Jesse Thomason, Erdem Bıyık and Jesse Zhang introduce a framework for learning robot manipulation tasks solely from language instructions without per-task demonstrations. We asked Jiahui Zhang and Jesse Zhang to tell us more.

What is the topic of the research in your paper, and what problem were you aiming to solve?

Our research addresses the problem of enabling robot manipulation policies to solve novel, language-conditioned tasks without collecting new demonstrations for each task. We begin with a small set of demonstrations in the deployment environment, train a language-conditioned reward model on them, and then use that learned reward function to fine-tune the policy on unseen tasks, with no additional demonstrations required.

Tell us about ReWiND – what are the main features and contributions of this framework?

ReWiND is a simple and effective three-stage framework designed to adapt robot policies to new, language-conditioned tasks without collecting new demonstrations. Its main features and contributions are:

- Reward function learning in the deployment environment

We first learn a reward function using only five demonstrations per task from the deployment environment.- The reward model takes a sequence of images and a language instruction, and predicts per-frame progress from 0 to 1, giving us a dense reward signal instead of sparse success/failure.

- To expose the model to both successful and failed behaviors without having to collect failed behavior demonstrations, we introduce a video rewind augmentation: For a video segmentation V(1:t), we choose an intermediate point t1. We reverse the segment V(t1:t) to create V(t:t1) and append it back to the original sequence. This generates a synthetic sequence that resembles “making progress then undoing progress,” effectively simulating failed attempts.

- This allows the reward model to learn a smoother and more accurate dense reward signal, improving generalization and stability during policy learning.

- Policy pre-training with offline RL

Once we have the learned reward function, we use it to relabel the small demonstration dataset with dense progress rewards. We then train a policy offline using these relabeled trajectories. - Policy fine-tuning in the deployment environment

Finally, we adapt the pre-trained policy to new, unseen tasks in the deployment environment. We freeze the reward function and use it as the feedback for online reinforcement learning. After each episode, the newly collected trajectory is relabeled with dense rewards from the reward model and added to the replay buffer. This iterative loop allows the policy to continually improve and adapt to new tasks without requiring any additional demonstrations.

Could you talk about the experiments you carried out to test the framework?

We evaluate ReWiND in both the MetaWorld simulation environment and the Koch real-world setup. Our analysis focuses on two aspects: the generalization ability of the reward model and the effectiveness of policy learning. We also compare how well different policies adapt to new tasks under our framework, demonstrating significant improvements over state-of-the-art methods.

(Q1) Reward generalization – MetaWorld analysis

We collect a metaworld dataset in 20 training tasks, each task include 5 demos, and 17 related but unseen tasks for evaluation. We train the reward function with the metaworld dataset and a subset of the OpenX dataset.

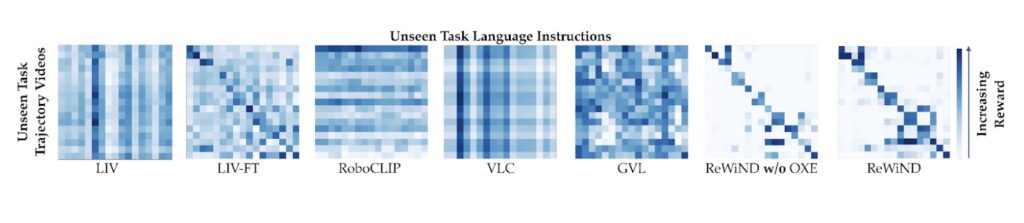

We compare ReWiND to LIV[1], LIV-FT, RoboCLIP[2], VLC[3], and GVL[4]. For generalization to unseen tasks, we use video–language confusion matrices. We feed the reward model video sequences paired with different language instructions and expect the correctly matched video–instruction pairs to receive the highest predicted rewards. In the confusion matrix, this corresponds to the diagonal entries having the strongest (darkest) values, indicating that the reward function reliably identifies the correct task description even for unseen tasks.

Video-language reward confusion matrix. See the paper for more information.

Video-language reward confusion matrix. See the paper for more information.

For demo alignment, we measure the correlation between the reward model’s predicted progress and the actual time steps in successful trajectories using Pearson r and Spearman ρ. For policy rollout ranking, we evaluate whether the reward function correctly ranks failed, near-success, and successful rollouts. Across these metrics, ReWiND significantly outperforms all baselines—for example, it achieves 30% higher Pearson correlation and 27% higher Spearman correlation than VLC on demo alignment, and delivers about 74% relative improvement in reward separation between success categories compared with the strongest baseline LIV-FT.

(Q2) Policy learning in simulation (MetaWorld)

We pre-train on the same 20 tasks and then evaluate RL on 8 unseen MetaWorld tasks for 100k environment steps.

Using ReWiND rewards, the policy achieves an interquartile mean (IQM) success rate of approximately 79%, representing a ~97.5% improvement over the best baseline. It also demonstrates substantially better sample efficiency, achieving higher success rates much earlier in training.

(Q3) Policy learning in real robot (Koch bimanual arms)

Setup: a real-world tabletop bimanual Koch v1.1 system with five tasks, including in-distribution, visually cluttered, and spatial-language generalization tasks.

We use 5 demos for the reward model and 10 demos for the policy in this more challenging setting. With about 1 hour of real-world RL (~50k env steps), ReWiND improves average success from 12% → 68% (≈5× improvement), while VLC only goes from 8% → 10%.

Are you planning future work to further improve the ReWiND framework?

Yes, we plan to extend ReWiND to larger models and further improve the accuracy and generalization of the reward function across a broader range of tasks. In fact, we already have a workshop paper extending ReWiND to larger-scale models.

In addition, we aim to make the reward model capable of directly predicting success or failure, without relying on the environment’s success signal during policy fine-tuning. Currently, even though ReWiND provides dense rewards, we still rely on the environment to indicate whether an episode has been successful. Our goal is to develop a fully generalizable reward model that can provide both accurate dense rewards and reliable success detection on its own.

References

[1] Yecheng Jason Ma et al. “Liv: Language-image representations and rewards for robotic control.” International Conference on Machine Learning. PMLR, 2023.

[2] Sumedh Sontakke et al. “Roboclip: One demonstration is enough to learn robot policies.” Advances in Neural Information Processing Systems 36 (2023): 55681-55693.

[3] Minttu Alakuijala et al. “Video-language critic: Transferable reward functions for language-conditioned robotics.” arXiv:2405.19988 (2024).

[4] Yecheng Jason Ma et al. “Vision language models are in-context value learners.” The Thirteenth International Conference on Learning Representations. 2024.

About the authors

|

Jiahui Zhang is a Ph.D. student in Computer Science at the University of Texas at Dallas, advised by Prof. Yu Xiang. He received his M.S. degree from the University of Southern California, where he worked with Prof. Joseph Lim and Prof. Erdem Bıyık. |

|

Jesse Zhang is a postdoctoral researcher at the University of Washington, advised by Prof. Dieter Fox and Prof. Abhishek Gupta. He completed his Ph.D. at the University of Southern California, advised by Prof. Jesse Thomason and Prof. Erdem Bıyık at USC, and Prof. Joseph J. Lim at KAIST. |

Key Roles of AI Agents in Education Apps

Key Roles of AI Agents in Education Apps