Benefits of Miniature Industrial Robots

Researchers developing fully autonomous robot chef

4 Amazing Advancements in Robotic Grippers to Keep an Eye On

AugLimb: A compact robotic limb to support humans during everyday activities

Reliable Quality Inspection of Plastics With Autonomous Machine Vision

Need a bite at Seattle-Tacoma airport? A robot will now deliver food to you at your gate

10 Factors Warehouse Managers Should Consider for Goods-to-Person Fulfilment

Peachy robot: A glimpse into the peach orchard of the future

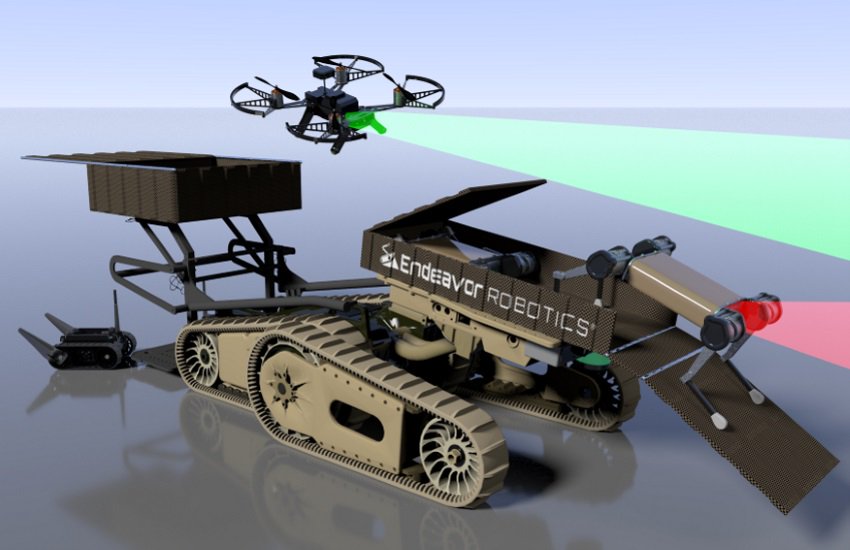

#338: Marsupial Robots, with Chris Lee

Lilly interviews Chris Lee, a graduate student at Oregon State University. Lee explains his research on marsupial robots: heterogeneous robot systems made up of carrier-passenger pairs. They discuss possible applications of marsupial robots, including the DARPA Subterranean Challenge, and some of the technical hurdles, including optimal deployment formulated as a stochastic assignment problem.

Chris Lee

Chris Lee is pursuing a Master of Science in Robotics at Oregon State University, having received a Bachelor of Science in Mechanical Engineering from the University at Buffalo. His research is in robotic exploration, frontier extraction, and stochastic assignment.


Robotics Designed for Harsh Environments

Do Alexa and Siri make kids bossier? New research suggests you might not need to worry

#IROS2020 ‘Black in Robotics’ special series

Alongside Real Roboticist, the original series from IEEE/RSJ IROS 2020 (International Conference on Intelligent Robots and Systems) that we have been featuring in recent weeks, another series of three videos was produced together with Black in Robotics, with the support of Toyota Research Institute. In this series, Black roboticists give personal examples of why diversity matters in robotics, showcase their research, and explain what led them to build a career in robotics.

Here’s a list of all the speakers and organisations who took part in the videos:

- Ariel Anders – Roboticist at Robust.AI

- Allison Okamura – Professor of Mechanical Engineering at Stanford University

- Alivia Blount – Data Scientist

- Anthony Jules – Co-founder and COO at Robust.AI

- Andra Keay – Robotics Industry Futurist, Managing Director of Silicon Valley Robotics and Core Team Member of Robohub

- Carlotta A. Berry – Professor of Electrical and Computer Engineering at Rose-Hulman Institute of Technology

- Donna Auguste – Entrepreneur and Data Scientist

- Clinton Enwerem – Robotics Trainee from the Robotics & Artificial Intelligence Nigeria (RAIN) team

- Quentin Sanders – Postdoctoral Research Fellow at North Carolina State University

- George Okoroafor – Robotics Research Engineer from the Robotics & Artificial Intelligence Nigeria (RAIN) team

- Tatiana Jean-Louis – Amazon & Robotics Geek

- Patrick Musau – Graduate Research Assistant at Vanderbilt University

- Melanie Moses – Professor of Computer Science at the University of New Mexico