The agentic AI development lifecycle

Proof-of-concept AI agents look great in scripted demos, but most never make it to production. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.

This failure pattern is predictable. It rarely comes down to talent, budget, or vendor selection. It comes down to discipline. Building an agent that behaves in a sandbox is straightforward. Building one that holds up under real workloads, inside messy enterprise systems, and under real regulatory pressure is not.

The risk is already on the books, whether leadership admits it or not. Ungoverned agents run in production today. Marketing teams deploy AI wrappers. Sales deploys Slack bots. Operations embeds lightweight agents inside SaaS tools. Decisions get made, actions get triggered, and sensitive data gets touched without shared visibility, a clear owner, or enforceable controls.

The agentic AI development lifecycle exists to end that chaos by bringing every agent into a governed, observable framework and treating agents as extensions of the workforce, not clever experiments.

Key takeaways

- Most agentic AI initiatives stall because teams skip the lifecycle work required to move from demo to deployment. Without a defined path that enforces boundaries, standardizes architecture, validates behavior, and hardens integrations, scale exposes weaknesses that pilots conveniently hide.

- Ungoverned and invisible agents are now one of the most serious enterprise risks. When agents operate outside centralized discovery, observability, and governance, organizations lose the ability to trace decisions, audit behavior, intervene safely, and correct failures quickly. Lifecycle management brings every agent into view, whether approved or not.

- Production-grade agents demand architecture built for change. Modular reasoning and planning layers, paired with open standards and emerging interoperability protocols like MCP and A2A, support extensibility, easier integration, and long-term freedom from vendor lock-in.

- Testing agentic systems requires a reset. Functional testing alone is not enough. Behavioral validation, large-scale stress testing, multi-agent coordination checks, and regression testing are what earn reliability in environments agents were never explicitly trained to handle.

Phases of the agentic AI development lifecycle

Traditional software lifecycles assume deterministic systems, but agentic AI breaks that assumption. These systems take actions, adapt to context, and coordinate across domains, which means reliability must be built in from the start and reinforced continuously.

This lifecycle is unified by design. Builders, operators, and governors aren’t treated as separate phases or separate handoffs. Development, deployment, and governance move together because separation is how fragile agents slip into production.

Every phase exists to absorb risk early. Skip one (or rush one), and the cost returns later through rework, outages, compliance exposure, and integration failures.

Phase 1: Defining the problem and requirements

Effective agent development starts with humans defining clear objectives through data analysis and stakeholder input — along with explicit boundaries:

- Which decisions are autonomous?

- Where does human oversight intervene?

- Which risks are acceptable?

- How will failure be contained?
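One way to make these boundaries explicit is to write them down as data the runtime can enforce, rather than leaving them in a planning document. The sketch below is a minimal illustration under stated assumptions: the decision names, the `AutonomyLevel` values, and the $100 refund limit are hypothetical placeholders, not a standard schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class AutonomyLevel(Enum):
    AUTONOMOUS = "autonomous"          # agent acts without review
    HUMAN_APPROVAL = "human_approval"  # agent proposes, a human approves
    FORBIDDEN = "forbidden"            # agent must never take this action


@dataclass(frozen=True)
class DecisionBoundary:
    decision: str
    level: AutonomyLevel
    max_amount: Optional[float] = None  # above this, a human must approve


# Hypothetical boundaries for a billing-support agent.
BOUNDARIES: Dict[str, DecisionBoundary] = {
    b.decision: b
    for b in [
        DecisionBoundary("answer_billing_question", AutonomyLevel.AUTONOMOUS),
        DecisionBoundary("issue_refund", AutonomyLevel.AUTONOMOUS, max_amount=100.0),
        DecisionBoundary("close_account", AutonomyLevel.HUMAN_APPROVAL),
        DecisionBoundary("change_credit_limit", AutonomyLevel.FORBIDDEN),
    ]
}


def route_decision(decision: str, amount: float = 0.0) -> str:
    """Return 'execute', 'escalate', or 'block' for a proposed action."""
    boundary = BOUNDARIES.get(decision)
    if boundary is None or boundary.level is AutonomyLevel.FORBIDDEN:
        return "block"  # unknown or forbidden decisions are contained by default
    if boundary.level is AutonomyLevel.HUMAN_APPROVAL:
        return "escalate"
    if boundary.max_amount is not None and amount > boundary.max_amount:
        return "escalate"  # over the autonomous limit, so a human intervenes
    return "execute"
```

Blocking any decision that isn't listed is one possible answer to "how will failure be contained?": an agent cannot quietly acquire new powers just because nobody wrote a rule for them.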

KPIs must map to measurable business outcomes, not vanity metrics. Think cost reduction, process efficiency, customer satisfaction — not just the agent’s accuracy. Accuracy without impact is noise. An agent can classify a request correctly and still fail the business if it routes work incorrectly, escalates too late, or triggers the wrong downstream action.

Clear requirements establish the governance logic that constrains agent behavior at scale — and prevent the scope drift that derails most initiatives before they reach production.

Phase 2: Data collection and preparation

Poor data discipline is more costly in agentic AI than in any other context. These are systems making decisions that directly affect real business processes and customer experiences.

AI agents require multi-modal and real-time data. Structured records alone are insufficient. Your agents need access to structured databases, unstructured documents, real-time feeds, and contextual information from your other systems to understand:

- What happened

- When it happened

- Why it matters

- How it relates to other business events

Diverse data exposure expands behavioral coverage. Agents trained across varied scenarios encounter edge cases before production does, making them more adaptive and reliable under dynamic conditions.

Phase 3: Architecture and model design

Your Day 1 architecture choices determine whether agents can scale cleanly or collapse under their own complexity.

Modular architecture with reasoning, planning, and action layers is non-negotiable. Agents need to evolve without full rebuilds. Open standards and emerging interoperability protocols like Model Context Protocol (MCP) and A2A reinforce modularity, improve interoperability, reduce integration friction, and help enterprises avoid vendor lock-in while keeping optionality.

API-first design is equally critical. Agents need to be orchestrated programmatically, not confined to limited proprietary interfaces. If agents can’t be controlled through APIs, they can’t be governed at scale.

Event-driven architecture closes the loop. Agents should respond to business events in real time, not poll systems or wait for manual triggers. This keeps agent behavior aligned with operational reality instead of drifting into side workflows no one owns.
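The difference between polling and event-driven triggering can be sketched with a tiny in-process publish/subscribe bus. In production this role is usually played by a message broker or event-streaming platform; the `EventBus` class and the `invoice.disputed` event name here are hypothetical, chosen only to show an agent reacting to a business event as it happens.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Event = Dict[str, object]
Handler = Callable[[Event], None]


class EventBus:
    """Minimal in-process pub/sub; a stand-in for a real message broker."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: Event) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


handled: List[str] = []


def billing_agent(event: Event) -> None:
    # The agent does nothing until a relevant business event arrives.
    handled.append(f"billing agent reviewing invoice {event['invoice_id']}")


bus = EventBus()
bus.subscribe("invoice.disputed", billing_agent)
delivered = bus.publish("invoice.disputed", {"invoice_id": "INV-1001"})
```

Because `publish` reports how many subscribers received an event, events that no agent owns can be logged rather than silently dropped.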

Governance must live in the architecture. Observability, logging, explainability, and oversight belong in the control plane from the start. Standardized, open architecture is how agentic AI stays an asset instead of becoming long-term technical debt.

The architecture decisions made here directly determine what’s testable in Phase 5 and what’s governable in Phase 7.

Phase 4: Training and validation

A “functionally complete” agent is not the same as a “production-ready” agent. Many teams reach a point where an agent works once, or even a hundred times in controlled environments. The real challenge is reliability at 100x scale, under unpredictable conditions and sustained load. That gap is where most initiatives stall, and why so few pilots survive contact with production.

Iterative training using reinforcement and transfer learning helps, but simulation environments and human feedback loops are necessary for validating decision quality and business impact. You're testing for more than accuracy: you're confirming that the agent makes sound business decisions under pressure.

Phase 5: Testing and quality assurance

Testing agentic systems is fundamentally different from traditional QA. You’re not testing static behavior; you’re testing decision-making, multi-agent collaboration, and context-dependent boundaries.

Three testing disciplines define production readiness:

- Behavioral test suites establish baseline performance across representative tasks.

- Stress testing pushes agents through thousands of concurrent scenarios before production ever sees them.

- Regression testing ensures new capabilities don’t silently degrade existing ones.

Traditional software either works or doesn’t. Agents operate in shades of gray, making decisions with varying degrees of confidence and accuracy. Your testing framework needs to account for that. Metrics like decision reliability, escalation appropriateness, and coordination accuracy matter as much as task completion.
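A minimal sketch of graded scoring plus a regression gate, assuming each test case records whether the agent's decision matched the expected one and whether it escalated when it should have. The field names and the two-percentage-point tolerance are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CaseResult:
    decision_correct: bool  # did the agent choose the expected action?
    should_escalate: bool   # ground truth: did this case need a human?
    did_escalate: bool      # what the agent actually did


def score_suite(results: List[CaseResult]) -> Dict[str, float]:
    """Graded metrics instead of a single pass/fail."""
    n = len(results)
    return {
        "decision_reliability": sum(r.decision_correct for r in results) / n,
        "escalation_appropriateness": sum(
            r.should_escalate == r.did_escalate for r in results
        ) / n,
    }


def regression_gate(
    baseline: Dict[str, float], candidate: Dict[str, float], tolerance: float = 0.02
) -> List[str]:
    """Return the metrics that dropped by more than `tolerance` versus baseline."""
    return [
        name for name, base in baseline.items()
        if candidate.get(name, 0.0) < base - tolerance
    ]


# Hypothetical baseline from the previous release, and a new candidate's results.
baseline = {"decision_reliability": 0.76, "escalation_appropriateness": 0.80}
candidate = score_suite([
    CaseResult(decision_correct=True, should_escalate=False, did_escalate=False),
    CaseResult(decision_correct=True, should_escalate=True, did_escalate=True),
    CaseResult(decision_correct=False, should_escalate=True, did_escalate=False),
    CaseResult(decision_correct=True, should_escalate=False, did_escalate=False),
])
regressions = regression_gate(baseline, candidate)
```

A release pipeline could refuse to promote a candidate whenever `regressions` is non-empty, which is one way to keep new capabilities from silently degrading existing ones.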

Multi-agent interactions demand scrutiny because weak handoffs, resource contention, or information leakage can undermine workflows fast.

When your sales agent hands off to your fulfillment agent, does critical information transfer with it, or does it get lost in translation, or (perhaps worse) is it publicly exposed?
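A handoff check like this can be automated. The sketch below assumes handoffs are passed as dictionaries and that each receiving agent declares which fields it requires and which it must never see; the field names, the contract sets, and the `check_handoff` helper are hypothetical.

```python
from typing import Dict, List, Set


def check_handoff(
    payload: Dict[str, object],
    required: Set[str],
    forbidden: Set[str],
) -> List[str]:
    """Return a list of problems with a handoff payload (empty list means OK)."""
    keys = set(payload)
    problems: List[str] = []
    for name in sorted(required - keys):
        problems.append(f"missing required field: {name}")
    for name in sorted(forbidden & keys):
        problems.append(f"leaked restricted field: {name}")
    return problems


# Hypothetical contract for a sales -> fulfillment handoff.
FULFILLMENT_REQUIRED = {"order_id", "sku", "quantity", "shipping_address"}
FULFILLMENT_FORBIDDEN = {"card_number", "credit_score"}

handoff = {
    "order_id": "SO-42",
    "sku": "WIDGET-7",
    "quantity": 3,
    "card_number": "TEST-CARD-0000",  # should never reach fulfillment
}
issues = check_handoff(handoff, FULFILLMENT_REQUIRED, FULFILLMENT_FORBIDDEN)
```

Here the check catches both failure modes at once: the shipping address was lost in translation, and a payment field leaked to an agent that has no need for it.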

Testing needs to be continuous and aligned with real-world use. Evaluation pipelines should feed directly into observability and governance so failures surface immediately, land with the right teams, and trigger corrective action before the business gets caught in the blast radius.

Production environments will surface scenarios no test suite anticipated. Build systems that detect and respond to unexpected situations gracefully, escalating to human teams when needed.

Phase 6: Deployment and integration

Deployment is where architectural decisions either pay off or expose what was never properly resolved. Agents need to operate across hybrid or on-prem environments, integrate with legacy systems, and scale without surprise costs or performance degradation.

CI/CD pipelines, rollback procedures, and performance baselines are essential in this phase. Agent compute patterns are more demanding and less predictable than traditional applications, so resource allocation, cost controls, and capacity planning must account for agents making autonomous decisions at scale.

Performance baselines establish what “normal” looks like for your agents. When performance eventually degrades (and it will), you need to detect it quickly and identify whether the issue is data, model, or infrastructure.
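A performance baseline can start as simply as a rolling window of recent measurements and a threshold on how far a new value deviates from it. The sketch below uses a z-score over a fixed window; the window size, the three-sigma threshold, the ten-sample warm-up, and the latency metric are assumptions you would tune per agent.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque


class RollingBaseline:
    """Flags values that deviate sharply from a rolling window of recent history."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_history: int = 10) -> None:
        self.values: Deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def observe(self, value: float) -> bool:
        """Record a value; return True if it is anomalous versus the baseline."""
        anomalous = False
        if len(self.values) >= self.min_history:  # need history before judging
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        if not anomalous:
            self.values.append(value)  # keep anomalies out of "normal"
        return anomalous


latency = RollingBaseline(window=50, threshold=3.0)
history = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 103.0, 97.0, 100.0, 101.0]
warmup_flags = [latency.observe(ms) for ms in history]
normal_flag = latency.observe(101.0)
spike_flag = latency.observe(250.0)
```

Keeping anomalous values out of the window means a sustained degradation keeps alerting instead of quietly becoming the new normal. Running one baseline per metric (latency, error rate, cost, input data statistics) is what makes it possible to tell a data problem from a model or infrastructure problem.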

Phase 7: Lifecycle management and governance

The uncomfortable truth: most enterprises already have ungoverned agents in production. Wrappers, bots, and embedded tools operate outside centralized visibility. Traditional monitoring tools can’t even detect many of them, which creates compliance risk, reliability risk, and security blind spots.

Continuous discovery and inventory capabilities identify every agent deployment, whether sanctioned or not. Real-time drift detection catches agents the moment they exceed their intended scope.
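Both ideas reduce to comparisons against a registry. The sketch below assumes you can extract (agent, tool) call records from gateway or network logs; the registry contents, agent IDs, and tool names are hypothetical. Agents seen in the logs but absent from the registry are discovery candidates, and calls to tools outside an agent's declared scope are flagged as drift.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical registry: sanctioned agent ID -> tools it is allowed to call.
REGISTRY: Dict[str, Set[str]] = {
    "billing-agent": {"payments.read", "refunds.create"},
    "support-agent": {"tickets.read", "tickets.update"},
}


def audit_calls(
    calls: Iterable[Tuple[str, str]],
) -> Tuple[Set[str], List[Tuple[str, str]]]:
    """Return (unregistered agents, out-of-scope calls) from observed call records."""
    unregistered: Set[str] = set()
    out_of_scope: List[Tuple[str, str]] = []
    for agent_id, tool in calls:
        allowed = REGISTRY.get(agent_id)
        if allowed is None:
            unregistered.add(agent_id)             # discovery: unknown agent
        elif tool not in allowed:
            out_of_scope.append((agent_id, tool))  # drift: exceeds declared scope
    return unregistered, out_of_scope


observed = [
    ("billing-agent", "payments.read"),
    ("billing-agent", "customers.delete"),
    ("marketing-slackbot", "crm.export"),
]
unknown, drift = audit_calls(observed)
```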

Anomaly detection also surfaces performance issues and security gaps before they escalate into full-blown incidents.

Unifying builders, operators, and governors

Most platforms fragment responsibility. Development lives in one tool, operations in another, governance in a third. That fragmentation creates blind spots, delays accountability, and forces teams to argue over whose dashboard is “right.”

Agentic AI only works when builders, operators, and governors share the same context, the same telemetry, the same controls, and the same inventory. Unification eliminates the gaps where failures hide and projects die.

That means:

- Builders need a production-grade sandbox with full CI/CD integration, not a sandbox disconnected from how agents will actually run.

- Operators need dynamic orchestration and monitoring that reflects what’s happening across the entire agent workforce.

- Governors need end-to-end lineage, audit trails, and compliance controls built into the same system, not bolted on after the fact.

When these roles operate from a shared foundation, failures surface faster, accountability is clearer, and scale becomes manageable.

Ensuring proper governance, security, and compliance

When business users and stakeholders trust that agents operate within defined boundaries, they’re more willing to expand agent capabilities and autonomy.

That’s what governance ultimately gets you. When governance is bolted on as an afterthought instead, every new use case becomes a compliance review that slows deployment.

Traceability and accountability don’t happen by accident. They require audit logging, responsible AI standards, and documentation that holds up under regulatory scrutiny — built in from the start, not assembled under pressure.

Governance frameworks

Approval workflows, access controls, and performance audits create the structure needed to expand agent autonomy in a controlled way. Role-based permissions separate development, deployment, and oversight responsibilities without creating silos that slow progress.

Centralized agent registries provide visibility into what agents exist, what they do, and how they’re performing. This visibility reduces duplicate effort and surfaces opportunities for agent collaboration.

Security and responsible AI

Security for agentic AI goes beyond traditional cybersecurity. The decision-making process itself must be secured — not just the data and infrastructure around it. Zero-trust principles, encryption, role-based access, and anomaly detection need to work together to protect both agent decision logic and the data agents operate on.

Explainable decision-making and bias detection help maintain compliance with regulations that require algorithmic transparency. When agents make decisions that affect customers, employees, or business outcomes, the ability to explain and justify those decisions isn’t optional.

Transparency also provides board-level confidence. When leadership understands how agents make decisions and what safeguards are in place, expanding agent capabilities becomes a strategic conversation rather than a governance hurdle.

Scaling from pilot to agent workforce

Scaling multiplies complexity fast. Managing a handful of agents is straightforward. Coordinating dozens to operate like members of your workforce is not.

This is the shift from “project AI” to “production AI,” where you’re moving from proving agents can work to proving they can work reliably at enterprise scale.

The coordination challenges are concrete:

- In finance, fraud detection agents need to share intelligence with risk assessment agents in real time.

- In healthcare, diagnostic agents need to coordinate with treatment recommendation agents without information loss.

- In manufacturing, quality control agents need to communicate with supply chain optimization agents before problems compound.

Early coordination decisions determine whether scale creates leverage, creates conflict, or creates risk. Get the orchestration architecture right before the complexity multiplies.

Agent improvement and flywheel

Post-deployment learning separates good agents from great ones. But the feedback loop needs to be systematic, not accidental.

The cycle is straightforward:

Observe → Diagnose → Validate → Deploy

Automated feedback captures performance metrics and pass/fail outcome data, while human-in-the-loop feedback provides the context and qualitative assessment that automated systems can’t generate on their own. Together, they create a continuous improvement mechanism that gets smarter as the agent workforce grows.

Managing infrastructure and consumption

Resource allocation and capacity planning must account for how differently agents consume infrastructure compared to traditional applications. A conventional app has predictable load curves. Agents can sit idle for hours, then process thousands of requests the moment a business event triggers them.

That unpredictability turns infrastructure planning into a business risk if it’s not managed deliberately. As agent portfolios grow, cost doesn’t increase linearly. It jumps, sometimes without warning, unless guardrails are already in place.

The difference at scale is significant:

- Three agents handling 1,000 requests daily might cost $500 monthly.

- Fifty agents handling 100,000 requests daily (with traffic bursts) could cost $50,000 monthly, but might also generate millions in additional revenue or cost savings.
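Working the two illustrative figures down to a per-request cost shows what they assume. The snippet below assumes a 30-day month. Both scenarios come out to roughly 1.7 cents per request, so the jump from $500 to $50,000 in this example comes entirely from volume; the unplanned jumps described above (traffic bursts, cascading calls, growing context) would push the per-request figure higher on top of that.

```python
DAYS_PER_MONTH = 30  # assumption for the arithmetic


def cost_per_request(monthly_cost: float, requests_per_day: int) -> float:
    """Average cost of one request, given a monthly bill and daily volume."""
    return monthly_cost / (requests_per_day * DAYS_PER_MONTH)


small = cost_per_request(500, 1_000)       # three agents
large = cost_per_request(50_000, 100_000)  # fifty agents

print(f"small: ${small:.4f}/request, large: ${large:.4f}/request")
# prints: small: $0.0167/request, large: $0.0167/request
```

Tracking cost per request (or per successful outcome) alongside the monthly total is what makes non-linear growth visible: if the monthly bill grows faster than volume, the per-request number rises.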

The goal is infrastructure controls that prevent cost surprises without constraining the scaling that drives business value. That means automated scaling policies, cost alerts, and resource optimization that learns from agent behavior patterns over time.

The future of work with agentic AI

Agentic AI works best when it enhances human teams, freeing people to focus on what human judgment does best: strategy, creativity, and relationship-building.

The most successful implementations create new roles rather than eliminate existing ones:

- AI supervisors monitor and guide agent behavior.

- Orchestration engineers design multi-agent workflows.

- AI ethicists oversee responsible deployment and operation.

These roles reflect a broader shift: as agents take on more execution, humans move toward oversight, design, and accountability.

Treat the agentic AI lifecycle as a system, not a checklist

Moving agentic AI from pilot to production requires more than capable technology. It takes executive sponsorship, honest audits of existing AI initiatives and legacy systems, carefully selected use cases, and governance that scales with organizational ambition.

The connections between components matter as much as the components themselves. Development, deployment, and governance that operate in silos produce fragile agents. Unified, they produce an AI workforce that can carry real enterprise responsibility.

The difference between organizations that scale agentic AI and those stuck in pilot purgatory rarely comes down to the sophistication of individual tools. It comes down to whether the entire lifecycle is treated as a system, not a checklist.

Learn how DataRobot’s Agent Workforce Platform helps enterprise teams move from proof of concept to production-grade agentic AI.

FAQs

How is the agentic AI lifecycle different from a standard MLOps or software lifecycle?

Traditional SDLC and MLOps lifecycles were designed for deterministic systems that follow fixed code paths or single model predictions. The agentic AI lifecycle accounts for autonomous decision-making, multi-agent coordination, and continuous learning in production. It adds phases and practices focused on autonomy boundaries, behavioral testing, ongoing discovery of new agents, and governance that covers every action an agent takes, not just its model output.

Where do most agentic AI projects actually fail?

Most projects do not fail in early prototyping. They fail when teams try to move from a successful proof of concept into production. That is where gaps in architecture, testing, observability, and governance show up. Agents that behaved well in a controlled environment start to drift, break integrations, or create compliance risk at scale. The lifecycle in this article is designed to close that “functionally complete versus production-ready” gap.

What should enterprises do if they already have ungoverned agents in production?

The first step is discovery, not shutdown. You need an accurate inventory of every agent, wrapper, and bot that touches critical systems before you can govern them. From there, you can apply standardization: define autonomy boundaries, introduce monitoring and drift detection, and bring those agents under a central governance model. DataRobot gives you a single place to register, observe, and control both new and existing agents.

How does this lifecycle work with the tools and frameworks our teams already use?

The lifecycle is designed to be tool-agnostic and standards-friendly. Developers can keep building with their preferred frameworks and IDEs while targeting an API-first, event-driven architecture that uses standards and emerging interoperability protocols like MCP and A2A. DataRobot complements this by providing CLI, SDKs, notebooks, and codespaces that plug into existing workflows, while centralizing observability and governance across teams.

Where does DataRobot fit in if we already have monitoring and governance tools?

Many enterprises have solid pieces of the stack, but they live in silos. One team owns infra monitoring, another owns model tracking, a third manages policy and audits. DataRobot’s Agent Workforce Platform is designed to sit across these efforts and unify them around the agent lifecycle. It provides cross-environment observability, governance that covers predictive, generative, and agentic workflows, and shared views for builders, operators, and governors so you can scale agents without stitching together a new toolchain for every project.

The post The agentic AI development lifecycle appeared first on DataRobot.

Your agentic AI pilot worked. Here’s why production will be harder.

Scaling agentic AI in the enterprise is an engineering problem that most organizations dramatically underestimate — until it’s too late.

Think about a Formula 1 car. It’s an engineering marvel, optimized for one environment, one set of conditions, one problem. Put it on a highway, and it fails immediately. Wrong infrastructure, wrong context, built for the wrong scale.

Enterprise agentic AI has the same problem. The demo works beautifully. The pilot impresses the right people. Then someone says, “Let’s scale this,” and everything that made it look so promising starts to crack. The architecture wasn’t built for production conditions. The governance wasn’t designed for real consequences. The coordination that worked across five agents breaks down across fifty.

That gap between “look what our agent can do” and “our agents are driving ROI across the organization” isn’t primarily a technology problem. It’s an architecture, governance, and organizational problem. And if you’re not designing for scale from day one, you’re not building a production system. You’re building a very expensive demo.

This post is the technical practitioner’s guide to closing that gap.

Key takeaways

- Scaling agentic applications requires a unified architecture, governance, and organizational readiness to move beyond pilots and achieve enterprise-wide impact.

- Modular agent design and strong multi-agent coordination are essential for reliability at scale.

- Real-time observability, auditability, and permissions-based controls ensure safe, compliant operations across regulated industries.

- Enterprise teams must identify hidden cost drivers early and track agent-specific KPIs to maintain predictable performance and ROI.

- Organizational alignment, from leadership sponsorship to team training, is just as critical as the underlying technical foundation.

What makes agentic applications different at enterprise scale

Not all agentic use cases are created equal, and practitioners need to know the difference before committing architecture decisions to a use case that isn’t ready for production.

The use cases with the clearest production traction today are document processing and customer service. Document processing agents handle thousands of documents daily with measurable ROI. Customer service agents scale well when designed with clear escalation paths and human-in-the-loop checkpoints.

When a customer contacts support about a billing error, the agent accesses payment history, identifies the cause, resolves the issue, and escalates to a human rep when the situation requires it. Each interaction informs the next. That’s the pattern that scales: clear objectives, defined escalation paths, and human-in-the-loop checkpoints where they matter.

Other use cases, including autonomous supply chain optimization and financial trading, remain largely experimental. The differentiator isn’t capability. It’s the reversibility of decisions, the clarity of success metrics, and how tractable the governance requirements are.

Use cases where agents can fail gracefully and humans can intervene before material harm occurs are scaling today. Use cases requiring real-time autonomous decisions with significant business consequences are not.

That distinction should drive your architecture decisions from day one.

Why agentic AI breaks down at scale

What works with five agents in a controlled environment breaks at fifty agents across multiple departments. The failure modes aren’t random. They’re predictable, and they compound.

Technical complexity explodes

Coordinating a handful of agents is manageable. Coordinating thousands while maintaining state consistency, ensuring proper handoffs, and preventing conflicts requires orchestration that most teams haven’t built before.

When a customer service agent needs to coordinate with inventory, billing, and logistics agents simultaneously, each interaction creates new integration points and new failure risks.

Every additional agent multiplies that surface area. When something breaks, tracing the failure across dozens of interdependent agents isn’t just difficult — it’s a different class of debugging problem entirely.

Governance and compliance risks multiply

Governance is the challenge most likely to derail scaling efforts. Without auditable decision paths for every request and every action, legal, compliance, and security teams will block production deployment. They should.

A misconfigured agent in a pilot generates bad recommendations. A misconfigured agent in production can violate HIPAA, trigger SEC investigations, or cause supply chain disruptions that cost millions. The stakes aren’t comparable.

Enterprises don’t reject scaling because agents fail technically. They reject it because they can’t prove control.

Costs spiral out of control

What looks affordable in testing becomes budget-breaking at scale. The cost drivers that hurt most aren’t the obvious ones. Cascading API calls, growing context windows, orchestration overhead, and non-linear compute costs don’t show up meaningfully in pilots. They show up in production, at volume, when it’s expensive to change course.

A single customer service interaction might cost $0.02 in isolation. Add inventory checks, shipping coordination, and error handling, and that cost multiplies before you’ve processed a fraction of your daily volume.

None of these challenges make scaling impossible. But they make intentional architecture and early cost instrumentation non-negotiable. The next section covers how to build for both.

How to build a scalable agentic architecture

The architecture decisions you make early will determine whether your agentic applications scale gracefully or collapse under their own complexity. There’s no retrofitting your way out of bad foundational choices.

Start with modular design

Monolithic agents are how teams accidentally sabotage their own scaling efforts.

They feel efficient at first: one agent, one deployment, one place to manage logic. But as soon as volume, compliance requirements, or real users enter the picture, that agent becomes an unmaintainable bottleneck with too many responsibilities and zero resilience.

Modular agents with narrow scopes fix this. In customer service, split the work between orders, billing, and technical support. Each agent becomes deeply competent in its domain instead of vaguely capable at everything. When demand surges, you scale precisely what’s under strain. When something breaks, you know exactly where to look.

Plan for multi-agent coordination

Building capable individual agents is the easy part. Getting them to work together without duplicating effort, conflicting on decisions, or creating untraceable failures at scale is where most teams underestimate the problem.

Hub-and-spoke architectures use a central orchestrator to manage state, route tasks, and keep agents aligned. They work well for defined workflows, but the central controller becomes a bottleneck as complexity grows.

Fully decentralized peer-to-peer coordination offers flexibility, but don’t use it in production. When agents negotiate directly without central visibility, tracing failures becomes nearly impossible. Debugging is a nightmare.

The most effective pattern in enterprise environments is the supervisor-coordinator model with shared context. A lightweight routing agent dispatches tasks to domain-specific agents while maintaining centralized state. Agents operate independently without blocking each other, but coordination stays observable and debuggable.
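A minimal sketch of the supervisor-coordinator pattern, assuming Python's `asyncio` for concurrency. A lightweight router dispatches subtasks to domain agents, the agents run concurrently without blocking each other, and every dispatch and result lands in one shared context that stays inspectable. The keyword routing, the `SharedContext` class, and the three stub agents are hypothetical stand-ins for real classification logic and real agents.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable, Dict, List


@dataclass
class SharedContext:
    """Centralized state: every dispatch and result is recorded here."""
    trace: List[str] = field(default_factory=list)
    results: Dict[str, str] = field(default_factory=dict)


AgentFn = Callable[[str, SharedContext], Awaitable[str]]


def make_agent(domain: str) -> AgentFn:
    async def agent(task: str, ctx: SharedContext) -> str:
        await asyncio.sleep(0)  # stand-in for real I/O (model call, API request)
        return f"{domain} handled: {task}"
    return agent


AGENTS: Dict[str, AgentFn] = {d: make_agent(d) for d in ("orders", "billing", "support")}


def route(task: str) -> str:
    """Hypothetical keyword router; a real system might use a classifier or a model."""
    text = task.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "ship" in text or "order" in text:
        return "orders"
    return "support"


async def supervise(tasks: List[str]) -> SharedContext:
    ctx = SharedContext()
    routed = [(task, route(task)) for task in tasks]
    for task, domain in routed:
        ctx.trace.append(f"dispatch {domain}: {task}")
    # Domain agents run concurrently; gather returns results in input order.
    outputs = await asyncio.gather(*(AGENTS[d](t, ctx) for t, d in routed))
    for (task, _domain), output in zip(routed, outputs):
        ctx.results[task] = output
    return ctx


context = asyncio.run(supervise(["Where is my order?", "Refund invoice 17", "Reset password"]))
```

Because the router only dispatches and records, it stays lightweight, while the shared trace keeps coordination debuggable in a way that direct peer-to-peer negotiation does not.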

Leverage vendor-agnostic integrations

Vendor lock-in kills adaptability. When your architecture depends on specific providers, you lose flexibility, negotiating power, and resilience.

Build for portability from the start:

- Abstraction layers that let you swap model providers or tools without rebuilding agent logic

- Wrapper functions around external APIs, so provider-specific changes don’t propagate through your system

- Standardized data formats across agents to prevent integration debt

- Fallback providers for your most important services, so a single outage doesn’t take down production

When a provider’s API goes down or pricing changes, your agents route to alternatives without disruption. The same architecture supports hybrid deployments, letting you assign different providers to different agent types based on performance, cost, or compliance requirements.
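The abstraction-plus-fallback idea can be sketched in a few lines. The `ModelProvider` protocol, the `ProviderError` exception, and the two stub providers below are hypothetical; in practice each adapter would wrap a real vendor SDK, and agent code would depend only on the protocol.

```python
from typing import List, Protocol, Tuple


class ProviderError(Exception):
    """Raised by an adapter when its underlying provider fails."""


class ModelProvider(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class PrimaryProvider:
    name = "primary"

    def __init__(self, available: bool = True) -> None:
        self.available = available

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ProviderError("primary provider outage")
        return f"[primary] {prompt}"


class BackupProvider:
    name = "backup"

    def complete(self, prompt: str) -> str:
        return f"[backup] {prompt}"


def complete_with_fallback(providers: List[ModelProvider], prompt: str) -> Tuple[str, str]:
    """Try providers in order; return (provider name, completion)."""
    errors: List[str] = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderError as exc:
            errors.append(f"{provider.name}: {exc}")  # record and try the next one
    raise ProviderError("all providers failed: " + "; ".join(errors))


primary_result = complete_with_fallback([PrimaryProvider(), BackupProvider()], "summarize ticket 88")
outage_result = complete_with_fallback(
    [PrimaryProvider(available=False), BackupProvider()], "summarize ticket 88"
)
```

Since agents only see `complete_with_fallback`, reordering providers per agent type (for cost, performance, or compliance) is a configuration change rather than a rewrite.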

Ensure real-time monitoring and logging

Without real-time observability, scaling agents is reckless.

Autonomous systems make decisions faster than humans can track. Without deep visibility, teams lose situational awareness until something breaks in public.

Effective monitoring operates across three layers:

- Individual agents for performance, efficiency, and decision quality

- The system for coordination issues, bottlenecks, and failure patterns

- Business outcomes to confirm that autonomy is delivering measurable value

The goal isn’t more data, though. It’s better answers. Monitoring should let you trace all agent interactions, diagnose failures with confidence, and catch degradation early enough to intervene before it reaches production impact.
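Tracing every agent interaction usually means emitting structured spans that share a trace ID across agents, in the spirit of distributed tracing standards such as OpenTelemetry. The sketch below is a self-contained stand-in, not OpenTelemetry's API; the span fields and the `Tracer` class are assumptions. It shows how one record format can serve all three layers: per-agent error rates, per-request (system) failure paths, and a business outcome attached to the same trace.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Span:
    trace_id: str
    agent: str
    action: str
    ok: bool
    duration_ms: float
    parent: Optional[str] = None
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])


class Tracer:
    def __init__(self) -> None:
        self.spans: List[Span] = []
        self.outcomes: Dict[str, str] = {}  # trace_id -> business outcome

    def record(self, trace_id: str, agent: str, action: str, ok: bool,
               started: float, parent: Optional[str] = None) -> Span:
        elapsed_ms = (time.perf_counter() - started) * 1000
        span = Span(trace_id, agent, action, ok, elapsed_ms, parent)
        self.spans.append(span)
        return span

    def failure_path(self, trace_id: str) -> List[str]:
        """System layer: which agents failed within one end-to-end request."""
        return [s.agent for s in self.spans if s.trace_id == trace_id and not s.ok]

    def agent_error_rate(self, agent: str) -> float:
        """Agent layer: share of this agent's spans that failed."""
        mine = [s for s in self.spans if s.agent == agent]
        return sum(not s.ok for s in mine) / len(mine) if mine else 0.0


tracer = Tracer()
trace = uuid.uuid4().hex
t0 = time.perf_counter()
root = tracer.record(trace, "supervisor", "route", True, t0)
tracer.record(trace, "inventory-agent", "check_stock", False, t0, parent=root.span_id)
tracer.outcomes[trace] = "order_delayed"  # business layer: what the customer experienced
```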

Managing governance, compliance, and risk

Agentic AI without governance is a lawsuit in progress. Autonomy at scale magnifies everything, including mistakes. One bad decision can trigger regulatory violations, reputational damage, and legal exposure that outlasts any pilot success.

Agents need sharply defined permissions. Who can access what, when, and why must be explicit. Financial agents have no business touching healthcare data. Customer service agents shouldn’t modify operational records. Context matters, and the architecture needs to enforce it.

Static rules aren’t enough. Permissions need to respond to confidence levels, risk signals, and situational context in real time. The more uncertain the scenario, the tighter the controls should get automatically.
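A sketch of permissions that combine a static scope check with a dynamic, risk-aware threshold. The data domains, risk tiers, and confidence thresholds are hypothetical values for illustration. The required confidence rises with the risk of the action, and anything below the bar is escalated instead of executed.

```python
from typing import Dict, Set

# Static layer: which data domains each agent may touch at all (hypothetical).
SCOPES: Dict[str, Set[str]] = {
    "finance-agent": {"ledger", "invoices"},
    "support-agent": {"tickets", "orders"},
}

# Dynamic layer: minimum confidence required per risk tier (hypothetical values).
MIN_CONFIDENCE: Dict[str, float] = {"low": 0.6, "medium": 0.8, "high": 0.95}


def authorize(agent: str, domain: str, risk: str, confidence: float) -> str:
    """Return 'allow', 'escalate', or 'deny' for a proposed action."""
    if domain not in SCOPES.get(agent, set()):
        return "deny"  # out of scope regardless of confidence
    required = MIN_CONFIDENCE.get(risk)
    if required is None:
        return "escalate"  # unknown risk tier: let a human decide
    return "allow" if confidence >= required else "escalate"
```

The same 0.9 confidence that clears a low-risk order lookup is not enough for a high-risk change, which is the "tighter controls as uncertainty grows" behavior described above.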

Auditability is your insurance policy. Every meaningful decision should be traceable, explainable, and defensible. When regulators ask why an action was taken, you need an answer that stands up to scrutiny.
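One common way to make an audit trail more defensible is to make it tamper-evident: each entry stores a hash of the previous entry, so editing or deleting a past record breaks the chain. The sketch below uses SHA-256 from Python's standard library; the record fields (agent, action, inputs, rationale, policy version) are assumptions about what a reviewer would need, not a regulatory schema, and a real log would also carry timestamps and durable storage.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained log of agent decisions."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(body: Dict[str, Any]) -> str:
        # Canonical JSON (sorted keys) so identical records always hash identically.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, agent: str, action: str, inputs: Dict[str, Any],
               rationale: str, policy_version: str) -> Dict[str, Any]:
        body = {
            "agent": agent,
            "action": action,
            "inputs": inputs,
            "rationale": rationale,
            "policy_version": policy_version,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False means some entry was altered or removed."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("billing-agent", "issue_refund", {"invoice": "INV-7", "amount": 40},
           "duplicate charge confirmed", "refund-policy-v3")
log.append("billing-agent", "escalate", {"invoice": "INV-9", "amount": 900},
           "amount above autonomous limit", "refund-policy-v3")
intact_before = log.verify()                # True
log.entries[0]["inputs"]["amount"] = 4000   # simulate tampering with history
intact_after = log.verify()                 # False
```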

Across industries, the details change, but the demand is universal: prove control, prove intent, prove compliance. AI governance isn’t what slows down scaling. It’s what makes scaling possible.

Optimizing costs and tracking the right metrics

Cheaper APIs aren’t the answer. You need systems that deliver predictable performance at sustainable unit economics. That requires understanding where costs actually come from.

1. Identify hidden cost drivers

The costs that kill agentic AI projects aren’t the obvious ones. LLM API calls add up, but the real budget pressure comes from:

- Cascading API calls: One agent triggers another, which triggers a third, and costs compound with every hop.

- Context window growth: Agents maintaining conversation history and cross-workflow coordination accumulate tokens fast.

- Orchestration overhead: Coordination complexity adds latency and cost that doesn’t show up in per-call pricing.
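Context growth is easy to underestimate because it compounds. If an agent resends the full conversation history on every turn, the tokens billed per turn grow linearly with the number of turns, so the total over a conversation grows roughly quadratically. The sketch below assumes a fixed number of new tokens per turn and a flat per-token price; both numbers are placeholders, not real vendor pricing.

```python
def conversation_tokens(turns: int, tokens_per_turn: int, system_tokens: int = 0) -> int:
    """Total input tokens billed when every turn resends the full history."""
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn        # the new turn joins the history
        total += system_tokens + history  # the whole history is sent again
    return total


PRICE_PER_1K_TOKENS = 0.001  # placeholder price, not a real vendor rate

for turns in (5, 10, 20):
    tokens = conversation_tokens(turns, tokens_per_turn=400, system_tokens=1_000)
    print(turns, tokens, f"${tokens / 1000 * PRICE_PER_1K_TOKENS:.4f}")
# total tokens: 11000, 32000, 104000
```

In this example, doubling a conversation from 10 to 20 turns more than triples the tokens billed, which is why trimming or summarizing history is a common cost control.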

A single customer service interaction might cost $0.02 on its own. Add an inventory check ($0.01) and shipping coordination ($0.01), and that cost doubles before you’ve accounted for retries, error handling, or coordination overhead. With thousands of daily interactions, the math becomes a serious problem.
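The arithmetic in that example can be made explicit, including the retries and coordination overhead it leaves out. The $0.02, $0.01, and $0.01 figures come from the text; the 10% retry rate, the 15% orchestration overhead, and the 5,000 daily interactions are assumptions added purely for illustration.

```python
from typing import List

BASE_INTERACTION = 0.02       # from the example above
INVENTORY_CHECK = 0.01
SHIPPING_COORDINATION = 0.01

RETRY_RATE = 0.10              # assumption: 10% of calls are retried once
ORCHESTRATION_OVERHEAD = 0.15  # assumption: 15% extra for routing and coordination
DAILY_INTERACTIONS = 5_000     # assumption


def interaction_cost(call_costs: List[float], retry_rate: float, overhead: float) -> float:
    """Cost of one end-to-end interaction across several agent calls."""
    calls = sum(call_costs)                  # 0.04, double the single-call cost
    with_retries = calls * (1 + retry_rate)  # retried calls are billed again
    return with_retries * (1 + overhead)     # coordination overhead on top


per_interaction = interaction_cost(
    [BASE_INTERACTION, INVENTORY_CHECK, SHIPPING_COORDINATION],
    RETRY_RATE,
    ORCHESTRATION_OVERHEAD,
)
daily = per_interaction * DAILY_INTERACTIONS
naive_daily = BASE_INTERACTION * DAILY_INTERACTIONS  # what the pilot math suggested

print(f"${per_interaction:.4f} per interaction, ${daily:,.2f} per day")
# prints: $0.0506 per interaction, $253.00 per day
```

Under these assumptions the real daily cost is about 2.5 times the $100 a single-call estimate would predict, before any growth in volume.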

2. Define KPIs for enterprise AI

Response time and uptime tell you whether your system is running. They don’t tell you whether it’s working. Agentic AI requires a different measurement framework:

Operational effectiveness

- Autonomy rate: percentage of tasks completed without human intervention

- Decision quality score: how often agent decisions align with expert judgment or target outcomes

- Escalation appropriateness: whether agents escalate the right cases, not just the hard ones

Learning and adaptation

- Feedback incorporation rate: how quickly agents improve based on new signals

- Context utilization efficiency: whether agents use available context effectively or wastefully

Cost efficiency

- Cost per successful outcome: total cost relative to value delivered

- Token efficiency ratio: output quality relative to tokens consumed

- Tool and agent call volume: a proxy for coordination overhead

Risk and governance

- Confidence calibration: whether agent confidence scores reflect actual accuracy

- Guardrail trigger rate: how often safety controls activate, and whether that rate is trending in the right direction
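Several of these KPIs fall out of ordinary interaction records. The sketch below assumes a hypothetical `Interaction` record and computes five of them:

- autonomy rate
- escalation precision, meaning the share of escalations an expert agreed with, used here as one possible proxy for escalation appropriateness
- cost per successful outcome
- guardrail trigger rate
- expected calibration error, one common way to quantify confidence calibration

The field names and bin count are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    # Hypothetical fields; map them to whatever your traces actually record.
    human_intervened: bool
    escalated: bool
    should_escalate: bool    # label from expert review
    succeeded: bool          # did the outcome meet the target?
    confidence: float        # agent's self-reported confidence, 0..1
    cost: float              # total spend for the interaction, USD
    guardrail_triggered: bool


def kpis(rows, bins=5):
    n = len(rows)
    escalated = [r for r in rows if r.escalated]
    successes = sum(r.succeeded for r in rows)

    # Expected calibration error: the gap between stated confidence and
    # observed success rate, weighted by how many rows fall in each bin.
    ece = 0.0
    for b in range(bins):
        lo, hi = b / bins, (b + 1) / bins
        in_bin = [r for r in rows
                  if lo <= r.confidence < hi or (b == bins - 1 and r.confidence == 1.0)]
        if in_bin:
            avg_conf = sum(r.confidence for r in in_bin) / len(in_bin)
            accuracy = sum(r.succeeded for r in in_bin) / len(in_bin)
            ece += len(in_bin) / n * abs(avg_conf - accuracy)

    return {
        "autonomy_rate": sum(not r.human_intervened for r in rows) / n,
        "escalation_precision": (sum(r.should_escalate for r in escalated) / len(escalated)
                                 if escalated else None),
        "cost_per_successful_outcome": (sum(r.cost for r in rows) / successes
                                        if successes else float("inf")),
        "guardrail_trigger_rate": sum(r.guardrail_triggered for r in rows) / n,
        "calibration_error": ece,
    }


# Field order: human_intervened, escalated, should_escalate, succeeded,
# confidence, cost, guardrail_triggered.
rows = [
    Interaction(False, False, False, True, 0.9, 0.04, False),
    Interaction(False, False, False, True, 0.8, 0.05, False),
    Interaction(True, True, True, True, 0.4, 0.08, False),
    Interaction(True, True, False, False, 0.5, 0.06, True),
]
print(kpis(rows))
```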

3. Iterate with continuous feedback loops

Agents that don’t learn don’t belong in production.

At enterprise scale, deploying once and moving on isn’t a strategy. Static systems decay, but smart systems adapt. The difference is feedback.

The agents that succeed are surrounded by learning loops: A/B testing different strategies, reinforcing outcomes that deliver value, and capturing human judgment when edge cases arise. Not because humans are better, but because they provide the signals agents need to improve.

You don’t reduce customer service costs by building a perfect agent. You reduce costs by teaching agents continuously. Over time, they handle more complex cases autonomously and escalate only when it matters, giving you cost reduction driven by learning.

Organizational readiness is half the problem

Technology only gets you halfway there. The rest is organizational readiness, which is where most agentic AI initiatives quietly stall out.

Get leadership aligned on what this actually requires

The C-suite needs to understand that agentic AI changes operating models, accountability structures, and risk profiles. That’s a harder conversation than budget approval. Leaders need to actively sponsor the initiative when business processes change and early missteps generate skepticism.

Frame the conversation around outcomes specific to agentic AI:

- Faster autonomous decision-making

- Reduced operational overhead from human-in-the-loop bottlenecks

- Competitive advantage from systems that improve continuously

Be direct about the investment required and the timeline for returns. Surprises at this level kill programs.

Upskilling has to cut across roles

Hiring a few AI experts and hoping the rest of your teams catch up isn’t a plan. Every role that touches an agentic system needs relevant training. Engineers build and debug. Operations teams keep systems running. Analysts optimize performance. Gaps at any stage become production risks.

Culture needs to shift

Business users need to learn how to work alongside agentic systems. That means knowing when to trust agent recommendations, how to provide useful feedback, and when to escalate. These aren’t instinctive behaviors — they have to be taught and reinforced.

Moving from “AI as threat” to “AI as partner” doesn’t happen through communication plans. It happens when agents demonstrably make people’s jobs easier, and leaders are transparent about how decisions get made and why.

Build a readiness checklist before you scale

Before expanding beyond a pilot, confirm you have the following in place:

- Executive sponsors committed for the long term, not just the launch

- Cross-functional teams with clear ownership at every lifecycle stage

- Success metrics tied directly to business objectives, not just technical performance

- Training programs developed for all roles that will touch production systems

- A communication plan that addresses how agentic decisions get made and who is accountable

Turning agentic AI into measurable business impact

Scale doesn’t care how well your pilot performed. Each stage of deployment introduces new constraints, new failure modes, and new definitions of success. The enterprises that get this right move through four stages deliberately:

- Pilot: Prove value in a controlled environment with a single, well-scoped use case.

- Departmental: Expand to a full business unit, stress-testing architecture and governance at real volume.

- Enterprise: Coordinate agents across the organization, introducing new use cases against a proven foundation.

- Optimization: Continuously improve performance, reduce costs, and expand agent autonomy where it’s earned.

What works at 10 users breaks at 100. What works in one department breaks at enterprise scale. Reaching full deployment means balancing production-grade technology with realistic economics and an organization willing to change how decisions get made.

When those elements align, agentic AI stops being an experiment. Decisions move faster, operational costs drop, and the gap between your capabilities and your competitors’ widens with every iteration.

The DataRobot Agent Workforce Platform provides the production-grade infrastructure, built-in governance, and scalability that make this journey possible.

Start with a free trial and see what enterprise-ready agentic AI actually looks like in practice.

FAQs

How do agentic applications differ from traditional automation?

Traditional automation executes fixed rules. Agentic applications perceive context, reason about next steps, act autonomously, and improve based on feedback. The key difference is adaptability under conditions that weren’t explicitly scripted.

Why do most agentic AI pilots fail to scale?

The most common blocker isn’t technical failure — it’s governance. Without auditable decision chains, legal and compliance teams block production deployment. Multi-agent coordination complexity and runaway compute costs are close behind.

What architectural decisions matter most for scaling agentic AI?

Modular agents, vendor-agnostic integrations, and real-time observability. These prevent dependency issues, enable fault isolation, and keep coordination debuggable as complexity grows.

How can enterprises control the costs of scaling agentic AI?

Instrument for hidden cost drivers early: cascading API calls, context window growth, and orchestration overhead. Track token efficiency ratio, cost per successful outcome, and tool call volume alongside traditional performance metrics.

What organizational investments are necessary for success?

Long-term executive sponsorship, role-specific training across every team that touches production systems, and governance frameworks that can prove control to regulators. Technical readiness without organizational alignment is how scaling efforts stall.

The post Your agentic AI pilot worked. Here’s why production will be harder. appeared first on DataRobot.

What to look for when evaluating AI agent monitoring capabilities

Your AI agents are making hundreds — sometimes thousands — of decisions every hour. Approving transactions. Routing customers. Triggering downstream actions you don’t directly control.

Here’s the uncomfortable question most enterprise leaders can’t answer with confidence: Do you actually know what those agents are doing?

If that question gives you pause, you’re not alone. Many organizations deploy agentic AI, wire up basic dashboards, and assume they’re covered. Uptime looks fine, latency is acceptable, and nothing is on fire, so why question it?

Because unmonitored agents can quietly change behavior, stretch policy boundaries, or drift away from the intent you originally set up. And they can do it without tripping traditional alerts, which is a governance, compliance, and liability nightmare waiting to happen.

While traditional applications generally follow predictable code paths, AI agents make their own decisions, adapt to new inputs, and interact with other systems in ways that can cascade across your entire infrastructure. When something breaks (and it will), logs and metrics won’t explain why. Without monitoring and visibility into reasoning, context, and decision paths, teams react too late and repeat the same failures.

Choosing an AI agent monitoring platform is more about control than tooling. At enterprise scale, you either have deep visibility into how agents reason, decide, and act, or you accept gaps that regulators, auditors, and incident reviews won’t tolerate. The best platforms are converging around a clear standard: decision-level transparency, end-to-end traceability, and enforceable governance built for systems that think and act autonomously.

Key takeaways

- AI agent monitoring isn’t just about uptime and latency — enterprises need visibility into why agents act the way they do so they can manage governance, risk, and performance.

- The most important capabilities fall into three buckets: reliability (drift and anomaly detection), compliance (audit trails, role-based access, policy enforcement), and optimization (cost and performance insights tied to business outcomes).

- Many tools solve only a part of the problem. Point solutions can monitor traces or tokens, but they often lack the governance, lifecycle management, and cross-environment coverage enterprises need.

- Choosing the right platform means weighing tradeoffs between control and convenience, specialization and integration, and cost and capability — especially as requirements evolve and monitoring needs to cover predictive, generative, and agentic workflows together.

What is AI agent monitoring, and why does it matter?

Traditional observability tells you what happened, but AI agent monitoring builds on observability by telling you why it happened.

When you monitor a web application, behavior is predictable: user clicks button, system processes request, database returns result. The logic is deterministic, and the failure modes are well understood.

AI agents operate differently. They evaluate context, weigh options, and make decisions based on real-time inputs and environmental factors.

Because agent behavior is non-deterministic, effective monitoring depends on observability signals: reasoning traces, context, and tool-call paths. An agent might escalate a customer service request to a human representative, recommend a specific product, or trigger a supply chain adjustment, each based on its own inference over the context it was given. The outcome is visible, but the reasoning behind it isn't.

Here’s why that gap matters more than most teams realize:

- Governance becomes even more important: Every agent decision needs to be traceable, explainable, and auditable. When a financial services agent denies a loan application or a healthcare agent recommends a treatment path, you need complete visibility into the “why” behind the decision, not just the outcome.

- Performance degradation is subtle: Traditional systems fail faster and more obviously. Agents can drift slowly. They start making slightly different choices, responding to edge cases differently, or exhibiting bias that compounds over time. Without proper monitoring, these changes go undetected until it’s too late.

- Compliance exposure multiplies: Every autonomous decision carries regulatory risk. In regulated industries, agents that operate without in-depth monitoring create compliance gaps that auditors will find (and regulators will penalize).

With so much at stake, letting agents make autonomous decisions without visibility is a gamble you can’t afford.

Key features to look for in AI agent observability

Enterprise observability tools need to move beyond logging and alerting to deliver full-lifecycle visibility across AI agents, data flows, and governance controls.

But instead of getting lost in checklists as you compare solutions, focus on the capabilities that deliver the clearest business value.

Reliability features that prevent failures:

- Real-time drift detection → fewer silent failures and faster intervention

- Context-aware anomaly analysis → unusual agent behavior surfaced in context, even across massive volumes of data

- Adaptive alerting → lower alert fatigue and faster response times

- Cross-agent dependency mapping → visibility into how failures cascade across multi-agent systems
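A minimal sketch of drift detection on agent behavior. It uses the population stability index (PSI), one common choice among several, to compare how often an agent picks each action in a baseline window versus the current window. The action names are hypothetical. The 0.1 and 0.25 cutoffs are a widely used rule of thumb, not a standard.

```python
import math
from collections import Counter


def psi(baseline, current, eps=1e-6):
    """Population stability index between two categorical samples."""
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    score = 0.0
    for cat in categories:
        # eps avoids log(0) when an action appears in only one window.
        p = max(base_counts[cat] / len(baseline), eps)
        q = max(cur_counts[cat] / len(current), eps)
        score += (q - p) * math.log(q / p)
    return score


def drift_status(score):
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "watch"
    return "drifting"


last_month = ["resolve"] * 70 + ["escalate"] * 20 + ["refund"] * 10
this_week = ["resolve"] * 45 + ["escalate"] * 15 + ["refund"] * 40

score = psi(last_month, this_week)
print(f"PSI={score:.3f} -> {drift_status(score)}")
```

Tracking the distribution of actions, not just error rates, is what lets this catch an agent that still "works" but has quietly started issuing four times as many refunds.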

Compliance features that reduce risk:

- Decision-level audit trails → faster audits and defensible explanations under regulatory scrutiny

- Role-based access controls → prevention of unauthorized actions instead of after-the-fact remediation

- Automated bias and fairness monitoring → early detection of emerging risk before it becomes a compliance issue

- Policy enforcement and remediation → consistent enforcement of governance policies across teams and environments
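As one illustration of automated fairness monitoring, the sketch below tracks the gap in favorable-outcome rates between groups over a window of decisions. This gap is the demographic parity difference, one of several fairness metrics. The sketch raises a flag when the gap exceeds a tolerance. The group labels, the 0.10 tolerance, and the record format are assumptions. A gap on its own does not prove bias, but it is a signal that warrants review.

```python
from collections import defaultdict


def parity_gap(decisions):
    """decisions: iterable of (group, approved: bool).
    Returns (largest approval-rate gap across groups, per-group rates)."""
    totals, approvals = defaultdict(int), defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += approved  # True counts as 1
    rates = {g: approvals[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


THRESHOLD = 0.10  # hypothetical tolerance set by the governance team

window = ([("group_a", True)] * 80 + [("group_a", False)] * 20
          + [("group_b", True)] * 62 + [("group_b", False)] * 38)
gap, rates = parity_gap(window)
if gap > THRESHOLD:
    print(f"fairness alert: approval-rate gap {gap:.2f} across {rates}")
```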

Optimization features that improve ROI:

- Cost monitoring across multi-cloud environments → predictable spend and fewer budget surprises

- Usage-driven performance tuning → higher throughput without overprovisioning

- Resource utilization tracking → reduced waste and smarter capacity planning

- Business impact correlation → clear linkage between agent behavior, revenue, and operational outcomes
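Cost monitoring pays off only when spend is attributed to the agent and team that incurred it. The minimal sketch below assumes each traced call carries hypothetical `agent`, `team`, and `cost` fields, and that budgets are set per team. The records and budget figures are placeholders.

```python
from collections import defaultdict

# Hypothetical trace records; in practice these come from your tracing backend.
calls = [
    {"agent": "triage", "team": "support", "cost": 0.012},
    {"agent": "triage", "team": "support", "cost": 0.015},
    {"agent": "refunds", "team": "support", "cost": 0.040},
    {"agent": "forecast", "team": "ops", "cost": 0.300},
    {"agent": "forecast", "team": "ops", "cost": 0.280},
]
team_budgets = {"support": 0.10, "ops": 0.50}  # hypothetical daily budgets, USD


def rollup(records, key):
    """Sum cost by any attribute of the call (agent, team, workflow, ...)."""
    totals = defaultdict(float)
    for r in records:
        totals[r[key]] += r["cost"]
    return dict(totals)


by_agent = rollup(calls, "agent")
by_team = rollup(calls, "team")

for team, spent in by_team.items():
    budget = team_budgets[team]
    status = "OVER BUDGET" if spent > budget else f"{spent / budget:.0%} of budget"
    print(f"{team}: ${spent:.3f} ({status})")
```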

The best platforms integrate monitoring into existing enterprise workflows, security frameworks, and governance processes. Be skeptical of tools that lean too heavily on flashy promises like “self-healing agents” or vague “AI-powered root cause analysis.” These capabilities can be helpful, but they shouldn’t distract from core fundamentals like transparent traces, robust governance, and strong integration with your existing stack.

How to choose the right AI agent monitoring tool

Choosing a monitoring platform is about fit, not features. The biggest mistake enterprises make is underestimating governance.

Point solutions often work as add-ons. They observe external flows but can’t govern them. That means no versioning, limited documentation, weak quota and policy management, and no way to intervene when agents cross boundaries.

When evaluating platforms, focus on:

- Governance alignment: Built-in governance can save months of custom development and reduce regulatory risk.

- Integration depth: The most sophisticated monitoring platform is worthless if it doesn’t integrate with your existing infrastructure, security frameworks, and operational processes.

- Scalability: Proofs of concept don’t predict production reality. Plan for 10x growth. Will the platform handle expansions without major architectural changes? If not, it’s the wrong choice.

- Expertise requirements: Some platforms built on custom frameworks require specialized skills and sustained engineering investment that your team may not have.

For most enterprises, the winning combination is a platform that balances governance maturity, operational simplicity, and ecosystem integration. Tools that excel in all three areas may justify a higher upfront investment because they are easier to adopt and deliver value faster.

See real business outcomes with enterprise-grade AI

Monitoring enables confidence at scale: Organizations with mature observability outperform peers on the uptime, mean time to detection, compliance readiness, and cost control metrics that matter to executive leadership.

Of course, metrics only matter if they translate to business outcomes.

When you can see what your agents are doing, understand why they’re doing it, and predict how changes will ripple across systems with confidence, AI becomes an operational asset instead of a gamble.

DataRobot’s Agent Workforce Platform delivers that confidence through unified observability and governance that spans the entire AI lifecycle. It removes the operational drag that slows AI initiatives and scales with enterprise ambition.

It’s time to look beyond point solutions. See what enterprise-grade AI observability looks like in practice with DataRobot.

FAQs

How is AI agent monitoring different from traditional application monitoring?

Traditional monitoring focuses on system health signals like CPU, memory, and uptime. AI agent monitoring has to go deeper. It tracks how agents reason, which tools they call, how they interact with other agents, and whether their behavior is drifting away from business rules or policies. In other words, it explains why something happened, not just that it happened.

What features matter most when choosing an AI agent monitoring platform?

For enterprises, the must-haves fall into three groups: reliability features like drift detection, guardrails, and anomaly analysis; compliance features like tracing, role-based access, and policy enforcement; and optimization features such as cost monitoring, performance tuning insights, and links between agent behavior and business KPIs. Anything that does not support one of those outcomes is usually secondary.

Do we really need a dedicated agent monitoring tool if we already have an observability stack?

General observability tools are useful for infrastructure and application health, but they rarely capture agent reasoning paths, decision context, or policy adherence out of the box. Most organizations end up layering a dedicated AI or agent monitoring solution on top so they can see how models and agents behave, not just how servers and APIs perform.

Should we build our own monitoring framework or buy a platform?

Building can make sense if you have strong platform engineering teams and highly specialized needs, but it is a large, ongoing investment. Monitoring requirements and metrics are changing quickly as agent architectures evolve. Most enterprises get better long-term value by buying a platform that already covers predictive, generative, and agentic components, then extending it where needed.

Where does DataRobot fit among these AI agent monitoring tools?

DataRobot AI Observability is designed as a unified platform rather than a point solution. It monitors models and agents across environments, ties monitoring to governance and compliance, and supports both predictive and generative workflows. For enterprises that want one place to manage visibility, risk, and performance across their AI estate, it serves as the central foundation other tools plug into.

The post What to look for when evaluating AI agent monitoring capabilities appeared first on DataRobot.

AI agent observability: what enterprises need to know

You wouldn’t run a hospital without monitoring patients’ vitals. Yet most enterprises deploying AI agents have no real visibility into what those agents are actually doing — or why.

What began as chatbots and demos has evolved into autonomous systems embedded in core workflows: handling customer interactions, executing decisions, and orchestrating actions across complex infrastructures. The stakes have changed. The monitoring hasn’t.

Traditional tools tell you if your servers are up and your APIs are responding. They don’t tell you why your customer service agent started hallucinating responses, or why your multi-agent workflow failed three steps into a decision tree.

That visibility gap scales with every agent you deploy. When agents operate autonomously across critical business processes, guesswork isn’t a strategy.

If you can’t see reasoning, tool calls, and behavior over time, you don’t have real observability. You have infrastructure telemetry.

Deploying agents at scale requires observability that exposes behavior, decision paths, and outcomes across the entire agent workforce. Anything less breaks down fast.

Key takeaways

- AI agent observability isn’t an extension of traditional monitoring. It’s a different discipline entirely, focused on reasoning chains, tool usage, multi-agent coordination, and behavioral drift.

- Agentic systems evolve dynamically. Without deep visibility, failures stay hidden, costs creep up, and compliance risk grows.

- Evaluating platforms means looking past basic tracing and asking harder questions about governance integration, multi-cloud support, drift detection, security controls, and explainability.

- Treating observability as core infrastructure (not a debugging add-on) accelerates growth at scale, improves reliability, and makes agentic AI safe to run in production.

What is AI agent observability?

AI agent observability gives you visibility into behavior, reasoning, tool interactions, and outcomes across your agents. It shows how agents think, act, and coordinate — not just whether they run.

Traditional app monitoring looks mostly at system health and performance metrics. Agent observability opens the intelligence layer and helps teams answer questions like:

- Why did the agent choose this approach?

- What context shaped the decision?

- How did agents coordinate across a workflow?

- Where exactly did execution fall apart?

If a platform can’t answer these questions, it isn’t agent-ready.

When agents act autonomously, human teams stay accountable for outcomes. Observability is how that accountability stays grounded in facts, covering incident prevention, cost control, compliance, and behavior understanding at scale.

There’s also a distinction worth making between monitoring and observability that most teams underestimate. Monitoring tells you what happened. Observability helps you detect what should have happened but didn’t.

If an agent is supposed to trigger every time a new sales lead arrives, and that trigger silently fails, monitoring may never surface it. Observability catches the absence, flagging that an agent ran twice today when it should have run fifty times.
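Detecting an absence means comparing observed activity against an expectation that comes from somewhere else, in this case a count of incoming leads. A minimal sketch of that check follows. The event source and the 0.9 tolerance are assumptions.

```python
def check_expected_runs(expected, observed, tolerance=0.9):
    """Flag when an agent ran far fewer times than its triggers imply.

    expected:  number of triggering events (e.g. new sales leads) in the window.
    observed:  number of agent runs recorded in the same window.
    tolerance: fraction of expected runs below which we alert (hypothetical).
    """
    if expected == 0:
        return None
    ratio = observed / expected
    if ratio < tolerance:
        return f"agent ran {observed} times for {expected} triggering events ({ratio:.0%})"
    return None


# The scenario from the text: fifty leads arrived, the agent ran twice.
alert = check_expected_runs(expected=50, observed=2)
print(alert)
```

The key design point is that the expected count comes from the upstream system, not from the agent's own logs. An agent that never starts produces no logs to alert on.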

Multi-agent systems raise the bar further. Individual agents may look fine in isolation, while coordination failures, context handoffs, or resource conflicts quietly degrade results. Traditional monitoring misses all of it.

Why AI agents require different monitoring than traditional apps

Traditional monitoring assumes predictable behavior. AI agents don’t work that way. They reason probabilistically, adapt to context, and change behavior as underlying components evolve.

Here are common failure patterns that standard monitoring misses entirely:

- Execution failures surface quietly rather than as dramatic system crashes: permission errors, API rate limits, or bad parameters slip through and cause slow, hidden performance decay that traditional alerts never catch.

- Context window overflow happens when agents continue to run, but with incomplete context. Different large language models (LLMs) have varying context limits, and when agents exceed those boundaries, they lose important information, leading to misinformed decisions that standard monitoring can’t detect.

- Agent orchestration issues grow more complex in sophisticated architectures. Traditional monitoring may see successful API calls and normal resource utilization, while missing coordination failures that compromise the entire workflow.

- Behavioral drift happens when models, templates, or training data change, causing agents to behave differently over time. Invisible to system-level metrics, it can completely alter agent performance and decision quality.

- Cost explosion occurs when agents get caught in loops of repeated actions, such as redundant API calls, excessive token usage, or inefficient tool interactions. Traditional monitoring treats this as normal system activity.

- Latency becomes a false signal. For traditional systems, latency is a reliable health indicator; for LLMs, it isn’t. A request might take 2 seconds or 60 seconds, and both outcomes can be perfectly valid. Treating latency spikes as failure signals generates noise that obscures what actually matters: behavior, decision quality, and outcome accuracy.
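Cost explosions from loops often show up as the same tool being called with the same arguments again and again within one run. The minimal sketch below counts repeated (tool, arguments) pairs. It assumes tool calls are available as a list of dicts with hypothetical `tool` and `args` keys. The threshold of three repeats is arbitrary.

```python
import json
from collections import Counter


def find_loops(tool_calls, max_repeats=3):
    """Return (tool, args) pairs called more than max_repeats times in one run."""
    # Serialize args with sorted keys so {"a": 1, "b": 2} and {"b": 2, "a": 1} match.
    keys = [(c["tool"], json.dumps(c["args"], sort_keys=True)) for c in tool_calls]
    return {key: n for key, n in Counter(keys).items() if n > max_repeats}


run = [{"tool": "search_orders", "args": {"customer": "C-42"}}] * 5 + [
    {"tool": "send_email", "args": {"to": "c42@example.com"}},
]
for (tool, args), count in find_loops(run).items():
    print(f"possible loop: {tool}({args}) called {count} times")
```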

If your monitoring stops at infrastructure health, you’re only seeing the shadows of agent behavior, not the behavior itself.

Key features of modern agent observability platforms

The right platforms deliver outcomes enterprises actually care about:

- Security and access controls: Strong RBAC, PII detection and redaction, audit trails, and policy enforcement let agents operate in sensitive workflows without losing control or exposing the organization to regulatory risk.

- Granular cost tracking and guardrails: Fine-grained visibility into spend by agent, workflow, and team helps leaders understand where value is coming from, shut down waste early, and prevent cost overruns before they turn into budget surprises.

- Reproducibility: When something goes wrong, “we don’t know why” isn’t an acceptable answer. Replaying agent decisions gives teams a clear line of sight into what happened, why it happened, and how to fix it, whether the issue is performance, safety, or compliance.

- Multiple testing environments: Enterprises can’t afford to discover agent behavior issues in production. Full observability in pre-production environments lets teams pressure-test agents, validate changes, and catch failures before customers or regulators do.

- Unified visibility across environments: A single, consistent view across clouds, tools, and teams makes it possible to understand agent behavior end to end. Most platforms don’t deliver this without heavy customization.

- Reasoning trace capture: Seeing how agents reason — not just what they output — supports better decision review, faster debugging, and real accountability when autonomous decisions impact the business.

- Multi-agent workflow visualization: Visualizing how agents hand off context, delegate tasks, and coordinate work exposes bottlenecks and failure points that directly affect reliability, customer experience, and operational efficiency.

- Drift detection: Detecting when behavior slowly moves away from expectations lets teams intervene early, protecting decision quality and business outcomes as systems evolve.

- Context window monitoring: Tracking context usage helps teams spot when agents are operating with incomplete information, preventing silent degradation that’s invisible to traditional performance metrics.
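Context window monitoring can start with comparing the tokens an agent sends against the model's limit before each call. The sketch below makes two assumptions. First, it uses a crude character-based token estimate of roughly four characters per token, an approximation for English text. Second, the model names and limits are hypothetical. A real implementation would use the model's own tokenizer and published limits.

```python
# Hypothetical context limits, in tokens; look up the real values for your models.
CONTEXT_LIMITS = {"model-small": 8_000, "model-large": 128_000}


def estimate_tokens(text):
    # Rough heuristic: ~4 characters per token. Replace with the model's tokenizer.
    return max(1, len(text) // 4)


def context_report(model, messages, warn_at=0.8):
    used = sum(estimate_tokens(m) for m in messages)
    limit = CONTEXT_LIMITS[model]
    utilization = used / limit
    if used > limit:
        status = "overflow: context exceeds the model limit"
    elif utilization >= warn_at:
        status = "warning: approaching limit"
    else:
        status = "ok"
    return {"model": model, "tokens": used,
            "utilization": round(utilization, 3), "status": status}


history = ["x" * 4_000] * 7  # seven messages of ~1,000 tokens each
print(context_report("model-small", history))
print(context_report("model-small", history + ["x" * 8_000]))
```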

How to evaluate an AI agent observability platform

Choosing the right platform goes beyond surface-level monitoring. Your evaluation process should prioritize:

Integration with existing infrastructure

Most enterprises already run across multiple clouds, on-prem systems, and custom orchestration layers. An observability platform has to fit into that reality, integrating with frameworks like LangChain, CrewAI, and custom agent orchestration layers without requiring significant architectural changes.

Cloud flexibility matters just as much. Observability should behave consistently across AWS, Azure, GCP, and hybrid or on-prem environments. If visibility changes depending on where agents run, blind spots creep in fast.

Look for OpenTelemetry (OTel) compatibility and data export capabilities. Vendor lock-in at the observability layer is especially painful because historical traces and behavioral baselines carry long-term operational value.

Cost and scalability considerations

Pricing models vary widely and can become expensive fast as agent usage scales. Review pricing structures carefully, especially for high-volume workflows that generate extensive trace data.

Many platforms charge based on data ingestion, storage, or API calls, costs that aren’t always obvious upfront. Validate pricing against realistic scaling scenarios, including data retention costs for traces, logs, and reasoning histories.

For multi-cloud deployments, keep ingress and egress costs in mind. Data movement between regions or providers can create unexpected expenses that compound quickly at scale.
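Validating pricing against realistic scaling scenarios is mostly arithmetic. Below is a back-of-the-envelope sketch. Every number in it is a placeholder to replace with your own figures and the vendor's actual rate card: trace size, daily runs, retention window, per-GB ingest, storage, and egress prices, and the share of data crossing clouds.

```python
def monthly_observability_cost(runs_per_day, kb_per_trace, retention_days,
                               ingest_per_gb, storage_per_gb_month,
                               egress_per_gb=0.0, egress_fraction=0.0):
    """Steady-state monthly cost once the retention window is full (all prices USD)."""
    gb_per_day = runs_per_day * kb_per_trace / 1_000_000  # KB -> GB (decimal units)
    ingested_gb = gb_per_day * 30
    stored_gb = gb_per_day * retention_days               # data held at any one time
    egress_gb = ingested_gb * egress_fraction             # share moved across clouds/regions
    return (ingested_gb * ingest_per_gb
            + stored_gb * storage_per_gb_month
            + egress_gb * egress_per_gb)


# Placeholder scenario: today's volume vs. the same workload at 10x.
for scale in (1, 10):
    cost = monthly_observability_cost(
        runs_per_day=50_000 * scale, kb_per_trace=200, retention_days=90,
        ingest_per_gb=0.50, storage_per_gb_month=0.03,
        egress_per_gb=0.09, egress_fraction=0.25,
    )
    print(f"{scale}x volume: ${cost:,.2f}/month")
```

Retention is the term that tends to surprise. Stored volume grows with the retention window, so doubling retention roughly doubles the storage line even at flat traffic.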

Security, compliance, and governance fit

Once agents touch sensitive data or regulated workflows, observability becomes part of the organization’s risk posture. Platforms need to support enterprise-grade security without relying on bolt-ons or manual processes.

That starts with strong access controls, encryption, and auditability. AI leaders should also look for real-time PII detection and redaction, policy enforcement tied to agent behavior, and clear audit trails that explain how decisions were made and who had access.
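A minimal sketch of redaction applied to trace text before it is stored. It uses regular expressions for three identifier formats: US-style SSNs and phone numbers, plus a simplified email pattern. Regex matching is a deliberately simple stand-in. Production PII detection usually combines patterns with named-entity models, and these patterns will miss many cases.

```python
import re

# Simplified patterns for illustration only; they are not exhaustive.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text):
    """Replace each match with a typed placeholder and count what was removed."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


trace = "User jane.doe@example.com (SSN 123-45-6789) asked to call 555-867-5309."
clean, found = redact(trace)
print(clean)
print(found)
```

Keeping typed placeholders such as `[EMAIL]`, rather than deleting matches outright, preserves enough structure in the trace to debug agent behavior without exposing the underlying values.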

Alignment with relevant compliance frameworks is also a priority here, including SOC 2, HIPAA, GDPR, and industry-specific requirements that govern your organization. The platform should provide governance integration that supports audit processes and regulatory reporting.

Support for bring-your-own LLM deployments, private infrastructure, and air-gapped environments is also a differentiator. Enterprises running sensitive workloads need observability that works where their agents run — not just where vendors prefer them to run.

Dashboards, alerts, and user experience

Different stakeholders need different views of agent behavior. Builders need deep traces and reasoning paths. Operators need clear signals when workflows degrade or costs spike. Leaders need summaries that explain performance and risk in business terms.

Look for role-based views that surface the right level of detail without overwhelming each audience. Executives shouldn’t have to wade through logs to understand whether agents are behaving safely. Teams on the ground need to drill down fast when something breaks.

The platform should automatically flag drift, safety issues, or unexpected behavior, and route those alerts directly into collaboration tools like Slack or Microsoft Teams, so teams can respond without living in a dashboard.
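A minimal sketch of routing a drift or safety flag into Slack through an incoming webhook, which accepts a JSON body with a `text` field. The webhook URL is a placeholder and the alert fields are hypothetical. The request is built but not sent, so the example runs offline. Microsoft Teams uses a different payload format.

```python
import json
import urllib.request

# Placeholder: Slack issues a unique URL per incoming webhook.
WEBHOOK_URL = "https://hooks.slack.com/services/XXX/YYY/ZZZ"

SEVERITY_ICON = {
    "info": ":information_source:",
    "warning": ":warning:",
    "critical": ":rotating_light:",
}


def build_alert_request(agent, kind, detail, severity="warning"):
    """Build (but do not send) a POST request carrying a Slack message."""
    text = f"{SEVERITY_ICON[severity]} *{kind}* on `{agent}`: {detail}"
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        WEBHOOK_URL, data=body,
        headers={"Content-Type": "application/json"}, method="POST",
    )


req = build_alert_request("refund-agent", "Behavioral drift",
                          "PSI 0.54 vs. last month", "critical")
print(req.get_method(), json.loads(req.data)["text"])
# To actually deliver: urllib.request.urlopen(req, timeout=10)
```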

Best practices for implementing agent observability

Getting observability right isn’t a one-time setup. It requires ongoing attention as your agents and the systems they operate in continue to evolve.

Establish clear metrics and KPIs

System performance is important, but agent observability only delivers value when metrics align with business outcomes. Define KPIs that reflect decision quality, business impact, and operational efficiency.

That means looking at how reliably agents achieve their goals, putting guardrails in place to prevent harmful behavior, and monitoring cost-per-action to keep execution efficient.

Metrics should apply to both individual agents and multi-agent workflows. Complex workflows require coordination metrics that individual-agent KPIs don’t capture.

Leverage continuous evaluation and feedback loops

Set up automated evaluation pipelines that catch drift or unexpected behaviors before they affect real business operations. Waiting until something breaks is not a detection strategy.

For sensitive, high-impact tasks, automated evaluation isn’t enough. Human review is still essential where the stakes are too high to rely solely on automated signals.

Run A/B comparisons as agents are updated to validate that changes actually improve performance. This matters most as agents evolve through model updates or configuration changes.
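A minimal sketch of checking whether an updated agent actually outperforms the current one. It applies a two-proportion z-test, one standard choice, to task success rates. The counts below are made up for illustration. A real rollout would also fix the sample size in advance rather than checking results repeatedly.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for H0: both variants have the same success rate."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal CDF, computed via erf.
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Hypothetical results: current agent (A) vs. updated agent (B).
z, p = two_proportion_z_test(success_a=412, n_a=500, success_b=441, n_b=500)
print(f"z={z:.2f}, p={p:.4f}")
if p < 0.05 and z > 0:
    print("update improves success rate; promote B")
else:
    print("no significant improvement; keep A")
```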

The foundation of scalable, trustworthy agentic AI

Observability connects everything — platform evaluation, multi-agent monitoring, governance, security, and continuous improvement — into one operational framework. Without it, scaling agents means scaling risk.

When teams can see what agents are doing and why, autonomy becomes something to expand, not fear.

Ready to build a stronger foundation? Download the enterprise guide to agentic AI.

FAQs

How is agent observability different from traditional AI or application monitoring?

Traditional monitoring focuses on infrastructure health — CPU, memory, uptime, error rates. Agent observability goes deeper, capturing reasoning paths, tool-call chains, context usage, and multi-step workflows. That visibility explains why agents behave the way they do, not just whether systems stay up.

What metrics matter most when evaluating multi-agent system performance?

Teams need to track both technical health and decision quality. That includes tool-call success rates, reasoning accuracy, latency across workflows, cost per decision, and behavioral drift over time. For multi-agent systems, coordination signals like message passing and task delegation matter just as much.

How do I know which observability platform is best for my organization’s agent architecture?

The right platform supports multi-agent workflows, exposes reasoning paths, integrates with orchestration layers, and meets enterprise security standards. Tools that stop at tracing or token counts usually fall short in regulated or large-scale deployments. DataRobot unifies observability, governance, and lifecycle oversight in one platform, making it purpose-built for enterprise scale.

What observability capabilities are essential for maintaining compliance and safety in enterprise agent deployments?

Prioritize full audit trails, role-based access control (RBAC), PII protection, explainable decisions, drift detection, and automated guardrails. A unified platform simplifies this by handling observability and governance together, rather than forcing teams to stitch controls together across tools.
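Two of these capabilities, PII protection and audit trails, can be combined by redacting sensitive values before an entry is written and chaining entries by hash so later edits are detectable. This Python sketch is illustrative only: the regex patterns catch just simple emails and phone numbers, and hash chaining is one tamper-evidence technique among several, not a description of any specific product.

```python
import hashlib
import json
import re
from typing import Dict, List

# Simple illustrative patterns; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")
GENESIS = "0" * 64

def redact(text: str) -> str:
    """Mask emails first so digits inside addresses aren't misread as phone numbers."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))

def _digest(body: Dict[str, str]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

class AuditLog:
    """Append-only log where each entry stores the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def append(self, agent_id: str, action: str, detail: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent_id": agent_id, "action": action, "detail": redact(detail), "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```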

The post AI agent observability: what enterprises need to know appeared first on DataRobot.

Do you trust me? A framework for making networks of robots and vehicles safer

Gemma 4: Byte for byte, the most capable open models

Air-powered artificial muscles could help robots lift 100 times their weight

How to design and run an agent in rehearsal – before building it

Most AI agents fail because of a gap between design intent and production reality. Developers often spend days building an agent, only to find that its escalation logic or tool calls fail in the wild, forcing a total restart. DataRobot Agent Assist closes this gap. It is a natural-language CLI tool that lets you design, simulate, and validate your agent’s behavior in “rehearsal mode” before you write any implementation code. This post shows you how to run the full agent lifecycle, from logic design to deployment, within a single terminal session, saving extra steps, rework, and time.

How to quickly develop and ship an agent from a CLI

DataRobot’s Agent Assist is a CLI tool built for designing, building, simulating, and shipping production AI agents. You run it from your terminal, describe in natural language what you want to build, and it guides the full journey from idea to deployed agent, without switching contexts, tools, or environments.

It works standalone and integrates with the DataRobot Agent Workforce Platform for deployment, governance, and monitoring. Whether you’re a solo developer prototyping a new agent or an enterprise team shipping to production, the workflow is the same: design, simulate, build, deploy.

Users are getting from idea to a running agent quickly, with scaffolding and setup time cut from days to minutes.

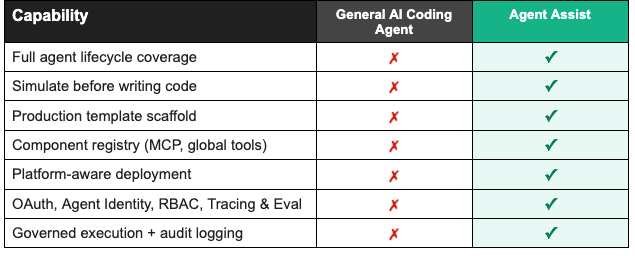

Why not just use a general-purpose coding agent?

General-purpose AI coding agents are built for breadth. That breadth is their strength, but it is also exactly why they fall short when it comes to production AI agents.

Agent Assist was built for one thing: AI agents. That focus shapes every part of the tool. The design conversation, the spec format, the rehearsal system, the scaffolding, and the deployment are all purpose-built for how agents actually work. It understands tool definitions natively. It knows what a production-grade agent needs structurally before you tell it. It can simulate behavior because it was designed to think about agents end to end.

The agent building journey: from conversation to production

Step 1: Start designing your agent with a conversation

You open your terminal and run dr assist. No project setup, no config files, no templates to fill out. You’ll immediately get a prompt asking what you want to build.

Agent Assist then asks follow-up questions, business as well as technical. What systems does the agent need access to? What does a good escalation look like versus an unnecessary one? How should it handle a frustrated customer differently from someone with a simple question?

These guided questions build a complete picture of the agent’s logic, not just a list of requirements. You can keep refining the agent’s logic and behavior in the same conversation: add a capability, change the escalation rules, adjust the tone. The context carries forward and everything updates automatically.

For developers who want fine-grained control, Agent Assist also provides configuration options for model selection, tool definitions, authentication setup, and integration configuration, all generated directly from the design conversation.

When the picture is complete, Agent Assist generates a full specification: system prompt, model selection, tool definitions, authentication setup, and integration configuration. Something a developer can build from and a business stakeholder can actually review before any code exists. From there, that spec becomes the input to the next step: running your agent in rehearsal mode, before a single line of implementation code is written.
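To make the idea of a reviewable specification concrete, here is a minimal Python sketch of what such a spec could capture. It is not Agent Assist’s actual spec format, which this post doesn’t show; `AgentSpec`, `ToolDef`, and `review_issues` are hypothetical names. The point is that a structured spec can be checked for gaps, such as a tool with no authentication configured, before any implementation exists.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class ToolDef:
    name: str
    description: str
    parameters: dict            # JSON-Schema-style description of the tool's inputs
    requires_auth: bool = True

@dataclass
class AgentSpec:
    system_prompt: str
    model: str
    tools: List[ToolDef] = field(default_factory=list)
    auth: Dict[str, str] = field(default_factory=dict)   # tool name -> auth method, e.g. "oauth"
    integrations: List[str] = field(default_factory=list)

    def review_issues(self) -> List[str]:
        """List gaps a developer or stakeholder should resolve before building."""
        issues: List[str] = []
        if not self.system_prompt.strip():
            issues.append("system prompt is empty")
        for tool in self.tools:
            if not tool.description:
                issues.append(f"tool {tool.name!r} has no description")
            if tool.requires_auth and tool.name not in self.auth:
                issues.append(f"tool {tool.name!r} needs auth but none is configured")
        return issues
```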

Step 2: Watch your agent run before you build it

This is where Agent Assist does something no other tool does.

Before writing any implementation, it runs your agent in rehearsal mode. You describe a scenario and it executes tool calls against your actual requirements, showing you exactly how the agent would behave. You see every tool that fires, every API call that gets made, every decision the agent takes.

If the escalation logic is wrong, you catch it here. If a tool returns data in an unexpected format, you see it now instead of in production. You fix it in the conversation and run it again.
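The general idea of rehearsal can be approximated by running an agent’s decision logic against stub tools that return canned data and record every call. The Python sketch below is not how Agent Assist implements rehearsal mode; the tool names (`lookup_order`, `escalate`, `issue_refund`), the refund limit, and the frustration keywords are all hypothetical. It shows both failures described above surfacing early: an escalation rule that picks the wrong outcome, and a tool returning data in an unexpected format.

```python
from typing import Any, Callable, Dict, List, Tuple

class RehearsalTools:
    """Stand-in tool layer: returns canned fixture data and records every call."""

    def __init__(self, fixtures: Dict[str, Callable[..., Any]]) -> None:
        self.fixtures = fixtures
        self.calls: List[Tuple[str, Dict[str, Any]]] = []

    def call(self, name: str, **kwargs: Any) -> Any:
        self.calls.append((name, kwargs))
        return self.fixtures[name](**kwargs)

FRUSTRATION_WORDS = ("angry", "furious", "unacceptable")  # hypothetical escalation trigger

def support_agent(scenario: Dict[str, str], tools: RehearsalTools, refund_limit: float = 100.0) -> str:
    """Toy decision logic: look up the order, then refund it or escalate to a human."""
    order = tools.call("lookup_order", order_id=scenario["order_id"])
    amount = order["amount"]
    if not isinstance(amount, (int, float)):
        # An unexpected data format surfaces here, in rehearsal, not in production.
        raise TypeError(f"lookup_order returned amount as {type(amount).__name__}")
    frustrated = any(word in scenario["message"].lower() for word in FRUSTRATION_WORDS)
    if frustrated or amount > refund_limit:
        tools.call("escalate", order_id=scenario["order_id"],
                   reason="frustrated" if frustrated else "over_limit")
        return "escalated"
    tools.call("issue_refund", order_id=scenario["order_id"], amount=amount)
    return "refunded"

def rehearse(scenarios: List[Dict[str, str]],
             fixtures: Dict[str, Callable[..., Any]]) -> List[Dict[str, Any]]:
    """Run each scenario against fresh stub tools; report outcome and tool-call chain."""
    report = []
    for scenario in scenarios:
        tools = RehearsalTools(fixtures)
        try:
            outcome = support_agent(scenario, tools)
        except Exception as exc:  # report failures instead of stopping the rehearsal
            outcome = f"error: {exc}"
        report.append({
            "scenario": scenario["name"],
            "expected": scenario["expected"],
            "outcome": outcome,
            "calls": [name for name, _ in tools.calls],
        })
    return report
```

Any report row whose `outcome` differs from `expected` points to logic to fix in the design before any real integration is built.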

You validate the logic, the integrations, and the business rules all at once, and only move to code when the behavior is exactly what you want.

Step 3: The code that comes out is already production-ready

When you move to code generation, Agent Assist does not hand you a starting point. It hands you a foundation.

The agent you designed and simulated comes scaffolded with everything it needs to run in production, including OAuth authentication (no shared API keys), modular MCP server components, deployment configuration, monitoring, and testing frameworks. Out of the box, Agent Assist handles infrastructure that normally takes days to piece together.

The code is clean, documented, and follows standard patterns. You can take it and continue building in your preferred environment. But from the very first file, it is something you could show to a security team or hand off to ops without a disclaimer.

Step 4: Deploy from the same terminal you built in

When you are ready to ship, you stay in the same workflow. Agent Assist knows your environment, the models available to you, and what a valid deployment requires. It validates the configuration before touching anything.
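Validating a configuration before touching anything boils down to checking it against the target environment’s constraints and refusing to deploy if anything fails. This Python sketch is a hypothetical illustration, not Agent Assist’s validator: `TargetEnvironment`, the config keys, and the model names are assumptions chosen to mirror the constraints mentioned here (available models, security rules, on-prem or edge network limits).

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet, List

@dataclass(frozen=True)
class TargetEnvironment:
    name: str
    available_models: FrozenSet[str]
    allowed_auth: FrozenSet[str]
    allows_external_calls: bool

def validate_deployment(config: Dict[str, Any], env: TargetEnvironment) -> List[str]:
    """Return every violation; an empty list means the config may be deployed."""
    errors: List[str] = []
    model = config.get("model")
    if model not in env.available_models:
        errors.append(f"model {model!r} is not available in {env.name}")
    for integration, mode in config.get("auth", {}).items():
        if mode not in env.allowed_auth:
            errors.append(f"{integration}: auth mode {mode!r} is not permitted in {env.name}")
    external = config.get("external_endpoints", [])
    if external and not env.allows_external_calls:
        errors.append(f"{env.name} blocks external calls to {', '.join(external)}")
    return errors
```

Collecting all violations at once, rather than stopping at the first, lets a developer fix the whole configuration in one pass.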

One command. Any environment: on-prem, edge, cloud, or hybrid. Validated against your target environment’s security and model constraints. The same agent that helped you design and simulate also knows how to ship it.

What teams are saying about Agent Assist

“The hardest part of AI agent development is requirement definition, specifically bridging the gap between technical teams and domain experts. Agent Assist solves this interactively. A domain user can input a rough idea, and the tool actively guides them to flesh out the missing details. Because domain experts can immediately test and validate the outputs themselves, Agent Assist dramatically shortens the time from requirement scoping to actual agent implementation.”

The road ahead for Agent Assist

AI agents are becoming core business infrastructure, not experiments, and the tooling around them needs to catch up. The next phase of Agent Assist goes deeper on the parts that matter most once agents are running in production: richer tracing and evaluation so you can understand what your agent is actually doing, local experimentation so you can test changes without touching a live environment, and tighter integration with the broader ecosystem of tools your agents work with. The goal stays the same: less time debugging, more time shipping.

The hard part was never writing the code. It was everything around it: knowing what to build, validating it before it touched production, and trusting that what shipped would keep working. Agent Assist is built around that reality, and that is the direction it will keep moving in.

Get started with Agent Assist in 3 steps

Ready to ship your first production agent? Here’s all you need:

1. Install the toolchain:

brew install datarobot-oss/taps/dr-cli uv pulumi/tap/pulumi go-task node git python

2. Install Agent Assist:

dr plugin install assist

3. Launch:

dr assist

Full documentation, examples, and advanced configuration are in the Agent Assist documentation.

The post How to design and run an agent in rehearsal – before building it appeared first on DataRobot.

ANYmal deployed at Northern Lights CCS Facility

Back to school: robots learn from factory workers

By Anthony King

What if training a robot to handle dirty, dangerous work on the factory floor was as simple as showing it how? Czech startup RoboTwin is doing exactly that, helping factory workers teach robots new skills by demonstration.

Instead of writing complex code, workers perform the job once and RoboTwin’s technology turns those movements into a robot programme – opening the door to automation for smaller manufacturers.

Founded in Prague in 2021, RoboTwin builds handheld devices and no-code software that capture human movements and translate them into instructions for industrial robots. The aim is to make automation faster, simpler and more accessible to manufacturers that do not have specialist robotics programmers.

“The robot basically copies the human demonstration,” said Megi Mejdrechová, RoboTwin’s co-founder and chief technology officer. “People with no coding skills can transfer their know-how and experience to robots.”

Mejdrechová, a mechanical engineer trained at the Czech Technical University in Prague, developed the core technology behind RoboTwin during her work in robotics research and industry. Her experience in robot control using AI and computer vision inspired her to create something practical for European manufacturers.

“Czech engineering is quite traditional and focused on scientific papers,” said Mejdrechová. “Visits to Singapore and Canada and other work experiences led me to focus on making a product that people could use.”

Getting started

In 2021, Mejdrechová entered a jump‑starter programme and won first prize in the manufacturing category. “We saw then that there was potential for the technology,” she said.

This encouraged her to start RoboTwin with colleagues Ladislav Dvořák and David Polák, who shared her enthusiasm for human‑robot partnerships. Mejdrechová received backing from Women TechEU, an EU scheme supporting women founders of deep‑tech startups.

The RoboTwin team shared their results on the Horizon Results Platform, an online showcase for EU‑funded innovations, which led to an invitation to the EU’s Empowering Start‑ups and SMEs initiative.

This helped fund their trip to Hannover Messe 2025, a major global manufacturing trade fair, and opened doors to new business contacts and deals.

Through a mix of public and private investment, RoboTwin has secured funding to refine its technology and expand to manufacturers in Central Europe, the Netherlands, Mexico and Canada.

In 2025, Mejdrechová was named in Forbes Czechia’s 30 Under 30 list for her work in making the training of robots accessible to more manufacturers.

Schooling robots

At the heart of RoboTwin’s system is a handheld device equipped with sensors. When a worker performs a task, for example spray painting a metal component, the system records the movement and converts it into a robot programme that can be reused in production.

Instead of requiring a specialist engineer to manually code every movement, the system captures the worker’s natural technique and translates it into precise instructions a robot can follow.
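The article doesn’t describe how RoboTwin’s software turns a demonstration into a robot programme. One plausible building block, shown here purely as an illustration, is reducing the dense stream of positions a handheld sensor records to a small set of waypoints a robot can follow. This Python sketch uses the Ramer-Douglas-Peucker line-simplification algorithm, a swapped-in technique rather than RoboTwin’s method, and it ignores orientation, timing, and tool state such as when a spray gun is triggered.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]

def _dist_to_segment(p: Point, a: Point, b: Point) -> float:
    """Distance from point p to the line segment a-b in 3D."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    if denom == 0:
        return math.dist(p, a)
    t = max(0.0, min(1.0, sum(ap[i] * ab[i] for i in range(3)) / denom))
    closest = tuple(a[i] + t * ab[i] for i in range(3))
    return math.dist(p, closest)

def simplify(points: List[Point], tolerance: float) -> List[Point]:
    """Keep only the waypoints needed to stay within `tolerance` (same units as the points)."""
    if len(points) < 3:
        return list(points)
    a, b = points[0], points[-1]
    idx, dmax = 0, 0.0
    for i in range(1, len(points) - 1):
        d = _dist_to_segment(points[i], a, b)
        if d > dmax:
            idx, dmax = i, d
    if dmax <= tolerance:
        return [a, b]
    # Split at the farthest point and simplify each half recursively.
    left = simplify(points[: idx + 1], tolerance)
    right = simplify(points[idx:], tolerance)
    return left[:-1] + right
```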

“We started with jobs that are ugly, dirty and unhealthy for workers to do manually,” said Mejdrechová.

Thanks to the no-code system, the process takes only a few steps and typically about a minute. For factories producing small batches or frequently changing products, this speed can make automation far more practical than traditional robot programming.

Making automation easy for all

Robotics in manufacturing is not new. The automotive industry already leads the way with about 23 000 new robots added to production lines in 2024. But while large companies can invest heavily, automation remains challenging and expensive for many SMEs.

This is where RoboTwin lends a hand. It has assisted firms in the surface‑treatment industry – companies that powder coat, paint or polish metal or plastic parts for car factories.

“Even if the batch of products you are producing is small, with our approach you can create a robot programme fast and easily,” said Mejdrechová.

For example, RoboTwin has assisted RobPainting, a Dutch company that robotises painting for SMEs to improve quality, reduce costs and minimise rework.