What Makes ‘Mission Critical’ Different?

How to build resilient agentic AI pipelines in a world of change

Change is the only constant in enterprise AI. If your data workflows aren’t built to handle it, you’re setting your entire operation up for failure.

Most data pipelines are brittle: they break when data or infrastructure changes even slightly. That downtime can cost millions (upwards of $540,000 per hour), lead to compliance gaps that invite lawsuits, and ultimately result in failed AI initiatives that never make it past proof of concept.

But resilient agentic AI pipelines can adapt, recover, and keep delivering value even as everything around them changes. These systems maintain performance and recover without manual intervention, even when data drifts, regulations change, or infrastructure fails.

Resilient pipelines reduce downtime costs, improve compliance, and accelerate AI deployment. Fragile ones do the opposite.

Why resilient AI pipelines matter in changing environments

When a traditional software application breaks, you might lose some functionality. But when an AI pipeline breaks, you lose trust from wrong recommendations and bad predictions.

The proof is in the numbers: organizations report up to 40% less downtime and 30% cost savings with smarter, more proactive AI systems.

| | Fragile pipelines | Resilient pipelines |
|---|---|---|
| Monitoring and response | Manual monitoring and reactive fixes | Automated anomaly detection and proactive responses |
| System reliability | Single points of failure | Redundant, self-healing components |
| Architectural flexibility | Rigid architectures that break under change | Adaptive designs that evolve with business needs |
| Security and compliance | Governance as an afterthought | Built-in compliance and security |
| Deployment strategy | Vendor lock-in and environment dependencies | Cloud-agnostic, portable deployments |

Resilient systems keep learning, adapting, and delivering value. That’s exactly why enterprise AI platforms like DataRobot build resilience into every layer of the stack. When the only constant is accelerating change, your AI either adapts or becomes obsolete.

Identifying vulnerabilities and failure points

Waiting for something to break and then scrambling to fix it is backward and ultimately hurts operations. Organizations that systematically evaluate risks at each stage of the pipeline can identify potential failure points before they become costly outages.

For AI pipelines, vulnerabilities cluster around three core categories:

Data drift and pipeline breakdowns

Data drift is the silent killer of AI systems.

Your model was trained on historical data that reflected specific patterns, distributions, and relationships. But data evolves, customer behavior shifts, and market conditions change. Constantly. Suddenly, your model is making predictions based on an outdated reality.

For example, an e-commerce recommendation engine trained on shopping data pre-pandemic would completely miss the shift toward home fitness equipment and remote work tools. The model is operating on wildly outdated assumptions.

The warning signs are clear if you know where to look. Shifts in your input feature distributions, population stability index (PSI) scores above your alert threshold, and gradual drops in model accuracy are all signs of drift in progress.
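Here is a minimal Python sketch that makes the PSI signal concrete. It handles a single numeric feature and assumes bins are cut at the training (reference) data's deciles. The function names are hypothetical. The 0.1 and 0.25 cutoffs in `drift_level` are a widely used rule of thumb, not a standard, so treat them as starting points to tune per feature.

```python
import math
from bisect import bisect_right
from typing import Sequence


def _quantile_edges(reference: Sequence[float], n_bins: int) -> list[float]:
    """Interior bin edges at the reference data's quantiles (n_bins - 1 cut points)."""
    ordered = sorted(reference)
    return [ordered[len(ordered) * k // n_bins] for k in range(1, n_bins)]


def _bin_shares(values: Sequence[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Share of values falling in each bin; empty bins are floored at eps to avoid log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference: Sequence[float], current: Sequence[float],
                               n_bins: int = 10) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    edges = _quantile_edges(reference, n_bins)
    expected = _bin_shares(reference, edges)
    actual = _bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_level(psi: float) -> str:
    # Rule-of-thumb cutoffs (assumption): < 0.1 stable, 0.1 to 0.25 moderate, > 0.25 significant.
    if psi < 0.1:
        return "stable"
    if psi <= 0.25:
        return "moderate"
    return "significant"


if __name__ == "__main__":
    training = [float(x) for x in range(1000)]
    production = [x + 500.0 for x in training]  # the whole distribution has shifted upward
    psi = population_stability_index(training, production)
    print(f"PSI={psi:.2f} -> {drift_level(psi)}")
```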

But monitoring isn’t enough. You need automated responses through machine learning pipelines that trigger retraining when drift detection crosses predetermined thresholds. Set up backtesting to validate new models against recent data before deployment, with rollback processes that can quickly revert to previous model versions if performance degrades.
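The retrain, backtest, and rollback loop can be sketched as a version history with a promotion gate. This is a hypothetical illustration, not any platform's API. `ModelVersion`, `DeploymentHistory`, and the `retrain` callback are invented names. `backtest_score` stands in for whatever metric you compute on recent holdout data, and the 0.25 drift threshold is an assumed PSI level.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class ModelVersion:
    version: int
    backtest_score: float  # hypothetical: e.g., accuracy on the most recent holdout window


@dataclass
class DeploymentHistory:
    drift_threshold: float = 0.25  # assumed PSI level that triggers retraining
    min_gain: float = 0.0          # candidate must match or beat the live model by this margin
    versions: list = field(default_factory=list)

    @property
    def live(self) -> Optional[ModelVersion]:
        return self.versions[-1] if self.versions else None

    def on_drift_score(self, psi: float, retrain: Callable[[], ModelVersion]) -> str:
        """Retrain only when drift crosses the threshold; promote only if the backtest passes."""
        if psi <= self.drift_threshold:
            return "no_action"
        candidate = retrain()
        live = self.live
        if live is None or candidate.backtest_score >= live.backtest_score + self.min_gain:
            self.versions.append(candidate)
            return "promoted"
        return "rejected"  # the current live model keeps serving

    def rollback(self) -> ModelVersion:
        """Revert to the previous version, e.g., when post-deployment monitoring degrades."""
        if len(self.versions) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.versions.pop()
        return self.versions[-1]


if __name__ == "__main__":
    history = DeploymentHistory(versions=[ModelVersion(1, 0.91)])
    print(history.on_drift_score(0.08, lambda: ModelVersion(2, 0.95)))  # below threshold
    print(history.on_drift_score(0.31, lambda: ModelVersion(2, 0.93)))  # retrained and promoted
    print(history.rollback())
```

Because every promoted version stays in the history, rollback is a one-line operation instead of an emergency rebuild.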

It’s impossible to prevent drift completely. But you can detect it early and respond automatically, keeping your AI aligned with changing reality.

Model decay and technical debt

Model decay happens when shortcuts accumulate into larger systemic problems.

Every AI project starts with good intentions, along with organized code, clear notes, proper tracking, and thorough testing. But when deadlines approach, the pressure builds. Shortcuts start to creep in, and data tweaks become quick fixes. Models inevitably get messy, and the documentation never quite catches up.

Before you know it, you’re dealing with technical debt that makes your pipelines fragile and nearly impossible to maintain.

Ad hoc models that can’t be easily reproduced, feature logic buried in uncommented code, and deployment processes that depend on undocumented institutional knowledge all point to (eventual) decay. And when your original developer leaves, that institutional knowledge walks out the door with them.

The fix takes proactive discipline:

- Enforce modular code architecture that separates data processing, feature engineering, model training, and deployment logic.

- Keep detailed documentation for every model and feature transformation.

- Use MLflow or similar tools for version control that tracks models, as well as the data and code that created them.
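MLflow's tracking API handles this in practice. The sketch below is a library-free illustration of the underlying idea. It fingerprints the exact data and code behind a model version so the version can be traced and reproduced later. The record fields and the `build_lineage_record` and `same_inputs` helpers are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_of(payload: bytes) -> str:
    return hashlib.sha256(payload).hexdigest()


def build_lineage_record(model_name: str, version: str, training_data: bytes,
                         source_code: str, params: dict, metrics: dict) -> dict:
    """Tie a model version to fingerprints of the data and code that produced it."""
    return {
        "model": model_name,
        "version": version,
        "data_sha256": sha256_of(training_data),
        "code_sha256": sha256_of(source_code.encode("utf-8")),
        "params": params,
        "metrics": metrics,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def same_inputs(a: dict, b: dict) -> bool:
    """Two runs should be reproducible twins if data, code, and params all match."""
    return all(a[k] == b[k] for k in ("data_sha256", "code_sha256", "params"))


if __name__ == "__main__":
    data = b"customer_id,amount\n1,10.0\n2,12.5\n"
    code = "def features(rows): return [r['amount'] * 2 for r in rows]"
    record = build_lineage_record("churn", "1.4.0", data, code, {"max_depth": 6}, {"auc": 0.87})
    print(json.dumps(record, indent=2))
```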

This gets you closer to operational resilience. When you can quickly understand, modify, and redeploy any component of your pipeline, you can adapt to change without breaking everything else.

Governance gaps and security risks

Governance is a business-critical requirement that, when missing, creates massive risk and potentially catastrophic vulnerabilities:

- Weak access controls mean unauthorized users can modify production models.

- Missing audit trails make it impossible to track changes or investigate incidents.

- Unmanaged bias can lead to discriminatory outcomes that trigger lawsuits.

Poor data lineage tracking makes compliance reporting a nightmare. GDPR, CCPA, and industry-specific regulations are just the beginning. More AI-specific legislation (like the EU AI Act and Executive Order 14179) is coming, and at some point, compliance won’t be optional.

A strong governance checklist includes:

- Role-based access control (RBAC) that enforces least-privilege principles

- Detailed audit logging that tracks every model change and prediction, along with the reasoning behind each decision

- End-to-end encryption for data at rest and in transit

- Automated fairness audits that detect and flag potential bias

- Complete data lineage tracking, from data source to prediction
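The sketch below combines two items from that checklist: least-privilege RBAC and audit logging. The roles, permissions, and in-memory log are hypothetical. A production system would back them with your identity provider and tamper-evident, append-only storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role -> permission mapping; each role gets only what it needs.
ROLE_PERMISSIONS = {
    "viewer": {"read_metrics"},
    "data_scientist": {"read_metrics", "register_model"},
    "ml_engineer": {"read_metrics", "register_model", "deploy_model"},
}


@dataclass
class AccessController:
    audit_log: list = field(default_factory=list)

    def authorize(self, user: str, role: str, action: str, resource: str, reason: str = "") -> bool:
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        # Every attempt is logged, including denials, so incidents can be reconstructed later.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "resource": resource,
            "allowed": allowed,
            "reason": reason,
        })
        return allowed


if __name__ == "__main__":
    acl = AccessController()
    acl.authorize("ana", "viewer", "deploy_model", "fraud-model:v7")
    acl.authorize("raj", "ml_engineer", "deploy_model", "fraud-model:v7", "passed backtest")
    for entry in acl.audit_log:
        print(entry["user"], entry["action"], "allowed" if entry["allowed"] else "denied")
```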

Of course, AI governance solutions aren’t just in place to check off compliance boxes. They ultimately build trust with customers, regulators, and internal stakeholders who need to know your AI systems are operating safely and ethically.

Designing adaptive pipeline architectures

Architecture is where resilience is won or lost.

Monolithic, tightly coupled systems might seem simpler to build, but they’re disasters waiting to happen. When one component fails, everything else does too. When you need to update a single model, you risk breaking the entire pipeline, leading to months of re-architecting.

Adaptive architectures are inherently resilient. They’re modular, cloud-ready, and designed to self-heal, anticipating change rather than resisting it.

Modular components for rapid updates

Modular design is your first line of defense against cascading failures.

Break up those monolithic pipelines into discrete, loosely connected components. Each component should have a single responsibility, well-defined interfaces, and the ability to be updated on its own.
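In code, single responsibility and a well-defined interface can be as simple as a shared contract that every stage implements. This sketch assumes a Python `Protocol` as that contract. The stage classes and the dict-based record format are hypothetical. In a containerized deployment, each stage would sit behind its own service boundary rather than a function call.

```python
from typing import Iterable, Protocol

Record = dict  # hypothetical record format shared by every stage


class Stage(Protocol):
    name: str

    def run(self, records: list[Record]) -> list[Record]: ...


class DropNulls:
    name = "ingest.drop_nulls"

    def run(self, records: list[Record]) -> list[Record]:
        return [r for r in records if all(v is not None for v in r.values())]


class AddTotal:
    name = "features.add_total"

    def run(self, records: list[Record]) -> list[Record]:
        return [{**r, "total": r["qty"] * r["price"]} for r in records]


def run_pipeline(stages: Iterable[Stage], records: list[Record]) -> list[Record]:
    # Stages share only the Record contract, so any one can be swapped without touching the others.
    for stage in stages:
        records = stage.run(records)
    return records


if __name__ == "__main__":
    rows = [{"qty": 2, "price": 3.0}, {"qty": None, "price": 1.0}]
    print(run_pipeline([DropNulls(), AddTotal()], rows))
```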

Microservices also enable resource optimization, letting you scale only the components that need extra compute (e.g., a GPU-intensive tool) rather than the full system.

Containerization makes this practical. Docker containers package each component with its dependencies, making them portable and version-controlled. Kubernetes orchestrates these containers, handling scaling, health checks, and resource allocation automatically.

The payoff is agility. When you need to update a single component, you can deploy changes without touching anything else, allocating resources precisely where they’re needed as you scale.

Cloud-native and hybrid harmony

Pure cloud deployments offer scalability and managed services, but many enterprises still need on-premises components for data sovereignty, latency requirements, or regulatory compliance. Solely on-premises deployments offer control, but lack cloud flexibility and managed AI services.

Hybrid architectures give you both. Your most important data stays on-premises, while compute-intensive training happens in the cloud. Secure on-premises AI handles sensitive workloads, while cloud services provide elastic scaling for batch processing.

The aim with this type of setup is standardization. Use Kubernetes for consistent workflow orchestration across environments, with APIs designed to work the same whether they’re calling on-premises or cloud services.

When your pipelines can run anywhere, you can avoid vendor lock-in, keep your negotiating power, and optimize costs by moving workloads to the most efficient environment.

Self-healing mechanisms for resilience

Implement self-healing mechanisms to keep your systems running smoothly without constant human intervention:

- Build health checks into every component. Monitor response times, accuracy metrics, data quality scores, and resource utilization to make sure services are performing correctly.

- Put circuit breakers in place that automatically block off failing components before they can cascade failures throughout your system. If your feature engineering service starts timing out, the circuit breaker prevents it from bringing down other services.

- Design automatic rollback mechanisms. When a new model deployment shows degraded performance, your system should automatically revert to the previous version while alerting the operations team.

- Add intelligent resource reallocation. When demand spikes for specific models, automatically scale those services while maintaining resource limits for the overall system.
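The circuit breaker from that list fits in a few dozen lines. This is a minimal, single-threaded sketch with illustrative defaults: three consecutive failures open the circuit, and a 30-second cool-down precedes a single trial call. The clock is injectable so the behavior can be tested without waiting. The class names are hypothetical.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised instead of calling a dependency that is known to be failing."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half_open"  # cool-down elapsed: allow one trial call
        return "open"

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        state = self.state
        if state == "open":
            raise CircuitOpenError("dependency is failing; skipping call")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call, or too many consecutive failures, (re)opens the circuit.
            if state == "half_open" or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Callers catch `CircuitOpenError` and fall back to a cached result, a simpler model, or a graceful error. They do not pile up behind a service that is timing out.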

These mechanisms can reduce your mean time to recovery (MTTR) from hours to minutes. But more importantly, they often prevent outages entirely by catching and resolving issues before they impact end users.

Automating monitoring, retraining, and governance

When you’re managing dozens (or hundreds) of models across multiple environments, manual monitoring is impossible. Human-driven retraining introduces delays and inconsistencies, while manual governance creates compliance gaps and audit headaches.

Automation helps you maintain continuous performance and compliance as your AI systems grow.

Real-time observability

You can’t manage what you can’t measure, and you can’t measure what you can’t see. AI observability gives you real-time visibility into model performance, data quality, prediction accuracy, and business impact through metrics like:

- Prediction latency and throughput

- Model accuracy and drift indicators

- Data quality scores and distribution shifts

- Resource utilization and cost per prediction

- KPIs tied to AI decisions

That said, metrics without action are just dashboards. So set up proactive alerting based on thresholds that adapt to normal variation while catching anomalies. Then have escalation paths that route different types of issues to the right teams, as well as automated responses for common scenarios.
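A rolling z-score is one simple way to get thresholds that adapt to normal variation. It flags a value only when it sits far from the recent mean, measured in recent standard deviations. The sketch assumes a 50-observation window and a 3-sigma cutoff, both illustrative, and the class name is hypothetical. Metrics with strong daily or weekly cycles usually need a seasonal baseline instead.

```python
import statistics
from collections import deque


class RollingZScoreAlert:
    def __init__(self, window: int = 50, z_cutoff: float = 3.0, min_history: int = 10):
        self.history: deque = deque(maxlen=window)
        self.z_cutoff = z_cutoff
        self.min_history = min_history

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous relative to the recent baseline."""
        anomalous = False
        if len(self.history) >= self.min_history:
            baseline = list(self.history)
            mean = statistics.fmean(baseline)
            std = statistics.pstdev(baseline)
            anomalous = abs(value - mean) > self.z_cutoff * std if std > 0 else value != mean
        if not anomalous:
            self.history.append(value)  # keep anomalies out of the baseline
        return anomalous
```

Excluding flagged values from the baseline stops a single spike from widening the threshold. The trade-off is that a genuine level shift keeps alerting until someone investigates. That is usually the behavior you want feeding an escalation path.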

You want to know about problems before your customers do, and resolve them before they impact the business.

Automated retraining

There’s no question about whether your models will need retraining. All models degrade over time, so retraining needs to be proactive and automatic.

Set up clear triggers for retraining, like accuracy dropping below defined thresholds, drift detection scores exceeding acceptable ranges, or data volume reaching predetermined refresh intervals. Don’t rely on calendar-based retraining schedules. They’re either too frequent (wasting resources) or not frequent enough (missing critical changes).
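Those event-based triggers translate naturally into a small policy check. It also records why retraining fired, which is useful for the audit trail. All threshold values below are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrainPolicy:
    min_accuracy: float = 0.85             # retrain if live accuracy falls below this
    max_drift_score: float = 0.25          # retrain if drift (e.g., PSI) exceeds this
    new_rows_for_refresh: int = 100_000    # retrain once this much new labeled data has arrived

    def reasons(self, accuracy: float, drift_score: float, new_rows: int) -> list[str]:
        """Return every trigger that fired; an empty list means no retraining is needed."""
        fired = []
        if accuracy < self.min_accuracy:
            fired.append(f"accuracy {accuracy:.3f} < {self.min_accuracy}")
        if drift_score > self.max_drift_score:
            fired.append(f"drift {drift_score:.3f} > {self.max_drift_score}")
        if new_rows >= self.new_rows_for_refresh:
            fired.append(f"{new_rows} new rows >= {self.new_rows_for_refresh}")
        return fired


if __name__ == "__main__":
    policy = RetrainPolicy()
    print(policy.reasons(accuracy=0.88, drift_score=0.31, new_rows=12_000))
```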

Use AutoML for consistent, repeatable retraining processes, along with strong backtesting that validates new models against recent data before deployment. Shadow deployments let you compare new model performance against current production models using real-world traffic.
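A shadow deployment works like this: each request goes to both models, and only the production answer is returned. The candidate's answers are logged for comparison once real outcomes arrive. The `ShadowRunner` class and its accuracy comparison are hypothetical stand-ins for your serving layer and evaluation metric.

```python
from typing import Any, Callable, Sequence

Model = Callable[[Any], Any]


class ShadowRunner:
    def __init__(self, production: Model, candidate: Model):
        self.production = production
        self.candidate = candidate
        self.log: list[tuple[Any, Any, Any]] = []  # (request, production output, candidate output)

    def handle(self, request: Any) -> Any:
        served = self.production(request)
        try:
            shadow = self.candidate(request)
        except Exception as exc:  # a broken candidate must never affect live traffic
            shadow = exc
        self.log.append((request, served, shadow))
        return served  # callers only ever see the production answer

    def accuracy(self, outcomes: Sequence[Any]) -> dict:
        """Compare both models once ground-truth outcomes for the logged requests arrive."""
        n = len(outcomes)
        prod_hits = sum(p == y for (_, p, _), y in zip(self.log, outcomes))
        cand_hits = sum(c == y for (_, _, c), y in zip(self.log, outcomes))
        return {"production": prod_hits / n, "candidate": cand_hits / n}
```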

This creates a continuous learning loop where your AI systems adapt to changing conditions automatically, maintaining performance without manual intervention.

Embedded governance

Trying to add governance after your pipeline is built? Too late. It needs to be baked in from the start, or you’re gambling with compliance violations and broken trust.

Automate your documentation with model cards that capture training data, metrics, limitations, and use cases. Run bias detection on every new version to catch fairness issues before deployment, and log every change, every deployment, every prediction. When regulators come knocking, you’ll need that paper trail.
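Automated documentation and bias checks can run as a single release step. The sketch below builds a minimal model card and attaches a demographic parity gap, which is the spread in positive-prediction rates across groups. This is only one of several fairness metrics you might choose. The card fields, the 0.1 gate, and the `release_card` helper are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field


def demographic_parity_gap(predictions: list[int], groups: list[str]) -> tuple[float, dict]:
    """Max minus min positive-prediction rate across groups (0 means equal rates)."""
    totals: dict = {}
    for pred, group in zip(predictions, groups):
        pos, n = totals.get(group, (0, 0))
        totals[group] = (pos + pred, n + 1)
    rates = {g: pos / n for g, (pos, n) in totals.items()}
    return max(rates.values()) - min(rates.values()), rates


@dataclass
class ModelCard:
    name: str
    version: str
    training_data: str
    intended_use: str
    metrics: dict
    limitations: list
    fairness: dict = field(default_factory=dict)


def release_card(card: ModelCard, predictions: list[int], groups: list[str],
                 max_gap: float = 0.1) -> str:
    """Record the fairness result on the card, block release if it fails, else return JSON."""
    gap, rates = demographic_parity_gap(predictions, groups)
    card.fairness = {"demographic_parity_gap": round(gap, 4), "positive_rates": rates,
                     "passed": gap <= max_gap}
    if gap > max_gap:
        raise ValueError(f"fairness gate failed: gap {gap:.3f} > {max_gap}")
    return json.dumps(asdict(card), indent=2)  # store alongside the model version
```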

Lock down access so only the right people can make changes, but keep it collaborative enough that work actually gets done. And automate your compliance reports so audits don’t become months-long nightmares.

Done right, governance runs silently in the background. Your data scientists and engineers work freely, and every model still meets your standards for performance, fairness, and compliance.

Preparing for multi-cloud and hybrid deployments

When your AI pipelines are stuck with specific cloud providers or on-premises infrastructure, you lose flexibility, negotiating power, and the ability to optimize for changing business needs.

Environment-agnostic pipelines prevent vendor lock-in and support global operations across different regulatory and performance requirements, letting you optimize costs by moving workloads to the most efficient environment. They also provide redundancy that protects against bottlenecks like provider outages or service disruptions.

Build this portability in from Day 1.

Use infrastructure-as-code tools like Terraform to define your environments declaratively. Helm charts keep Kubernetes deployments working consistently across providers, while CI/CD pipelines can deploy to any target environment with configuration changes rather than code changes.
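A base deployment spec with small per-environment overlays shows how to deploy through configuration changes rather than code changes. The approach is similar in spirit to Helm values files. The environment names, keys, and values below are hypothetical.

```python
import copy

# Hypothetical base spec shared by every environment.
BASE = {
    "image": "registry.example.com/scoring-service:1.8.2",
    "replicas": 2,
    "resources": {"cpu": "500m", "memory": "1Gi"},
    "model_store": None,
}

# Per-environment overrides: only what differs is written down.
OVERLAYS = {
    "on_prem": {"model_store": "onprem-object-store/models", "resources": {"memory": "2Gi"}},
    "cloud_a": {"model_store": "cloud-a-bucket/models", "replicas": 6},
}


def deep_merge(base: dict, override: dict) -> dict:
    """Recursively apply override onto a copy of base, leaving both inputs unchanged."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


def render(environment: str) -> dict:
    return deep_merge(BASE, OVERLAYS[environment])
```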

Plan your redundancy strategies carefully. Implement active-passive replication for critical models with automatic failover, and set up load balancing that can route traffic between multiple environments. Design data synchronization that keeps your training and serving data consistent across locations.
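Active-passive failover reduces to a router that sends traffic to the primary and promotes the standby when the primary stops responding. The sketch assumes endpoints are plain callables that raise `ConnectionError` or `TimeoutError` on failure. The class name is hypothetical. Real deployments would add health probes and a controlled failback.

```python
from typing import Any, Callable

Endpoint = Callable[[Any], Any]


class ActivePassiveRouter:
    def __init__(self, primary: Endpoint, standby: Endpoint):
        self.endpoints = {"primary": primary, "standby": standby}
        self.active = "primary"
        self.failover_events: list[str] = []

    def predict(self, request: Any) -> Any:
        try:
            return self.endpoints[self.active](request)
        except (ConnectionError, TimeoutError) as exc:
            if self.active == "standby":
                raise  # both sides are down: surface the error to incident response
            # Sticky failover: stay on the standby until an operator (or health check) fails back.
            self.active = "standby"
            self.failover_events.append(f"primary failed: {exc!r}")
            return self.endpoints["standby"](request)

    def fail_back(self) -> None:
        self.active = "primary"
```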

Getting your AI infrastructure right means building for portability from the beginning, not trying to retrofit it later.

Ensuring compliance and security at scale

Fragile systems build walls around the perimeter and hope that nothing gets through. Resilient systems assume attackers will get in and plan accordingly with:

- Data encryption everywhere — at rest, in transit, in use

- Granular access controls that limit who can do what

- Continuous scanning for vulnerabilities in containers, dependencies, and infrastructure

Match your compliance needs to actual controls. SOC 2 requires audit logs and access management. ISO 27001 demands incident response plans. GDPR enforces privacy by design. Industry regulations each have their own specific requirements.

The cheapest fix is the earliest fix, so adopt DevSecOps practices that catch security issues during development, not after, when they can cost exponentially more to resolve. Build security and compliance checks into every stage using your machine learning project checklist. Retrofitting protection after the fact means you’re already losing the battle.

Incident response strategies for AI pipelines

Failures will happen. The question is whether you’ll respond quickly and effectively, or whether you’ll scramble in crisis mode while your business suffers.

Proactive incident response minimizes impact through preparation, not reaction. You need playbooks, tools, and processes ready before you need them.

Playbooks for containment and recovery

Every type of AI incident needs a specific response playbook with clear triage steps, escalation paths, rollback procedures, and communication templates. Here are some examples:

- For pipeline outages: Immediate health checks to isolate the failure, automatic traffic routing to backup systems, rollback to last known good configuration, and transparent stakeholder communication about impact and recovery timeline

- For accuracy drops: Model performance validation against recent data, comparison with shadow deployments or A/B tests, decision on rollback versus emergency retraining, and documentation of root cause for future prevention

- For security breaches: Immediate isolation of affected systems, assessment of the data exposure, notification of legal and compliance teams, and coordinated response with existing security operations
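Storing playbooks as data rather than prose makes them easy to version, rehearse in simulations, and wire into alerting. The sketch encodes the three playbooks above, along with the teams to pull in for each. The team handles and the `open_incident` helper are hypothetical. Steps are only recorded as a checklist; real automation would call your paging and deployment tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Playbook:
    steps: tuple[str, ...]
    notify: tuple[str, ...]  # hypothetical team handles to add to the incident channel


PLAYBOOKS = {
    "pipeline_outage": Playbook(
        steps=("run health checks to isolate the failure", "route traffic to backup systems",
               "roll back to last known good configuration", "post impact and recovery timeline"),
        notify=("ml-platform-oncall", "ops", "business-owners"),
    ),
    "accuracy_drop": Playbook(
        steps=("validate performance on recent data", "compare against shadow or A/B models",
               "decide: roll back or emergency retrain", "document root cause"),
        notify=("data-science", "ml-platform-oncall"),
    ),
    "security_breach": Playbook(
        steps=("isolate affected systems", "assess data exposure",
               "notify legal and compliance", "coordinate with security operations"),
        notify=("security-oncall", "legal", "compliance"),
    ),
}


def open_incident(kind: str, summary: str) -> dict:
    playbook = PLAYBOOKS.get(kind)
    if playbook is None:
        # Unknown incident types still get a human owner instead of being silently dropped.
        return {"summary": summary, "notify": ("incident-commander",), "checklist": []}
    return {
        "summary": summary,
        "notify": playbook.notify,
        "checklist": [{"step": s, "done": False} for s in playbook.steps],
    }
```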

Close any gaps by testing these playbooks regularly through simulated incidents. Update based on lessons learned, and keep them easily accessible to all team members who might need them.

Cross-team collaboration

AI incidents are “all-hands-on-deck” efforts that depend on collaboration between data science, engineering, operations, security, legal, and business stakeholders.

Set up shared dashboards that give all teams visibility into system health and incident status, and create dedicated incident response channels in Slack or Microsoft Teams that automatically include the right people based on incident type. Tools like PagerDuty can help with alerting and coordination, while Jira is useful for incident tracking and post-mortem analysis.

A coordinated response ensures everyone knows their role and has access to the information they need, so they can resolve issues quickly — without stepping on each other’s toes.

Driving real business outcomes with resilient AI

Resilient pipelines allow you to deploy with confidence, knowing your systems will adapt to changing conditions. They reduce operational costs and deliver faster time-to-value through automation, self-healing capabilities, and increased uptime and reliability, which ultimately builds trust with customers and stakeholders.

Most importantly, they enable AI at scale. When you’re not constantly reacting to broken pipelines, you can focus on building new capabilities, expanding to new use cases, and driving innovation that creates a competitive advantage.

DataRobot’s enterprise platform builds this resilience into every layer of the stack, from automated monitoring and retraining to built-in governance and security, reinforcing your systems so they keep delivering value no matter what changes around them. Find out how AI leaders leverage DataRobot’s enterprise platform to make resilience the default, not an aspiration.

The post How to build resilient agentic AI pipelines in a world of change appeared first on DataRobot.

Are Your Robot Frames Wearing Out Too Fast — and Is the Finish to Blame?

Learn AI — Or Forget About That Bonus

Bausch + Lomb’s CEO Brent Saunders has issued a simple ultimatum to employees: Get a clue when it comes to AI, or kiss your bonus goodbye.

Observes writer Francisco Velasquez: “By tying bonuses to (AI) education, Saunders is essentially legislating the end of resistance.

“He also noted that employees risk becoming ‘irrelevant’ should they fall short of implementing AI in their career pursuits.”

In other news and analysis on AI writing:

*Cattle Call: Finally, a Place We Can All Go to Serve Our AI Overlords: Wired reports that ‘RentAHuman’ – a new Web site where mere flesh-bags can get work from AI-powered agents – has already signed up a half-million-plus souls.

The site witnessed a meteoric rise during the past few weeks after the release of OpenClaw, AI agent software that ‘empowers’ AI agents to work in an extremely independent way, and even dole out money to achieve their missions.

Observes writer Kyle Macneil: “These humans seem stoked. Sapien workers are already offering to pick things up, take meetings, sign contracts, conduct recon, host events and snap photos for the bot bosses.”

*WordPress Gets a New AI Assistant: The world’s most popular Web authoring software now has a new, AI-powered assistant. Think AI-powered image generation, editing, translation and more.

Observes writer Stevie Bonifield: “To try out the AI assistant, users have to manually enable it by going into their site’s settings and toggling on ‘AI tools.’”

“Sites that were made with the AI website builder WordPress launched last year will have AI tools enabled by default.”

*Dead in the Water: Apple Intelligence?: An informal poll by writer Roland Moore-Colyer finds that 96% of Apple users surveyed don’t use the company’s AI tool, Apple Intelligence.

Observes Moore-Colyer: “Given that the world and its virtual dog seems to be using AI or talking about it — either positively or negatively — I’d have expected at least a good percentage more people to be using Apple Intelligence.

“But it seems that Apple just isn’t scratching the AI itch in the way people expect.”

*Microsoft Exec: AI to Automate Virtually All White Collar Tasks in 18 Months: Given all the headlines lately, it’s hard not to be wistful for the days when AI was packaged as a warm-and-fuzzy office helper.

Case in point: A decree from Microsoft’s AI CEO Mustafa Suleyman, declaring that by the close of 2027, AI will be capable of handling most white collar work.

Even so, writer Frank Landymore also observes “many companies, however, are arguably using the pretense of AI to fire employees for purely financial reasons — a practice that some are calling ‘AI washing.’”

*ChatGPT Still an Ace at Making Things Up: While AI like ChatGPT is an incredibly powerful writer when working with documented data, trusting its research is still a fool’s game.

(To be fair, it’s a failing of all generative AI, including Gemini, Claude, Grok and others.)

Specifically: A new study from PAN finds that only 69% of ChatGPT links ‘documenting’ facts, trends and other supposed knowledge actually lead to real and correctly attributed info.

The take-away: Unless you’re sure, always demand a hotlink for ‘facts’ generated by ChatGPT – and always manually check the link.

*Trump to AI Titans: Pony Up for the Power Costs: Writer Willow Tohi reports that fears of high electricity costs triggered by the coming onslaught of new AI data centers may be quashed by the Trump Administration.

Observes Tohi: Trump “is developing a policy to require major tech companies to fully cover the electricity, water, and grid infrastructure costs of their expanding AI data centers.

“The move aims to prevent these costs from being passed on to utility ratepayers amid rising national energy prices.”

*Google Releases Upgrade: Gemini 3.1 Pro: In the endless leapfrogging for the title of best AI, Google is out with its latest contender, Gemini 3.1 Pro.

Observes Carl Franzen: “Already, evaluations by third-party firm Artificial Analysis show that Google’s Gemini 3.1 Pro has leapt to the front of the pack and is once more the most powerful and performant AI model in the world.”

Gemini 3.1 Pro’s biggest gain came in advanced reasoning, according to Franzen.

*Claude Opus 4.6 Debuts: Anthropic has unveiled its new, flagship AI – Claude Opus 4.6.

Observes writer Vignesh R: “The company says the new model improves significantly in coding, reasoning, long-context understanding and real-world knowledge work.”

It’s also designed to plan tasks more carefully and work for longer periods of time without losing focus.

*Almost-As-Good Alternative to Claude Opus 4.6 Released: AI users willing to sacrifice a bit of smarts in exchange for AI that’s cheaper and faster may want to check out Anthropic’s alternative, Sonnet 4.6.

Observes the company’s release notes: “Claude Sonnet 4.6 is our most capable Sonnet model yet. It’s a full upgrade of the model’s skills across coding, computer use, long-context reasoning, agent planning, knowledge work, and design.”

Share a Link: Please consider sharing a link to https://RobotWritersAI.com from your blog, social media post, publication or emails. More links leading to RobotWritersAI.com help everyone interested in AI-generated writing.

–Joe Dysart is editor of RobotWritersAI.com and a tech journalist with 20+ years of experience. His work has appeared in 150+ publications, including The New York Times and the Financial Times of London.

The post Learn AI — Or Forget About That Bonus appeared first on Robot Writers AI.

How eyes affect our perception of a humanoid robot’s mind

Generative AI analyzes medical data faster than human research teams

How to make a cash flow forecasting app work for other systems

Your cash flow forecasting app is working beautifully. Your teams add their own data to keep forecasts running smoothly. Its predictions, variance tracking, and insights look great.

…Until you take a closer look and realize that none of these systems actually talk to one another. And that’s a problem.

Consolidating all of that data is time-consuming: it burns hours, creates blind spots, and invites human error. The best forecasting algorithms are only as good as the data they can access, and siloed systems mean predictions are being made with incomplete information.

The solution is making your existing systems work together intelligently.

By connecting your cash flow forecasting app to your broader tech stack, you can turn data-limited predictions into enterprise-wide intelligence that drives business outcomes.

Key takeaways

- Cash flow forecasts fail when systems stay siloed. ERP, CRM, banking, and payment data must work together or forecasts will always lag behind reality.

- Integration is a data and governance problem, not just a technical one. Inconsistent definitions, latency, and unclear ownership create blind spots that undermine forecast trust.

- AI agents enable real-time, adaptive forecasting across systems. By ingesting data continuously and orchestrating responses, agents turn delayed insights into proactive cash management.

- Unified data models are the foundation of accurate forecasting. Standardizing how transactions, timing, and confidence are defined prevents double-counting and hallucinated cash.

- Explainability is what makes AI forecasts usable in finance. Forecasts must show drivers, confidence ranges, and audit trails to earn CFO and auditor trust.

Why cross-system cash flow forecasting matters

Cash flow data lives everywhere. ERP systems track invoices, CRMs monitor payment patterns, banks process transactions. When these systems don’t talk to each other, neither can your forecasts.

The hidden cost is staggering: teams can spend 50–70% of their time preparing and validating data across systems. That’s at least two days every week spent on manual reconciliation instead of strategic analysis.

Think about what you’re missing. Your ERP shows a $5 million receivable due tomorrow, but your payment processor knows it won’t settle for three days. Your CRM flagged a major customer’s credit deterioration last week, but your forecast still assumes normal payment terms. Your team has to scramble to cover all of these disruptions that integrated systems would have predicted days ago.

The disconnect between these systems means you’re making million-dollar decisions with incomplete information. Invoice timing, settlement patterns, customer behavior, bank account balances, vendor terms. Without connecting this data, you’re forecasting in the dark.

Integrated forecasting transforms cash management from reactive firefighting to proactive optimization. Real-time, cross-system forecasting improves working capital decisions, strengthens liquidity control, and reduces financial risk.

Key challenges of integrating forecasting across multiple platforms

Integration takes technical sophistication and organizational alignment; the challenges that come with this are real enough to derail unprepared teams.

For example:

| Integration challenge | What goes wrong | Real cost to your business | How to fix it |
|---|---|---|---|
| Data inconsistencies | Your ERP calls it “payment received,” while your bank says “pending settlement,” with different date formats and three different IDs for the same customer. | 40% of your team’s time is spent on re-mapping data for integration. | Build a single source of truth with canonical data models that translate every system’s quirks into one language. |
| System latency | APIs time out during month-end. Batch jobs run at midnight. By 9 a.m., your “real-time” data is already nine hours old. | Strategic decision-making on stale data. Missed same-day funding opportunities. | Deploy event-driven architecture with smart caching to get updates as they happen, not when they’re scheduled. |
| Legacy limitations | The 2015 ERP has no API. Your finance system exports CSV only. IT says, “Six months to build connectors.” | Teams waste 10+ hours weekly on slicing and dicing manual exports. Automation ROI evaporates. | Start where you can win. Prioritize API-ready systems first, then build bridges for must-have legacy data. |
| Governance gaps | Finance owns GL data. Finance controls bank feeds. Sales guards CRM access. No one agrees on a formal forecast methodology. | Projects stall because different teams produce conflicting forecasts. Executives lose trust in the numbers. | Appoint a forecast owner with cross-functional authority. Document one source-of-truth methodology. |

By combining early ML-driven insights with an iterative approach to data quality and governance, organizations can realize value quickly while continuously enhancing forecasting precision.

The key is to start with the data you have. Even imperfect datasets can be used to build initial models and generate early forecasts, providing value over current manual methods. As integration processes mature through flexible data adapters, event-driven updates, and clear role-based access, forecast accuracy and reliability improve.

Organizations that acknowledge integration complexity and actively build safeguards can avoid the costly missteps that turn promising AI initiatives into expensive operational failures.

How AI agents work under the hood for cash flow forecasting

Forget what you know about “traditional” forecasting models. AI agents are autonomous systems that can learn, adapt, and get smarter every day.

They don’t just crunch numbers. Think of them as three layers working together:

- Data ingestion pulls data from every system (ERP, banks, payment processors) in real time. When your bank API crashes at month-end (and it will at some point), the agent itself keeps running. When payment processors change formats overnight, it adapts automatically.

- The machine learning engine runs multiple forecasting models simultaneously to uncover steady patterns, seasonal swings, and outlier relationships, and picks the winner for each scenario.

- Orchestration makes everything work together. Large payment hits unexpectedly? The system instantly recalculates, updates forecasts, and alerts finance accordingly.
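Running several models and picking the winner can be illustrated with deliberately simple forecasters scored on a holdout window. A production engine would compare richer models such as ARIMA or Prophet. The three candidates, the mean absolute error (MAE) metric, and the function names below are assumptions chosen to keep the sketch dependency-free.

```python
from typing import Callable, Sequence

Forecaster = Callable[[Sequence[float], int], list[float]]


def naive(history: Sequence[float], horizon: int) -> list[float]:
    return [history[-1]] * horizon  # tomorrow looks like today


def moving_average(history: Sequence[float], horizon: int, window: int = 4) -> list[float]:
    recent = history[-window:]
    return [sum(recent) / len(recent)] * horizon


def seasonal_naive(history: Sequence[float], horizon: int, season: int = 7) -> list[float]:
    # Repeat the value from the same point in the previous season (e.g., same weekday).
    return [history[len(history) - season + (h % season)] for h in range(horizon)]


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def pick_best(series: Sequence[float], holdout: int,
              candidates: dict[str, Forecaster]) -> tuple[str, dict]:
    """Fit on all but the last `holdout` points, score each candidate, return the lowest MAE."""
    train, test = series[:-holdout], series[-holdout:]
    scores = {name: mae(test, fn(train, holdout)) for name, fn in candidates.items()}
    return min(scores, key=scores.get), scores
```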

So when a major customer delays a $2 million payment, the finance team knows within minutes, not days. Their AI agent spots the missing transaction, recalculates liquidity needs, and gives them a three-day head start on bridge financing.
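The detection step in that example can be sketched in three parts. First, compare expected receipts with settled bank transactions. Next, flag anything past its due date plus a settlement allowance. Finally, recompute the funding gap against a minimum cash buffer. All names, the two-day allowance, and the buffer are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ExpectedReceipt:
    invoice_id: str
    customer: str
    amount: float
    due: date


def overdue_receipts(expected: list[ExpectedReceipt], settled_ids: set[str],
                     today: date, allowance_days: int = 2) -> list[ExpectedReceipt]:
    """Receipts still missing from bank data after the due date plus a settlement allowance."""
    cutoff = today - timedelta(days=allowance_days)
    return [r for r in expected if r.invoice_id not in settled_ids and r.due <= cutoff]


def liquidity_gap(projected_balance: float, missing: list[ExpectedReceipt],
                  minimum_buffer: float) -> float:
    """Funding needed to stay above the buffer if the missing cash never arrives.

    projected_balance is assumed to already count every expected receipt as collected.
    """
    adjusted = projected_balance - sum(r.amount for r in missing)
    return max(0.0, minimum_buffer - adjusted)
```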

These agents also improve over time. Every market surprise or forecast error becomes a lesson that informs the next decision, and each new data source makes predictions sharper.

Steps to automate and scale cash forecasting

If you’re ready to build cross-system forecasting capabilities, here’s a step-by-step process you can follow. It’s designed for organizations that want to move automated cash flow management beyond proof of concept.

1. Assess data sources and connectivity

Start by mapping what you actually have. You’ll map the obvious sources, like your ERP and banking platforms. You’ll also want to identify hidden cash flow drivers, like the Excel file that finance updates daily and the subsidiary system installed in 2017.

For each system, answer the following questions:

- Who owns the keys (data access)?

- Can it talk to other systems (API-ready)?

- How fresh is the data (real-time vs. overnight batch)?

- How accurate and complete is the output (rate 1–5)?

- Would bad data derail your forecast (business impact)?

Once you have a complete view of what you’re already working with, start with systems that are API-ready and business-critical. That industry-standard cloud ERP? Perfect. The DOS-based finance system from 1995? Push that to phase two.
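The assessment questions above can be turned into a simple scoring pass that decides which systems to connect first. This is a sketch under assumptions: the field names, the weights, and the rule that sources without access or an API drop to phase two are illustrative choices, not a standard method.

```python
from dataclasses import dataclass


@dataclass
class SourceAssessment:
    name: str
    has_access: bool         # do we hold the keys?
    api_ready: bool          # can it talk to other systems?
    realtime: bool           # real-time vs. overnight batch
    quality: int             # accuracy/completeness, rated 1-5
    business_critical: bool  # would bad data derail the forecast?


def phase(src: SourceAssessment) -> int:
    """Phase 1 = connect now; phase 2 = defer (no access or no API)."""
    return 1 if src.has_access and src.api_ready else 2


def priority_score(src: SourceAssessment) -> int:
    """Higher is better; the weights are illustrative, not prescriptive."""
    if not 1 <= src.quality <= 5:
        raise ValueError("quality must be rated 1-5")
    score = src.quality * 2
    score += 5 if src.business_critical else 0
    score += 2 if src.realtime else 0
    return score


def rollout_plan(sources):
    """Order sources by phase first, then by descending priority score."""
    return sorted(sources, key=lambda s: (phase(s), -priority_score(s)))
```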

2. Define unified data models

Create a unified data model: a standard format that every source maps to. This keeps your integration backbone consistent, no matter how differently the source systems structure their data.

Every transaction, regardless of source, is translated into the same language:

- What: Cash movement type (AR collection, AP payment, transfer)

- When: Standardized ISO 8601 timestamps that match across systems

- How much: Consistent currency and decimal handling (no more penny discrepancies)

- Where: Which account, entity, and business unit, using one naming convention

- Confidence: AI-generated score to keep tabs on how reliable the data is
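One way to express this shared language is a single record type that every source adapter must produce. This sketch assumes amounts arrive as strings or numbers with two decimal places of precision, timestamps arrive as ISO 8601 strings, and naive timestamps are UTC; the raw keys and the `normalize` helper are hypothetical, and the confidence score is simply passed through from whatever upstream check produces it.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal, ROUND_HALF_EVEN
from enum import Enum


class CashType(Enum):
    AR_COLLECTION = "ar_collection"
    AP_PAYMENT = "ap_payment"
    TRANSFER = "transfer"


@dataclass(frozen=True)
class CashMovement:
    what: CashType
    when: datetime       # always timezone-aware UTC
    amount: Decimal      # quantized to cents, never a float
    currency: str        # ISO 4217 code, e.g. "USD"
    account: str
    entity: str
    business_unit: str
    confidence: float    # 0.0-1.0 reliability score supplied upstream


CENTS = Decimal("0.01")


def normalize(raw: dict) -> CashMovement:
    """Map one source-specific record (hypothetical keys) onto the unified model."""
    when = datetime.fromisoformat(raw["timestamp"])
    if when.tzinfo is None:  # assumption: naive timestamps are already UTC
        when = when.replace(tzinfo=timezone.utc)
    amount = Decimal(str(raw["amount"])).quantize(CENTS, rounding=ROUND_HALF_EVEN)
    confidence = float(raw.get("confidence", 1.0))
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return CashMovement(
        what=CashType(raw["type"]),
        when=when.astimezone(timezone.utc),
        amount=amount,
        currency=raw["currency"].upper(),
        # One naming convention: trimmed, upper-case identifiers.
        account=raw["account"].strip().upper(),
        entity=raw["entity"].strip().upper(),
        business_unit=raw["business_unit"].strip().upper(),
        confidence=confidence,
    )
```

Using `Decimal` with an explicit rounding mode is what removes the penny discrepancies that binary floats introduce.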

Skipping this step will likely create downstream issues: your AI agent may hallucinate, predicting phantom cash because it counted the same payment two or three times under different names or IDs.
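Guarding against that double counting can start with a deduplication pass keyed on what a payment is rather than on each system’s own ID. A minimal sketch, assuming records are already normalized and that the same payment seen by two systems shares counterparty, amount, currency, and value date; the key and field names are hypothetical, and this exact-match rule would also merge two genuinely separate payments with identical details, so a production matcher likely needs more signals.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Payment:
    source: str        # e.g. "erp" or "bank"
    source_id: str     # each system's own identifier
    counterparty: str
    amount: Decimal
    currency: str
    value_date: date


def dedup_key(p: Payment):
    """Identify a payment by its content, not by which system reported it."""
    return (p.counterparty.strip().upper(), p.amount, p.currency.upper(), p.value_date)


def deduplicate(payments):
    """Keep the first occurrence of each payment; return (unique, duplicates)."""
    seen = {}
    duplicates = []
    for p in payments:
        key = dedup_key(p)
        if key in seen:
            duplicates.append(p)
        else:
            seen[key] = p
    return list(seen.values()), duplicates
```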

3. Configure and train AI agents

Start with your two or three best data sources to optimize forecasting with reliable, trusted data.

Give your AI agent enough historical data from those sources to learn your business rhythms. With at least 13 months of data, it should be able to identify patterns like “customers always pay late in December” or “we see a cash crunch every year.”
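A pattern like “customers always pay late in December” can be checked directly from payment history by averaging days late per calendar month. This is a descriptive sketch over a hypothetical list of (due date, paid date) pairs, not a forecasting model; the 13-month minimum mirrors the guideline above, while the 10-day threshold is an illustrative assumption.

```python
from collections import defaultdict
from datetime import date


def avg_days_late_by_month(history):
    """history: iterable of (due_date, paid_date). Returns {month: average days late}."""
    totals = defaultdict(int)
    counts = defaultdict(int)
    for due, paid in history:
        totals[due.month] += (paid - due).days
        counts[due.month] += 1
    return {m: totals[m] / counts[m] for m in sorted(totals)}


def months_covered(history):
    """Number of distinct (year, month) periods in the history."""
    return len({(due.year, due.month) for due, _ in history})


def late_months(history, min_months=13, threshold_days=10.0):
    """Calendar months whose average lateness exceeds a threshold."""
    if months_covered(history) < min_months:
        raise ValueError(f"need at least {min_months} months of history")
    return [m for m, d in avg_days_late_by_month(history).items() if d > threshold_days]
```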

AI-powered time series modeling adds value through AutoML, which tests multiple approaches simultaneously before choosing one:

- ARIMA for steady patterns

- Prophet for seasonal swings

- Neural networks for complex relationships

The model that performs best on your data wins automatically, every time.
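The “run several models, keep the winner” idea can be illustrated without any ML library. This sketch swaps in three simple baselines (naive, seasonal naive, and moving average) as stand-ins for ARIMA, Prophet, and neural networks, and picks whichever has the lowest mean absolute error on a held-out tail of the series; it shows the selection principle only and is not how any particular AutoML product works internally.

```python
from statistics import mean


def naive(train, horizon):
    """Repeat the last observed value."""
    return [train[-1]] * horizon


def seasonal_naive(train, horizon, season=12):
    """Repeat the value from the same period one season earlier."""
    if len(train) < season:
        raise ValueError("need at least one full season of training data")
    return [train[len(train) - season + (h % season)] for h in range(horizon)]


def moving_average(train, horizon, window=3):
    """Flat forecast at the mean of the last `window` values."""
    return [mean(train[-window:])] * horizon


MODELS = {"naive": naive, "seasonal_naive": seasonal_naive, "moving_average": moving_average}


def mae(actual, predicted):
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def select_model(series, holdout=6):
    """Fit each candidate on all but the last `holdout` points; keep the lowest MAE."""
    train, test = series[:-holdout], series[-holdout:]
    scores = {name: mae(test, fn(train, holdout)) for name, fn in MODELS.items()}
    best = min(scores, key=scores.get)
    return best, scores
```

Scoring on data the candidates never saw is what makes “the best model” meaningful; comparing in-sample fit would reward whichever model memorizes the history.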

During this phase, validate everything. Ruthlessly. Backtest against last year’s actuals. If your model’s predictions land within 5% of actuals, that’s a strong result. If they’re off by 30%, keep training.
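The backtest check itself is a short calculation. This sketch measures mean absolute percentage error (MAPE), the average of |actual − forecast| / |actual| expressed in percent, against last year’s actuals; the 5% and 30% cut-offs come from the text above, while the in-between “review” band and the function names are assumptions.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, in percent. Actuals must be non-zero."""
    if len(actuals) != len(forecasts) or not actuals:
        raise ValueError("need equal-length, non-empty series")
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return 100 * sum(abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)) / len(actuals)


def backtest_verdict(actuals, forecasts, good=5.0, bad=30.0):
    """Classify a backtest: 'ready', 'review', or 'keep training'."""
    error = mape(actuals, forecasts)
    if error <= good:
        return error, "ready"
    if error >= bad:
        return error, "keep training"
    return error, "review"
```

Because MAPE divides by the actual value, periods with near-zero cash movement can dominate it; in that case an absolute metric such as MAE may be the fairer yardstick.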

4. Monitor and refine forecast accuracy

Cash forecasting is not a one-time project: your AI agent needs to learn from its mistakes. Daily variance analysis shows where predictions diverged from actual results. When accuracy drops below your defined threshold (say, from 85% to 70%), the system automatically retrains itself on fresh data.

Manual input isn’t always a bad thing, either: your team’s expertise and overrides are especially valuable. When finance knows that a major customer always pays late in December (despite what the data says), capture that intelligence and feed it back into the agent to make it smarter.
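Capturing that expertise works best when every override carries a reason and is stored alongside the model’s original number, so the two can be compared later. A minimal sketch, assuming a hypothetical in-memory, append-only log; a real system would persist the entries and could feed the recorded adjustments back into training.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class Override:
    period: str              # e.g. "2024-12"
    model_value: Decimal     # what the model predicted
    adjusted_value: Decimal  # what the expert entered
    reason: str              # required context for the change
    author: str
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class OverrideLog:
    """Append-only record of expert adjustments; entries are never edited or deleted."""

    def __init__(self):
        self._entries = []

    def add(self, period, model_value, adjusted_value, reason, author):
        if not reason.strip():
            raise ValueError("an override must include a reason")
        entry = Override(period, Decimal(model_value), Decimal(adjusted_value), reason, author)
        self._entries.append(entry)
        return entry

    def effective_value(self, period, model_value):
        """The latest override for the period wins; otherwise use the model's value."""
        for entry in reversed(self._entries):
            if entry.period == period:
                return entry.adjusted_value
        return Decimal(model_value)

    def entries(self):
        return tuple(self._entries)  # read-only view for audits
```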

Measuring adoption matters just as much, especially for scaling, because the biggest roadblock is often organizational resistance. Teams wait for perfect data that never comes. Meanwhile, competitors are already optimizing working capital with “good enough” forecasts.

Get stakeholder and organizational buy-in by starting with two departments that are already engaged and have trusted data. Show measurable accuracy improvements within 30–60 days, let that success sell itself, and then scale.

Tips for building trust and explainability in AI forecasts

Your CFO won’t sign off on black box AI that spits out numbers. They need to know why the forecast jumped $2 million overnight.

- Make AI explain itself. When your forecast changes, the system should tell you exactly why. Be specific. For example, “Customer payment patterns shifted 20%, driving a $500K variance.” Every prediction needs a story your team can verify.

- Show confidence, not false precision. Present forecasts with context. For instance, “$2.5 million” can be shown as “$2.5 million ± $200K (high confidence)” or “$2.5 million ± $800K (volatile conditions).” The ranges tell finance whether they can relax or need to start preparing contingencies.

- Track everything. Every data point, model decision, and human override should be logged and auditable. When auditors ask questions, you’ll have answers. When the model gets something wrong, you’ll know why.

- Let experts override. Your finance team knows your customers and their payment patterns. Allow them to adjust the forecast, but require them to record the specific reason. That human intelligence makes your AI smarter.
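An explanation like “Customer payment patterns shifted 20%, driving a $500K variance” can be generated by attributing the change between two forecast runs to named drivers. This sketch assumes the forecast can be broken into additive driver components (a simplification, since many models are not additive) and that driver names come from your own model; the output wording is illustrative.

```python
from decimal import Decimal


def format_usd(x: Decimal) -> str:
    sign = "-" if x < 0 else "+"
    return f"{sign}${abs(x):,.0f}"


def explain_change(previous: dict, current: dict, top_n: int = 3) -> str:
    """Compare two additive forecasts broken down by driver; list the biggest movers."""
    drivers = set(previous) | set(current)
    deltas = {d: current.get(d, Decimal("0")) - previous.get(d, Decimal("0")) for d in drivers}
    total = sum(deltas.values(), Decimal("0"))
    ranked = sorted(deltas.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_n]
    lines = [f"Forecast changed by {format_usd(total)}."]
    for driver, delta in ranked:
        if delta != 0:  # skip drivers that didn't move
            lines.append(f"- {driver}: {format_usd(delta)}")
    return "\n".join(lines)
```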

Finance data will never be perfect. But trust in your system is built when it shows its work, calls out uncertainty, and learns from the experts who use it daily.
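Calling out uncertainty can start with an empirical range built from past forecast errors. This sketch takes residuals (actual minus forecast) from earlier backtests, uses their 10th and 90th percentiles as the band around the point forecast, and labels the result “high confidence” or “volatile conditions” depending on how wide the band is relative to the forecast; the percentiles, the 10% width cut-off, and the labels are assumptions.

```python
from statistics import quantiles


def forecast_range(point, residuals, width_cutoff=0.10):
    """Return (low, high, label) from empirical residual percentiles."""
    if len(residuals) < 10:
        raise ValueError("need at least 10 past residuals")
    deciles = quantiles(residuals, n=10)  # 9 cut points: 10th..90th percentile
    low, high = point + deciles[0], point + deciles[-1]
    narrow = (high - low) <= width_cutoff * abs(point)
    return low, high, "high confidence" if narrow else "volatile conditions"
```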

You can use different explainability approaches for your different audiences:

| Audience | Explainability need | Recommended approach |
|---|---|---|
| C-suite | High-level confidence and key drivers | Dashboard showing confidence level (“85% sure”) and top three drivers (“Customer delays driving -$500K variance”) |
| Finance | Detailed factor analysis and scenario impacts | Interactive scenario planning with drill-downs: click any number to see specific invoices, customers, and patterns in fluctuations and market conditions |
| Auditors | Audit trails and model governance | Complete audit trail: every data source, timestamp, model version, and human override with documented reasoning |
| IT/data science | Technical model performance and diagnostics | Technical diagnostics: prediction accuracy trends, feature importance scores, model drift alerts, performance metrics |

Common tools and models for end-to-end cash flow management

The build-vs-buy decision for accurate cash flow forecasting software comes down to spending 18 months building with TensorFlow or going live in six weeks with a platform that already works and plugs into the tools you currently use.

What to look for in a forecasting tool stack:

- AI platforms do the heavy lifting, running multiple models, picking winners, and explaining predictions. DataRobot’s enterprise-scale capabilities get you from Excel to AI without hiring a team of data scientists.

- Integration layer (MuleSoft, Informatica) moves data between systems. Pick this layer based on what you already have to avoid adding complexity.

- Visualization (Tableau, Power BI) turns forecasts into decisions by giving leadership visuals they can evaluate and act on quickly.

Your evaluation criteria checklist:

- Scale: Will it handle 5x or 10x your current volume?

- Compliance: Does it satisfy auditors and regulators?

- Real TCO: Factor in the hidden costs (integration, training, maintenance)

- Speed to value: Weeks, months, or quarters to first forecast?
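“Real TCO” becomes simple arithmetic once the hidden costs are listed explicitly. This sketch compares options over a multi-year horizon using the cost categories from the checklist; the field names and functions are hypothetical, recurring costs are charged for full years as a simplification, and any figures you plug in should come from your own quotes and estimates.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    name: str
    upfront: float                 # licences or build effort before go-live
    integration: float             # one-time integration work
    training: float                # one-time user training
    annual_subscription: float
    annual_maintenance: float
    months_to_first_forecast: int  # speed to value


def total_cost_of_ownership(c: CostProfile, years: int = 3) -> float:
    one_time = c.upfront + c.integration + c.training
    recurring = (c.annual_subscription + c.annual_maintenance) * years
    return one_time + recurring


def compare(options, years=3):
    """Rank options by TCO, breaking ties with faster time to first forecast."""
    return sorted(options, key=lambda c: (total_cost_of_ownership(c, years),
                                          c.months_to_first_forecast))
```

Scale and compliance don’t reduce to a single number, so they work better as pass/fail gates applied before this ranking.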

Smart money leverages existing investments rather than ripping and replacing everything from scratch. Compare platforms that plug into your current stack to deliver value faster.

Transform your cash flow forecasting with production-ready AI

In 2022, AI-driven forecasting in supply chain management reportedly reduced errors by 20–50%. Today’s AI agents are more accurate and capable still, which puts even bigger gains within reach for cash flow forecasting:

- Connected data that eliminates blind spots

- Explainable AI that finance teams trust

- Continuous learning that gets smarter every day

- Built-in governance that keeps auditors happy

Better forecasts mean less idle cash and lower financing costs: in short, improved financial health. Your team stops fighting with spreadsheets and starts preventing problems, and you negotiate from a position of strength because you know precisely when cash hits.

For early adopters, AI agents are already learning patterns, catching anomalies, and freeing up finance teams to think more strategically. These systems will autonomously predict cash flow, actively manage liquidity, negotiate payment terms, and optimize working capital across global operations.

Learn how DataRobot’s financial services solutions integrate with your existing systems and deliver enterprise-grade forecasting that actually works. No rip-and-replace. No multi-year implementations.

FAQs

Why do cash flow forecasting apps struggle to work across systems?

Most forecasting tools rely on partial data from a single source. When ERP, banking, CRM, and payment systems are disconnected, forecasts miss timing delays, customer behavior changes, and real liquidity risks.

How do AI agents improve cross-system cash flow forecasting?

AI agents continuously ingest data from multiple systems, run and select the best forecasting models, and automatically update projections when conditions change. This allows finance teams to react in minutes instead of days.

Do you need perfect data before automating cash flow forecasts?

No. Even imperfect data can deliver better results than manual spreadsheets. The key is starting with trusted, API-ready systems and improving data quality iteratively as integrations mature.

How do finance teams trust AI-generated forecasts?

Trust comes from explainability. The system must show why numbers changed, highlight key drivers, surface confidence ranges, and log every data source, model decision, and human override for auditability.

What platforms support enterprise-grade, integrated forecasting?

Platforms like DataRobot support cross-system integration, AI agent orchestration, explainable forecasting, and built-in governance, helping finance teams scale forecasting without ripping out existing systems.

The post How to make a cash flow forecasting app work for other systems appeared first on DataRobot.

Humanoid home robots are on the market—but do we really want them?

Humanoid robots that ‘catch themselves’ instead of falling: What a new walking algorithm changes

Quantum computer breakthrough tracks qubit fluctuations in real time

Solving Real-World Problems with Robotics That Actually Work

Robot Talk Episode 145 – Robotics and automation in manufacturing, with Agata Suwala

Claire chatted to Agata Suwala from the Manufacturing Technology Centre about leveraging robotics to make manufacturing systems more sustainable.

Agata Suwala is a Technology Manager at the Manufacturing Technology Centre, where she leads cutting-edge work in automation and robotics. With over a decade of experience in R&D, Agata specialises in developing and implementing advanced manufacturing systems—particularly for the aerospace sector—transforming complex, skill-intensive processes through automation. Her recent focus is on enabling the transition to a circular economy by leveraging automation and robotics to create sustainable, scalable technologies.

Reversible, detachable robotic hand redefines dexterity

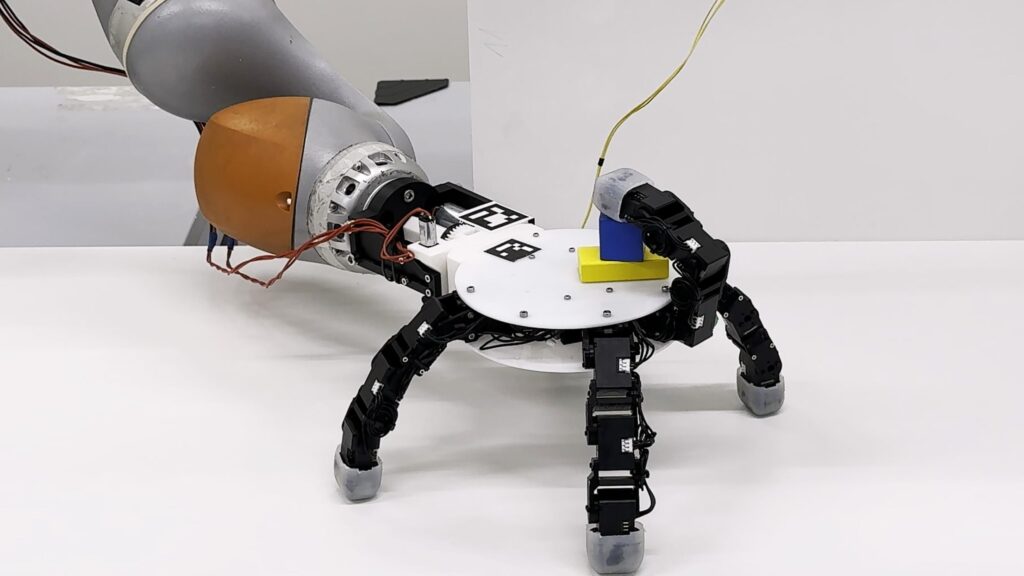

2025 LASA/CREATE/EPFL CC BY SA.


By Celia Luterbacher

With their opposable thumbs, multiple joints and gripping skin, human hands are often considered to be the pinnacle of dexterity, and many robotic hands are designed in their image. But having been shaped by the slow process of evolution, human hands are far from optimized, with the biggest drawbacks including our single, asymmetrical thumbs and attachment to arms with limited mobility.

“We can easily see the limitations of the human hand when attempting to reach objects underneath furniture or behind shelves, or performing simultaneous tasks like holding a bottle while picking up a chip can,” says Aude Billard, head of the Learning Algorithms and Systems Laboratory (LASA) in EPFL’s School of Engineering. “Likewise, accessing objects positioned behind the hand while keeping the grip stable can be extremely challenging, requiring awkward wrist contortions or body repositioning.”

A team composed of Billard, LASA researcher Xiao Gao, and Kai Junge and Josie Hughes from the Computational Robot Design and Fabrication Lab designed a robotic hand that overcomes these challenges. Their device, which can support up to six identical silicone-tipped fingers, fixes the problem of human asymmetry by allowing any combination of fingers to form opposing pairs in a thumb-like pinch. Thanks to its reversible design, the ‘back’ and ‘palm’ of the robotic hand are interchangeable. The hand can even detach from its robotic arm and ‘crawl’, spider-like, to grasp and carry objects beyond the arm’s reach.

“Our device reliably and seamlessly performs ‘loco manipulation’ — stationary manipulation combined with autonomous mobility – which we believe has great potential for industrial, service, and exploratory robotics,” Billard summarizes. The research has been published in Nature Communications.

Human applications – and beyond

While the robotic hand looks like something from a futuristic sci-fi movie, the researchers say they drew inspiration from nature.

“Many organisms have evolved versatile limbs that seamlessly switch between different functionalities like grasping and locomotion. For example, the octopus uses its flexible arms both to crawl across the seafloor and open shells, while in the insect world, the praying mantis uses specialized limbs for locomotion and prey capture,” Billard says.

Indeed, the EPFL robot can crawl while maintaining a grip on multiple objects, holding them under its ‘palm’, on its ‘back’, or both. With five fingers, the device can replicate most of the traditional human grasps. When equipped with more than five fingers, it can single-handedly tackle tasks usually requiring two human hands – such as unscrewing the cap on a large bottle or driving a screw into a block of wood with a screwdriver.

“There is no real limitation in the number of objects it can hold; if we need to hold more objects, we simply add more fingers,” Billard says.

The researchers foresee applications of their innovative design in real-world settings that demand compactness, adaptability, and multi-modal interaction. For example, the technology could be used to retrieve objects in confined environments or expand the reach of traditional industrial arms. And while the proposed robotic hand is not itself anthropomorphic, they also believe it could be adapted for prosthetic applications.

“The symmetrical, reversible functionality is particularly valuable in scenarios where users could benefit from capabilities beyond normal human function,” Billard says. “For example, previous studies with users of additional robotic fingers demonstrate the brain’s remarkable adaptability to integrate additional appendages, suggesting that our non-traditional configuration could even serve in specialized environments requiring augmented manipulation abilities.”

Reference

A detachable crawling robotic hand, Xiao Gao (高霄), Kunpeng Yao (姚坤鹏), Kai Junge, Josie Hughes & Aude Billard, Nat Commun 17, 428 (2026).