Origami-inspired robot built from printable polymers uses electric current to move

KNF Introduces Intelligent Pump Features for Flow, Pressure and Vacuum Control and Versatile Dosing

Best agentic AI platforms: Why unified platforms win

Search “best agentic AI platform,” and you’ll drown in a sea of vendor comparisons, feature matrices, and tool catalogs. The real enemy isn’t picking the wrong vendor, though. Building your own AI solution can kill your ambitions before they even get off the ground.

In most enterprises, teams are cobbling together their own mix-and-match stack of open-source tools, cloud services, and point solutions. Marketing has its chatbot builder, IT is experimenting with some hyperscaler’s agent framework, and data science is spinning up vector databases on whatever cloud credits they can scrounge up.

That’s shadow AI in a nutshell, with governance gaps that no compliance audit can easily untangle.

Everyone loves talking about building agents. That’s the easy part.

The part nobody wants to admit is that most of those agents will never make it out of a demo. Siloed teams don’t have a unified way to run them, govern them, or keep them from stepping on each other’s toes.

Enterprises don’t need more pet projects. They need a governed agent workforce: AI that works across teams, clouds, and business systems without falling apart at the slightest disruption.

Key takeaways

- Fragmented AI stacks slow enterprises down. Tool sprawl and shadow AI make agents brittle, hard to govern, and difficult to scale.

- End-to-end means unifying build, deploy, and govern. A single control plane eliminates handoff failures and gets agents into production faster.

- The blank-slate problem is real. Reference architectures, agent templates, and pre-built starter patterns help teams deliver value quickly instead of rebuilding from zero.

- Openness only works with governance. Supporting any tool or model means nothing without consistent security, lineage, and policy controls traveling with every agent.

- Structural partnerships accelerate enterprise readiness. Co-engineered integrations with infrastructure and application providers give teams production-grade agentic workflows without months of manual setup.

Why fragmentation is the real enemy of enterprise AI

Walk into any enterprise today and ask how many different AI tools are running across the organization. The honest answer is usually, “We have no idea.” That’s not incompetence. It’s the natural result of teams trying to perform their jobs as quickly and accurately as possible.

Shadow AI, duplicated efforts, and niche point solutions are all part of the problem.

This leads to two common failure modes that kill more AI initiatives than any vendor selection mistake ever could:

- Tool sprawl and “LEGO block” architectures: Somewhere along the way, “shipping an AI use case” turned into a scavenger hunt. Teams are stitching together 10–14 tools, like vector stores, orchestrators, log aggregators, and governance band-aids, just to get a single agent out the door. Each API and integration point is another potential source of failure, security exposure, or a performance meltdown. A project that should take weeks dissolves into a multi-month integration saga nobody signed up for.

- Siloed, cloud-specific stacks that don’t interoperate: Speed over flexibility is how most teams end up locked into a hyperscaler ecosystem. It’s smooth sailing until you try to plug into a system you don’t control, deploy in a regulated environment, or collaborate with a partner on a different platform. Then you end up choosing between two painful paths: move fast and lose control, or keep control and fall behind.

Any serious conversation about agentic AI platforms has to start with eliminating this fragmentation. Everything else is secondary.

What “end-to-end” actually means for agentic AI

“End-to-end” gets thrown around by nearly every vendor in the space. But in an enterprise context, it has a specific meaning that most tool collections fail to meet.

Real end-to-end coverage spans three critical stages, each with specific requirements that fragmented tool chains struggle to address:

- Build: Teams shouldn’t start from scratch every time they need an agent. That means reference architectures, reusable patterns, and starter kits aligned with real enterprise workflows.

- Operate: Single agents are proofs of concept. Production systems need dozens or hundreds of agents coordinating across systems, sharing memory, handling errors gracefully, and optimizing for cost and latency. That requires sophisticated orchestration, continuous evaluation, and the ability to adjust behavior based on real-world performance.

- Govern: Lineage, access control, policy enforcement, and auditability are needed the moment agents start making decisions and interacting with real business systems. Governance isn’t a checklist. It’s the operating system.

Stitching together separate tools for each stage creates drift, governance gaps, and extended time-to-production. Teams spend more time on integration than innovation, and by the time they’re ready to deploy, the business requirements have already moved on.

From building agents to running an agent workforce

Most platform conversations go off the rails by focusing on building individual agents instead of running a workforce of agents at scale.

That shift changes everything. Running a workforce means you need:

- Shared memory so agents can learn from each other’s interactions

- Consistent reasoning behavior so agents don’t make contradictory decisions

- Centralized policies that update across the entire workforce without redeploying everything

- Unified observability so you can debug multi-agent workflows without chasing logs across a dozen different systems

Most importantly, you need agent lifecycle management at the workforce level. New agents should automatically inherit organizational knowledge and policies. Updates should roll out consistently across related agents to prevent coordination failures.

Building individual agents is a development problem. Running an agent workforce is an operational challenge that requires platform-level thinking. The two require fundamentally different approaches.

How to solve the blank slate problem

The industry loves to offer infinite flexibility, as if giving teams a blank canvas is a gift. It isn’t. Without a starting point, teams spend months working through foundational decisions that have already been settled elsewhere, while time-to-value slips straight into the next fiscal year.

What teams actually need is momentum.

That means starting with fully formed agent templates and reference architectures shaped around real enterprise workflows. Not hypotheticals or academic examples, but real document pipelines, supply chain agents, and customer service automations with the hard edge cases already accounted for.

The best templates aren’t code samples polished for a conference demo. They’re production-ready patterns co-engineered with the infrastructure and application providers enterprises already run on, covering security, governance, error handling, and integrations from the start.

The difference in outcome is significant. Teams that start from proven patterns ship in weeks. Teams that start from scratch are still building foundations when the business requirements change.

When the question becomes “What has AI actually delivered?”, blank slates won’t have an answer. Proven patterns will.

Why a unified, vendor-neutral control plane matters

Enterprise AI teams face a structural tension: the tools and infrastructure they need to move fast are rarely the same ones IT needs to maintain control, security, and compliance.

That tension doesn’t resolve itself. It has to be designed around.

A unified control plane gives every team — AI developers, IT, security, and business owners — a single operating environment, without forcing them to abandon the tools they already use. Models, databases, frameworks, and deployment targets remain flexible. Governance, lineage, and policy enforcement travel with every agent, regardless of where it runs.

This matters most at the edges: sovereign cloud deployments, regulated industries, air-gapped environments, and hybrid infrastructure. These are precisely the situations where tool-by-tool governance breaks down, and where a single control plane proves its value.

Vendor neutrality isn’t a feature. It’s the prerequisite for enterprise AI that can scale beyond a single team, a single cloud, or a single use case. As AI becomes more deeply embedded in enterprise systems, the ability to govern across any environment becomes the only sustainable path forward.

What deep infrastructure partnerships actually enable

Not all technology partnerships are equal. Logo-level integrations add a name to a slide. Structural, co-engineered partnerships shape platform architecture and change what’s actually possible for enterprise teams.

The practical difference shows up in time and complexity. When infrastructure capabilities like inference microservices, reasoning models, guardrail frameworks, GPU optimizations, and decision engines are co-engineered into a platform rather than bolted on, teams get access to them without months of manual setup, validation, and tuning.

That acceleration unlocks use cases that require combining reasoning, simulation, and optimization together:

- Supply chain routing that considers real-time constraints and optimizes across multiple objectives

- Digital twins that simulate complex scenarios and recommend actions

- Clinical workflows that reason through patient data while maintaining strict privacy controls

Operational reliability matters as much as technical depth. Production-grade architectures need to be validated across cloud, on-premises, sovereign, and air-gapped environments. Co-engineered integrations carry that validation with them. Teams inherit it rather than having to build it themselves.

The technical and organizational impact of unifying build, deploy, and govern

The technical case for unifying build, deploy, and govern is well understood. The organizational impact is where the real breakthroughs happen.

Assumptions stay intact through every handoff. The entire multi-agent workflow is traceable in one place, so when something misbehaves, teams can diagnose and fix it without hunting through scattered logs across disconnected systems.

Organizationally, a unified platform creates shared clarity. AI teams, IT, security, compliance, and business owners operate from the same source of truth. Governance stops being a bureaucratic burden passed between teams and becomes a shared operating language built into the platform itself.

That shift has a direct effect on shadow AI. When the official platform is easier to use than rogue alternatives, teams stop building around it. Fragmentation recedes, not because it was mandated away, but because the better path became obvious.

What multi-agent orchestration actually requires

Single-agent demos make AI look straightforward. Multi-agent systems reveal the real complexity.

The moment you move beyond one agent, the gaps in most toolchains become obvious. Shared memory, consistent governance, workflow supervision, and unified debugging aren’t optional features. They’re the foundation that keeps multi-agent systems from becoming unmanageable.

Effective multi-agent orchestration requires several capabilities working together: dependency management and retries to handle failures gracefully, dynamic workload optimization to balance cost and performance across agents, and consistent safety and reasoning guardrails applied uniformly across the entire system.
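Dependency management and retries, the first of the capabilities listed above, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's orchestration API: `run_workflow`, the task names, and the `max_retries` parameter are all hypothetical, and a production orchestrator would add backoff, timeouts, and logging.

```python
from graphlib import TopologicalSorter


def run_workflow(tasks, deps, max_retries=2):
    """Run agent tasks in dependency order, retrying each failed task.

    tasks: maps a task name to a callable that receives a dict of its
           upstream results and returns its own result.
    deps:  maps a task name to the set of task names it depends on.
    """
    results = {}
    # static_order() yields every task after all of its dependencies.
    for name in TopologicalSorter(deps).static_order():
        upstream = {d: results[d] for d in deps.get(name, ())}
        for attempt in range(max_retries + 1):
            try:
                results[name] = tasks[name](upstream)
                break
            except Exception:
                # Re-raise once the retry budget is exhausted so the
                # failure surfaces instead of being silently dropped.
                if attempt == max_retries:
                    raise
    return results


if __name__ == "__main__":
    tasks = {
        "fetch": lambda upstream: 3,
        "double": lambda upstream: upstream["fetch"] * 2,
    }
    print(run_workflow(tasks, {"double": {"fetch"}}))
```

Keeping the retry loop in the runner rather than in each task means the retry policy can change in one place, which is the same argument the section makes for handling these concerns at the platform level.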

Without these, multi-agent workflows create more operational risk than they eliminate. With them, a coordinated agent workforce becomes possible: one where agents share context, operate under consistent policies, and escalate appropriately when they reach the boundaries of their autonomy.

The workforce analogy holds here. A functioning workforce, human or AI, needs coordination, shared knowledge, guardrails, and clear escalation paths. Orchestration is what makes that possible at scale.

What a unified platform actually delivers

At some point, the architecture discussion has to give way to outcomes. Here’s what enterprises consistently see when the AI lifecycle is properly unified:

- Production timelines collapse. Teams that used to spend 12–18 months on build cycles ship in weeks when they’re not rebuilding foundational infrastructure from scratch. The difference isn’t effort — it’s starting position.

- Inference costs stay manageable. Multi-agent systems can burn through budgets faster than they generate insights. Real-time workload optimization and GPU-aware scheduling keep performance high and costs predictable.

- Resilience increases. When orchestration, retries, and error handling are handled at the platform level, a single failure can’t topple an entire workflow. Issues surface before they become customer-visible outages.

- Governance risk shrinks. Lineage, access control, and policy enforcement remain consistent across all agents. No blind spots, no mystery systems, no surprises in production. Audits become routine rather than disruptive.

These outcomes share a common cause: When the full lifecycle is unified, teams spend their energy on problems that matter to the business instead of problems created by their own infrastructure.

Build an agent workforce, not another tool stack

There’s a point where collecting more tools stops being a strategy and starts being a liability. Every addition creates another integration to maintain, another governance gap to close, and another point of failure to debug at the worst possible moment.

The enterprises making real progress with agentic AI aren’t the ones with the longest tool lists. They’re the ones that stopped stitching and started operating — with platforms that handle coordination, governance, and lifecycle management as core functions rather than afterthoughts.

An agent workforce needs to behave like a real team: coordinated, reliable, scalable, and aligned with business outcomes. That doesn’t happen by accident. It happens by design.

Ready to move from experiments to production-grade impact? See how the Agent Workforce Platform works.

FAQs

What makes an agentic AI platform truly “end-to-end”?

An end-to-end agentic AI platform unifies the entire lifecycle: building agents, orchestrating multi-agent workflows, deploying them across environments, and governing them with consistent policies. Most vendors offer a collection of tools that must be stitched together manually.

A true end-to-end platform provides a single control plane with shared lineage, observability, and governance, so teams can move from prototype to production without rebuilding everything.

Why is fragmentation such a major problem for enterprises?

When teams use different tools, LLMs, and workflows, enterprises end up with brittle agents, inconsistent policies, duplicated infrastructure, and security blind spots. Most production failures happen at the handoff between AI, IT, and DevOps.

Fragmentation also fuels shadow AI, where teams build unmanaged agents without oversight. A unified platform removes these gaps by giving all stakeholders a shared environment and the governance guardrails they need.

How does DataRobot differ from hyperscalers or open-source toolchains?

Hyperscalers and open-source stacks provide components such as vector stores, LLMs, gateways, and observability tools, but customers must assemble, integrate, and secure them themselves. DataRobot provides a single platform that unifies these pieces, supports any model or framework, and embeds governance from day one.

The difference is agent lifecycle management, multi-agent orchestration, and vendor-neutral governance that scales across the business.

How does the NVIDIA partnership improve enterprise readiness?

DataRobot is co-engineered with NVIDIA, giving customers day-zero access to NVIDIA NIMs, NeMo Guardrails, decision optimizers like cuOpt, and industry-specific SDKs without manual setup.

These integrations turn advanced models and infrastructure into usable, production-grade agentic patterns that would otherwise require months of assembly and validation.

Why does governance need to be embedded from the start?

Governance added at the end creates gaps in lineage, security, access control, and auditability, especially when agents move between tools. DataRobot embeds governance into every stage of the lifecycle: versioning, approvals, policy enforcement, monitoring, and runtime controls are applied automatically. This prevents drift, ensures reproducibility, and gives AI leaders visibility across all agents and workloads, even in highly regulated environments.

How does DataRobot support multi-agent systems at scale?

Multi-agent systems break easily when orchestrators, tools, and safety frameworks aren’t aligned. DataRobot handles coordination, retries, shared memory, policy consistency, and debugging across agents through Covalent orchestration, syftr optimization, and NVIDIA guardrails. Instead of running isolated agent demos, enterprises can run a governed, scalable workforce of agents that collaborate reliably across systems.

The post Best agentic AI platforms: Why unified platforms win appeared first on DataRobot.

These AI-powered guide dogs don’t just lead, they talk

Revolutionizing Cheese Production with AI and Machine Vision: A Success Story from Eberle Automatische Systeme

Generative AI improves a wireless vision system that sees through obstructions

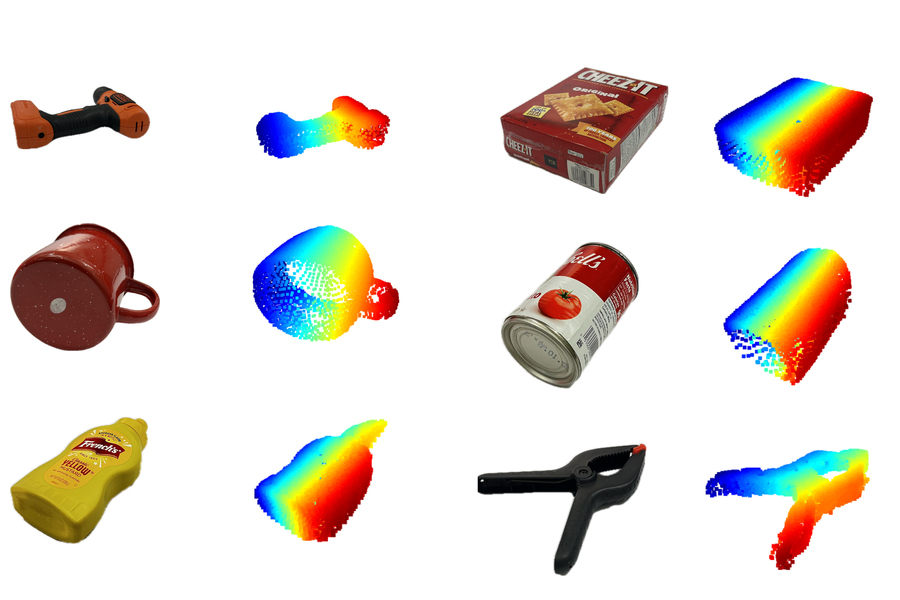

MIT researchers utilized specially trained generative AI models to create a system that can complete the shape of hidden 3D objects, like the ones pictured. Credit: Courtesy of the researchers.

By Adam Zewe

MIT researchers have spent more than a decade studying techniques that enable robots to find and manipulate hidden objects by “seeing” through obstacles. Their methods utilize surface-penetrating wireless signals that reflect off concealed items.

Now, the researchers are leveraging generative artificial intelligence models to overcome a longstanding bottleneck that limited the precision of prior approaches. The result is a new method that produces more accurate shape reconstructions, which could improve a robot’s ability to reliably grasp and manipulate objects that are blocked from view.

This new technique builds a partial reconstruction of a hidden object from reflected wireless signals and fills in the missing parts of its shape using a specially trained generative AI model.

The researchers also introduced an expanded system that uses generative AI to accurately reconstruct an entire room, including all the furniture. The system utilizes wireless signals sent from one stationary radar, which reflect off humans moving in the space.

This overcomes one key challenge of many existing methods, which require a wireless sensor to be mounted on a mobile robot to scan the environment. And unlike some popular camera-based techniques, their method preserves the privacy of people in the environment.

These innovations could enable warehouse robots to verify packed items before shipping, eliminating waste from product returns. They could also allow smart home robots to understand someone’s location in a room, improving the safety and efficiency of human-robot interaction.

“What we’ve done now is develop generative AI models that help us understand wireless reflections. This opens up a lot of interesting new applications, but technically it is also a qualitative leap in capabilities, from being able to fill in gaps we were not able to see before to being able to interpret reflections and reconstruct entire scenes,” says Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics group in the MIT Media Lab, and senior author of two papers on these techniques. “We are using AI to finally unlock wireless vision.”

Adib is joined on the first paper by lead author and research assistant Laura Dodds; as well as research assistants Maisy Lam, Waleed Akbar, and Yibo Cheng; and on the second paper by lead author and former postdoc Kaichen Zhou; Dodds; and research assistant Sayed Saad Afzal. Both papers will be presented at the IEEE Conference on Computer Vision and Pattern Recognition.

Surmounting specularity

The Adib Group previously demonstrated the use of millimeter wave (mmWave) signals to create accurate reconstructions of 3D objects that are hidden from view, like a lost wallet buried under a pile.

These waves, which are the same type of signals used in Wi-Fi, can pass through common obstructions like drywall, plastic, and cardboard, and reflect off hidden objects.

But mmWaves usually reflect in a specular manner, which means a wave reflects in a single direction after striking a surface. So large portions of the surface will reflect signals away from the mmWave sensor, making those areas effectively invisible.
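The geometry behind this can be sketched with the standard mirror-reflection formula, r = d - 2(d·n)n. The example below is a simplified 2D illustration, not the researchers' signal model: the sensor placement, the function names, and the `tolerance_deg` parameter are assumptions made for the sketch.

```python
import math


def reflect(direction, normal):
    """Mirror-reflect a 2D direction vector about a unit surface normal."""
    dot = direction[0] * normal[0] + direction[1] * normal[1]
    return (direction[0] - 2 * dot * normal[0],
            direction[1] - 2 * dot * normal[1])


def returns_to_sensor(surface_tilt_deg, tolerance_deg=5.0):
    """Check whether a specular reflection heads back toward the sensor.

    Assumed setup: the sensor sits directly above a small surface patch
    and transmits straight down; the patch is tilted from horizontal by
    surface_tilt_deg.
    """
    tilt = math.radians(surface_tilt_deg)
    normal = (math.sin(tilt), math.cos(tilt))  # unit normal of the patch
    incoming = (0.0, -1.0)                     # wave travelling straight down
    outgoing = reflect(incoming, normal)
    # Angle between the outgoing ray and the direction back up to the sensor.
    cos_angle = max(-1.0, min(1.0, outgoing[1]))
    return math.degrees(math.acos(cos_angle)) <= tolerance_deg


if __name__ == "__main__":
    for tilt in (0, 2, 30):
        print(tilt, returns_to_sensor(tilt))
```

In this setup the outgoing ray deviates from the sensor direction by twice the tilt angle, so only patches that face the sensor almost head-on send energy back, which is consistent with the sides and underside of an object going unseen.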

“When we want to reconstruct an object, we are only able to see the top surface and we can’t see any of the bottom or sides,” Dodds explains.

The researchers previously used principles from physics to interpret reflected signals, but this limits the accuracy of the reconstructed 3D shape.

In the new papers, they overcame that limitation by using a generative AI model to fill in parts that are missing from a partial reconstruction.

“But the challenge then becomes: How do you train these models to fill in these gaps?” Adib says.

Usually, researchers use extremely large datasets to train a generative AI model, which is one reason models like Claude and Llama exhibit such impressive performance. But no mmWave datasets are large enough for training.

Instead, the researchers adapted the images in large computer vision datasets to mimic the properties in mmWave reflections.

“We were simulating the property of specularity and the noise we get from these reflections so we can apply existing datasets to our domain. It would have taken years for us to collect enough new data to do this,” Lam says.

The researchers embed the physics of mmWave reflections directly into these adapted data, creating a synthetic dataset they use to teach a generative AI model to perform plausible shape reconstructions.

The complete system, called Wave-Former, proposes a set of potential object surfaces based on mmWave reflections, feeds them to the generative AI model to complete the shape, and then refines the surfaces until it achieves a full reconstruction.

Wave-Former was able to generate faithful reconstructions of about 70 everyday objects, such as cans, boxes, utensils, and fruit, boosting accuracy by nearly 20 percent over state-of-the-art baselines. The objects were hidden behind or under cardboard, wood, drywall, plastic, and fabric.

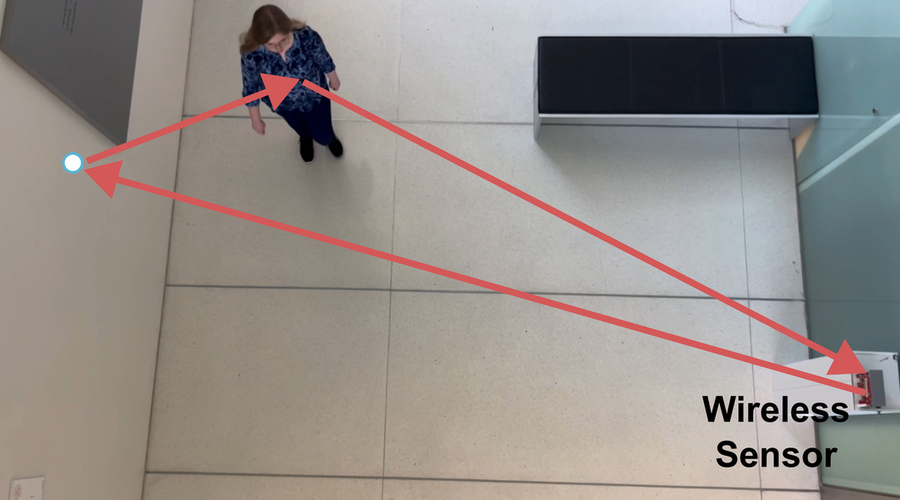

The team also built an expanded system that fully reconstructs entire indoor scenes by leveraging wireless signal reflections off humans moving in a room. Credit: Courtesy of the researchers.

Seeing “ghosts”

The team used this same approach to build an expanded system that fully reconstructs entire indoor scenes by leveraging mmWave reflections off humans moving in a room.

Human motion generates multipath reflections. Some mmWaves reflect off the human, then reflect again off a wall or object, and then arrive back at the sensor, Dodds explains.

These secondary reflections create so-called “ghost signals,” which are reflected copies of the original signal that change location as a human moves. These ghost signals are usually discarded as noise, but they also hold information about the layout of the room.
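One way to see why ghost signals carry layout information is a simplified image-source model, in which a ghost appears as the mirror image of the person across a flat wall. This is an assumption made for illustration, not the method used in RISE: real multipath geometry is more involved, and the function names and the single wall at `x = wall_x` are hypothetical.

```python
def mirror_across_wall(point, wall_x):
    """Mirror a 2D point across a vertical wall located at x = wall_x."""
    x, y = point
    return (2 * wall_x - x, y)


def estimate_wall_x(human_track, ghost_track):
    """Estimate the wall position from paired human and ghost positions.

    Under the mirror assumption the wall lies halfway between each
    human position and its ghost, so the midpoints are averaged.
    """
    midpoints = [(h[0] + g[0]) / 2 for h, g in zip(human_track, ghost_track)]
    return sum(midpoints) / len(midpoints)


if __name__ == "__main__":
    human = [(1.0, 0.5), (1.5, 1.0), (2.0, 1.5)]
    ghosts = [mirror_across_wall(p, wall_x=4.0) for p in human]
    print(estimate_wall_x(human, ghosts))
```

As the person moves, each new pair of positions gives another estimate of where the reflecting surface is, which is the sense in which signals usually treated as noise can reveal the room.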

“By analyzing how these reflections change over time, we can start to get a coarse understanding of the environment around us. But trying to directly interpret these signals is going to be limited in accuracy and resolution,” Dodds says.

They used a similar training method to teach a generative AI model to interpret those coarse scene reconstructions and understand the behavior of multipath mmWave reflections. This model fills in the gaps, refining the initial reconstruction until it completes the scene.

They tested their scene reconstruction system, called RISE, using more than 100 human trajectories captured by a single mmWave radar. On average, RISE generated reconstructions that were about twice as precise as those of existing techniques.

In the future, the researchers want to improve the granularity and detail in their reconstructions. They also want to build large foundation models for wireless signals, like the foundation models GPT, Claude, and Gemini for language and vision, which could open new applications.

This work is supported, in part, by the National Science Foundation (NSF), the MIT Media Lab, and Amazon.

Find out more

- Wave-Former: Through-Occlusion 3D Reconstruction via Wireless Shape Completion, Laura Dodds, Maisy Lam, Waleed Akbar, Yibo Cheng, Fadel Adib

- RISE: Single Static Radar-based Indoor Scene Understanding, Kaichen Zhou, Laura Dodds, Sayed Saad Afzal, Fadel Adib

Samsung Electronics (005930.KS) — AI Equity Research | April 2026

This analysis was produced by an AI financial research system. All data is sourced exclusively from publicly available filings, earnings transcripts, government data, and free financial aggregators — no proprietary data, paid research, or institutional tools are used. Every figure cited can be independently verified by the reader using the sources listed at the end...

The post Samsung Electronics (005930.KS) — AI Equity Research | April 2026 appeared first on 1redDrop.

Magnetic coil setup guides microrobots without seeing them

Wearable robots improve coordination between pairs of violin players

The Biggest Impact of Autonomous Capture and AI

Walmart Inc. (WMT) — AI Equity Research | April 2026

This analysis was produced by an AI financial research system. All data is sourced exclusively from publicly available filings, earnings transcripts, government data, and free financial aggregators — no proprietary data, paid research, or institutional tools are used. Every figure cited can be independently verified by the reader using the sources listed at the end...

The post Walmart Inc. (WMT) — AI Equity Research | April 2026 appeared first on 1redDrop.

Resource-constrained image generation and visual understanding: an interview with Aniket Roy

In the latest in our series of interviews meeting the AAAI/SIGAI Doctoral Consortium participants, we caught up with Aniket Roy to find out more about his research on generative models for computer vision tasks.

Tell us a bit about your PhD – where did you study, and what was the topic of your research?

I recently completed my PhD in Computer Science at Johns Hopkins University, where I worked under the supervision of Bloomberg Distinguished Professor Rama Chellappa. My research primarily focused on developing methods for resource-constrained image generation and visual understanding. In particular, I explored how modern generative models can be adapted to operate efficiently while maintaining strong performance.

During my PhD, I worked broadly at the intersection of generative AI, multimodal learning, and few-shot learning. Much of my work involved designing techniques that enable models to learn new concepts or perform complex visual tasks with limited data or computational resources. This included research on diffusion models, personalized image generation, and multimodal representation learning. Overall, my work aims to make advanced vision and generative AI systems more adaptable, efficient, and practical for real-world applications.

Could you give us an overview of the research you carried out during your PhD?

During my PhD, my research broadly focused on improving the adaptability, efficiency, and quality of modern generative models for computer vision tasks. The rapid progress in generative AI–particularly diffusion models and vision–language models–has created new opportunities to address long-standing challenges such as data scarcity, controllable generation, and personalized image synthesis. My work aimed to develop methods that allow these large models to adapt effectively with limited data and computational resources while maintaining high visual fidelity.

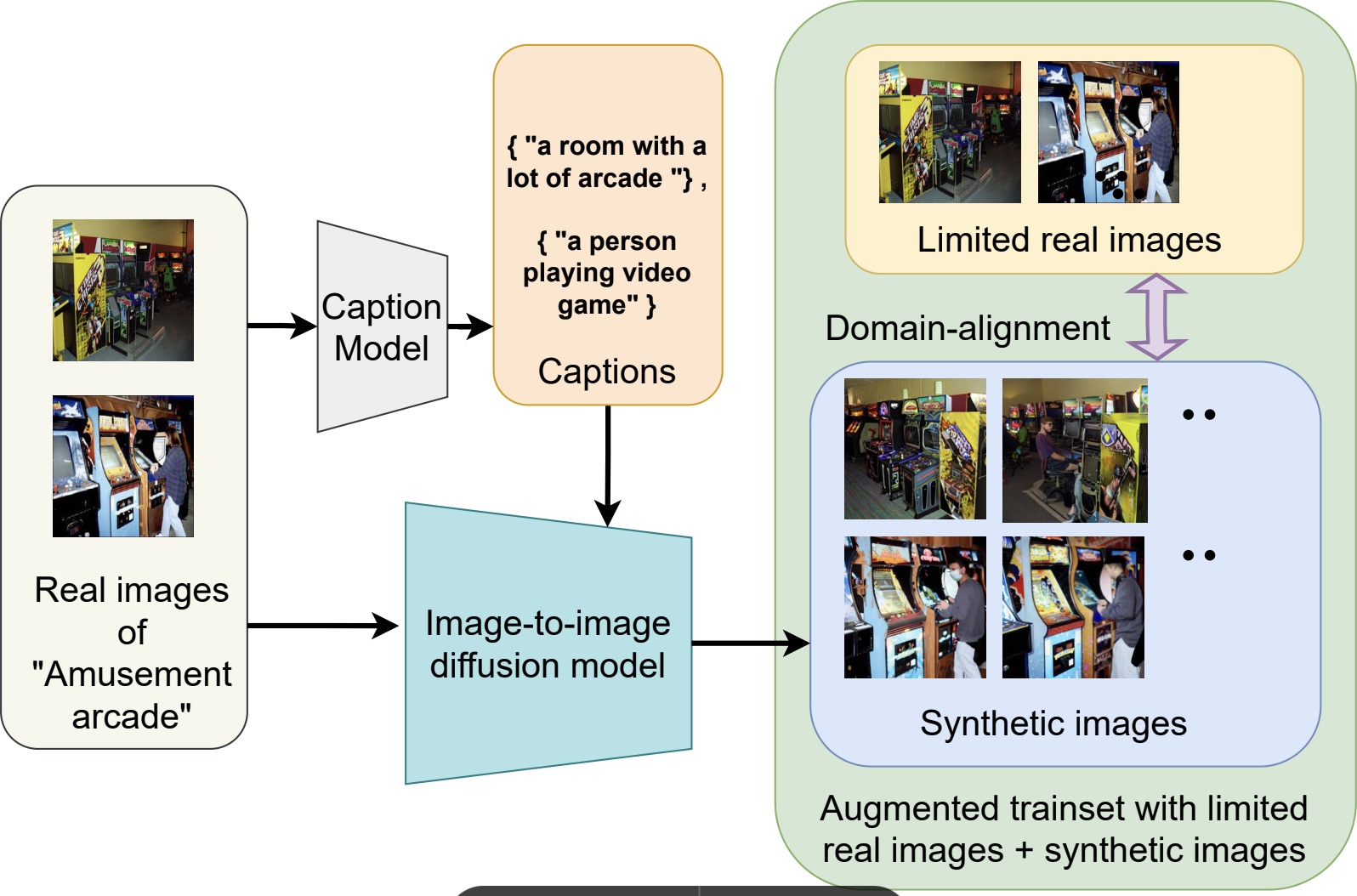

One line of my research addressed learning in data-constrained settings. For example, I proposed FeLMi, a few-shot learning framework that leverages uncertainty-guided hard mixup strategies to improve robustness and generalization when only a small number of labeled samples are available. Building on this idea of improving training data quality, I also developed Cap2Aug, which introduces caption-guided multimodal augmentation. This approach uses textual descriptions to guide synthetic image generation, improving visual diversity while reducing the domain gap between real and generated data.

Overview of Cap2Aug.

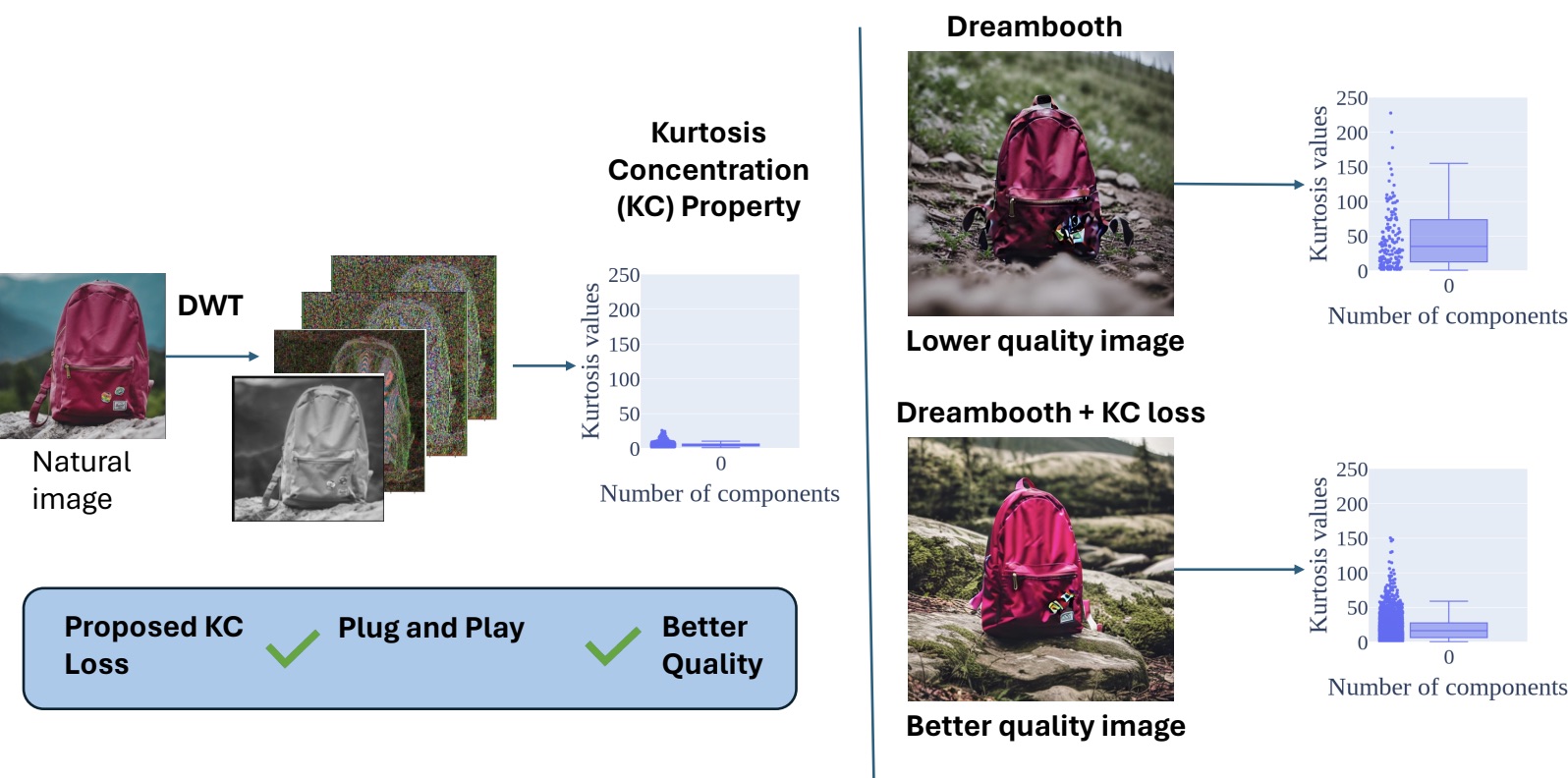

Another aspect of my research focused on improving the perceptual quality of images generated by diffusion models. In this direction, I proposed DiffNat, a plug-and-play regularization method based on the kurtosis-concentration property observed in natural images. By incorporating this principle into diffusion models through a KC loss, the generated images exhibit more natural texture statistics and improved perceptual realism, which also benefits downstream vision tasks.

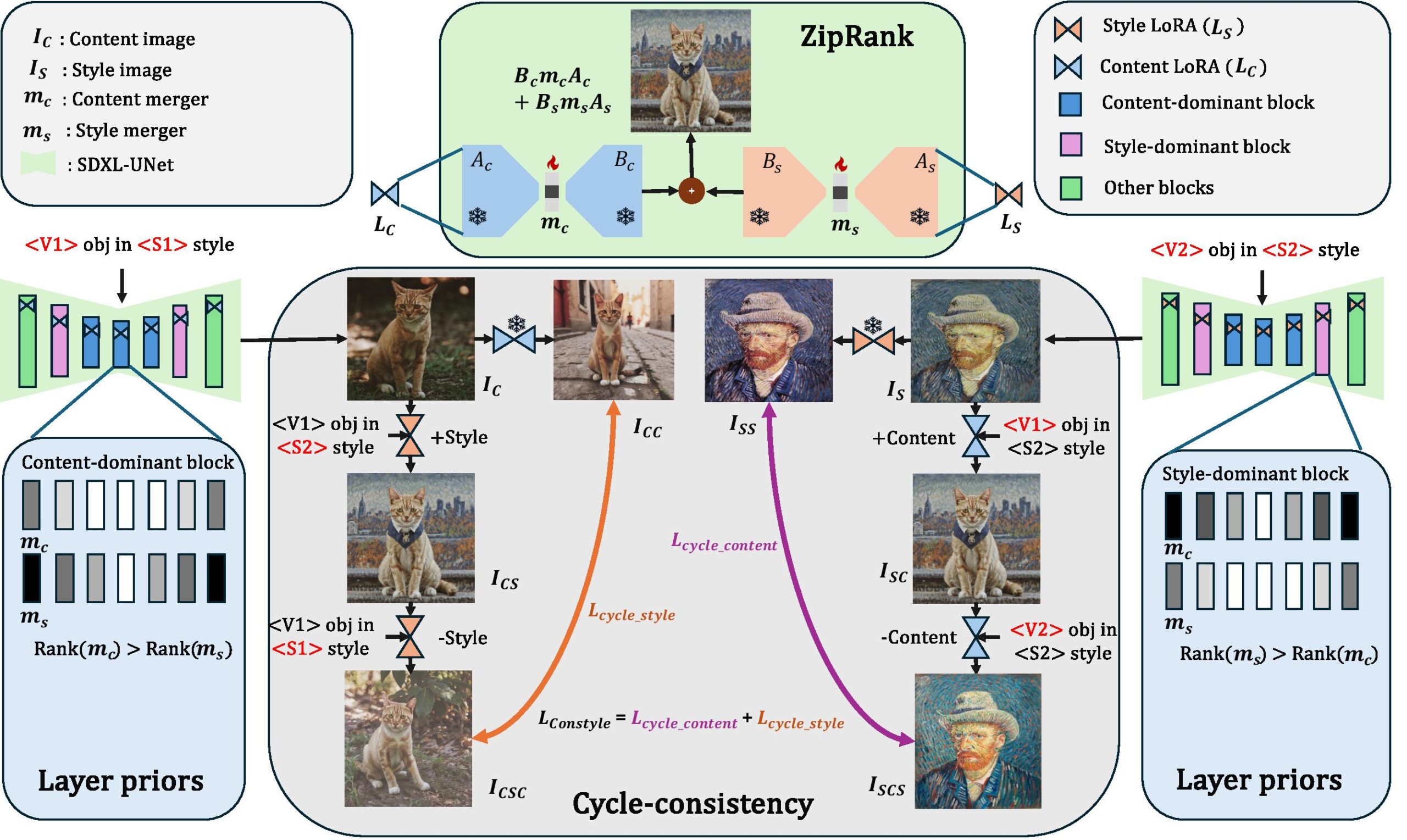

A major part of my work explored personalization and efficient adaptation of large generative models. I introduced DuoLoRA, a parameter-efficient framework for composing low-rank adapters that enables fine-grained control over content and style without requiring full retraining of the base model. I further extended personalization to zero-shot settings using a training-free textual inversion approach that allows arbitrary objects to be customized directly during generation. Finally, I proposed MultiLFG, a frequency-guided multi-LoRA composition framework that uses wavelet-domain representations and timestep-aware weighting to enable accurate and training-free fusion of multiple concepts in diffusion models.

Overview of DuoLoRA.

Overall, my research contributes toward building generative systems that are more efficient, adaptable, and controllable, enabling high-quality image generation and understanding even in data-limited or resource-constrained scenarios.

Was there a specific project or an aspect of your research that was particularly interesting?

One project that I found particularly interesting during my PhD is DiffNat, which was published in TMLR 2025. Diffusion models have become the backbone of many modern generative AI systems and have achieved impressive results in generating and editing realistic images. However, improving the perceptual quality and naturalness of generated images remains an important challenge.

Overview of DiffNat.

In this work, we introduced a simple but effective regularization technique called the kurtosis concentration (KC) loss, which can be integrated into standard diffusion model pipelines as a plug-and-play component. The idea was inspired by a statistical property of natural images: when an image is decomposed into different band-pass filtered versions–for example using the Discrete Wavelet Transform–the kurtosis values across these frequency bands tend to be relatively consistent. In contrast, generated images often show large discrepancies across these bands. Our method reduces the gap between the highest and lowest kurtosis values across the frequency components, encouraging the generated images to follow more natural image statistics.
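The idea described above can be sketched as follows. This is a plain-Python illustration and not the DiffNat implementation: it assumes a single-level Haar transform as the wavelet decomposition, uses only the three detail sub-bands as the band-pass versions, and treats a zero-variance band as having kurtosis 0. The function names are hypothetical, and an actual training loss would be written with differentiable tensor operations.

```python
import random


def haar_detail_bands(img):
    """One-level 2D Haar transform of an even-sized grayscale image.

    img is a list of rows of floats. Returns the three detail
    (band-pass) sub-bands; the low-pass average band is discarded.
    """
    band_x, band_y, band_xy = [], [], []
    for r in range(0, len(img), 2):
        row_x, row_y, row_xy = [], [], []
        for c in range(0, len(img[0]), 2):
            a, b = img[r][c], img[r][c + 1]
            d, e = img[r + 1][c], img[r + 1][c + 1]
            row_x.append((a - b + d - e) / 2)   # differences along columns
            row_y.append((a + b - d - e) / 2)   # differences along rows
            row_xy.append((a - b - d + e) / 2)  # diagonal differences
        band_x.append(row_x)
        band_y.append(row_y)
        band_xy.append(row_xy)
    return band_x, band_y, band_xy


def kurtosis(band):
    """Kurtosis (fourth moment over squared variance) of a sub-band."""
    values = [v for row in band for v in row]
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    if var == 0:
        return 0.0  # assumption: treat a flat band as kurtosis 0
    return sum((v - mean) ** 4 for v in values) / (n * var ** 2)


def kc_loss(img):
    """Gap between the highest and lowest kurtosis across the sub-bands."""
    values = [kurtosis(band) for band in haar_detail_bands(img)]
    return max(values) - min(values)


if __name__ == "__main__":
    random.seed(0)
    image = [[random.random() for _ in range(8)] for _ in range(8)]
    print(kc_loss(image))
```

A smaller value means the kurtosis is more consistent across bands, which is the property the interview attributes to natural images.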

In addition, we introduced a condition-agnostic perceptual guidance strategy during inference that further improves image fidelity without requiring additional training signals. We evaluated the approach across several diverse tasks, including personalized few-shot finetuning with text guidance, unconditional image generation, image super-resolution, and blind face restoration. Across these tasks, incorporating the KC loss and perceptual guidance consistently improved perceptual quality, measured through metrics such as FID and MUSIQ, as well as through human evaluation.

What I particularly liked about this project is that it connects classical image statistics with modern diffusion models. It shows that relatively simple statistical insights about natural images can still play a powerful role in improving large generative models.

What are your plans for building on the PhD – where are you working now and what will you be investigating next?

During my PhD, I discovered that I genuinely enjoy the process of research, especially the moment when an intuition or idea turns out to work in practice. That process of exploring new ideas and pushing the boundaries of what we know is something I find very motivating.

To continue pursuing this, I will be joining NEC Laboratories America as a Research Scientist. In this role, I hope to build on my PhD work by developing new methods for generative models and exploring how these models can interact with broader multimodal systems. In particular, I am interested in advancing research at the intersection of generative models, vision–language–action models, and embodied AI. More broadly, my goal is to contribute to the development of intelligent systems that can understand, generate, and interact with the visual world more effectively, while also continuing to push forward the scientific understanding of these models.

I’m interested in how you got into the field. What inspired you to study computer vision and machine learning?

My interest in computer vision and machine learning started during my undergraduate studies, when I took courses in signal processing and image processing. I found those subjects particularly fascinating because they allowed you to experiment with algorithms and immediately see their effects on images. That visual and intuitive aspect made the field very engaging, and it helped me appreciate how mathematical concepts can directly translate into meaningful visual results.

At the same time, I was also curious about how the human brain processes visual information—how we are able to recognize objects, understand scenes, and interpret complex visual signals so effortlessly. That curiosity led me to wonder whether we could design computational models that mimic aspects of human perception and enable machines to understand visual data in a similar way.

A major influence during this time was my professor, Dr. Kuntal Ghosh, who encouraged me to think more deeply about these problems and approach them with a scientific mindset. His mentorship played an important role in shaping my interest in research. Since then, that curiosity about visual perception and intelligent systems has continued to drive my work in computer vision and machine learning.

What was your experience of the Doctoral Consortium at AAAI?

Unfortunately, I was not able to attend the AAAI Doctoral Consortium in person due to visa-related issues. However, a colleague kindly helped present my poster on my behalf during the event. Even though I could not be there physically, I was very encouraged by the response my work received. Several researchers reached out to me after seeing the poster, and we had some very insightful discussions about the ideas and potential future directions of the research. In that sense, I still found the experience quite rewarding. The Doctoral Consortium is a great platform for sharing early-stage ideas, receiving feedback from the community, and connecting with other researchers working on related problems. I appreciated the opportunity to engage with people who were interested in the work, and those interactions helped spark new perspectives and collaborations.

Could you tell us an interesting (non-AI related) fact about you?

Outside of research, I’m a big fan of music and stand-up comedy, and I really enjoy traveling whenever I get the chance. Exploring new places, cultures, and perspectives is something I find refreshing—it’s a great way to recharge and stay curious about the world beyond work. I also enjoy writing poetic satire from time to time, and I occasionally perform it. It’s a fun creative outlet that allows me to mix humor and storytelling, which is quite different from the analytical nature of the research work I usually do.

About Aniket Roy


Aniket is currently a Research Scientist at NEC Labs America. He obtained his PhD from the Department of Computer Science at Johns Hopkins University under the guidance of Bloomberg Distinguished Professor Rama Chellappa. Prior to that, he completed a Master’s at the Indian Institute of Technology Kharagpur. He received the Best Paper Award at IWDW 2016 and the Markose Thomas Memorial Award for the best research paper at the Master’s level. During his PhD, he explored few-shot learning, multimodal learning, diffusion models, LLMs, and LoRA merging, with publications in leading venues such as NeurIPS, ICCV, TMLR, WACV, and CVPR, along with 3 US patents filed. He also gained industrial experience through multiple internships at Amazon, Qualcomm, MERL, and SRI International. He was named an Amazon Fellow (2023-24) at JHU and was selected to participate in the ICCV’25 and AAAI’26 doctoral consortia.

This new chip survives 1300°F (700°C) and could change AI forever

How to achieve zero-downtime updates in large-scale AI agent deployments

When your website goes down, you know it immediately. Alerts fire, users complain, revenue may stop. When your AI agents fail, none of that happens. They keep responding. They just respond wrong.

Agents can appear fully operational while hallucinating policy details, losing conversation context mid-session, or burning through token budgets until rate limits shut them down.

Zero-downtime for AI agents isn’t the same as infrastructure uptime. It means preserving behavioral continuity, controlling costs, and maintaining decision quality through every deployment, update, and scaling event. This post is for the teams responsible for making that happen.

Key takeaways

- Zero-downtime for AI agents is about behavior, not availability. Agents can be “up” while hallucinating, losing context, or silently exceeding budgets.

- Functional uptime matters more than system uptime. Accurate decisions, consistent behavior, controlled costs, and preserved context define whether agents are truly available.

- Agent failures are often invisible to traditional monitoring. Behavioral drift, orchestration mismatches, and token throttling don’t trigger infrastructure alerts — they erode user trust.

- Availability must be managed across three tiers. Infrastructure uptime, orchestration continuity, and agent-level behavior all need dedicated monitoring and ownership.

- Observability is non-negotiable. Without correlated insight into correctness, latency, cost, and behavior, safe deployments at scale aren’t possible.

Why zero‑downtime means something different for AI agents

Your web services either respond or they don’t. Databases either accept queries or they fail. But your AI agents don’t work that way. They remember context across a conversation, produce different outputs for identical inputs, make multi-step decisions where latency compounds, and consume real budget with every token processed.

“Working” and “failing” aren’t binary for agents. That’s what makes them hard to monitor and harder to deploy safely.

System uptime vs. functional uptime

System uptime is binary: Infrastructure responds, endpoints return 200s, and logs show activity.

Functional uptime is what matters. Your agent produces accurate, timely, and cost-effective outputs that users can trust.

The difference plays out like this:

- Your customer service agent responds instantly (system), but hallucinates policy details (functional)

- Your document processing agent runs without error (system), then times out after completing 80% of a critical contract (functional)

- Your monitoring dashboard shows 100% availability (system) while users abandon the agent in frustration (functional)

“Up and running” is not the same as “working as intended.” For enterprise AI, only the latter counts.

Why agents fail softly instead of crashing

Traditional software throws errors. AI agents don’t — they produce confidently wrong answers instead. Because large language models (LLMs) are non-deterministic, failures surface as subtly degraded outputs, not 500 errors. Users can’t tell the difference between a model limitation and a deployment problem, which means trust erodes before anyone on your team knows something is wrong.

Deployment strategies for agents must detect behavioral degradation, not just error rates. Traditional DevOps wasn’t built for systems that degrade instead of crash.

A tiered model for zero‑downtime AI agent availability

Real zero-downtime for enterprise AI agents requires managing three distinct tiers — each entering the lifecycle at a different stage, each with different owners:

- Infrastructure availability: The foundation

- Orchestration availability: The intelligence layer

- Agent availability: The user-facing reality

Most teams have tier one covered. The gaps that break production agents live in tiers two and three.

Tier 1: Infrastructure availability (the foundation)

Infrastructure availability is necessary, but insufficient for agent reliability. This tier belongs to your platform, cloud, and infrastructure teams: the people keeping compute, networking, and storage operational.

Perfect infrastructure uptime guarantees only one thing: the possibility of agent success.

Infrastructure uptime as a prerequisite, not the goal

Traditional SLAs matter, but they stop short for agent workloads.

CPU utilization, network throughput, and disk I/O tell you nothing about whether your agent is hallucinating, exceeding token budgets, or returning incomplete responses.

Infrastructure health and agent health are not the same metric.

Container orchestration and workload isolation

Kubernetes, scheduling, and resource isolation carry more weight for AI workloads than for traditional applications. GPU contention degrades response quality. Cold starts interrupt conversation flow. Inconsistent runtime environments introduce subtle behavioral changes that users experience as unreliability.

When your sales assistant suddenly changes its tone or reasoning approach because of underlying infrastructure changes, that’s functional downtime, despite what your uptime dashboard may say.

Tier 2: Orchestration availability (the intelligence layer)

This tier moves beyond machines running to models and orchestration functioning correctly together. It belongs to the ML platform, AgentOps, and MLOps teams. Latency, throughput, and orchestration integrity are the availability metrics that matter here.

Model loading, routing, and orchestration continuity

Enterprise AI agents rarely rely on a single model. Orchestration chains route requests, apply reasoning, select tools, and blend responses, often across multiple specialized models per request.

Updating any single component risks breaking the entire chain. Your deployment strategy must treat a multi-model update as a single unit rather than versioning each component independently. If your reasoning model updates but your routing model doesn’t, the behavioral inconsistencies that follow won’t surface in traditional monitoring until users are already affected.
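One way to picture "update as a unit" is a release bundle: a set of component versions that were validated together. The sketch below is illustrative only. The component names, version strings, and the `VALIDATED_BUNDLES` table are hypothetical, and a real system would read live versions from its deployment tooling.

```python
# Hypothetical bundles: each one lists component versions that were tested together.
VALIDATED_BUNDLES = {
    "bundle-a": {"router": "1.4.0", "reasoner": "2.0.0", "summarizer": "0.9.2"},
    "bundle-b": {"router": "1.5.0", "reasoner": "2.1.0", "summarizer": "0.9.2"},
}


def matching_bundle(live_versions):
    """Return the name of the validated bundle that the live versions match, or None."""
    for name, versions in VALIDATED_BUNDLES.items():
        if versions == live_versions:
            return name
    return None


def is_safe_to_serve(live_versions):
    """A mixed set of versions (for example, new reasoner with old router) is rejected."""
    return matching_bundle(live_versions) is not None
```

With this check in a deployment gate, a partial update such as a new reasoner paired with the old router is blocked before it can produce inconsistent behavior.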

Token cost and latency as availability constraints

Budget overruns create hidden downtime. When an agent hits token caps mid-month, it’s functionally unavailable, regardless of what infrastructure metrics show.

Latency compounds the same way. A 500 ms slowdown across five sequential reasoning calls produces a 2.5-second user-visible delay — enough to degrade the experience, not enough to trigger an alert. Traditional availability metrics don’t account for this stacking effect. Yours need to.
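The stacking effect is simple arithmetic, and a short sketch makes it easy to see why per-step alerts miss it. All numbers other than the 500 ms slowdown and the five sequential calls are hypothetical: the 800 ms baseline, the 2000 ms per-step alert, and the 5000 ms end-to-end budget are assumptions chosen for the example.

```python
def first_alerting_step(step_latencies_ms, per_step_alert_ms):
    """Return the index of the first step that breaches the per-step alert, or None."""
    for i, latency in enumerate(step_latencies_ms):
        if latency > per_step_alert_ms:
            return i
    return None


def end_to_end_ms(step_latencies_ms):
    """Sequential calls add up: the user waits for the sum of all steps."""
    return sum(step_latencies_ms)


# Hypothetical baseline: five sequential reasoning calls at 800 ms each.
baseline = [800] * 5
# Each call slows down by 500 ms, as in the example above.
degraded = [ms + 500 for ms in baseline]

PER_STEP_ALERT_MS = 2000  # assumed per-call alert threshold
E2E_BUDGET_MS = 5000      # assumed end-to-end latency budget

added_delay_ms = end_to_end_ms(degraded) - end_to_end_ms(baseline)
per_step_alert_fired = first_alerting_step(degraded, PER_STEP_ALERT_MS) is not None
e2e_budget_breached = end_to_end_ms(degraded) > E2E_BUDGET_MS
```

Under these assumptions no individual call crosses its alert threshold, yet the request as a whole exceeds its budget, which is why an end-to-end latency signal is worth tracking alongside per-call metrics.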

Why traditional deployment strategies break at this layer

Standard deployment approaches assume clean version separation, deterministic outputs, and reliable rollback to known-good states. None of those assumptions hold for enterprise AI agents.

Blue-green, canary, and rolling updates weren’t designed for stateful, non-deterministic systems with token-based economics. Each requires meaningful adaptation before it’s safe for agent deployments.

Tier 3: Agent availability (the user‑facing reality)

This tier is what users actually experience. It’s owned by AI product teams and agent developers, and measured through task completion, accuracy, cost per interaction, and user trust. It’s where the business value of your AI investment is realized or lost.

Stateful context and multi‑turn continuity

Losing context qualifies as functional downtime.

When a customer explains their problem to your support agent, and it then loses that context mid-conversation during a deployment rollout, that’s functional downtime — regardless of what system metrics report. Session affinity, memory persistence, and handoff continuity are availability requirements, not nice-to-haves.

Agents must survive updates mid-conversation. That demands session management that traditional applications simply don’t require.
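One common way to meet this requirement is to pin each conversation to the agent version it started on and let the old version drain. The following is a minimal in-memory sketch of that idea, not a production router. The `StickyRouter` class and its method names are hypothetical, and a real deployment would keep the pinning table in a shared store.

```python
class StickyRouter:
    """Pins every session to the agent version that served its first turn."""

    def __init__(self, active_version):
        self.active_version = active_version
        self._pinned = {}  # session_id -> version

    def route(self, session_id):
        """Existing sessions keep their version; new sessions get the active one."""
        return self._pinned.setdefault(session_id, self.active_version)

    def cut_over(self, new_version):
        """Send new sessions to new_version without touching in-flight sessions."""
        self.active_version = new_version

    def end_session(self, session_id):
        """Forget a finished conversation so its version can eventually drain."""
        self._pinned.pop(session_id, None)

    def can_retire(self, version):
        """A version is safe to shut down once it is inactive and has no sessions."""
        return version != self.active_version and version not in self._pinned.values()
```

A conversation that began on the old version keeps its context handling until it ends, and the old environment is retired only when `can_retire` reports that no sessions remain on it.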

Tool and function calling as a hidden dependency surface

Enterprise agents depend on external APIs, databases, and internal tools. Schema or contract changes can break agent functionality without triggering any alerts.

A minor update to your product catalog API structure can render your sales agent useless without touching a line of agent code. Versioned tool contracts and graceful degradation aren’t optional. They’re availability requirements.
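A versioned tool contract can be as small as an expected schema version plus a list of required fields, checked before the agent uses a tool response. The sketch below assumes a hypothetical product catalog payload. The field names, the `schema_version` key, and the fallback message are all invented for illustration.

```python
# Hypothetical contract the agent was built and tested against.
EXPECTED_CONTRACT = {
    "version": 2,
    "fields": {"sku": str, "price": float, "in_stock": bool},
}


def contract_violations(payload, contract):
    """List every way the payload departs from the contract (empty list means OK)."""
    problems = []
    if payload.get("schema_version") != contract["version"]:
        problems.append("unexpected schema version")
    for field, expected_type in contract["fields"].items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            problems.append(f"wrong type for field: {field}")
    return problems


def lookup_product(payload):
    """Degrade gracefully instead of letting the agent reason over a broken payload."""
    problems = contract_violations(payload, EXPECTED_CONTRACT)
    if problems:
        return {
            "status": "degraded",
            "message": "Product details are temporarily unavailable.",
            "problems": problems,
        }
    return {"status": "ok", "product": payload}
```

The `problems` list also gives monitoring something concrete to alert on, so a contract change shows up as a signal rather than as silently wrong answers.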

Behavioral drift as the hardest failure to detect

Subtle prompt changes, token usage shifts, or orchestration tweaks can alter agent behavior in ways that don’t show up in metrics but are immediately apparent to users.

Deployment processes must validate behavioral consistency, not just code execution. Agent correctness requires continuous monitoring, not a one-time check at release.

Rethinking deployment strategies for agentic systems

Traditional deployment patterns aren’t wrong. They’re just incomplete without agent-specific adaptations.

Blue‑green deployments for agents

Blue-green deployments for agents require session migration, sticky routing, and warm-up procedures that account for model loading time and cold-start penalties. Running parallel environments doubles token consumption during transition periods — a meaningful cost at enterprise scale.

Most importantly, behavioral validation must happen before cutover. Does the new environment produce equivalent responses? Does it maintain conversation context? Does it respect the same token budget constraints? These checks matter more than traditional health checks.
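The checks above can be sketched as a replay of a fixed prompt set against both environments before cutover. This example rests on several assumptions: each environment is represented by a callable that returns a `(text, tokens_used)` pair, character-level similarity from the standard library's `difflib` stands in for a real semantic comparison, and the thresholds are placeholders.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Crude textual similarity in [0, 1]; a stand-in for a semantic comparison."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def cutover_failures(blue, green, prompts, min_similarity=0.8, max_token_ratio=1.2):
    """Replay prompts against both environments and collect reasons to block cutover.

    blue and green are callables returning (response_text, tokens_used).
    """
    failures = []
    for prompt in prompts:
        blue_text, blue_tokens = blue(prompt)
        green_text, green_tokens = green(prompt)
        if similarity(blue_text, green_text) < min_similarity:
            failures.append((prompt, "response drift"))
        if green_tokens > blue_tokens * max_token_ratio:
            failures.append((prompt, "token budget"))
    return failures
```

An empty result means the new environment matched the current one on this prompt set, within the chosen thresholds. Multi-turn prompts that depend on earlier context can be replayed the same way to cover the conversation-context check.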

Canary releases for agents

Even small canary traffic percentages — 1% to 5% — incur significant token costs at enterprise scale. A problematic canary stuck in reasoning loops can consume disproportionate resources before anyone notices.

Effective canary strategies for agents require output comparison and token tracking alongside traditional error rate monitoring. Success metrics must include correctness and cost efficiency, not just error rates.
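A canary gate that looks at correctness and tokens per request alongside error rate might look like the sketch below. The metric dictionaries, their keys, and every threshold are hypothetical. How "correct" is judged (graded samples, evaluator models, human review) is outside the scope of the example.

```python
def rate(part, whole):
    """Safe division for per-request rates."""
    return part / whole if whole else 0.0


def canary_verdict(baseline, canary, max_error_increase=0.01,
                   min_correct_ratio=0.98, max_token_ratio=1.25):
    """Compare canary metrics to baseline and return (decision, reasons).

    Each metrics dict has the keys: requests, errors, correct, tokens.
    """
    reasons = []

    error_increase = (rate(canary["errors"], canary["requests"])
                      - rate(baseline["errors"], baseline["requests"]))
    if error_increase > max_error_increase:
        reasons.append("error rate")

    base_correct = rate(baseline["correct"], baseline["requests"])
    canary_correct = rate(canary["correct"], canary["requests"])
    if canary_correct < base_correct * min_correct_ratio:
        reasons.append("correctness")

    base_tokens = rate(baseline["tokens"], baseline["requests"])
    canary_tokens = rate(canary["tokens"], canary["requests"])
    if canary_tokens > base_tokens * max_token_ratio:
        reasons.append("tokens per request")

    return ("rollback", reasons) if reasons else ("promote", reasons)
```

A canary caught in reasoning loops can pass the error-rate check and still be rolled back here, because its tokens per request rise well above the baseline.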

Rolling updates and why they rarely work for agents

Rolling updates are incompatible with most stateful enterprise agents. They create mixed-version environments that produce inconsistent behavior across multi-turn conversations.

When a user starts a conversation with version A and continues with the new version B mid-rollout, reasoning shifts — even subtly. Context handling differences between versions cause repeated questions, missing information, and broken conversation flow. That’s functional downtime, even if the service never technically went offline.

For most enterprise agents, full environment swaps with careful session handling are the only safe option.

Observability as the backbone of functional uptime

For AI agents, observability is about agent behavior: what the agent is doing, why, and whether it’s doing it correctly. It’s the foundation of deployment safety and zero-downtime operations.

Monitoring correctness, cost, and latency together

No single metric captures agent health. You need correlated visibility across correctness, cost, and latency — because each can move independently in ways that matter.

When accuracy improves but token consumption doubles, that’s a deployment decision. When latency stays flat but correctness degrades, that’s a regression. Individual metrics won’t surface either. Correlated observability will.

Detecting drift before users feel it

By the time users report agent issues, trust is already eroding. Proactive observability is what prevents that.

Effective observability tracks semantic drift in responses, flags changes in reasoning paths, and detects when agents access tools or data sources outside defined boundaries. These signals let you catch regressions before they reach users, not after.
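Two of these signals, reasoning-path changes and out-of-bounds tool access, can be approximated by auditing the sequence of tool calls in each agent trace. The sketch below is a simplified illustration. The tool names, the allowlist, and the idea of representing a reasoning path as a tuple of tool names are assumptions made for the example, and semantic drift in response text would need a separate comparison.

```python
# Hypothetical allowlist of tools this agent is permitted to call.
ALLOWED_TOOLS = {"search_kb", "get_order", "create_ticket"}


def audit_trace(trace, baseline_paths, allowed_tools=ALLOWED_TOOLS):
    """Flag tool calls outside the allowlist and tool sequences never seen before.

    trace is the ordered list of tool names the agent called for one request.
    baseline_paths is a set of tuples of tool names observed before the change.
    """
    findings = []
    out_of_bounds = [tool for tool in trace if tool not in allowed_tools]
    if out_of_bounds:
        findings.append(("out_of_bounds_tool", out_of_bounds))
    if tuple(trace) not in baseline_paths:
        findings.append(("new_reasoning_path", list(trace)))
    return findings
```

A rising share of traces with `new_reasoning_path` findings after a release is an early hint that behavior has shifted, even when error rates and latency look unchanged.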

Take the necessary steps to keep your agents running

Agent failures aren’t just technical problems — they erode trust, create compliance exposure, and put your AI strategy at risk.

Fixing that means treating deployment as an agent-first discipline: tiered monitoring across infrastructure, orchestration, and behavior; deployment strategies built for statefulness and token economics; and observability that catches drift before users do.

The DataRobot Agent Workforce Platform addresses these challenges in one place — with agent-specific observability, governance across every layer, and the operational controls enterprises need to deploy and update agents safely at scale.

Learn why AI leaders turn to DataRobot’s Agent Workforce Platform to keep agents reliable in production.

FAQs

Why isn’t traditional uptime enough for AI agents?

Traditional uptime only tells you whether infrastructure responds. AI agents can appear healthy while producing incorrect answers, losing conversation state, or failing mid-workflow due to cost or latency issues, all of which are functional downtime for users.

What’s the difference between system uptime and functional uptime?

System uptime measures whether services are reachable. Functional uptime measures whether agents behave correctly, maintain context, respond within acceptable latency, and operate within budget. Enterprise AI success depends on the latter.

Why do AI agents “fail softly” instead of crashing?

LLMs are non-deterministic and degrade gradually. Instead of throwing errors, agents produce subtly worse outputs, inconsistent reasoning, or incomplete responses, making failures harder to detect and more damaging to trust.

Which deployment strategies work best for AI agents?

Traditional rolling updates often break stateful agents. Blue-green and canary deployments can work, but only when adapted for session continuity, behavioral validation, token economics, and multi-model orchestration dependencies.

How can teams achieve real zero-downtime AI deployments?

Teams need agent-specific observability, behavioral validation during deployments, cost-aware health signals, and governance across infrastructure, orchestration, and application layers. DataRobot’s Agent Workforce Platform provides these capabilities in one control plane, keeping agents reliable through updates, scaling, and change.

The post How to achieve zero-downtime updates in large-scale AI agent deployments appeared first on DataRobot.